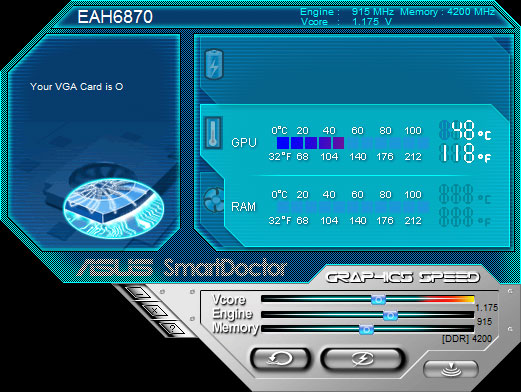

The Asus EAH6870 is the first factory-overclocked HD6870, with its GPU clocked at 915MHz (15MHz higher than the reference card). Bundled with ASUS SmartDoctor, it can be easily overclocked further with a slight increase in voltage.

INTRODUCTION

AMD officially kicked off the holiday shopping season with the release of the Radeon HD6870 and HD6850, the first cards from its latest generation of graphics cards. These cards target the $200 price bracket, where most gamers spend their money. Our initial review of the Radeon HD 6870 and Radeon HD 6850 showed that these cards perform quite well. The HD6850 is capable of taking Nvidia’s GTX 460 head on. The HD6870 comes close to the GTX 470, and occasionally even outperforms AMD’s own HD5870.

With such great performance, it is no wonder that manufacturers are quickly putting out cards with their own signature tweaks. Speed matters here, because holiday shopping is just around the corner and manufacturers want their products on the market as soon as possible.

ASUS is one of AMD’s oldest partners and has always produced cards with its own little tweaks. Its latest HD6870 carries over the Voltage Tweak branding that we first saw on the GeForce GTX 470 and the Radeon HD5870. Unlike older Voltage Tweak cards, which often came with a better cooler, the HD 6870 Voltage Tweak card uses nearly the same design as the reference card; the only difference is that ASUS fitted its own cooling shroud bearing the ASUS logo. According to the marketing info on the box, Voltage Tweak lets users boost the GPU voltage with the ASUS SmartDoctor software for up to a 50% performance gain. Let’s see how far we can push the card.

ASUS EAH6870 HD 6870

The box art on the HD 6870 Voltage Tweak is exactly like that of the HD5870 Voltage Tweak. ASUS even used the same image of the horse-riding creature…demon déjà vu.

The back of the box displays a few special features of the card, including Voltage Tweak, Eyefinity, and the array of outputs.

The card is further packaged in black cardboard, and is well protected by styrofoam padding. The accessories are placed in a little box above the card and in the compartment next to it.

ASUS is following the recent trend of cutting down on excessive accessories. The HD6870 comes only with the useful ones: a CrossFire bridge, a PCI-E power adapter, a driver CD, and a manual CD.

As we stated in the Introduction, the HD6870 Voltage Tweak uses the same cooler as the reference card. This is probably because the HD6870 does not run as hot, which leaves enough headroom for overclocking. Of course, knowing ASUS, we may expect to see an ROG (Republic of Gamers) or Matrix edition sometime in the future, as we saw with the HD5870.

While the shroud looks different from the reference HD6870’s, it is actually based on the same design, with a radial blower fan to keep the card cool. One potentially irritating detail is that the shroud extends slightly beyond the end of the PCB. As a result, the card actually extends over the edge of the motherboard, which can make it difficult to fit in a tight case.

ASUS increased the GPU clock speed from 900MHz to 915MHz but kept the memory speed at 4200MHz (4x1050MHz). Backed by 1,120 shaders that are more efficient than those of the previous generation, the HD6870 is a good replacement for the HD5870 and a capable competitor to the GTX 470. The card comes with 1GB of GDDR5 memory on a 256-bit interface.
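As a quick check on what the 256-bit bus and 4200MHz effective memory rate mean for bandwidth, here is a small Python calculation. It assumes the usual peak-bandwidth formula (effective transfer rate times bus width in bytes) and decimal gigabytes; the result is derived from the figures above, not measured.

```python
def memory_bandwidth_gbps(effective_mhz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    # Each transfer moves bus_width_bits / 8 bytes.
    bytes_per_transfer = bus_width_bits / 8
    # effective_mhz is millions of transfers per second (MT/s).
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9

# GDDR5 is quoted at four transfers per memory clock: 4 x 1050MHz.
effective = 4 * 1050
print(effective, memory_bandwidth_gbps(effective, 256))
```

With the stock 4200MHz rate this works out to about 134.4 GB/s of peak bandwidth.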

Thanks to the refined core, the HD6870 draws less power, so it needs only two 6-pin PCI-E power connectors; its maximum TDP of 151W is the same as the Radeon HD 5850’s. A single CrossFireX connector sits in its usual place, allowing two cards to run together.
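The connector choice can be sanity-checked against the PCI Express power limits: the x16 graphics slot supplies up to 75W, each 6-pin connector another 75W, and each 8-pin connector 150W. The Python sketch below uses only those limits plus the 151W TDP quoted above to show how much in-spec headroom two 6-pin connectors leave.

```python
# Power limits from the PCI Express specifications, in watts.
SLOT_W = 75        # x16 graphics slot
SIX_PIN_W = 75     # each 6-pin auxiliary connector
EIGHT_PIN_W = 150  # each 8-pin auxiliary connector (for comparison)

def board_power_budget(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Maximum in-spec board power for a card with the given connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W

budget = board_power_budget(six_pin=2)
tdp = 151  # HD6870 TDP quoted in the review
print(budget, budget - tdp)
```

Two 6-pin connectors plus the slot allow 225W, leaving roughly 74W above the rated TDP.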

The card has plastic covers over the PCI-E contacts and the DVI ports to prevent damage.

ASUS retains the same connectors as the reference board: two DVI ports, one HDMI 1.4a, and two mini DisplayPort 1.2. One thing we wish to point out is that only one of the two DVI ports is dual-link, supporting resolutions up to 2560×1600. Users who wish to run two 30’’ monitors can therefore drive only one of them over DVI; the second needs another output, such as one of the DisplayPorts. DisplayPort 1.2, however, doubles the bandwidth of DisplayPort 1.1, which allows a single connector to drive more than one display. With this port, users can daisy-chain monitors, or use a hub to drive multiple monitors with different types of connectors.
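To put the DisplayPort 1.2 bandwidth claim in numbers, the Python sketch below compares the effective four-lane rates of DisplayPort 1.1 (8.64 Gbit/s) and 1.2 (17.28 Gbit/s) with the data rate a monitor needs. It assumes 24-bit color at 60Hz and deliberately ignores blanking intervals, so the display counts are optimistic upper bounds, not what the HD6870 driver will actually allow.

```python
# Effective 4-lane payload after 8b/10b line coding, in Gbit/s.
DP_EFFECTIVE_GBPS = {"1.1": 8.64, "1.2": 17.28}

def stream_gbps(width: int, height: int, refresh_hz: int = 60, bpp: int = 24) -> float:
    """Active-pixel data rate of one display; blanking intervals are ignored."""
    return width * height * refresh_hz * bpp / 1e9

def max_displays(dp_version: str, width: int, height: int, refresh_hz: int = 60) -> int:
    """Optimistic upper bound on identical displays sharing one DP link."""
    return int(DP_EFFECTIVE_GBPS[dp_version] // stream_gbps(width, height, refresh_hz))

for version in ("1.1", "1.2"):
    # 2560x1600 (30-inch class) panels, then 1920x1200 panels
    print(version, max_displays(version, 2560, 1600), max_displays(version, 1920, 1200))
```

Under these assumptions a single DisplayPort 1.2 link has room for two 2560×1600 streams, whereas DisplayPort 1.1 has room for only one.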

AMD upped the ante with the Eyefinity capabilities of the HD6000 series: the HD6870 now supports up to six displays. To use six displays, however, users must rely on DisplayPort. Natively, the card drives four monitors: two via the mini DisplayPorts and two via a combination of the DVI and HDMI outputs.
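The output rules in this paragraph can be captured in a small, simplified Python model. It encodes only what the text states (at most two displays across DVI and HDMI, two mini DisplayPort outputs, six displays in total) and assumes that any DisplayPort output carrying more than one monitor needs an MST hub or daisy-chaining. `Layout` and `check_layout` are hypothetical names, and the real driver rules are more involved.

```python
from dataclasses import dataclass

# Simplified rules taken from the text; real Eyefinity rules are more involved.
MAX_TOTAL = 6       # Eyefinity limit on the HD6870
MAX_DVI_HDMI = 2    # displays the DVI + HDMI outputs can drive together
MINI_DP_PORTS = 2   # mini DisplayPort 1.2 outputs on the card

@dataclass
class Layout:
    dvi_hdmi: int                  # monitors on the DVI/HDMI outputs
    per_dp_port: tuple[int, ...]   # monitors hanging off each mini DP output

def check_layout(layout: Layout) -> tuple[bool, bool]:
    """Return (fits_the_rules, needs_mst_hub_or_daisy_chain)."""
    total = layout.dvi_hdmi + sum(layout.per_dp_port)
    fits = (
        layout.dvi_hdmi <= MAX_DVI_HDMI
        and len(layout.per_dp_port) <= MINI_DP_PORTS
        and total <= MAX_TOTAL
    )
    needs_mst = any(count > 1 for count in layout.per_dp_port)
    return fits, needs_mst

print(check_layout(Layout(2, (1, 1))))  # native four-display setup
print(check_layout(Layout(2, (2, 2))))  # six displays, DisplayPort carries the extras
print(check_layout(Layout(3, (1, 1))))  # too many on DVI/HDMI
```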

Top to Bottom: HD5870, HD6870, HD6850

A size comparison of the HD5870, HD6870, and HD6850. As we can see, the HD6870 is slightly smaller than the HD5870, but a tad longer than the HD6850.

As with the rest of the HD6000 series, the ASUS HD6870 supports AMD APP Technology, which lets the user harness the GPU for computing work; HD3D, for stereoscopic 3D gaming and movies; and UVD3, for hardware-accelerated video decoding.

ASUS SMARTDOCTOR

ASUS SmartDoctor is the software utility bundled with the card; ASUS has long bundled such a tool with its graphics cards. SmartDoctor works only with ASUS cards: when we installed other manufacturers’ cards, the software would not launch.

The program lets users adjust the GPU voltage from 0.952V through 1.35V, with the default voltage set to 1.175V. The GPU clock speed can go from 775MHz to as high as 1000MHz, and the memory frequency can be adjusted from 3520 MHz to 5000MHz.
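SmartDoctor exposes these limits only through sliders, but the ranges themselves are easy to express. The Python sketch below is a hypothetical helper (`clamp_settings` is our own name, not part of any ASUS software) that fills in the stock values and clamps requested settings to the ranges above; it shows why a 1050MHz core request would end up at the 1000MHz ceiling. Memory values are the effective (4x) rate.

```python
# Slider ranges reported for SmartDoctor on the EAH6870.
RANGES = {
    "gpu_voltage_v": (0.952, 1.35),
    "gpu_clock_mhz": (775, 1000),
    "mem_clock_mhz": (3520, 5000),  # effective (4x) memory rate
}
# Stock settings of the EAH6870.
DEFAULTS = {"gpu_voltage_v": 1.175, "gpu_clock_mhz": 915, "mem_clock_mhz": 4200}

def clamp_settings(requested: dict) -> dict:
    """Fill in stock values, then clamp each setting to its slider range.

    Unknown keys raise KeyError, since SmartDoctor has no such slider.
    """
    settings = dict(DEFAULTS)
    settings.update(requested)
    return {
        key: min(max(value, RANGES[key][0]), RANGES[key][1])
        for key, value in settings.items()
    }

print(clamp_settings({"gpu_clock_mhz": 1050, "gpu_voltage_v": 1.22}))
```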

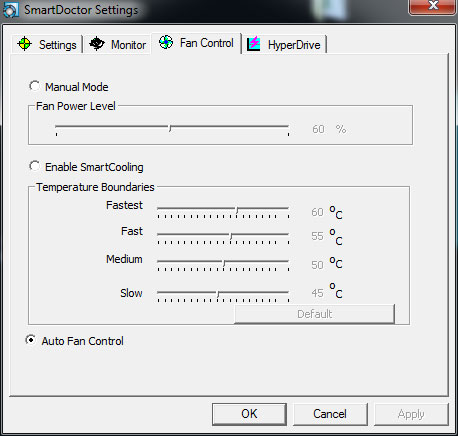

One thing we loved about SmartDoctor is the fan speed adjustment. While a video card’s performance usually gets the spotlight, the noise it produces is always a factor to balance against it. We are glad to see that ASUS has taken this into account, giving users the option to fine-tune the fan settings to reach a good balance between noise and temperature. ASUS has worked hard over the last few years to add more control over fan speed and temperature across its hardware, in both its graphics cards and its motherboards.

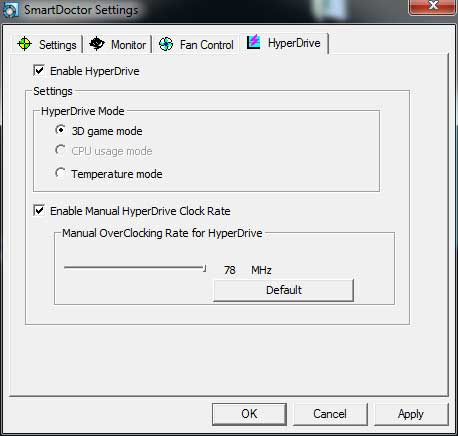

SmartDoctor features HyperDrive, a dynamic overclocking mode that switches between the normal clock speed and the manually set overclocked speed.
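ASUS does not document how HyperDrive decides when to switch, so the following is only a hypothetical Python sketch. It assumes the switch is driven by GPU load and uses two thresholds (hysteresis) so the clock does not flip back and forth around a single cut-off. The 915MHz and 1000MHz values come from this review; the 70% and 40% thresholds are made up for illustration.

```python
NORMAL_MHZ = 915      # EAH6870 factory clock
OVERCLOCK_MHZ = 1000  # highest manual setting SmartDoctor allows

def hyperdrive_trace(loads, up_pct=70.0, down_pct=40.0):
    """Hypothetical load-based switching with hysteresis.

    Jumps to the overclocked speed when load reaches up_pct and drops
    back only when load falls to down_pct, avoiding rapid toggling.
    """
    clock = NORMAL_MHZ
    trace = []
    for load in loads:
        if clock == NORMAL_MHZ and load >= up_pct:
            clock = OVERCLOCK_MHZ
        elif clock == OVERCLOCK_MHZ and load <= down_pct:
            clock = NORMAL_MHZ
        trace.append(clock)
    return trace

print(hyperdrive_trace([10, 80, 60, 50, 30, 75]))
```

With a single threshold, a load hovering around the cut-off would make the clock toggle on every sample; the gap between the two thresholds is what keeps it stable.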

The latest version of SmartDoctor could use a face-lift to bring it up to date. The text is small and hard to read, and precise adjustments are difficult because the keyboard cannot be used for incremental changes; instead, the program relies on sliders, so it is hard to hit an exact setting. It does, however, get the job done when it comes to overclocking.

OVERCLOCKING

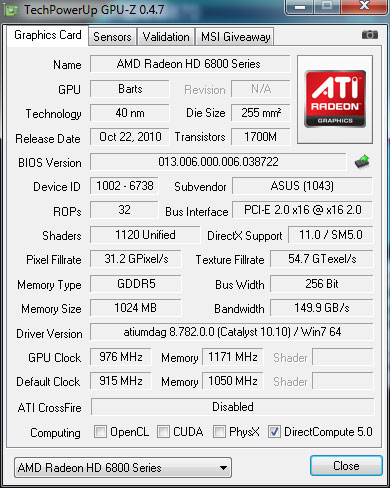

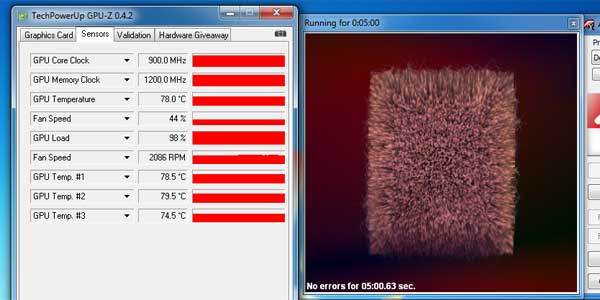

At default voltage, we were only able to get the GPU core overclocked to 975MHz from the default 915MHz, and the memory overclocked to 1171 MHz (4684MHz effective). At this setting, the card passed the Furmark torture test for 15 minutes.

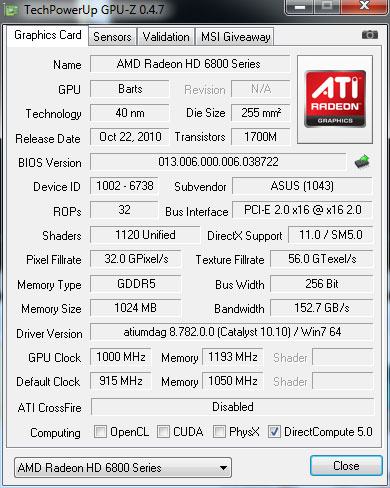

Not satisfied with that result, we raised the voltage from the default 1.175V to 1.22V. With this slight bump in voltage, we were able to squeeze out an extra 25MHz on the GPU, reaching the highest setting the software allows: 1000MHz. The extra voltage also allowed the memory to be overclocked to 1193MHz (4772MHz effective).
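The overclocking results are easier to compare as percentages. This short Python calculation, based only on the clocks reported above, converts each memory clock to its effective GDDR5 rate (4x) and expresses both overclocks relative to the EAH6870’s stock 915MHz core and 1050MHz memory.

```python
BASE_CORE_MHZ = 915   # EAH6870 factory core clock
BASE_MEM_MHZ = 1050   # stock memory clock (4200MHz effective)

def pct_gain(new: float, base: float) -> float:
    """Percentage increase over base, rounded to one decimal place."""
    return round((new / base - 1) * 100, 1)

def summarize(core_mhz: int, mem_mhz: int) -> tuple[float, int, float]:
    """Return (core gain %, effective memory MHz, memory gain %)."""
    return pct_gain(core_mhz, BASE_CORE_MHZ), 4 * mem_mhz, pct_gain(mem_mhz, BASE_MEM_MHZ)

results = {"default voltage (1.175V)": (975, 1171), "1.22V": (1000, 1193)}
for label, (core, mem) in results.items():
    print(label, summarize(core, mem))
```

By this arithmetic the core gained about 6.6% at stock voltage and 9.3% at 1.22V, while the memory gained about 11.5% and 13.6%.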

With the GPU running at 1000MHz and the memory at 1193MHz, the card scored 424 more points in the 3DMark Vantage overall score and 510 more points in the GPU score. Note, however, that under load the GPU reaches 90°C and the fan is loud.

TESTING & METHODOLOGY

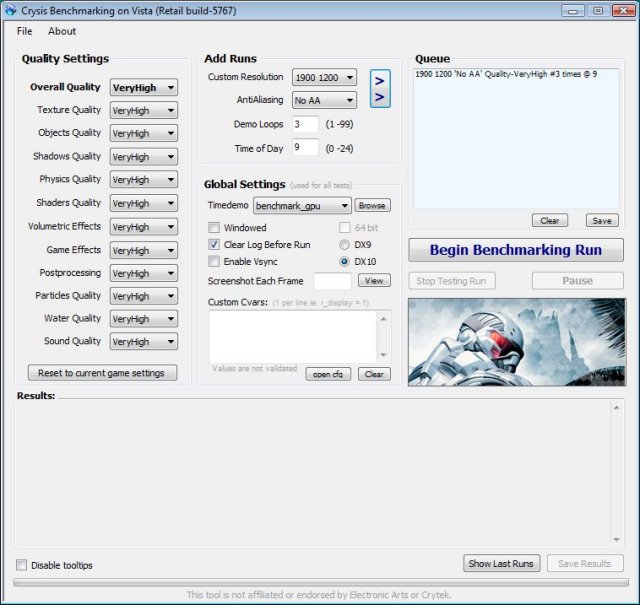

To build our test system, we did a fresh load of Windows 7 Ultimate, applied all the updates we could find, installed the latest motherboard drivers for the Gigabyte EX58-UD4P, updated the BIOS, and loaded our test suite. We didn’t load graphics drivers, because we wanted to pause and clone the hard drive with the fresh load of Windows 7 before any graphics drivers were installed. That way we have a complete OS load with the testing suite that is not contaminated with GPU drivers. Should we later need to switch GPUs or run CrossFire, all we have to do is clone our drive, install the GPU drivers, and we are good to go.

We ran each test a total of 3 times and report the average here. For benchmarks shown as screenshots, we ran the benchmark 3 times, tossed out the high and low, and posted the remaining (median) result. Any erroneous results were discarded and the test was rerun.
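The two aggregation rules can be written out in a few lines of Python. This is a sketch of the arithmetic only, using made-up sample run values; note that for three runs, dropping the highest and lowest leaves exactly the median.

```python
from statistics import mean

def summarize_runs(runs: list[float]) -> dict[str, float]:
    """Aggregate three benchmark runs the two ways described above."""
    if len(runs) != 3:
        raise ValueError("the methodology calls for exactly three runs")
    ordered = sorted(runs)
    dropped_high_low = ordered[1]  # toss out the high and the low run
    return {
        "average": mean(runs),       # used for the charted results
        "median": dropped_high_low,  # used for screenshot results
    }

# Hypothetical FPS values from three runs
print(summarize_runs([60, 66, 57]))
```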

Test Rig

| Test Rig “Quadzilla” | |
|---|---|
| Case Type | None |
| CPU | Intel Core i7 920 |
| Motherboard | Gigabyte EX58-UD4P |
| Ram | Kingston HyperX 1600 |
| CPU Cooler | Prolimatech Megahalem |
| Hard Drives | Seagate 7200.11 1.5 TB |
| Optical | None |
| GPU | Asus HD 6870 (EAH6870); Gigabyte HD 5770 Super Overclock; Asus HD 5870 Matrix Platinum; GTX 460 1GB; Palit GTS 450 Sonic Platinum |
| Drivers | Nvidia GPUs: 260.89; ATI GPUs: 10.10 |
| Case Fans | 120mm fan cooling the MOSFETs and CPU |
| Docking Stations | None |
| Testing PSU | Cooler Master UCP 900W |
| Legacy | None |
| Mouse | Microsoft Intellimouse |
| Keyboard | Logitech Keyboard |
| Speakers | None |

Synthetic Benchmarks & Games

| Synthetic Benchmarks & Games |
|---|
| 3DMark Vantage |
| HAWX |
| Crysis v. 1.2 |
| Dirt 2 |
| FarCry 2 |
| Stalker COP |
| Crysis Warhead |
| Unigine Heaven v.2.0 |
| Metro 2033 |

Our benchmarks are very comprehensive, including DX9, DX10, DX11, and Tessellation. We wanted as wide a representative sample as possible in the time available.

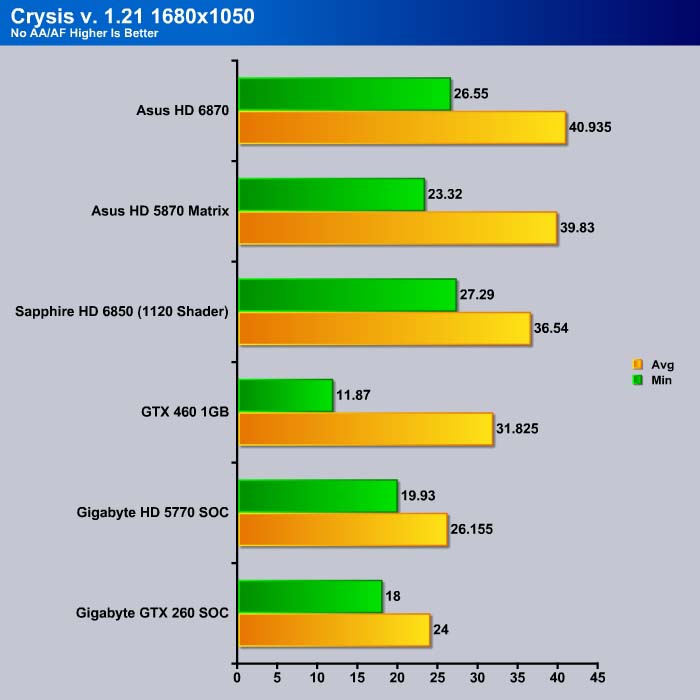

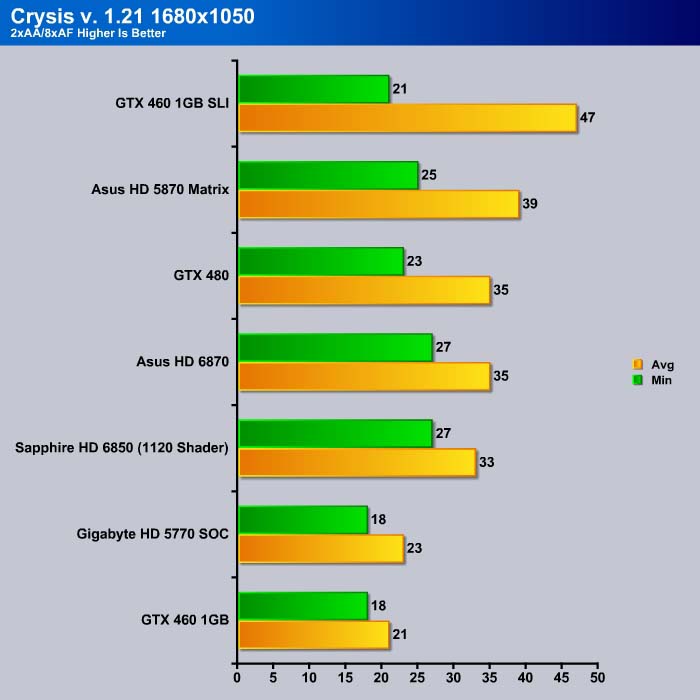

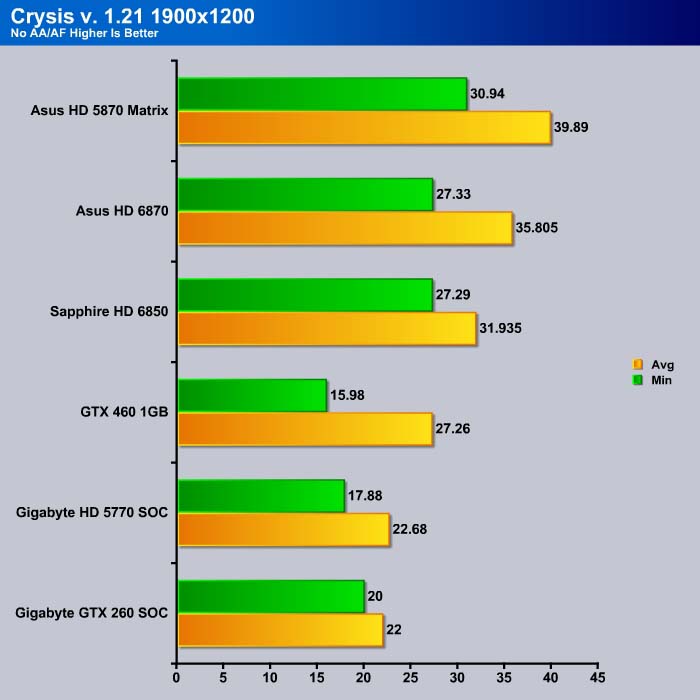

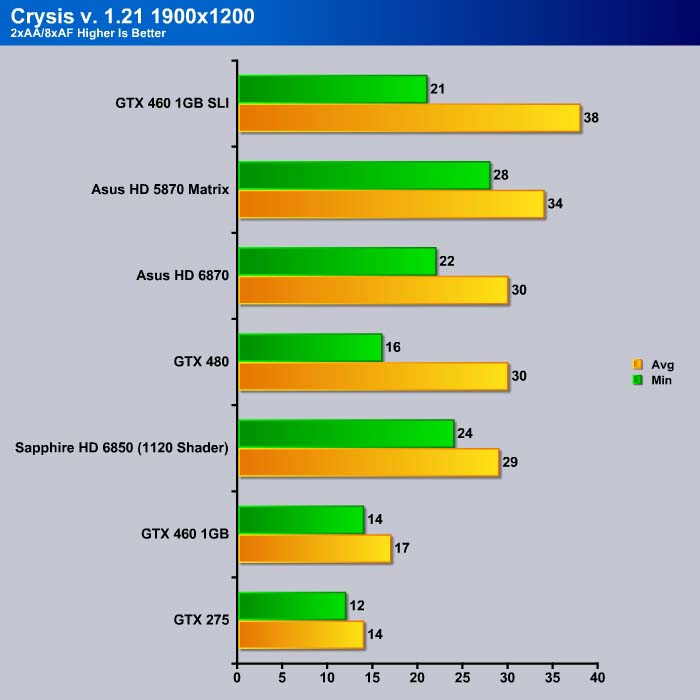

Crysis v. 1.21

Crysis was the most highly anticipated game to hit the market in the last several years. Crysis is based on the CryENGINE™ 2 developed by Crytek. The CryENGINE™ 2 offers real time editing, bump mapping, dynamic lights, network system, integrated physics system, shaders, shadows, and a dynamic music system, just to name a few of the state-of-the-art features that are incorporated into Crysis. As one might expect with this number of features, the game is extremely demanding of system resources, especially the GPU. We expect Crysis to be a primary gaming benchmark for many years to come.

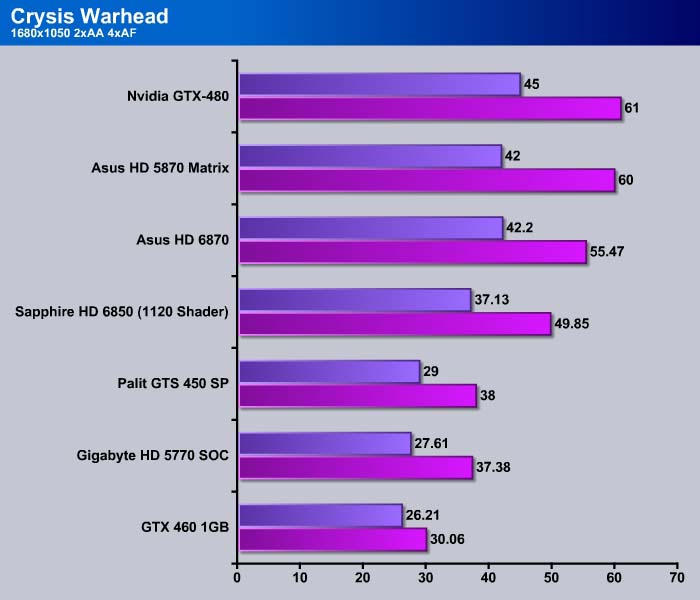

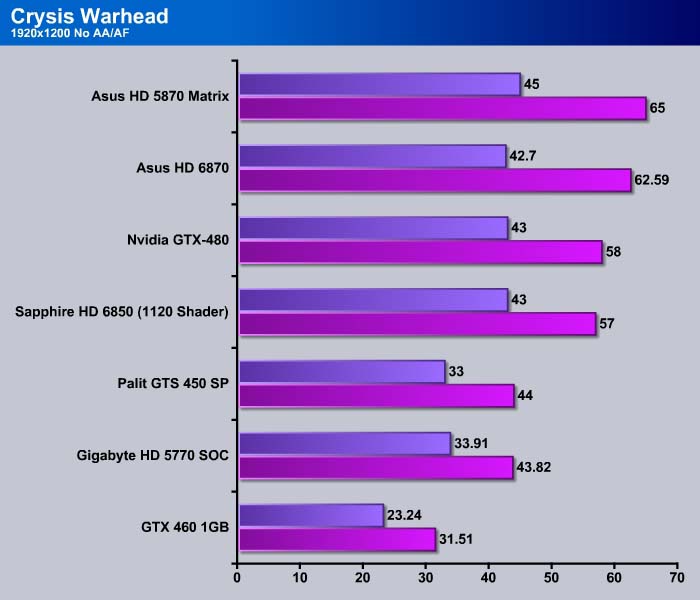

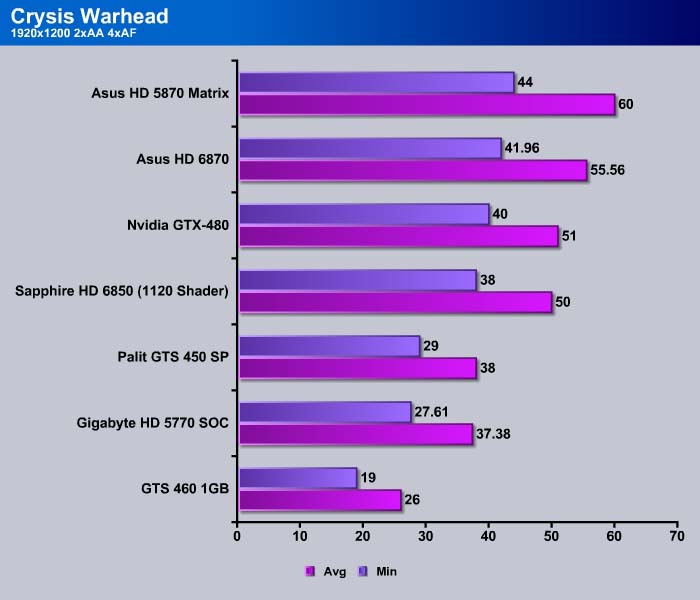

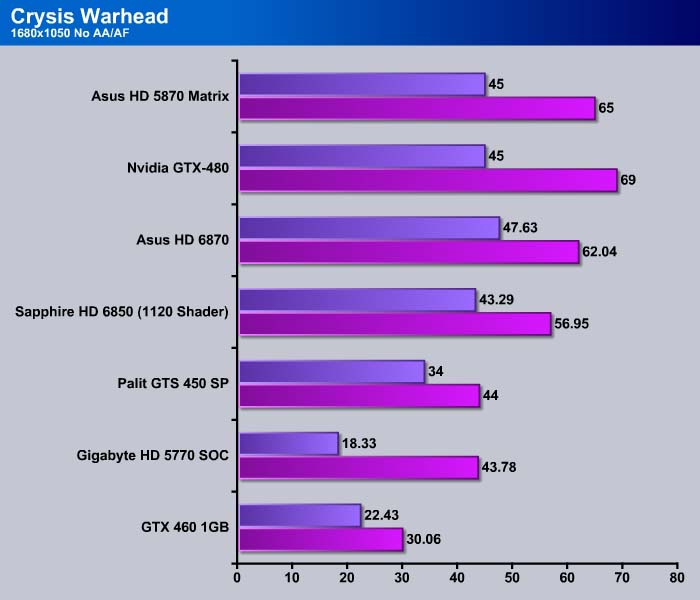

CRYSIS WARHEAD

Crysis Warhead is the much anticipated standalone expansion pack to Crysis, featuring an updated CryENGINE™ 2 with better optimization. It was one of the most anticipated titles of 2008.

At 1680×1050, the HD6870 maintains a substantial lead over the GTX 460 and delivers 95% of the HD5870’s performance. Compared to the GTX 480, the HD6870 reaches 90% of its average FPS and edges it out in minimum FPS.

The HD 6870 comes out ahead of Sapphire’s HD 6850 with 1120 shaders. Although both cards have the same number of shaders, the HD 6870’s higher clock speed lets it deliver 5 more frames per second than the 1120-shader HD 6850. The HD 6870 scored 5 FPS lower than the HD 5870 on average, but edged out the HD 5870 in minimum frame rate with 42.2 FPS.

As we turn up the resolution, the HD6870 maintains its lead over the 1120-shader HD6850. The HD5870 still performs better than the HD6870, but given the HD6870’s lower power consumption, we feel the HD6870 is the preferable choice.

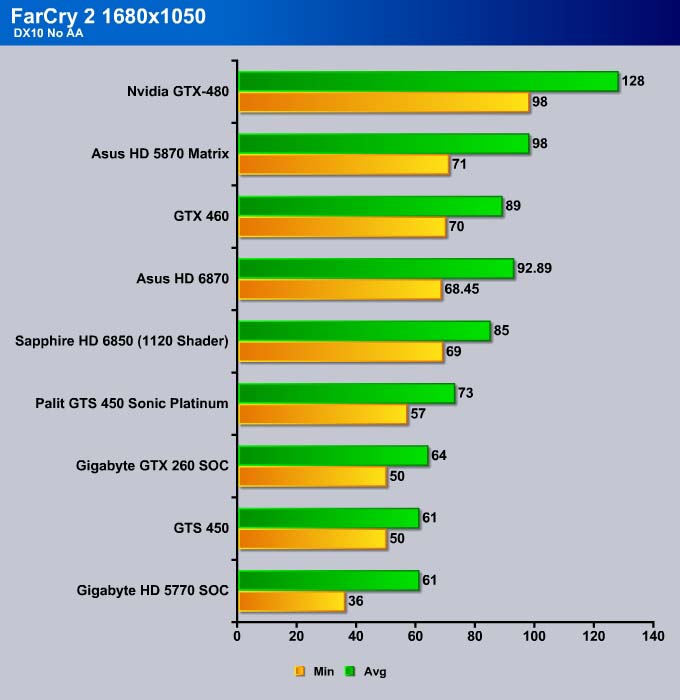

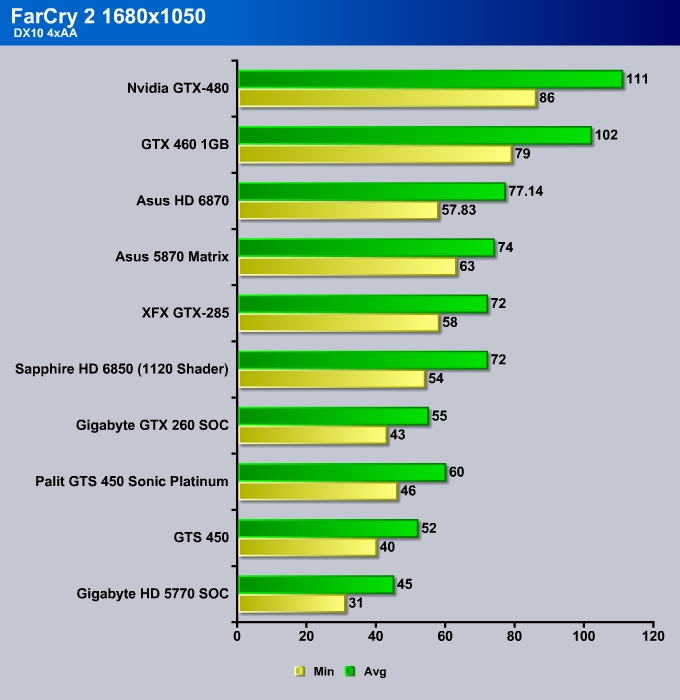

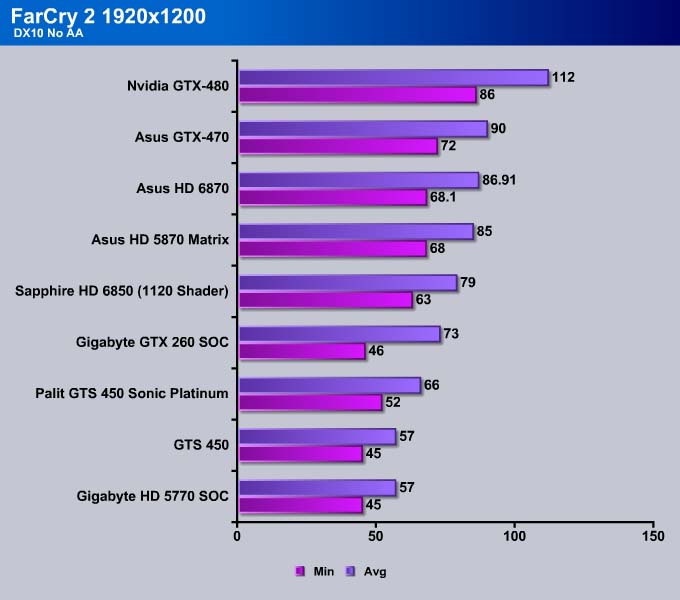

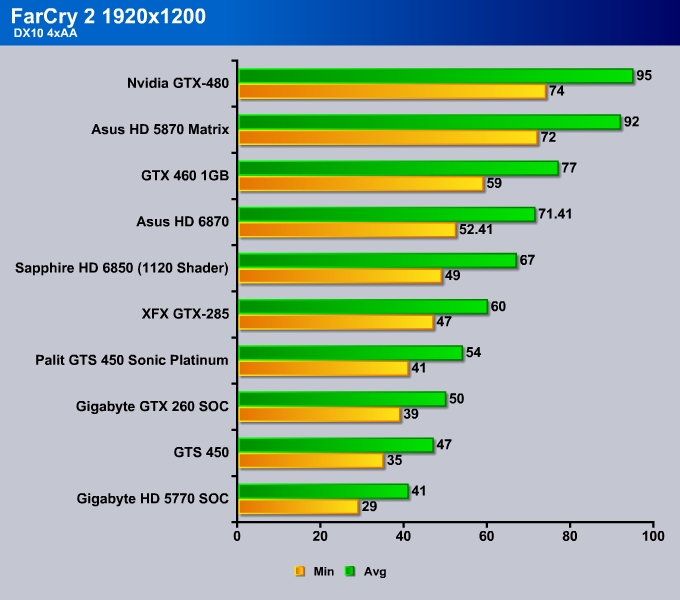

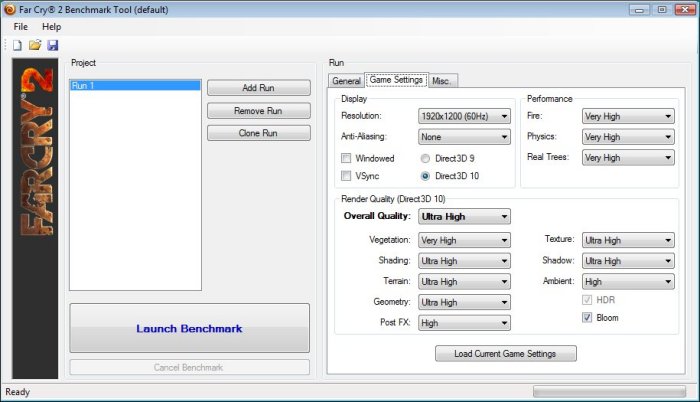

Far Cry 2

Far Cry 2, released in October 2008 by Ubisoft, was one of the most anticipated titles of the year. It’s an engaging, state-of-the-art first-person shooter set in an unnamed African country. Caught between two rival factions, you’re sent to take out “The Jackal”. Far Cry 2 ships with a full-featured benchmark utility, and it is one of the most well-designed, well-thought-out game benchmarks we’ve ever seen. One big difference between this benchmark and others is that it leaves the game’s AI (Artificial Intelligence) running while the benchmark is being performed.

The Settings we use for benchmarking FarCry 2

The ASUS HD6870 beat out the GTX 460 1GB in this test, while the HD6850 was not able to.

At 1680×1050 with 4xAA, the HD6870 beats the HD5870 by 3 FPS on average, but the HD5870 delivers a minimum frame rate 6 FPS higher. Nvidia cards often come out ahead of AMD cards in Far Cry 2, so it is no surprise that when we enabled AA, we saw a big performance difference between the GTX 460 and the HD6870.

At higher resolution, the HD6870 came out ahead of the HD5870, but slightly under the GTX 470. The improved shader power on the HD6870 is what helped the card to score well.

When we turn up the eye candy, the HD5870 comes alive and delivers 30% more performance. The HD6870 also fell behind the GTX 460 1GB, by about 10%. The HD6870 was, however, able to deliver 4 more frames per second than the 1120-shader HD6850.

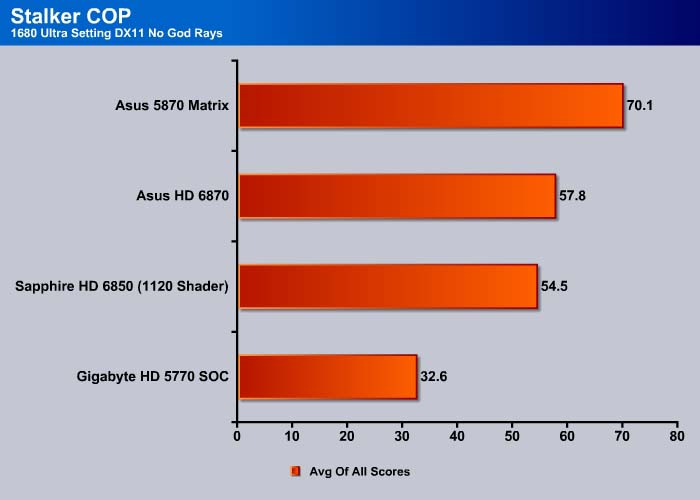

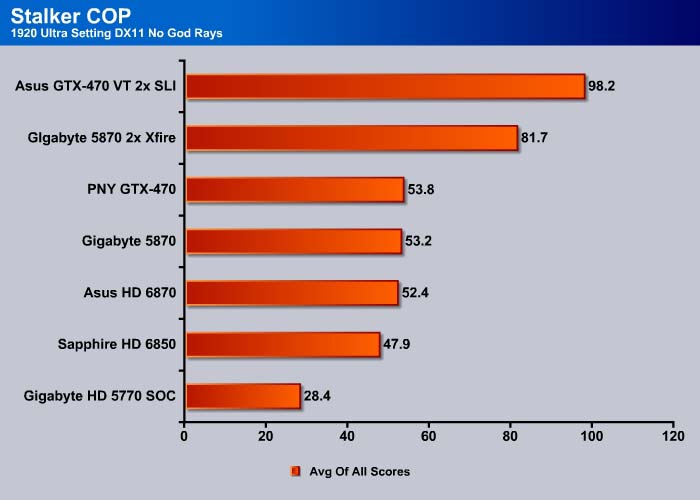

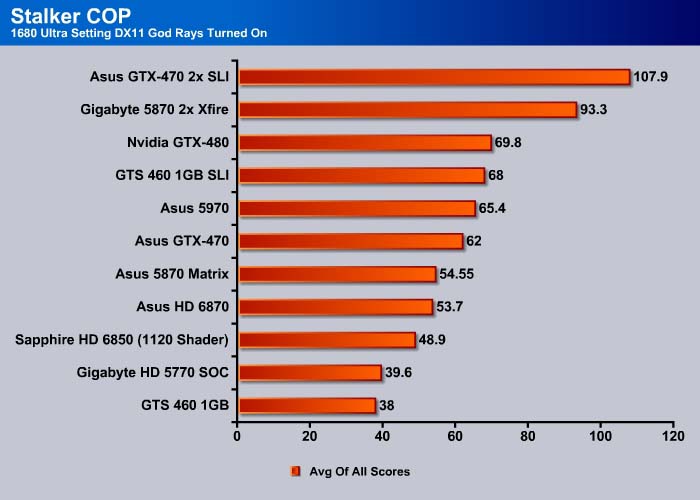

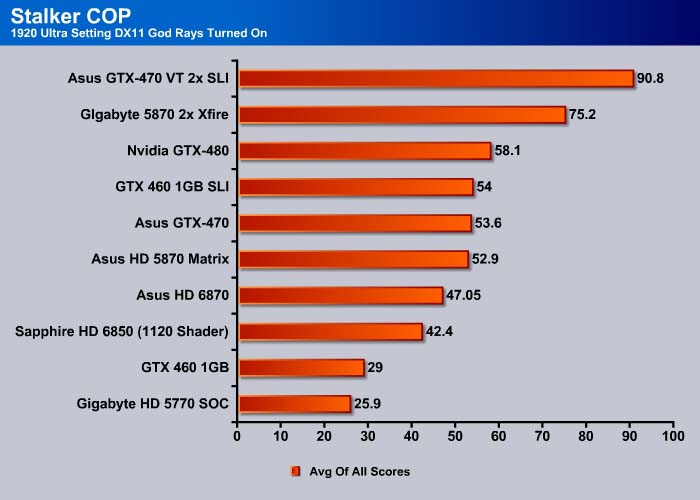

S.T.A.L.K.E.R.: CALL OF PRIPYAT

Call of Pripyat is the latest addition to the S.T.A.L.K.E.R. franchise. S.T.A.L.K.E.R. has long been considered the thinking man’s shooter, because it gives the player many different ways of completing the objectives. The game includes new advanced DirectX 11 effects as well as the continuation of the story from the previous games.

Let’s start by comparing the AMD cards with God Rays (Sun Shafts) turned off.

The HD5870 came in ahead of the ASUS HD6870. With the same number of shader units, our HD6870 and HD6850 performed very similarly, with a difference of only 3 points, which is mostly due to their difference in clock speed.

At 1920×1080, the HD6870 and the HD5870 performed neck and neck. The HD6870 card came in just under the GTX 470.

At higher resolution, the HD6870 showed its weakness, and scored 5 points lower than the HD5870. While it still out-performed the GTX 460 1GB, it came in 10% behind the GTX 470.

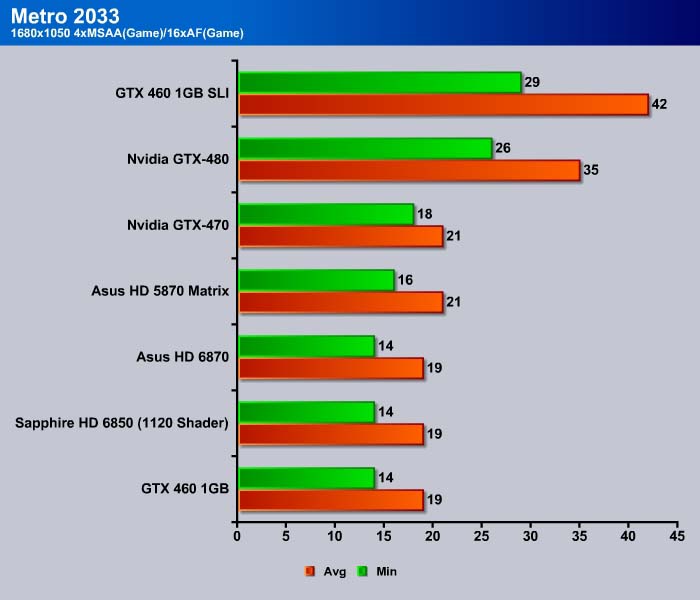

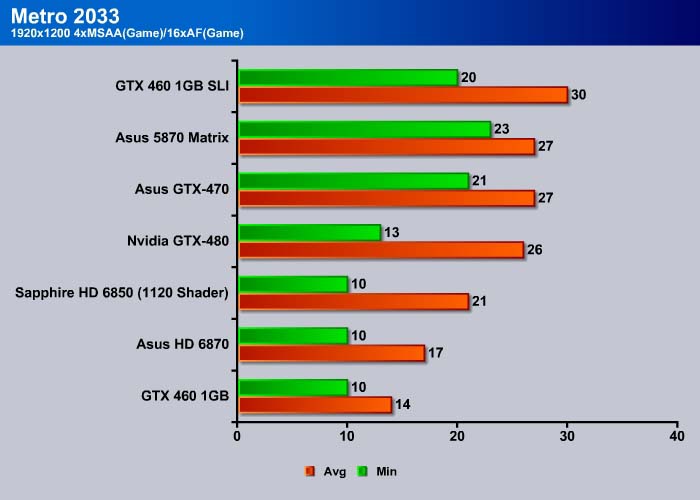

Metro 2033

Metro 2033 is an action-oriented video game that combines survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky.

The enemies that the player encounters range from human renegades to giant mutated rats and even paranormal forces known only as “The Dark Ones”. Players frequently have to defend themselves with makeshift combinations of different weapons, e.g. a revolver with a sniper scope attached.

Ammunition is also scarce, and the rarer military-grade bullets serve both as currency (to purchase supplies and guns) and, as a last resort, as ammunition with an added damage boost, forcing the player to hoard supplies.

The game lacks a health meter, relying on audible heart rate and blood spatters to show the player what state they are in and how much damage was done. A gas mask must be worn at all times when exploring the surface due to the harsh air and radiation. There is no on-screen indicator to tell how long the player has until the gas mask’s filters begin to fail, so players must set a wrist watch, and continue to check it every time they wish to know how long they have until their oxygen runs out, requiring the player to replace the filter (found throughout the game). The gas mask also indicates damage in the form of visible cracks, warning the player when a new mask is needed. The game does feature traditional HUD elements, however, such as an ammunition indicator and a list of how many gas mask filters and adrenaline shots remain.

The performance of the HD6870 and the 1120-shader HD6850 is almost identical in this test. This is expected, because Metro 2033 is a shader-heavy game and the two cards have the same number of shaders. The lower shader count of the HD6000 series does hurt performance here: the HD5870, with 1,600 shaders, pulls ahead with 20% better results than the HD6000 cards.

Compared to the GTX 460 1GB, the HD 6870 performs quite well, with a 30% performance advantage. However, the HD 6870 cannot compete with the GTX 470.

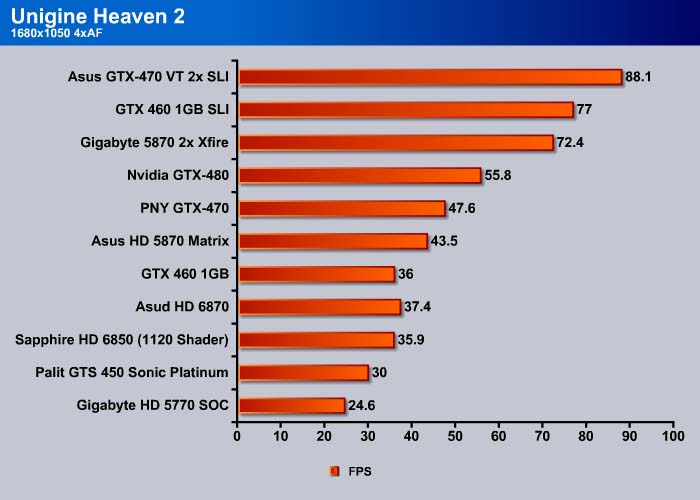

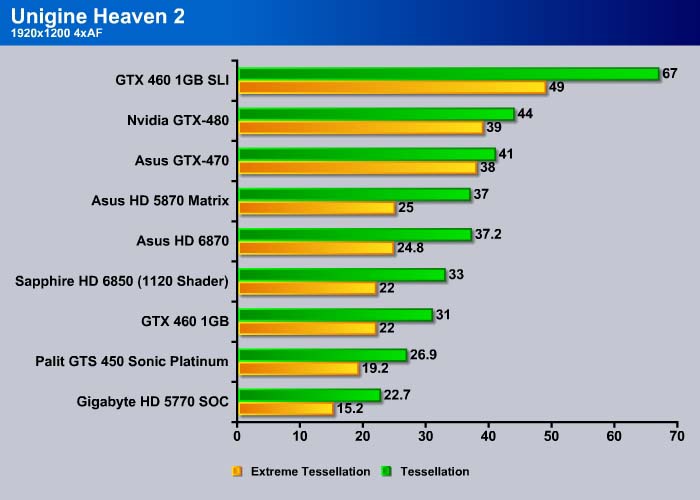

Unigine Heaven 2.0

Unigine Heaven is a benchmark program based on Unigine Corp’s latest engine, Unigine. The engine features DirectX 11, Hardware tessellation, DirectCompute, and Shader Model 5.0. All of these new technologies combined with the ability to run each card through the same exact test means this benchmark should be in our arsenal for a long time.

The settings we used in Unigine Heaven

At 1680×1050 and normal tessellation, the ASUS HD6870 manages to beat out the GTX 460 1GB, but still falls behind the HD5870 and the GTX 470.

Although it has the same number of shaders, the HD6870 scores 10% higher than the HD6850 and comes close to the HD5870; the improvements to the HD6870’s tessellation engine can be seen here. Against Nvidia, however, it outperforms only the GTX 460 and falls behind the GTX 470.

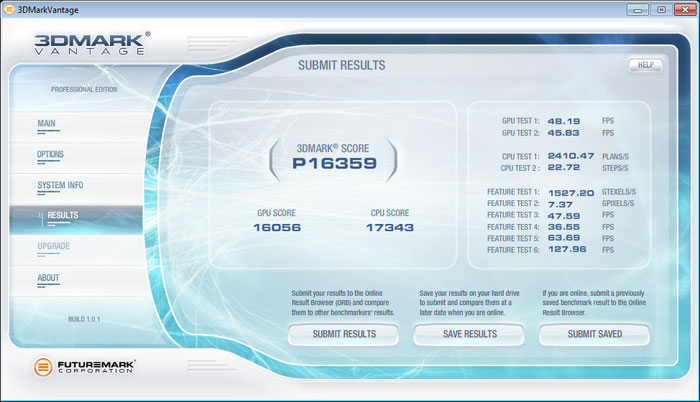

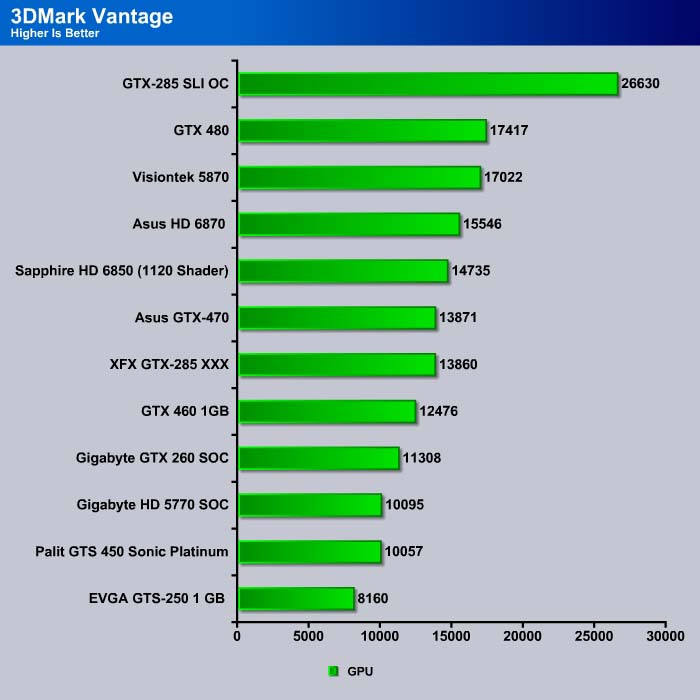

3DMark Vantage

For complete information on 3DMark Vantage, please follow this link:

www.futuremark.com/benchmarks/3dmarkvantage/features/

The newest video benchmark from the gang at Futuremark. This utility is still a synthetic benchmark, but one that more closely reflects real world gaming performance. While it is not a perfect replacement for actual game benchmarks, it has its uses. We tested our cards at the ‘Performance’ setting.

3DMark Vantage places the ASUS HD6870 ahead of the GTX 460 and GTX 470, but behind the GTX 480.

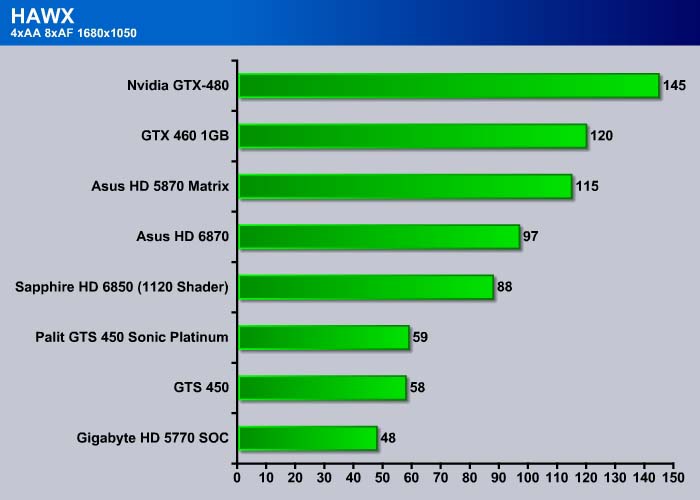

HawX

The story begins in the year 2012. As the era of the nation-state draws quickly to a close, the rules of warfare evolve even more rapidly. More and more nations become increasingly dependent on private military companies (PMCs), elite mercenaries with a lax view of the law. The Reykjavik Accords further legitimize their existence by authorizing their right to serve in every aspect of military operations. While the benefits of such PMCs are apparent, growing concerns surrounding giving them too much power begin to mount.

Tom Clancy’s HAWX is the first air combat game set in the world-renowned Tom Clancy’s video game universe. Cutting-edge technology, devastating firepower, and intense dogfights bestow this new title a deserving place in the prestigious Tom Clancy franchise. Soon, flying at Mach 3 becomes a right, not a privilege.

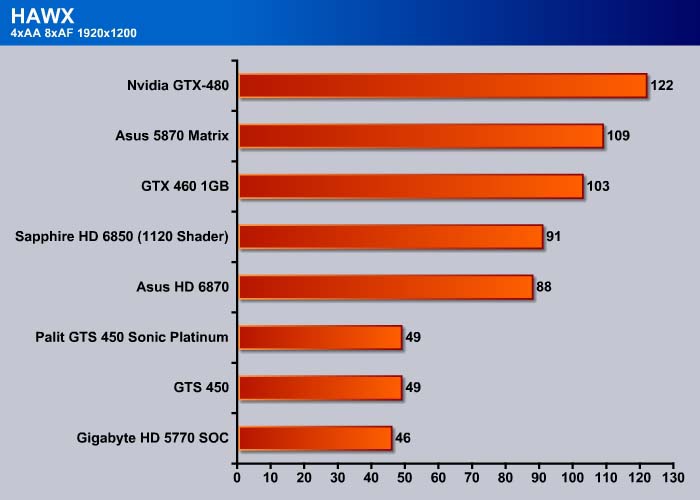

HAWX is another game where Nvidia cards often have better performance. Here the HD6870 scored 97 points, 9 points higher than the HD6850 (1120 shader). The GTX 460 beats out both HD6870 and HD6850.

At 1920×1200, the number of shaders is the determining factor: the HD6870 and HD6850 perform almost identically, with the HD 6850 (1120 shaders) coming in 2 frames ahead.

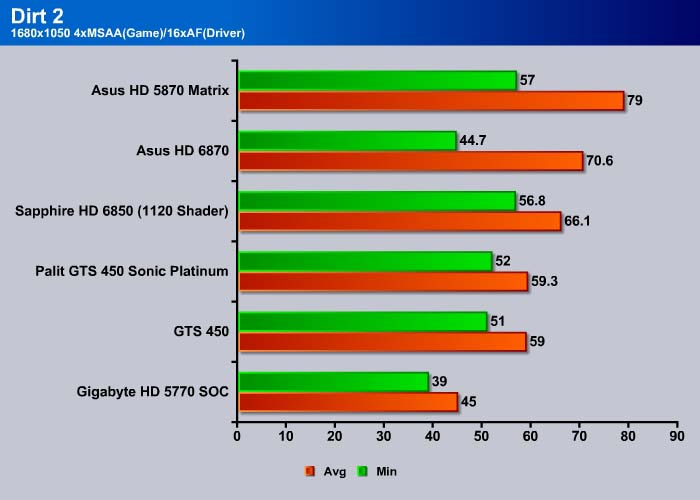

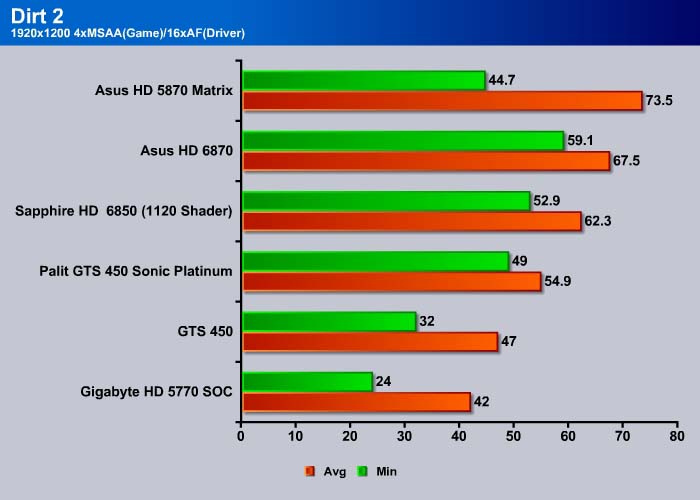

Dirt 2

Colin McRae: Dirt 2 (known as Dirt 2 outside Europe and stylized as DiRT) is a racing game released in September 2009 and is the sequel to Colin McRae: Dirt. This is the first game in the McRae series since McRae’s death in 2007. It was announced on 19 November 2008 and features Ken Block, Travis Pastrana, Tanner Foust, and Dave Mirra. The game includes many new race events, including stadium events. Along with the player, an RV travels from one event to another and serves as ‘headquarters’ for the player. It features a roster of contemporary off-road events, taking players to diverse and challenging real-world environments. The game takes place across four continents: Asia, Europe, Africa and North America. The game includes five different event types: Rally, Rallycross, ‘Trailblazer,’ ‘Land Rush’ and ‘Raid.’ The World Tour mode sees players competing in multi-car and solo races at new locations, and also includes a new multiplayer mode.

Colin McRae: Dirt is the first PC video game to use Blue Ripple Sound’s Rapture3D sound engine by default.

A demo of the game was released on the PlayStation Store and Xbox Live Marketplace on 20 August 2009. The demo appeared for the PC on 29 November 2009; it features the same content as the console demo with the addition of higher graphic settings and a benchmark tool.

Dirt 2 is the last set of benchmarks examining the performance scaling of the AMD cards for DirectX 11. Here the HD6870 scores 10% higher than the HD6850 (1120 shaders) but falls behind the HD5870.

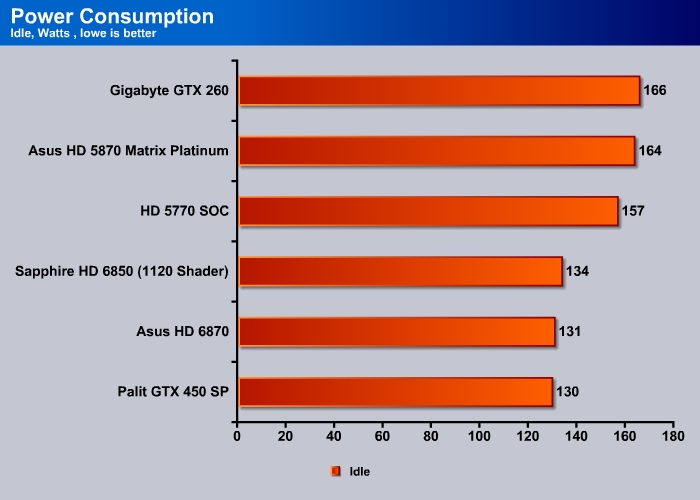

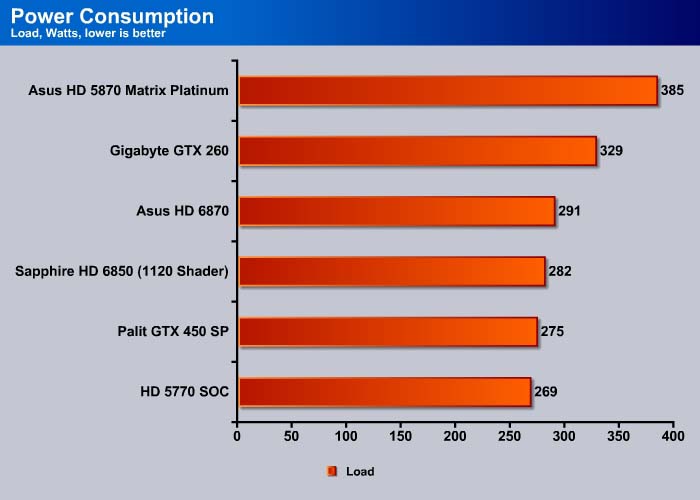

POWER CONSUMPTION

To get our power consumption numbers, we plugged in our Kill A Watt power meter, which measures the whole system’s draw at the wall. For the idle reading, we left the system at the desktop for about 15 minutes (during our temperature readings) and then recorded the draw. For the load reading, we ran Furmark for 10 minutes and recorded the highest power usage.

Our test system with the HD6870 consumes 131W at idle, 3 watts less than with the Sapphire HD6850, which is clocked 65MHz lower despite having the same number of shaders. Under load, the HD6870 system consumes slightly more: 291W, compared to 282W with the HD6850. For about 9W of extra power draw, the HD 6870 delivers roughly 5 to 10% more performance, which is not too shabby.
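A rough efficiency check follows from these numbers. The Python sketch below combines the measured load readings with the 5 to 10% performance range quoted above to estimate the change in performance per watt. Because the Kill A Watt measures the whole system at the wall, this is a system-level estimate, not a figure for the card alone.

```python
# Whole-system Furmark load readings from the Kill A Watt, in watts.
LOAD_W = {"HD6870": 291, "HD6850": 282}

def perf_per_watt_change(perf_gain_pct: float, base_w: float, new_w: float) -> float:
    """Percent change in performance per watt when moving from base_w to new_w."""
    ratio = (1 + perf_gain_pct / 100) / (new_w / base_w)
    return round((ratio - 1) * 100, 1)

extra_w = LOAD_W["HD6870"] - LOAD_W["HD6850"]  # extra draw under load
for gain in (5, 10):  # the performance range quoted in the text
    print(gain, perf_per_watt_change(gain, LOAD_W["HD6850"], LOAD_W["HD6870"]))
```

By this estimate, the 9W increase is only about 3% more system power, so the HD6870 system comes out roughly 1.8% to 6.6% ahead in performance per watt under load.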

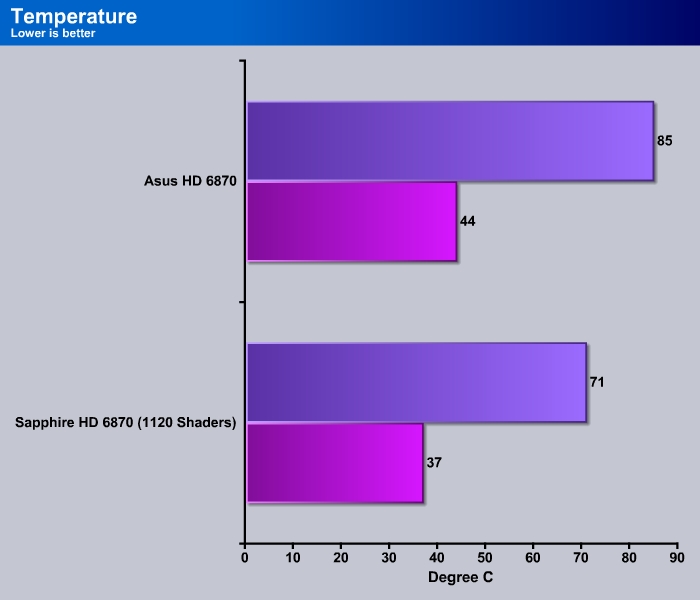

TEMPERATURES AND NOISE LEVEL

Conclusion

We already know that the HD 6870 is a good midrange card that offers solid performance at resolutions up to 1920×1080. It is often able to keep up with the HD5870 and sometimes outperforms the GTX 460 1GB, though it falls just a bit short of the GTX 470.

The Asus EAH6870 is actually one of the first factory-overclocked cards in the HD6870 lineup. Even though it is overclocked only 15MHz above the reference design, that is nonetheless a free performance boost out of the box. The good news is that ASUS keeps the price competitive despite the factory overclock: a quick search on Newegg shows the card listed at $239.99 (unfortunately, it is currently out of stock), the same as other HD6870s and even cheaper than some manufacturers’ cards. Users get a slight bump in performance without incurring extra cost.

We believe ASUS could have improved this card even more by fitting its own heatsink, because the card is slightly noisier than we would have liked. Under normal use the fan noise is acceptable, but under heavy load we can definitely hear the fan. Worse, we feel the cooling actually hurts the card’s overclocking potential, to the point where we were only able to overclock the GPU to 1000MHz and the memory to 4772MHz.

All things considered, the ASUS HD6870 is not a terrible choice for those looking to buy a midrange graphics card. We like the new features that the HD6870 brings, such as support for up to 6 displays, the ample choice of outputs, and UVD3. ASUS’s warranty covers the card for up to 3 years, and SmartDoctor offers an easy way to overclock the card and adjust fan speeds.

| OUR VERDICT: ASUS EAH6870 |
|---|
| Summary: Clocked 15MHz higher than the reference HD6870, ASUS’s card offers a slight performance gain without incurring extra cost to the customer. This makes the Asus EAH6870 a good choice among the current HD6870s on the market. The only thing we wish to see is a quieter cooler. This card receives the Bjorn3D Seal of Approval. |

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996