NVIDIA’s release of the GeForce GTX 560 Ti comes with a new chip (the GF114). The card is intended to replace the old GTX 460 (GF104), and fill the lowest high-end slot for the GeForce 500 family.

Introduction

With the release of its newest high-performance cards, the GTX 580 and 570, which use a redesigned and optimized GF110 chip, NVIDIA was able to take the crown for producing the fastest and quietest single-GPU cards in the world. Both the GTX 580 and 570 excelled in performance, and the GTX 480 with its GF100 chip became obsolete. Unlike the GF100 chip, which had 512 CUDA cores but was able to use only 480, the GF110 lives up to its full potential and uses all 512 CUDA cores. The GTX 560 Ti is also equipped with 8 PolyMorph Engines dedicated to tessellation and additional raster engines that convert polygonal shapes to pixel fragments to allow faster culling.

The successful release of the GTX 580 and 570 has left many gaming enthusiasts wondering about the release date of the lower-budget, high-performance GTX 560. Its predecessor, the GTX 460, was a tremendous success, providing excellent performance at a significantly lower price than the GTX 470 or 480. We gave the GTX 460 in SLI our Golden Bear Award and Best Bang For the Buck Award. As of January 25, 2011, NVIDIA is officially releasing the GTX 560 Ti. In designing the GTX 560 Ti, NVIDIA tried to create a graphics card that would demonstrate devastating power and lightning-fast speed packed in a light body. NVIDIA has reinstated the old Titanium designation to emphasize the light weight and strength of this card.

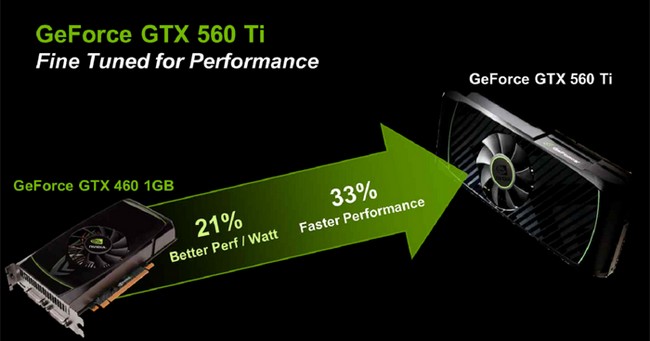

The GTX 560 Ti uses a GF114 chip, which is a redesigned and optimized version of the GF104 chip. Like all GeForce GTX 500 series cards, the GTX 560 Ti is designed to deliver more performance per watt than the GTX 460. As a result of these changes, power leakage was minimized while the clocks were significantly increased. The GTX 560 Ti has also been equipped with 384 CUDA cores and eight PolyMorph Engines in order to provide significantly higher performance than its predecessor, the GTX 460.

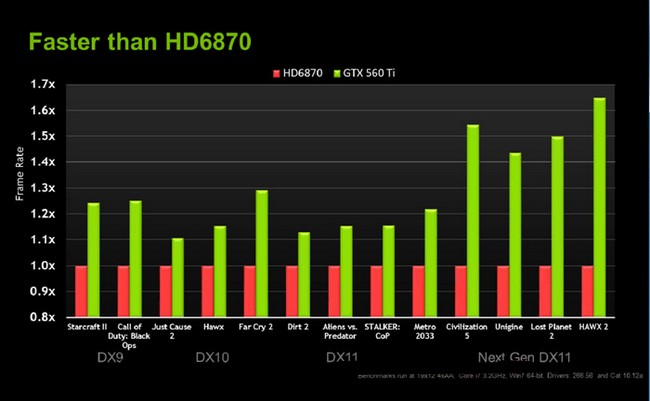

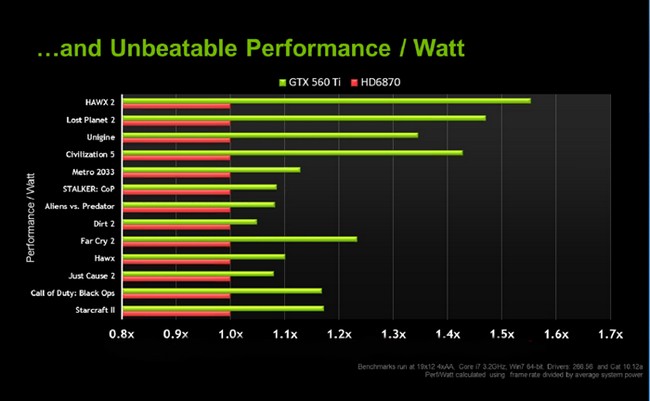

Certainly, with such modifications, the power usage has increased from 120W to 170W, but with this increase, the GTX 560 Ti is able to demonstrate 21% better performance per watt than the GTX 460. In DX11 games, NVIDIA estimates a 33% increase in performance in comparison to the GTX 460, and a 40-50% increase in performance in comparison to the HD 6870.

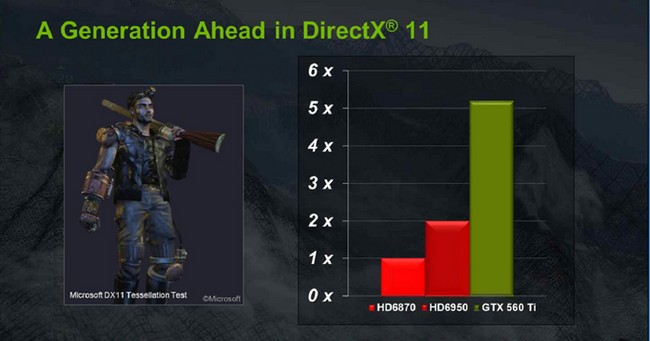

Though DirectX 11 was introduced in early 2010, only a few games have actually used DX11 to its full potential: better tessellation, improved multi-core support, and support for multi-threaded applications. According to an NVIDIA survey, 84% of gamers have not yet updated to DirectX 11. However, DX11 is expected to gain ground among gamers in 2011, with the anticipated release of DX11-compatible games such as Crysis 2 and Battlefield 1943.

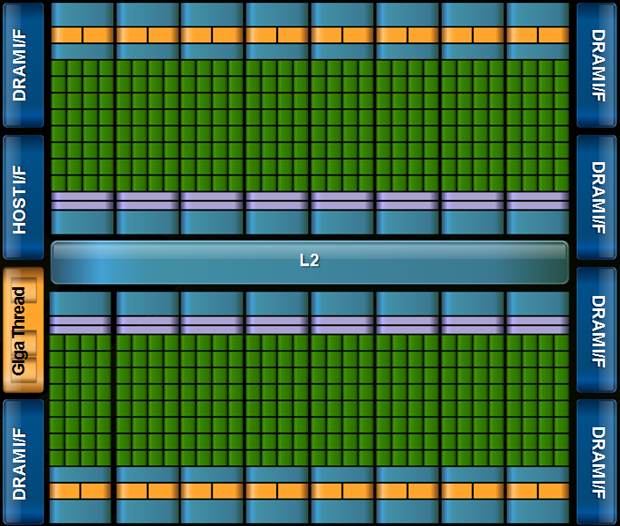

The GF114 Architecture

The GF104 Fermi architecture is based on parallel computing units (CUDA cores) that simultaneously process different data. The throughput of the processor therefore increases with the number of CUDA cores present. The GTX 460’s GF104 chip contained 8 Streaming Multiprocessors (SMs), each linked to up to 48 cores. However, only 7 of the 8 SMs were actually functional, meaning the number of usable CUDA cores was reduced to 336. Additionally, the GPU was equipped with 32 ROPs, 2 Raster engines, 64 texture units, and 8 geometry units.
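The core counts follow directly from the SM layout described above. As a minimal sketch (the function name is ours, used purely for illustration):

```python
CORES_PER_SM = 48  # CUDA cores per Streaming Multiprocessor on GF104, per the text


def usable_cuda_cores(active_sms: int, cores_per_sm: int = CORES_PER_SM) -> int:
    """Return the number of usable CUDA cores for a given count of active SMs."""
    return active_sms * cores_per_sm


print(usable_cuda_cores(8))  # all 8 SMs enabled: 384
print(usable_cuda_cores(7))  # GTX 460 with one SM disabled: 336
```

Enabling the eighth SM is what takes the chip from 336 to 384 cores.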

NVIDIA completely redesigned the GTX 460. The new GF114 GPU now supports 384 CUDA cores. Additional Raster Engines and PolyMorph engines were added to provide better tessellation support and improved polygon-to-pixel conversion. The total memory bandwidth for the GeForce GTX 560 Ti has increased to 128.3 GB/s.
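The 128.3 GB/s figure is consistent with the usual peak-bandwidth formula: bus width in bytes multiplied by the effective data rate. The 256-bit bus and 4008 MT/s effective GDDR5 rate used below are not stated in this article; we are assuming them from the card's published reference specifications, so treat this as a sanity check rather than a measurement.

```python
def memory_bandwidth_gbs(bus_width_bits: int, effective_rate_mtps: float) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_rate_mtps * 1e6 / 1e9


# Assumed reference values (not from this article): 256-bit bus, 4008 MT/s effective.
print(round(memory_bandwidth_gbs(256, 4008), 1))  # 128.3
```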

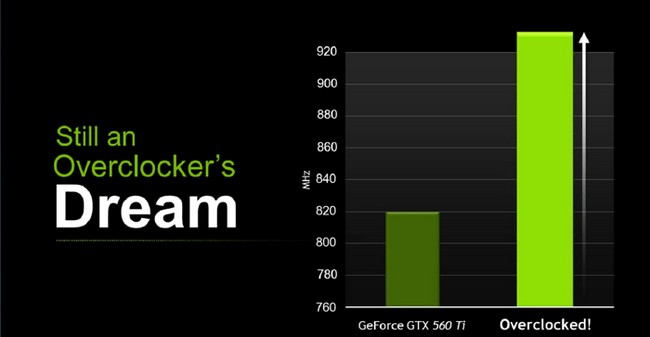

Along with the larger number of active CUDA cores, the default clock speed for a stock GTX 560 Ti was raised to 820 MHz, which requires more power. Changes intended to lower temperatures were made at the transistor level to ensure that the card delivers excellent performance without excessive power loss. Compared to the GTX 460, the overall performance of the GTX 560 Ti increased by 33%, and the performance per watt went up by 21%.
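Taken together, the two percentages imply how much the power draw rose. Performance per watt is performance divided by power, so the power ratio is the performance ratio divided by the efficiency ratio. The sketch below uses only the 33% and 21% figures; it is derived arithmetic, not a measurement, and need not match rated board power numbers.

```python
def implied_power_ratio(perf_gain: float, perf_per_watt_gain: float) -> float:
    """Power ratio implied by a performance gain and a performance-per-watt gain.

    Both gains are fractions, e.g. 0.33 for +33%.
    """
    return (1 + perf_gain) / (1 + perf_per_watt_gain)


ratio = implied_power_ratio(0.33, 0.21)
print(f"Implied power increase: {(ratio - 1) * 100:.1f}%")  # about 9.9%
```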

Not only is the GTX 560 Ti faster than the GTX 460, it also demonstrates a drastic increase in performance over the AMD HD 6870, which is most noticeable in DX11 games. NVIDIA claims a 1.5-1.65x increase in performance in recent games like Civilization 5, Lost Planet 2, and HAWX 2. We’ll test this contention later on.

The GTX 560 Ti is equipped with 8 PolyMorph engines in order to provide a great DirectX 11 experience. NVIDIA sees DirectX 11 as the future of the gaming industry and is gearing its products toward the 84% of gamers who have not yet switched to DirectX 11, so that they can enjoy effects such as the depth of field implemented in newer games. NVIDIA’s cards are one generation ahead in terms of DirectX 11, and with 8 PolyMorph engines against the 2 equivalent geometry engines of the AMD HD 6950, the GTX 560 Ti shows 2.5x as much performance as the HD 6950.

Due to the modifications made to accommodate 384 CUDA cores, the power consumption has increased, but the card is still supplied by two 6-pin power connectors and has an estimated consumption of 170 W. However, the card was designed to deliver better performance per watt. NVIDIA claims that in comparison to the AMD HD 5970, the GTX 560 Ti has up to 50% greater efficiency in power consumption.

With a clock speed of 820 MHz, this card leaves plenty of room for overclockers. It is also important to note that NVIDIA has implemented power monitoring features that monitor each 12 V rail. This feature allows the user to experiment with voltage at a lower risk of damaging the card: once the threshold voltage is reached, the card cuts power in order to avoid damage. This feature, common to the whole 500 series, differs from older cards, which would simply downclock the GPU when the card reached critical temperatures. An overclocked version of the GTX 560 Ti will also be available. The power monitoring feature is not part of the reference design, and whether or not to include it will be the vendors’ decision.

What is CUDA?

CUDA is NVIDIA’s parallel computing architecture that enables dramatic increases in computing performance by harnessing the power of the GPU (graphics processing unit).

With millions of CUDA-enabled GPUs sold to date, software developers, scientists and researchers are finding broad-ranging uses for CUDA, including image and video processing, computational biology and chemistry, fluid dynamics simulation, CT image reconstruction, seismic analysis, ray tracing and much more.

Computing is evolving from “central processing” on the CPU to “co-processing” on the CPU and GPU. To enable this new computing paradigm, NVIDIA invented the CUDA parallel computing architecture that is now shipping in GeForce, ION, Quadro, and Tesla GPUs, representing a significant installed base for application developers.

In the consumer market, nearly every major consumer video application has been, or will soon be, accelerated by CUDA, including products from Elemental Technologies, MotionDSP and LoiLo, Inc.

CUDA has been enthusiastically received in the area of scientific research. For example, CUDA now accelerates AMBER, a molecular dynamics simulation program used by more than 60,000 researchers in academia and pharmaceutical companies worldwide to accelerate new drug discovery.

In the financial market, Numerix and CompatibL announced CUDA support for a new counterparty risk application and achieved an 18X speedup. Numerix is used by nearly 400 financial institutions.

An indicator of CUDA adoption is the ramp of the Tesla GPU for GPU computing. There are now more than 700 GPU clusters installed around the world at Fortune 500 companies ranging from Schlumberger and Chevron in the energy sector to BNP Paribas in banking.

And with the recent launches of Microsoft Windows 7 and Apple Snow Leopard, GPU computing is going mainstream. In these new operating systems, the GPU will not only be the graphics processor, but also a general purpose parallel processor accessible to any application.


PhysX

Some Games that use PhysX (Not all inclusive)

| Game | Description |
| --- | --- |
| Batman: Arkham Asylum | Watch Arkham Asylum come to life with NVIDIA® PhysX™ technology! You’ll experience ultra-realistic effects such as pillars, tile, and statues that dynamically destruct with visual explosiveness. Debris and paper react to the environment and the force created as characters battle each other; smoke and fog will react and flow naturally to character movement. Immerse yourself in the realism of Batman Arkham Asylum with NVIDIA PhysX technology. |
| Darkest of Days | Darkest of Days is a historically based FPS where gamers will travel back and forth through time to experience history’s “darkest days”. The player uses period and future weapons as they fight their way through some of the epic battles in history. The time travel aspects of the game lead the player on missions where they at times need to fight on both sides of a war. |
| Sacred 2 – Fallen Angel | In Sacred 2 – Fallen Angel, you assume the role of a character and delve into a thrilling story full of side quests and secrets that you will have to unravel. Breathtaking combat arts and sophisticated spells are waiting to be learned. A multitude of weapons and items will be available, and you will choose which of your character’s attributes you will enhance with these items in order to create a unique and distinct hero. |
| Dark Void | Dark Void is a sci-fi action-adventure game that combines an adrenaline-fuelled blend of aerial and ground-pounding combat. Set in a parallel universe called “The Void,” players take on the role of Will, a pilot dropped into incredible circumstances within the mysterious Void. This unlikely hero soon finds himself swept into a desperate struggle for survival. |
| Cryostasis | Cryostasis puts you in 1968 at the Arctic Circle, Russian North Pole. The main character, Alexander Nesterov, is a meteorologist accidentally caught inside the old nuclear icebreaker North Wind, frozen in the ice desert for decades. Nesterov’s mission is to investigate the mystery of the ship’s captain’s death, or, as it may well be, a murder. |
| Mirror’s Edge | In a city where information is heavily monitored, agile couriers called Runners transport sensitive data away from prying eyes. In this seemingly utopian paradise of Mirror’s Edge, a crime has been committed and now you are being hunted. |

What is NVIDIA PhysX Technology?

NVIDIA® PhysX® is a powerful physics engine enabling real-time physics in leading edge PC games. PhysX software is widely adopted by over 150 games and is used by more than 10,000 developers. PhysX is optimized for hardware acceleration by massively parallel processors. GeForce GPUs with PhysX provide an exponential increase in physics processing power taking gaming physics to the next level.

What is physics for gaming and why is it important?

Physics is the next big thing in gaming. It’s all about how objects in your game move, interact, and react to the environment around them. Without physics in many of today’s games, objects just don’t seem to act the way you’d want or expect them to in real life. Currently, most of the action is limited to pre-scripted or ‘canned’ animations triggered by in-game events like a gunshot striking a wall. Even the most powerful weapons can leave little more than a smudge on the thinnest of walls; and every opponent you take out, falls in the same pre-determined fashion. Players are left with a game that looks fine, but is missing the sense of realism necessary to make the experience truly immersive.

With NVIDIA PhysX technology, game worlds literally come to life: walls can be torn down, glass can be shattered, trees bend in the wind, and water flows with body and force. NVIDIA GeForce GPUs with PhysX deliver the computing horsepower necessary to enable true, advanced physics in the next generation of game titles making canned animation effects a thing of the past.

Which NVIDIA GeForce GPUs support PhysX?

The minimum requirement to support GPU-accelerated PhysX is a GeForce 8-series or later GPU with a minimum of 32 cores and a minimum of 256MB dedicated graphics memory. However, each PhysX application has its own GPU and memory recommendations. In general, 512MB of graphics memory is recommended unless you have a GPU that is dedicated to PhysX.

How does PhysX work with SLI and multi-GPU configurations?

When two, three, or four matched GPUs are working in SLI, PhysX runs on one GPU, while graphics rendering runs on all GPUs. The NVIDIA drivers optimize the available resources across all GPUs to balance PhysX computation and graphics rendering. Therefore users can expect much higher frame rates and a better overall experience with SLI.

A new configuration that’s now possible with PhysX is 2 non-matched (heterogeneous) GPUs. In this configuration, one GPU renders graphics (typically the more powerful GPU) while the second GPU is completely dedicated to PhysX. By offloading PhysX to a dedicated GPU, users will experience smoother gaming.

Finally we can put the above two configurations all into 1 PC! This would be SLI plus a dedicated PhysX GPU. Similarly to the 2 heterogeneous GPU case, graphics rendering takes place in the GPUs now connected in SLI while the non-matched GPU is dedicated to PhysX computation.

Why is a GPU good for physics processing?

The multithreaded PhysX engine was designed specifically for hardware acceleration in massively parallel environments. GPUs are the natural place to compute physics calculations because, like graphics, physics processing is driven by thousands of parallel computations. Today, NVIDIA’s GPUs have as many as 480 cores, so they are well-suited to take advantage of PhysX software. NVIDIA is committed to making the gaming experience exciting, dynamic, and vivid. The combination of graphics and physics impacts the way a virtual world looks and behaves.

Direct Compute

DirectCompute Support on NVIDIA’s CUDA Architecture GPUs

Microsoft’s DirectCompute is a new GPU Computing API that runs on NVIDIA’s current CUDA architecture under both Windows Vista and Windows 7. DirectCompute is supported on current DX10-class and DX11 GPUs. It allows developers to harness the massive parallel computing power of NVIDIA GPUs to create compelling computing applications in consumer and professional markets.

As part of the DirectCompute presentation at the Game Developer Conference (GDC) in March 2009 in San Francisco, CA, NVIDIA showed three demonstrations running on a currently available NVIDIA GeForce GTX 280 GPU.

As a processor company, NVIDIA enthusiastically supports all languages and APIs that enable developers to access the parallel processing power of the GPU. In addition to DirectCompute and NVIDIA’s CUDA C extensions, there are other programming models available, including OpenCL™. A Fortran language solution is also in development and is available in early access from The Portland Group.

NVIDIA has a long history of embracing and supporting standards, since a wider choice of languages improves the number and scope of applications that can exploit parallel computing on the GPU. With C and Fortran language support here today and OpenCL and DirectCompute available this year, GPU Computing is now mainstream. NVIDIA is the only processor company to offer this breadth of development environments for the GPU.

OpenCL

OpenCL (Open Computing Language) is a new cross-vendor standard for heterogeneous computing that runs on the CUDA architecture. Using OpenCL, developers will be able to harness the massive parallel computing power of NVIDIA GPUs to create compelling computing applications. As the OpenCL standard matures and is supported on processors from other vendors, NVIDIA will continue to provide the drivers, tools and training resources developers need to create GPU accelerated applications.

In partnership with NVIDIA, OpenCL was submitted to the Khronos Group by Apple in the summer of 2008 with the goal of forging a cross platform environment for general purpose computing on GPUs. NVIDIA has chaired the industry working group that defines the OpenCL standard since its inception and shipped the world’s first conformant GPU implementation for both Windows and Linux in June 2009.

NVIDIA has been delivering OpenCL support in end-user production drivers since October 2009, supporting OpenCL on all 180,000,000+ CUDA architecture GPUs shipped since 2006.

NVIDIA’s Industry-leading support for OpenCL:

2010

March – NVIDIA releases updated R195 drivers with the Khronos-approved ICD, enabling applications to use OpenCL on NVIDIA GPUs and other processors at the same time

January – NVIDIA releases updated R195 drivers, supporting developer-requested OpenCL extensions for Direct3D9/10/11 buffer sharing and loop unrolling

January – Khronos Group ratifies the ICD specification contributed by NVIDIA, enabling applications to use multiple OpenCL implementations concurrently

2009

November – NVIDIA releases R195 drivers with support for optional features in the OpenCL v1.0 specification such as double precision math operations and OpenGL buffer sharing

October – NVIDIA hosts the GPU Technology Conference, providing OpenCL training for an additional 500+ developers

September – NVIDIA completes OpenCL training for over 1000 developers via free webinars

September – NVIDIA begins shipping OpenCL 1.0 conformant support in all end user (public) driver packages for Windows and Linux

September – NVIDIA releases the OpenCL Visual Profiler, the industry’s first hardware performance profiling tool for OpenCL applications

July – NVIDIA hosts first “Introduction to GPU Computing and OpenCL” and “Best Practices for OpenCL Programming, Advanced” webinars for developers

July – NVIDIA releases the NVIDIA OpenCL Best Practices Guide, packed with optimization techniques and guidelines for achieving fast, accurate results with OpenCL

July – NVIDIA contributes source code and specification for an Installable Client Driver (ICD) to the Khronos OpenCL Working Group, with the goal of enabling applications to use multiple OpenCL implementations concurrently on GPUs, CPUs and other types of processors

June – NVIDIA releases industry-first OpenCL 1.0 conformant drivers and developer SDK

April – NVIDIA releases industry first OpenCL 1.0 GPU drivers for Windows and Linux, accompanied by the 100+ page NVIDIA OpenCL Programming Guide, an OpenCL JumpStart Guide showing developers how to port existing code from CUDA C to OpenCL, and OpenCL developer forums

2008

December – NVIDIA shows off the world’s first OpenCL GPU demonstration, running on an NVIDIA laptop GPU at

June – Apple submits OpenCL proposal to Khronos Group; NVIDIA volunteers to chair the newly formed OpenCL Working Group

2007

December – NVIDIA Tesla product wins PC Magazine Technical Excellence Award

June – NVIDIA launches the Tesla C870, the first GPU designed for High Performance Computing

May – NVIDIA releases first CUDA architecture GPUs capable of running OpenCL in laptops & workstations

2006

November – NVIDIA releases the first CUDA architecture GPU capable of running OpenCL

Pictures & Impressions


The GeForce GTX 560 Ti is designed along the same lines as the GTX 570 and 580. NVIDIA retains the green and black color scheme, and its logo is visible on both the fan and the PCB just above the PCI Express contacts. The GTX 560 Ti is equipped with a single fan, which is sufficient to provide adequate cooling to the card. Just like the GTX 460, the GTX 560 Ti is extremely energy efficient and is designed to provide maximum performance with minimum power consumption. Vendor cards with custom cooler designs may offer significantly better cooling performance. The card itself is light, weighing approximately 0.7 kg, and is only 9 inches in length, meaning it will fit in most cases.

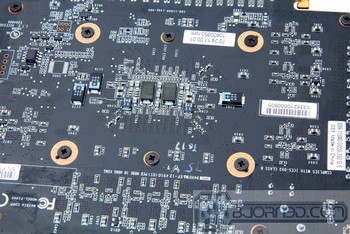

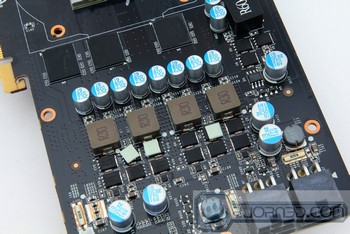

Looking at the inner layout of the GTX 560 Ti, significant changes in transistor positioning can be observed. The new GF114 GPU, clocked at 822 MHz, is equipped with another 48 CUDA cores. On the back of the PCB, two power regulators can be observed. Just like on the GTX 570 and GTX 580, Samsung memory chips make up the 1GB of GDDR5 memory.

Testing & Methodology

The OS we use is Windows 7 Pro 64bit with all patches and updates applied. We also use the latest drivers available for the motherboard and any devices attached to the computer. We do not disable background tasks or tweak the OS or system in any way. We turn off drive indexing and daily defragging. We also turn off Prefetch and Superfetch. This is not an attempt to produce bigger benchmark numbers. Drive indexing and defragging can interfere with testing and produce confusing numbers. If a test were to be run while a drive was being indexed or defragged, and then the same test was later run when these processes were off, the two results would be contradictory and erroneous. As we cannot control when defragging and indexing occur precisely enough to guarantee that they won’t interfere with testing, we opt to disable the features entirely.

Prefetch tries to predict what users will load the next time they boot the machine by caching the relevant files and storing them for later use. We want to learn how the program runs without any of the files being cached, so we disable it; that way we do not have to clear the prefetch cache before each test run to get accurate numbers. Lastly, we disable Superfetch. Superfetch loads often-used programs into memory. It is one of the reasons that Windows Vista occupies so much memory: Vista fills the memory in an attempt to predict what users will load. Having one test run with files cached and another with the files uncached would result in inaccurate numbers. Again, since we can’t control its timing precisely, we turn it off. Because these four features can potentially interfere with benchmarking and are out of our control, we disable them. We do not disable anything else.

We ran each test a total of 3 times, and reported the average score from all three scores. Benchmark screenshots are of the median result. Anomalous results were discounted and the benchmarks were rerun.
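To make the reporting rule concrete, here is a minimal sketch of how three runs reduce to a reported average and a median run for the screenshot. The FPS values are hypothetical placeholders, not results from this review, and the function name is ours.

```python
from statistics import mean, median


def summarize_runs(scores):
    """Return (average, median) for one benchmark's repeated runs."""
    return mean(scores), median(scores)


# Hypothetical FPS results from three runs of one test (not measured data).
runs = [41.8, 42.5, 42.1]
avg, med = summarize_runs(runs)
print(f"reported average: {avg:.2f} FPS, screenshot from the {med} FPS run")
```

With three runs the median is always one of the actual runs, which is why it suits the screenshot, while the average smooths out small run-to-run variation.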

Please note that due to new driver releases with performance improvements, we rebenched every card shown in the results section. The results here will be different than previous reviews due to the performance increases in drivers.

| Test Rig | |
| --- | --- |
| Case | In-Win Dragon Rider |
| CPU | Intel Core i7 930 @ 3.8GHz |
| Motherboard | ASUS Rampage III Extreme ROG – LGA1366 |
| RAM | 3x 2GB Corsair 1333MHz |
| CPU Cooler | Thermalright True Black 120 with 2x Noctua NF-P12 Fans |
| Drives | 3x Seagate Barracuda 1TB 7200.12 Drives |
| Optical | ASUS DVD-Burner |
| GPU | Nvidia GeForce GTX 560 Ti; ASUS ENGTX580 1536MB; Nvidia GeForce GTX 580 1536MB; Nvidia GeForce GTX 570 1536MB; Galaxy GeForce GTX 480 1536MB; Palit GeForce GTX460 Sonic Platinum 1GB in SLI; ASUS Radeon HD6870; AMD Radeon HD5870 |
| Case Fans | 3x Noctua NF-P12 Fans – Side; 3x In-Win 120mm Fans – Front, Back, Top |
| Additional Cards | Ineo I-NPC01 2 Ports PCI-Express Adapter for USB 3.0 |
| PSU | SeaSonic X750 Gold 750W |
| Mouse | Logitech G5 |
| Keyboard | Logitech G15 |

Synthetic Benchmarks & Games

We will use the following applications to benchmark the performance of the Nvidia GeForce GTX 560 Ti video card.

| Synthetic Benchmarks & Games |
| --- |
| 3DMark Vantage |
| Metro 2033 |
| Stone Giant |
| Unigine Heaven v.2.1 |
| Crysis |
| Crysis Warhead |
| HAWX 2 |

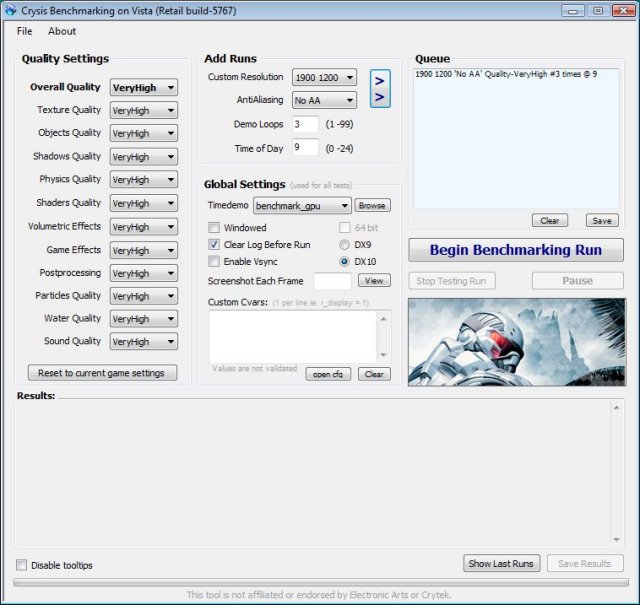

Crysis v. 1.21

Crysis is the most highly anticipated game to hit the market in the last several years. Crysis is based on the CryENGINE™ 2 developed by Crytek. The CryENGINE™ 2 offers real time editing, bump mapping, dynamic lights, network system, integrated physics system, shaders, shadows, and a dynamic music system, just to name a few of the state-of-the-art features that are incorporated into Crysis. As one might expect with this number of features, the game is extremely demanding of system resources, especially the GPU. We expect Crysis will soon be replaced by its sequel, the DX11 compatible Crysis 2.

After running the Crysis benchmark at a resolution of 1680×1050, there is a clear difference in the performance of the GTX 560 Ti in comparison to the GTX 460. Considering that the GTX 460 is overclocked to 800 MHz, a difference of 5 frames per second amounts to a 15% increase, which is very significant. In comparison to the AMD cards, the performance is slightly lower, but this could be due to the fact that the game is run in DX10 rather than DX11.
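For readers who want to check the percentage, a frame-rate delta and a relative gain together pin down the baseline. The sketch below works only from the 5 FPS and 15% figures quoted above; the helper names are ours, and the resulting baseline is an inferred value, not a number read off the charts.

```python
def percent_increase(baseline_fps: float, new_fps: float) -> float:
    """Relative gain of new_fps over baseline_fps, in percent."""
    return (new_fps - baseline_fps) / baseline_fps * 100


def implied_baseline(delta_fps: float, pct_gain: float) -> float:
    """Baseline frame rate at which a gain of delta_fps equals pct_gain percent."""
    return delta_fps / (pct_gain / 100)


base = implied_baseline(5, 15)  # roughly 33.3 FPS
print(round(base, 1), round(percent_increase(base, base + 5), 1))
```

In other words, a 5 FPS lead is a 15% gain only when the slower card averages in the low 30s; the same 5 FPS at 60 FPS would be closer to 8%.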

The results at a resolution of 1920×1200 are consistent with those at 1680×1050, and overall, the GTX 560 Ti demonstrates a solid 15% increase over the overclocked GTX 460. The difference between the GTX 560 Ti and the GTX 570 is around 8 frames per second, which is the same proportion as between the GTX 460 and the GTX 470.

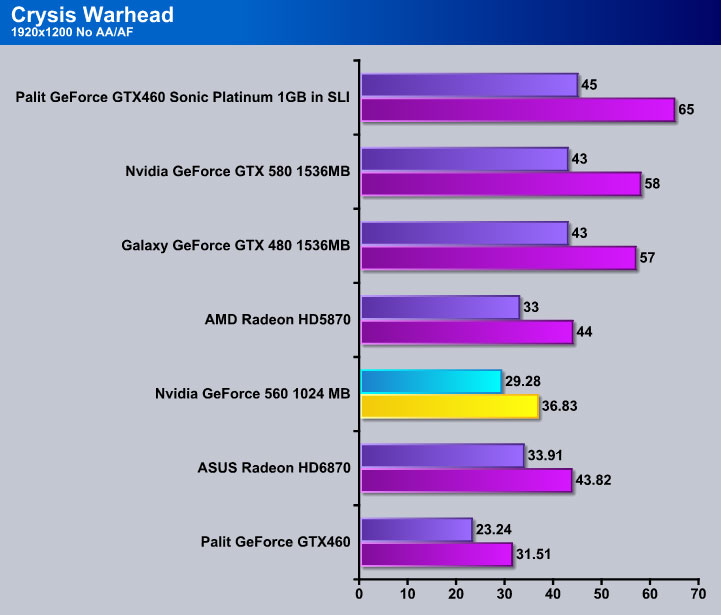

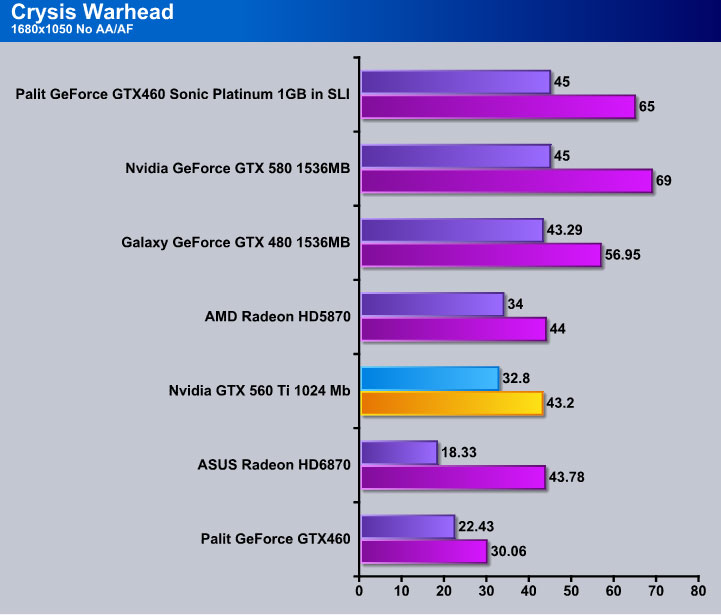

Crysis Warhead

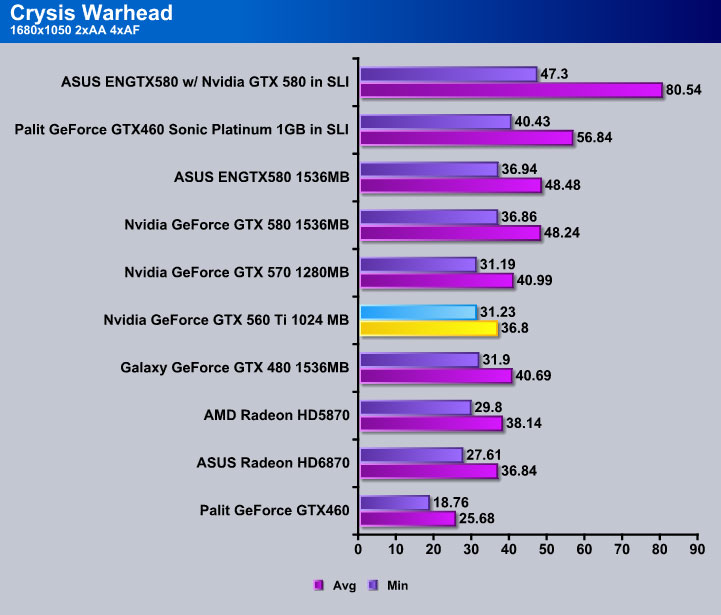

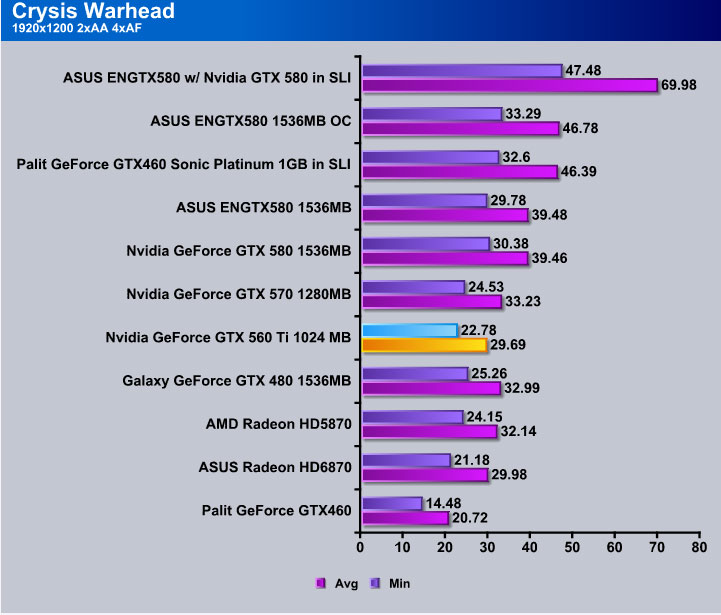

Crysis Warhead is the much anticipated standalone expansion to Crysis, featuring an updated CryENGINE™ 2 with better optimization. It was one of the most anticipated titles of 2008.

Crysis Warhead shows a significant performance increase for the GeForce GTX 560 Ti in comparison to the Palit Sonic Platinum GTX 460. With an 11 FPS increase at 2xAA and 13 FPS with AA disabled, the performance increase amounts to about 30%, placing it right next to the AMD cards.

When running Crysis Warhead at a resolution of 1920×1200, the performance results differ from those obtained at 1680×1050. In fact, the results are more consistent with the previous benchmark. Overall, the performance increase of the GTX 560 Ti over the GTX 460 is estimated to be 30% (15% over the factory-overclocked GTX 460).

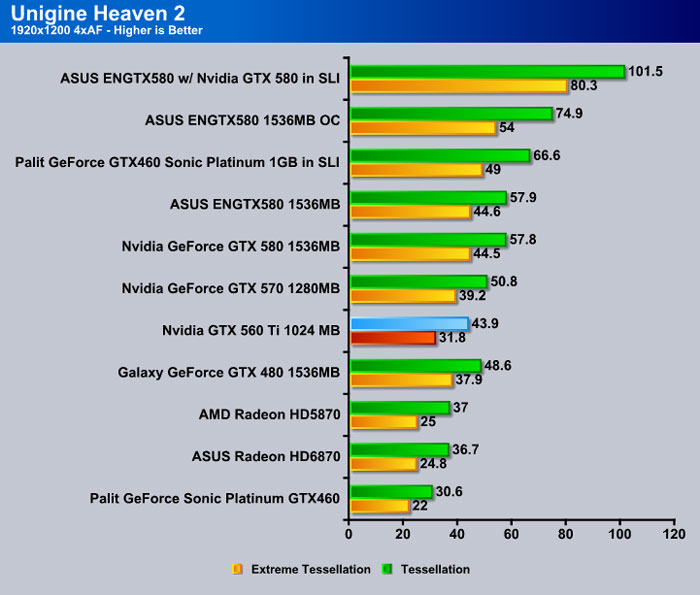

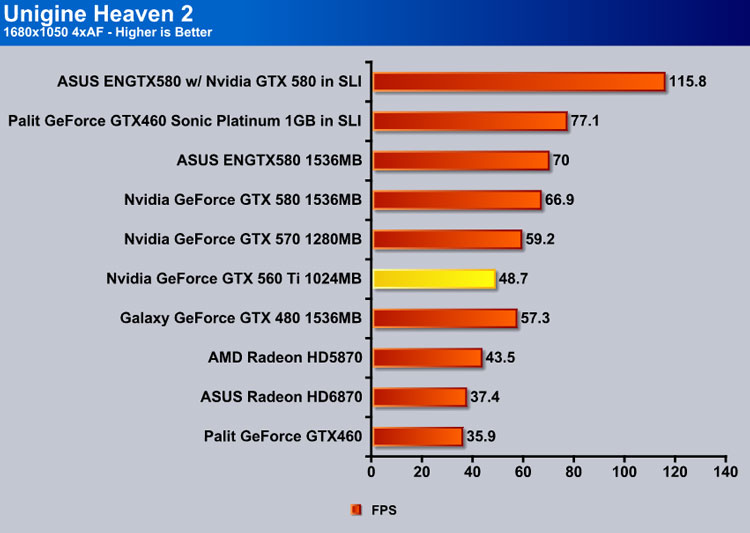

Unigine Heaven 2.1

Unigine Heaven is a benchmark program based on Unigine Corp’s latest engine, Unigine. The engine features DirectX 11, Hardware tessellation, DirectCompute, and Shader Model 5.0. All of these new technologies combined with the ability to run each card through the same exact test means this benchmark should be in our arsenal for a long time.

While the Crysis and Crysis Warhead benchmarks were run in DX10, the GTX 560 Ti is specifically designed to excel in DirectX 11 benchmarks. The Unigine results confirm this: the GTX 560 Ti demonstrated a performance increase of around 20%, surpassing its AMD competitors. It is important to note that most recent games (and all upcoming games) will support DX11, and therefore DirectX 10 results are only relevant for those who wish to stick to older games.

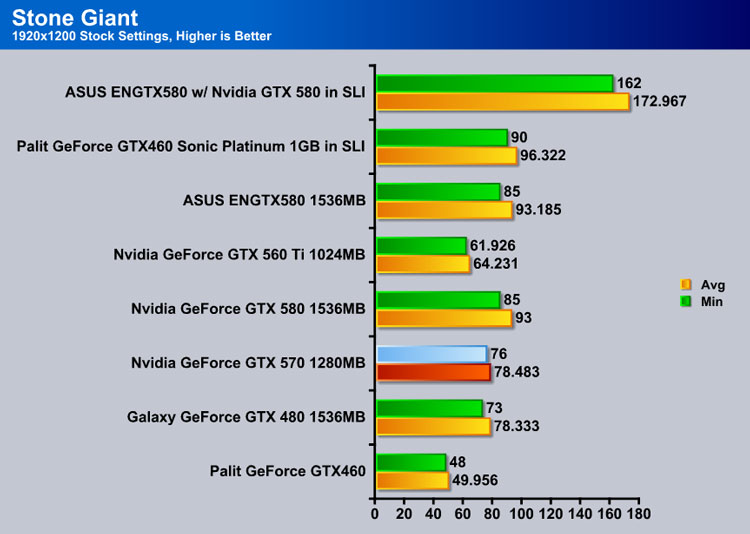

Stone Giant

We used a 60-second Fraps run and recorded the Min/Avg/Max FPS rather than relying on the built-in utility for determining FPS. We started the benchmark, triggered Fraps, and let it run on stock settings for 60 seconds without making any adjustments or changing camera angles. We just let it run at default and had Fraps record the FPS and log them to a file for us.
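The Min/Avg/Max figures can be recomputed from a per-second FPS log along the following lines. This is a sketch under an assumption: the log is taken to be plain text with one FPS sample per line, possibly preceded by a header line. The exact layout of the file Fraps writes is not described in this review, and the sample values are hypothetical.

```python
def fps_stats(lines):
    """Return (min, avg, max) from text lines holding one FPS sample each.

    Non-numeric lines (such as a header or blank line) are skipped.
    """
    samples = []
    for line in lines:
        try:
            samples.append(float(line.strip()))
        except ValueError:
            continue  # skip header or blank lines
    if not samples:
        raise ValueError("no FPS samples found")
    return min(samples), sum(samples) / len(samples), max(samples)


# Hypothetical log contents, not measured data.
example_log = ["FPS", "31", "35", "40", "34"]
print(fps_stats(example_log))  # (31.0, 35.0, 40.0)
```

Because the function accepts any iterable of lines, an open file object can be passed in directly in place of the example list.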

Key features of the BitSquid Tech (PC version) include:

- Highly parallel, data oriented design

- Support for all new DX11 GPUs, including the NVIDIA GeForce GTX 400 Series and AMD Radeon 5000 series

- Compute Shader 5 based depth of field effects

- Dynamic level of detail through displacement map tessellation

- Stereoscopic 3D support for NVIDIA 3dVision

“With advanced tessellation scenes, and high levels of geometry, Stone Giant will allow consumers to test the DX11-credentials of their new graphics cards,” said Tobias Persson, Founder and Senior Graphics Architect at BitSquid. “We believe that the great image fidelity seen in Stone Giant, made possible by the advanced features of DirectX 11, is something that we will come to expect in future games.”

“At Fatshark, we have been creating the art content seen in Stone Giant,” said Martin Wahlund, CEO of Fatshark. “It has been amazing to work with a bleeding edge engine, without the usual geometric limitations seen in current games”.

Stone Giant confirms an amazing result for the GTX 560 Ti. The performance increase is in the range of 20-50% over the Palit Sonic Platinum. In conclusion, GTX 560 Ti is definitely geared towards tessellation.

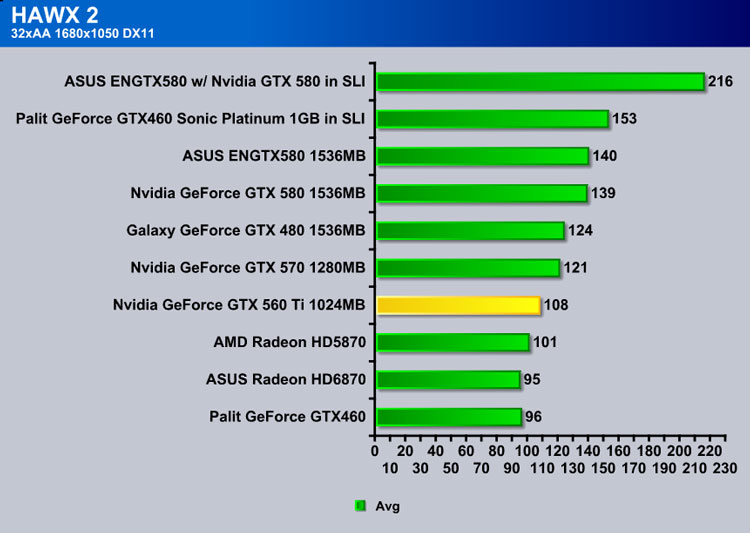

HAWX 2

Tom Clancy’s H.A.W.X. 2 plunges fans into an explosive environment where they can become elite aerial soldiers in control of the world’s most technologically advanced aircraft. The game will appeal to a wide array of gamers as players will have the chance to control exceptional pilots trained to use cutting edge technology in amazing aerial warfare missions.

Developed by Ubisoft, H.A.W.X. 2 challenges you to become an elite aerial soldier in control of the world’s most technologically advanced aircraft. The aerial warfare missions enable you to take to the skies using cutting edge technology.

The GeForce GTX 560 Ti surpasses the AMD Radeon HD 5870 by 4 FPS at 1920×1200 and 7 FPS at 1680×1050. It also surpasses the NVIDIA GTX 460 by 12 FPS at 1680×1050 and 10 FPS at 1920×1200.

Interestingly, NVIDIA claims that the GTX 560 Ti performs over 1.6 times as fast as the AMD Radeon HD 6870 in HAWX 2, though our own results show a markedly smaller difference. This may be due to the difference in testing setups.

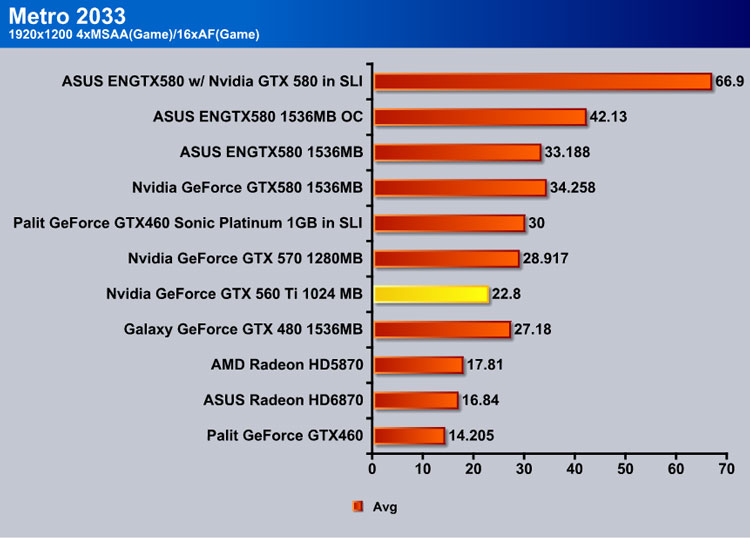

Metro 2033

Metro 2033 is an action-oriented video game blending survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky. It was developed by 4A Games in Ukraine and released in March 2010 for the Xbox 360 and Microsoft Windows. In March 2009, 4A Games announced a partnership with Glukhovsky to collaborate on the game. The game was announced a few months later at the 2009 Games Convention in Leipzig; a first trailer came along with the announcement. When the game was announced, it had the subtitle “The Last Refuge,” but this subtitle is no longer being used.

The game is played from the perspective of a character named Artyom. The story takes place in post-apocalyptic Moscow, mostly inside the metro system where the player’s character was raised (he was born before the war, in an unharmed city). The player must occasionally go above ground on certain missions and scavenge for valuables.

The game’s locations reflect the dark atmosphere of real metro tunnels, albeit in a more sinister and bizarre fashion. Strange phenomena and noises are frequent, and mostly the player has to rely on their flashlight and quick thinking to find their way around in total darkness. Even more lethal is the surface, as it is severely irradiated and a gas mask must be worn at all times due to the toxic air. Water can often be contaminated as well, and short contacts can cause heavy damage to the player, or even kill outright.

Often, locations have an intricate layout, and the game lacks any form of map, leaving the player to try and find their objectives only through a compass. Weapons cannot be used while viewing it, leaving the player vulnerable to attack during navigation. The game also lacks a health meter, relying on audible heart rate and blood spatters on the screen to show the player how close he or she is to death. There is no on-screen indicator to tell how long the player has until the gas mask’s filters begin to fail, save for a wristwatch that is divided into three zones, signaling how much the filter can endure, so players must continue to check it every time they wish to know how long they have until their oxygen runs out. Players must replace the filters, which are found throughout the game. The gas mask also indicates damage in the form of visible cracks, warning the player a new mask is needed. The game does feature traditional HUD elements, however, such as an ammunition indicator and a list of how many gas mask filters and adrenaline (health) shots remain.

Another important factor is ammunition management. As money lost its value in the game’s setting, cartridges are used as currency. There are two kinds of bullets that can be found: those of poor quality made by the metro-dwellers, and those made before the nuclear war. The ones made by the metro-dwellers are more common, but less effective against the dark denizens of the underground labyrinth. The pre-war ones, which are rare and highly powerful, are also necessary to purchase gear or items such as filters for the gas mask and med kits. Thus, the game involves careful resource management.

We left Metro 2033 on all high settings with Depth of Field on.

While playing Metro 2033, we noticed a significant performance improvement for the GTX 560 Ti over its competition. While it is not able to provide quite the same experience as the GTX 570, it is capable of running the game at 4x AA smoothly enough to make it playable, even in the most graphically intense areas of gameplay. At 1920×1200, the surface averaged a solid 19 FPS, while the metro itself averaged close to 24 FPS. In terms of gaming experience, the GTX 560 Ti is significantly smoother than the Palit Sonic Platinum GTX 460.

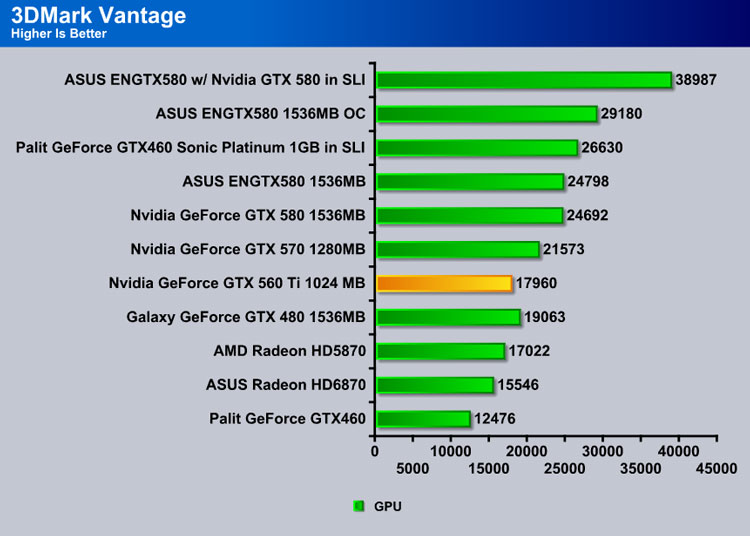

3DMark Vantage

For complete information on 3DMark Vantage, please follow this link:

www.futuremark.com/benchmarks/3dmarkvantage/features/

3DMark Vantage is the newest video benchmark from the gang at Futuremark. This utility is still a synthetic benchmark, but one that more closely reflects real-world gaming performance. While it is not a perfect replacement for actual game benchmarks, it has its uses. We tested our cards at the ‘Performance’ setting.

In 3DMark Vantage, the Nvidia GTX 560 Ti reached a GPU score of 17960, surpassing the AMD Radeon HD 5870 and barely missing the Galaxy GeForce GTX 480. This score came as a pleasant surprise and strongly confirmed a 44% increase in GPU performance over the GTX 460.

TEMPERATURES

To measure the temperature of the video card, we used MSI Afterburner and ran Metro 2033 for 10 minutes to find the Load temperatures for the video cards. The highest temperature was recorded. After playing for 10 minutes, Metro 2033 was turned off and we let the computer sit at the desktop for another 10 minutes before we measured the idle temperatures.

| Video Cards – Temperatures – Ambient 23C | Idle | Load (Fan Speed) |
|---|---|---|
| 2x Palit GTX 460 Sonic Platinum 1GB GDDR5 in SLI | 31C | 65C |
| Nvidia GTX 560 Ti | 30C | 64C |
| Palit GTX 460 Sonic Platinum 1GB GDDR5 | 29C | 60C |
| Galaxy GTX 480 | 53C | 81C (73%) |
| Nvidia GeForce GTX 580 | 39C | 73C (66%) |

The GTX 560 Ti runs very cool, at 30C idle and 64C under load. Compared to the GTX 460 Sonic Platinum, the temperature did not increase significantly. This card also cooled down very quickly: after running Metro 2033 for 10 minutes, the temperature returned to idle levels within 2 minutes. With the addition of the power monitor, it is practically impossible to overheat this card, making it a very safe card for overclocking.
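To put the table above in perspective, here is a minimal Python sketch that computes how far each card's temperature climbs from idle to load. The numbers are copied from our table; the dictionary layout and the `temperature_rise` helper are just illustrative names, not part of any tool we used.

```python
# Idle and load temperatures in degrees Celsius, copied from the table above.
temps_c = {
    "2x Palit GTX 460 Sonic Platinum SLI": (31, 65),
    "Nvidia GTX 560 Ti": (30, 64),
    "Palit GTX 460 Sonic Platinum": (29, 60),
    "Galaxy GTX 480": (53, 81),
    "Nvidia GeForce GTX 580": (39, 73),
}


def temperature_rise(card):
    """Return load minus idle temperature for one card (illustrative helper)."""
    idle, load = temps_c[card]
    return load - idle


for card in temps_c:
    print(f"{card}: +{temperature_rise(card)}C from idle to load")
```

By this measure the GTX 560 Ti climbs 34C under load, only 3C more than the single Palit GTX 460 Sonic Platinum.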

POWER CONSUMPTION

To get our power consumption numbers, we plugged in our Kill A Watt power measurement device. We left the system at the desktop for about 15 minutes during our temperature readings and took the idle reading. Then we ran Metro 2033 for a few minutes and recorded the highest power usage.

| Video Cards – Power Consumption | Idle | Load |
|---|---|---|
| 2x Palit GTX 460 Sonic Platinum 1GB GDDR5 in SLI | 315W | 525W |
| Palit GTX 460 Sonic Platinum 1GB GDDR5 | 249W | 408W |
| Nvidia GTX 460 1GB | 237W | 379W |
| Galaxy GTX 480 | 248W | 439W |
| Nvidia GTX 560 Ti 1GB | 260W | 412W |

The TDP of the GTX 560 Ti is only 10W higher than that of the GTX 460. In our measurements, the reference GTX 560 Ti system drew 33W more under load than the reference GTX 460 system (412W versus 379W), a cost well worth the performance increase seen in DirectX 11 games.
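For readers who want to compare the cards directly, the following Python sketch works out the differences between any two entries in the power table. The readings are the ones from our table (total draw as measured with the Kill A Watt, not the card alone); the `power_delta` helper and the shortened card names are illustrative assumptions, not an official tool.

```python
# Power draw in watts as (idle, load), copied from the table above.
power_w = {
    "2x Palit GTX 460 Sonic Platinum SLI": (315, 525),
    "Palit GTX 460 Sonic Platinum": (249, 408),
    "Nvidia GTX 460 1GB": (237, 379),
    "Galaxy GTX 480": (248, 439),
    "Nvidia GTX 560 Ti 1GB": (260, 412),
}


def power_delta(card, baseline):
    """Return (idle, load) difference in watts between card and baseline."""
    card_idle, card_load = power_w[card]
    base_idle, base_load = power_w[baseline]
    return card_idle - base_idle, card_load - base_load


idle_diff, load_diff = power_delta("Nvidia GTX 560 Ti 1GB", "Nvidia GTX 460 1GB")
print(f"GTX 560 Ti vs reference GTX 460: {idle_diff:+d}W idle, {load_diff:+d}W load")
```

Against the reference GTX 460, this gives +23W at idle and +33W under load. Against the Galaxy GTX 480, the GTX 560 Ti draws 27W less under load.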

Conclusion

In July 2010, NVIDIA released the GTX 460, which provided high performance on a fairly low budget. The new GTX 560 Ti is an optimized and completely redesigned version of the GTX 460. After rigorous testing, we were able to confirm a performance increase of up to 35% over the GTX 460. With its current performance and estimated pricing in the range of USD 249.99-269.99, the GTX 560 Ti is serious competition for AMD cards.

Some of the major changes in the design of the GTX 560 Ti include the creation of the new GF114 chip, the addition of extra tessellation features for smoother performance in DX 11 games, and most importantly, a complete rearrangement of transistors in order to provide higher performance per watt. The GF114 chip also fully supports all 384 CUDA cores. These changes have not only improved the performance of the GeForce GTX 560 Ti, but also given this card good overclocking capability by significantly lowering temperatures. While there is a slight increase in TDP in comparison to the GTX 460, the GTX 560 Ti is still one of the most efficient graphics cards currently on the market.

While the reference graphics clock is 820 MHz, overclocked versions of the GTX 560 Ti will be commercially available. According to the latest news, GIGABYTE is releasing a 1 GHz GTX 560 Ti. Tessellation performance in DirectX 11 is a defining characteristic of the new GeForce 500 series cards. Throughout the testing, a significant performance increase was observed in newer benchmarks like Unigine Heaven and Stone Giant. Overall, the design changes of the GTX 560 Ti result in marked performance increases. While the GTX 460 will still remain on the market, the GTX 560 Ti represents a more optimal variant that is bound to deliver excellent performance at a significantly lower price.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
