LSI and Seagate have teamed up to let us bring you an enthusiast RAID review. How enthusiast? LSI MegaRaid and 10 Seagate Savvio 15K.1 73.4 GB drives. RAID insanity at its best.

INTRODUCTION

The one thing all computer enthusiasts like is a rig that runs fast. We’ve found that the hard drive is often one of the bottlenecks in modern systems. A lot of enthusiasts have turned to onboard (software) RAID (Redundant Array of Inexpensive Disks) for more speed. Software RAID does relieve some of the hard drive bottleneck, but at a cost: your CPU has to spend cycles driving the array. It also uses onboard SATA ports, which aren’t always accessible if you’re using dual-slot video cards.

We’re now seeing boards like the Asus P6T7 with as many as four full PCI-E 16x slots and three PCI-E 8x slots. Expansion slots are high on enthusiasts’ wish lists, so manufacturers are building boards with more of them. Chances are you have at least one PCI-E slot open that can handle a hardware RAID card.

Hardware RAID cards come in a variety of types and capabilities. The one we’re going to look at today, together with a bevy of Seagate Savvio 15K (ST973451SS) drives, is the LSI MegaRaid (SAS 8708EM2), which can connect eight drives directly to the RAID controller. The advantage of hardware RAID is that these cards carry their own onboard processor and memory, so they take almost all of the load off the CPU and handle input/output themselves, and they run faster than any onboard RAID we’ve ever seen.

Are you ready for some seriously insane RAID action? We know that you are. We have RAID in every flavor that our LSI RAID controller will handle with the drives we have available (10 Seagate Savvio 15K SAS drives). As a point of interest, the LSI MegaRaid controller will also handle SATA drives, but we’re going past SATA and right to SAS, with its lightning-fast access times and spindle speeds up to 15K RPM. RAID, RAID, and more RAID. RAID0, RAID1, RAID5, and RAID6 are on the menu today, so sit back and enjoy this feast of RAID.

About LSI

LSI Corporation is a leading provider of innovative silicon, systems and software technologies that enable products which seamlessly bring people, information, and digital content together. We offer a broad portfolio of capabilities and services including custom and standard product ICs, adapters, systems and software that are trusted by the world’s best known brands to power leading solutions in the Storage and Networking markets.

About Seagate

As digital content, such as music, video, photos and games, becomes more integrated into everyday life, the idea of static data storage is becoming obsolete. In today’s on-demand world, you want to access, share and secure your digital content using dynamic storage solutions that give you the freedom to do business, create and interact—anytime, anywhere. From protecting treasured family photos and personal music collections to developing next-generation consumer electronics devices and large enterprise networks, Seagate delivers advanced digital storage solutions to meet the needs of today’s consumers and tomorrow’s applications.

Setting Your Content Free

When the first hard drives began shipping in 1956, only big corporations could afford the cost and space required for these one-ton behemoths. Today, digital storage is all around us. Whether you realize it or not, you are probably interacting with digital storage devices on a daily basis. Every time you visit an ATM machine, record and replay a favorite TV show on your DVR, or download a song to your portable media player, you are accessing or storing information on a hard drive. Every day, content passes through numerous types of storage devices before it makes it to your office computer or personal media player. Hard drives today are involved not only in the storing of content but also in the transfer and creation of it. In our culture of downloading and instant access, you can find a seemingly endless amount of content to download, enjoy, and share—whenever, wherever you want.

The digital storage market continues to grow at a rapid pace—fueled by the explosion in content being created and consumed, as well as new legislation requiring businesses to store specific records and information. Storage solutions today aren’t just about keeping your valuable data safe and secure but also helping your information and media be as accessible and flexible as you need it to be. Now you can access and take your content wherever you go—in your car, on your business trip, to the gym. From home entertainment consumer electronics devices to large enterprise data centers, Seagate digital storage solutions empower you to make the most of your content—for business and pleasure.

Platforming and Vertical Integration—Streamlining the Process

As the leader in the industry, Seagate has focused on building business efficiencies, such as platforming and vertical integration that give the company a competitive edge. Platforming has helped Seagate deliver a comprehensive product portfolio by allowing the company to apply one core technology platform to create multiple products. The company has applied the platforming concept to the manufacturing process, allowing Seagate to manufacture many different hard drive models on the same factory line to further increase efficiencies and improve product quality and margins.

Vertical integration also plays a large role in streamlining the design and development process. Seagate designs, owns and manufactures all of the core technologies needed to develop individual storage products, including heads, media, motors and printed circuit boards. Without reliance on third parties to supply components, the company has complete control of its entire development process and product roadmap—from component supply to technology improvements. As a result, product quality, margins and time to market are vastly improved—enabling Seagate to quickly meet the quality, reliability and supply demands of its customers.

Seagate Technology has been at the forefront of the storage industry for nearly 30 years. With corporate offices in Scotts Valley, California, Seagate employs more than 55,000 people around the world—all contributing to the development of the company’s next-generation storage products. From the first 5.25-inch hard drive for the PC to the development of perpendicular recording technology, the company has been pioneering new industry standards that have fueled advancements in the digital information age. Through technology leadership and innovation, Seagate continues to help individuals and businesses maximize the potential of their digital content in an ever-evolving, on-demand world.

LSI MegaRaid SAS 8708EM2

Features

- Eight internal SAS/SATA ports

- Two Mini-SAS SFF-8087 x4 connectors

- 3Gb/s throughput per port

- LSISAS1078 RAID on Chip (ROC)

  - 500MHz PowerPC

  - Hardware RAID 5 & 6 parity engines

- Low-profile MD2 form factor (6.6” x 2.536”)

- x8 PCI Express host interface

- 128MB DDRII Cache (667MHz)

- Optional battery backup unit (direct attach)

- Connect up to 32 SAS and/or SATA devices

- RAID levels 0, 1, 5 and 6

- RAID spans 10, 50 and 60

- Auto-resume on rebuild

- Auto-resume on reconstruction

- Online Capacity Expansion (OCE)

- Online RAID Level Migration (RLM)

- Comprehensive RAID management suite

- Global and dedicated hot spare with revertible hot spare support

  - Automatic rebuild

  - Enclosure affinity

  - Emergency SATA hot spare for SAS arrays

- Single controller multipathing (failover)

- I/O load balancing

- Support for extended RAID 1 volumes of up to 32 devices

Benefits

- Proven MegaRAID protection for SATA and SAS drives using RAID 0, 1, 5, 6, 10, 50 or 60

- LSISAS1078 ROC, x8 PCI Express host interface and 667MHz DDRII cache enhance performance of storage for low-cost server and nearline applications.

- True MD2 form factor perfect fit for 1U and 2U servers and space-limited storage environment

- Battery backup and advanced error detection and correction capabilities deliver outstanding RAID availability

- Common suite of management utilities for all MegaRAID SAS/SATA adapters

As you can see, the LSI MegaRaid controller handles RAID 0, 1, 5, and 6 and RAID spans of 10, 50, and 60, so it should cover just about all the RAID needs of any enthusiast and provide some measure of future-proofing if you’re committed to running hardware RAID on your system.

LSI MegaRaid (SAS 8708EM2) BIOS

The LSI MegaRaid has an onboard 500MHz processor and 128MB of dedicated DDR2 667MHz memory, so you can expect it to have a BIOS. What we didn’t expect was a feature-rich, entirely self-guided RAID setup wizard with full-featured array setup. There’s a manual for it on the included driver disk, but after playing with the BIOS for a few minutes we were able to set up multiple RAID configurations without cracking the manual open, so we’ll cover the BIOS in depth.


BIOS

After you have the MegaRaid card installed, boot the computer. When the MegaRaid screen appears it pauses for a few seconds; press “<CTRL>H” and you get the initial setup screen prompting you to click “Start”.

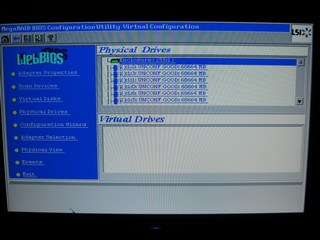

Once you’ve clicked Start you get the WebBIOS screen, which offers nine options. The one we’re most concerned with is the Configuration Wizard, but you can also browse the physical drives, the virtual drives, and some other housekeeping menus.

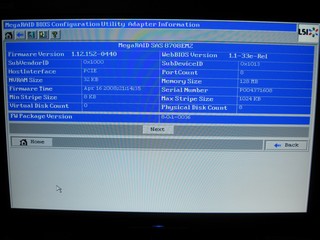

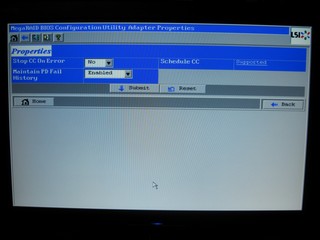

This is a shot of the adapter properties screen, which gives in-depth information on the adapter and BIOS revision.

Second of three BIOS Configuration screens available.

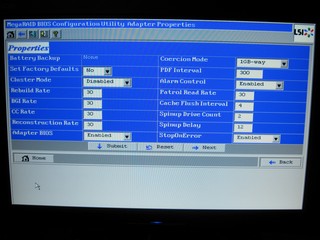

The last of three BIOS configuration screens.

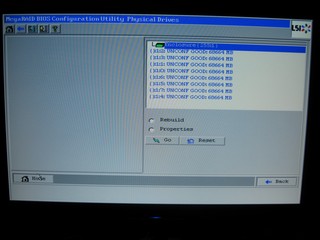

BIOS Configuration Physical drives screen.

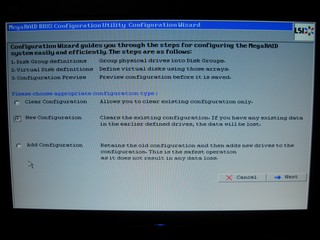

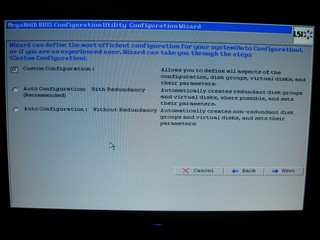

Then we get to the interesting part, the Configuration Wizard. From here you can select Clear Configuration to wipe the array; New Configuration, which clears the old configuration and lets you build a new one; or Add Configuration, if you’ve added drives or just want to run multiple RAID setups from one adapter.

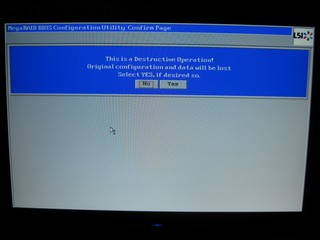

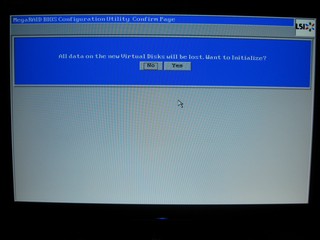

At this point we went into the New Configuration screen, which warns that this is a Destructive Operation (it’s going to unmount the array and destroy any data on it). Clicking YES here will unmount the array and prepare you to build a different one. Take our word for it: we were in this screen about 50 times setting up different arrays for testing. It’s an easy-to-use, easy-to-understand BIOS setup.

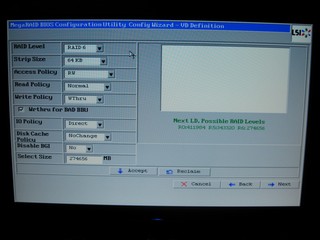

Then we went into the Custom Configuration Screen, but if all you want is a simple redundant or non-redundant array, you can use one of the two auto-configuration options and they work really well.

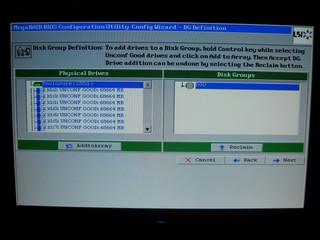

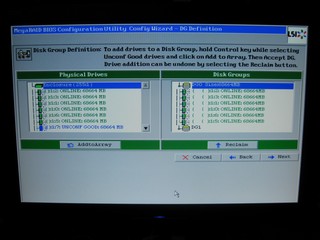

On this screen you can hold the <CTRL> key and click the drives you want to add to the RAID array you’re creating. Once you have the drives selected, click “Add to Array”.

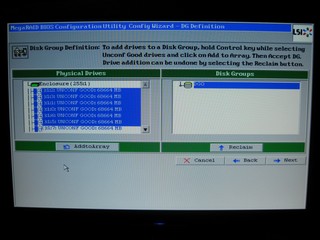

Here we show six of the eight available drives selected for the array we want to build.

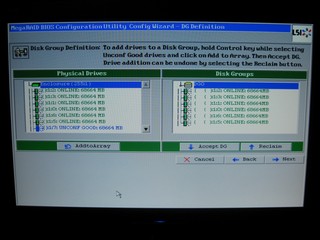

This is what you’ll see after you’ve clicked “Add To Array”. If you’ve defined the array you want and have no further operations to do, click “Accept DG”. DG stands for Drive Group.

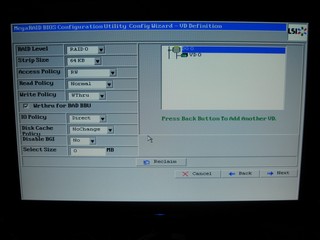

After clicking “Accept DG” you have one last chance to add drives to another Drive Group before clicking Next.

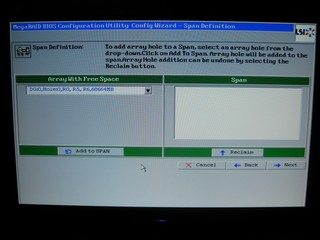

Then you get this screen in case you’ve defined more than one drive group. In our case we only defined one array with six drives, so all we had to do was click “Add To Span”.

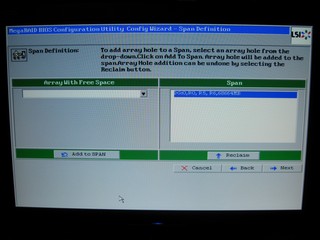

This is the screen you get after clicking “Add To Span” and at this point you click “Next”.

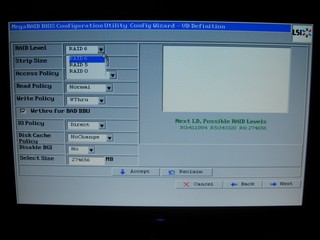

Then you get a screen that lets you choose from the types of arrays you can set up with the number of drives you’ve added to the group(s). In our case, with six drives in the group, we had the option for RAID 0, 1, 5, or 6.

We were going for a six-drive array in RAID0, which we’ll refer to as 6xRAID0 (we follow that convention later on). You can also define the stripe size, which we left at 64KB the entire time we were testing. You also set policies for the array. For example, if you want to store programs on the volume but, as the system administrator, you don’t want anyone deleting them, you can set the array to “Read Only”. In our case we left it as RW (read/write), which is normal for most arrays.

Once you click “Accept” you’ll see a screen confirming the existence of a “VD”, or Virtual Drive. Some of our older readers might equate VD with something else, but we assure you your drive hasn’t contracted any STDs.

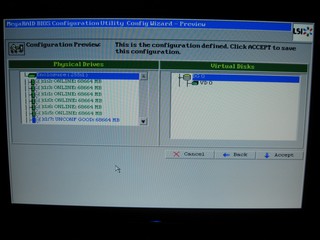

The next screen shows you the “VD” you’ve defined and the drives that are in it (or in them) and gives you one last chance to go back in case you change your mind.

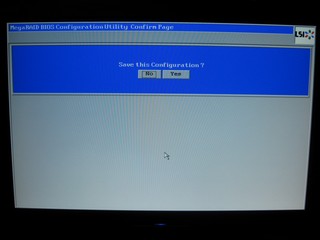

Click yes to save the configuration.

The “Are you sure you want to save the configuration?” screen is where it tells you it’s going to erase your data if you dare to click Yes again.

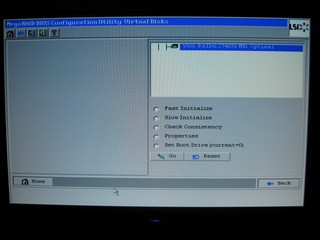

Then you finally get to see the Virtual Drive and its size, which depends on the type of array you define.

Then it takes you back to the WebBIOS screen and you can see the VD again.

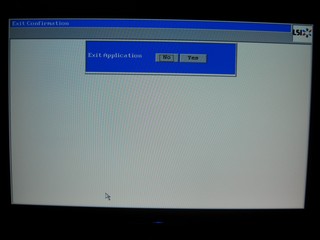

Click Yes to exit the application.

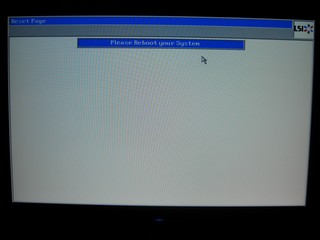

Reboot the system for the changes to take effect.

When you reboot, you’ll be greeted again by the LSI MegaRaid screen.

The system pauses for a few seconds at this screen, giving you a chance to enter WebBIOS again.

Finally, you see a screen that shows the array, the RAID level you’ve chosen, and the size of the array, along with one last chance to enter WebBIOS. After that you’re going into Windows, ready or not. Have your driver disk handy, because the LSI RAID adapter needs drivers to work. The included disk supports most operating systems, but not Vista; we had to go online and download the Vista drivers. When Windows asks for drivers, point it toward the folder where you saved them and they’ll load right up. Then you’re ready to head back to the Control Panel and format your newly created array.

The question on a lot of your minds right now is probably, “Can I boot to the array?” Yes. By clicking an option in one of the screens above, you can make it a bootable array. On paper that looks really complicated, so we walked through the entire process of creating an array; if you have any questions, you can refer back to this guide. In reality, it takes about two minutes to define and create the array the first time, and under a minute once you’re used to the screens. It’s that easy, and we can say it’s the best RAID controller BIOS we’ve ever had the pleasure of wandering through. We didn’t, however, find any overclocking features for you really crazy enthusiasts, and believe us, we looked. The best you’re going to get is RAID type selection and stripe size tweaking.

Seagate Savvio 15K (ST973451SS) 73 GB

Key Features and Benefits

- The world’s first 15K 2.5-inch drive

- Fastest and most reliable drive in the world

- Consumes 30 percent less power than any other 15K drive

- 70 percent smaller than 3.5” drives; enables more drives per system and more airflow to cool processors.

- More performance per U, per GB, and per watt than any other drive

Applications

- Email/collaboration

- Database

- Transaction processing

- Rack and tower servers

- Blade servers

- Storage arrays

- Space-constrained specialty applications

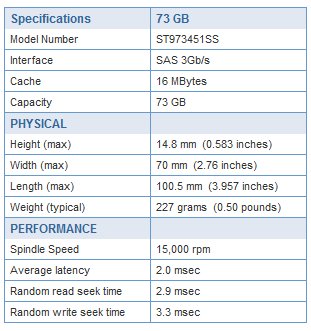

The Seagate Savvio is the world’s first 2.5-inch drive with a spindle speed of 15,000 RPM, and its blazing access time of between 2.9 and 3.3 ms reflects that. In our single-drive testing, with one drive connected to the onboard SAS connectors on the P6T, we saw sustained transfer rates between 180 and 235 MB/s. The Seagate Savvio is a small but lightning-fast drive. With advanced anti-vibration technology and a low-noise design, it’s ideal for the server environment and, as you’ll see a little later, with some Bjorn3D creativity, capable of supercharging a desktop.

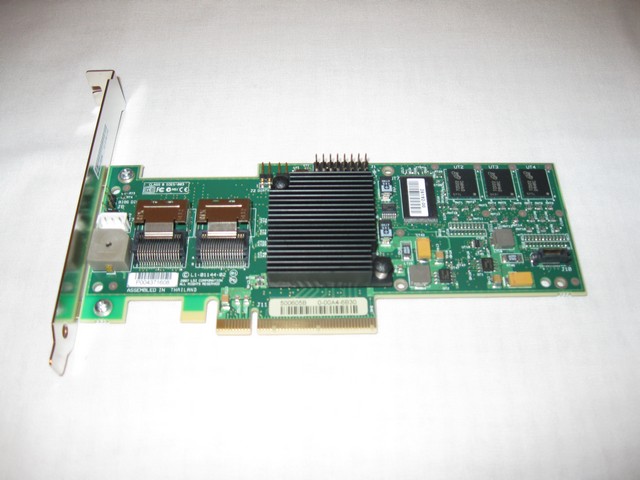

Pictures LSI MegaRaid (SAS 8708EM2)

The LSI MegaRaid adapter comes well packed in an attractive box that has plenty of information and specification to help inform the consumer about its purchase. Inside the box you’ll find a Quick Start Guide, Driver Disk (Not Pictured), two cables for normal SATA Drives, and the Controller in an Anti-Static bag. If you want to use SAS drives, like we did, you’ll need a different set of cables for the SAS Drives.

The card’s ports are two Mini-SAS SFF-8087 x4 connectors, so any Mini-SAS SFF-8087 to SAS cable will work. In a direct-connect configuration each connector can handle four drives, so we hooked up two Mini-SAS SFF-8087 to four SAS drive connector cables and were good to go. These cables aren’t included with the MegaRaid controller, so if you go SAS, make sure you acquire them separately.

Taking a spread-out look at the bundle that comes with the controller, you see the controller itself, the two Mini-SAS SFF-8087 to four SATA drive cables, a low-profile PCI bracket, the driver disk, and the RoHS compliance papers. That’s everything you need to get started with SATA drives, but as we mentioned, if you’re going SAS, you’ll need different cables.

The LSI MegaRaid controller is an 8x card, so you’ll need a PCI-E 8x or 16x slot available to install it in. The controllers aren’t much to look at; don’t expect mesmerizing LEDs or a UV-reactive PCB. With RAID controllers, it’s not how they look but what they can do for you.

Seagate Savvio 15K (ST973451SS)

The Seagate Savvio 15K drives were delivered without a lot of fanfare or fancy packaging, which is normal for OEM hard drives. This quantity of drives usually arrives in a specially designed shipping box with top and bottom slots for the drives to nestle in. A specifications sheet for each drive is included. OEM drives ship with no cables, screws or other bundle; they’re just bare, ready-to-install drives.

The Seagate Savvio series is an enterprise-class, 2.5-inch form factor drive. The drives have a normal SAS connector and, like most drives, the bottom is packed with electronics. It’s hard to believe that such a small drive can have a 15K spindle speed and lightning-fast access times. A flock of ten drives makes for quite an impressive sight, and it was a little daunting at first, but we quickly figured out what to do with them.

Naturally, we had to see if they were stackable and, of course, they stacked quite nicely. No need to worry. No drives were harmed in the making of this review. The real trick to the review was getting the drives hooked up in a coherent manner, since the drive enclosures we ordered were out of stock. It took some serious engineering and hours of debate to overcome this obstacle.

Yes, we spared no expense. That’s genuine dual-layer cardboard, two layers of the massively expensive material. Our supplier assures us that sometime in the near future we’ll have two 5.25-inch drive bay enclosures capable of housing four drives each, but given that the vendor (yes, you know who you are, and I’m linking you the review) promised the enclosures would be here in time for the review, we don’t put much stock in that. In the meantime, we installed 32 short bolts on the bottom of the drives to elevate them and put a 120mm fan blowing across them to keep things cool. Believe us, these aren’t drives you want operating flat on anything. At 15,000 RPM, if you leave them flat on a surface for testing, they’ll quickly heat up and you’ll have problems. The need for ventilation is clearly stated in the drive specifications on the Seagate website, so we recommend that you heed it.

TESTING & METHODOLOGY

There are some things to keep in mind about testing the Seagate Savvio drives. First, they’re not really designed for this purpose, which is what makes it fun. Second, they’re designed for a server environment, with features we can’t really test. For instance, they’re specifically designed for low noise and anti-vibration, and we can tell you they accomplish both quite nicely. They are entirely quiet in operation and, despite the less-than-perfect setup caused by the little vendor mishap, they are vibration-free. Lesser drives would most likely have simply walked off the double-walled cardboard we were forced to use after the failed delivery of proper housings.

Another important thing to remember is that we are using a LSI MegaRaid (SAS 8708EM2) hardware RAID controller with a built in 500 MHz processor and 128 MB dedicated DDR2 667 MHz RAM. It’s a very capable hardware RAID controller and it provides a bunch of different possible RAID configurations.

We chose to test RAID0 with 2 through 8 drives, every RAID0 configuration we possibly could given our setup. Then we tested RAID1 with two drives. We tested RAID1 with only two drives because that also shows the capabilities of the drives in single operation and, frankly, there are better options out there for RAID arrays. In RAID1, every drive doing the job it was intended for needs a second drive to mirror it. That gives RAID1 the worst storage efficiency of any available array type, tied only with RAID0/1, which has the same one-to-one drive ratio: if you have eight drives running RAID0/1, four of them are mirroring the four drives in RAID0, so you get the use of 50% of the drives.

By the same token, if one drive drops out of the RAID0 array, its mirror drive still holds the data and you can replace the failed drive from it. So it’s a fairly safe arrangement, but it doesn’t offer a good storage ratio.

We tested RAID5 with 4, 6, and 8 drives. In RAID5, one drive’s worth of capacity is reserved for parity (the parity blocks are distributed across all the drives). It’s fault tolerant, so one drive can fail and the array will keep operating while you replace the drive and rebuild. Each array has its strengths and weaknesses, and we’ll go into more detail on those on the chart pages. So you get redundancy like RAID1, with the advantage of spending only one drive’s worth of space on parity instead of the 50/50 ratio of RAID1 or RAID0/1. With four drives you get 3/4 of the drive space usable; with six drives you still use only one drive’s worth for parity, so you get 5/6 of the space; and with eight drives you get 7/8. That’s much better storage capacity than RAID0/1 while still offering a measure of data integrity.

We also tested RAID6, which works like RAID5 but uses two drives’ worth of capacity for parity, so it can survive and keep operating if you lose two drives. We tested it with 4, 6, and 8 drives as well. Having tested RAID0, RAID1, RAID5, and RAID6, we feel this is a fair sampling of RAID arrays and, while we’re sure there will be no lack of the “We’d have liked to have seen” crowd, this setup took better than three weeks of dedicated testing. We’d rather test thoroughly than spread the testing so thin we miss something. RAID6 is safer than RAID0 and RAID5 but comes with more overhead, because it uses two drives’ worth of capacity for parity no matter the array size. So at four drives you get 50% of the drive space for storage, with six drives you get 2/3, and with eight drives you get 3/4. Compared to an eight-drive RAID0/1 array, which yields 50% of the storage as usable, that’s 25 percentage points more (six drives’ worth of space instead of four) with a good measure of reliability: two drives can fail and the array can still be rebuilt. The chance of two drives failing simultaneously is very small, and even then your array will survive. The chance of three drives failing simultaneously is the same as the chance of you dropping your freshly opened soda in the middle of the array. Frightening thought. Keep those sodas and beverages of choice away from your array, because we’re fairly sure the warranty on the drives doesn’t cover soda or barley pop stains on the electronics.
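The capacity fractions quoted above (50% for RAID1 and RAID0/1, (n − 1)/n for RAID5, (n − 2)/n for RAID6) are easy to check with a short Python sketch. It assumes identical drives and ignores controller metadata and formatting overhead, so real volumes will come out slightly smaller; the function names are our own, not anything from LSI’s tools.

```python
from fractions import Fraction


def usable_fraction(level: str, n: int) -> Fraction:
    """Fraction of raw capacity left for data with n identical drives.

    Simplified model (our assumption, not the controller's exact math):
    RAID0 keeps everything, RAID1 is a two-drive mirror, RAID10 (RAID0/1)
    mirrors every drive, RAID5 spends one drive's worth of space on parity
    and RAID6 spends two drives' worth.
    """
    if level == "0":
        if n < 2:
            raise ValueError("RAID0 needs at least 2 drives")
        return Fraction(1)
    if level == "1":
        if n != 2:
            raise ValueError("this sketch models RAID1 as a two-drive mirror")
        return Fraction(1, 2)
    if level == "10":
        if n < 4 or n % 2:
            raise ValueError("RAID10 needs an even number of drives, at least 4")
        return Fraction(1, 2)
    if level == "5":
        if n < 3:
            raise ValueError("RAID5 needs at least 3 drives")
        return Fraction(n - 1, n)
    if level == "6":
        if n < 4:
            raise ValueError("RAID6 needs at least 4 drives")
        return Fraction(n - 2, n)
    raise ValueError(f"unsupported RAID level: {level}")


def usable_gb(level: str, n: int, drive_gb: float = 73.4) -> float:
    """Usable space in GB; 73.4 GB is the Savvio's rated capacity."""
    return float(usable_fraction(level, n) * n) * drive_gb


if __name__ == "__main__":
    for level in ("5", "6", "10"):
        for n in (4, 6, 8):
            frac = usable_fraction(level, n)
            print(f"RAID{level} x{n}: {frac} usable, ~{usable_gb(level, n):.1f} GB")
```

For eight drives this gives 3/4 for RAID6 against 1/2 for RAID10, which is the six-drives-versus-four comparison made in the text.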

We did a fresh load of Vista 64 on a boot drive. Because much of the testing on hard drives and RAID arrays is destructive, we left each array blank and tested it that way. A lot of utilities destroy the data on an array when they do write testing, so otherwise we’d have ended up loading Vista three times for each test, which just isn’t feasible. After we tested all the configurations we wanted, we set up what we suspect is the most popular enthusiast RAID array (RAID0), loaded Vista on an 8xRAID0 array, and did some real-life testing. We repeated each test three times and report the average of the three runs.

Test Rig

| Test Rig “Quadzilla” | |
|---|---|
| Case Type | Top Deck Testing Station |
| CPU | Intel Core i7 965 Extreme (3.74 GHz, 1.2975 Vcore) |
| Motherboard | Asus P6T Deluxe (SLI and CrossFire on Demand) |
| RAM | Kingston HyperX 1866 (9-9-9-27 1T) 6 GB kit |
| CPU Cooler | Thermalright Ultra 120 RT (Dual 120mm Fans) |
| Hard Drives | 10 × Seagate Savvio 15K (ST973451SS) 73GB; G.Skill Titan 256 GB SSD (FM-25S2S-256GBT1); 2 × WD VelociRaptor 300GB (in single and RAID0) |
| Optical | Sony DVD R/W |
| GPU | BFG GTX-260 MaxCore, Drivers 182.06 |
| Case Fans | 120mm fan cooling the MOSFET/CPU area |
| Docking Stations | None |
| Testing PSU | Thermaltake Toughpower 1200 Watt |
| Legacy | None |
| Mouse | Razer Lachesis |
| Keyboard | Razer Lycosa |
| Gaming Ear Buds | Razer Moray |
| Speakers | None |
| Any Attempt to Copy This System Configuration May Lead to Bankruptcy | |

Test Suite

| Benchmarks |
|---|
| ATTO |
| HDTach |
| Crystal DiskMark |
| HD Tune Pro |

RAID Levels Tested

RAID 1

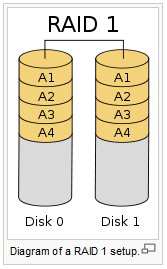

Diagram of a RAID 1 setup.

A RAID 1 creates an exact copy (or mirror) of a set of data on two or more disks. This is useful when read performance or reliability are more important than data storage capacity. Such an array can only be as big as the smallest member disk. A classic RAID 1 mirrored pair contains two disks (see diagram), which increases reliability geometrically over a single disk. Since each member contains a complete copy of the data, and can be addressed independently, ordinary wear-and-tear reliability is raised by the power of the number of self-contained copies.
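The “raised by the power of the number of copies” claim can be made concrete with a one-line probability model. This Python sketch assumes each disk fails independently with the same probability p over some period (for example, before a failed member can be replaced). Real failures are often correlated (same batch, same heat, same power event), so treat the result as a best case; the value of p in the example is purely hypothetical.

```python
def mirror_loss_probability(p_disk: float, copies: int) -> float:
    """Chance that every copy in a RAID 1 mirror fails within the same period.

    Assumes independent failures with identical per-disk probability p_disk,
    so data is lost only if all `copies` disks fail: p_disk ** copies.
    """
    if not 0.0 <= p_disk <= 1.0:
        raise ValueError("p_disk must be between 0 and 1")
    if copies < 1:
        raise ValueError("a mirror needs at least one copy")
    return p_disk ** copies


if __name__ == "__main__":
    p = 0.03  # hypothetical per-disk failure probability for the period
    for k in (1, 2, 3):
        print(f"{k} copies: {mirror_loss_probability(p, k):.6f}")
```

Each extra copy multiplies the loss probability by p, which is the geometric improvement the paragraph describes.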

RAID 1 performance

Since all the data exists in two or more copies, each with its own hardware, the read performance can go up roughly as a linear multiple of the number of copies. That is, a RAID 1 array of two drives can be reading in two different places at the same time, though not all implementations of RAID 1 do this. To maximize performance benefits of RAID 1, independent disk controllers are recommended, one for each disk. Some refer to this practice as splitting or duplexing. When reading, both disks can be accessed independently and requested sectors can be split evenly between the disks. For the usual mirror of two disks, this would, in theory, double the transfer rate when reading. The apparent access time of the array would be half that of a single drive. Unlike RAID 0, this would be for all access patterns, as all the data are present on all the disks. In reality, the need to move the drive heads to the next block (to skip unread blocks) can effectively mitigate speed advantages for sequential access. Read performance can be further improved by adding drives to the mirror. Many older IDE RAID 1 controllers read only from one disk in the pair, so their read performance is always that of a single disk. Some older RAID 1 implementations would also read both disks simultaneously and compare the data to detect errors. The error detection and correction on modern disks makes this less useful in environments requiring normal availability. When writing, the array performs like a single disk, as all mirrors must be written with the data. Note that these performance scenarios are in the best case with optimal access patterns.
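To illustrate why reads can scale with the number of copies while writes cannot, here is a toy Python model of a mirror. It uses simple round-robin to pick which copy serves each read; that scheduling choice is our assumption for illustration, and real controllers may instead pick the disk whose heads are closest or least busy.

```python
from itertools import cycle


class Mirror:
    """Toy RAID 1: writes update every copy, reads alternate between copies."""

    def __init__(self, copies: int = 2, blocks: int = 16):
        if copies < 2:
            raise ValueError("a mirror needs at least two copies")
        self.disks = [[b""] * blocks for _ in range(copies)]
        self.reads_per_disk = [0] * copies
        self._next_reader = cycle(range(copies))

    def write(self, block: int, data: bytes) -> None:
        # Every copy must be written, so a write costs one write per disk.
        for disk in self.disks:
            disk[block] = data

    def read(self, block: int) -> bytes:
        # Any single copy holds the full data, so reads can be spread out.
        i = next(self._next_reader)
        self.reads_per_disk[i] += 1
        return self.disks[i][block]


if __name__ == "__main__":
    m = Mirror()
    m.write(3, b"hello")
    print([m.read(3) for _ in range(4)], m.reads_per_disk)
```

With two copies, ten reads land five on each disk, while every write touches both disks.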

RAID 1 has many administrative advantages. For instance, in some environments, it is possible to “split the mirror”: declare one disk as inactive, do a backup of that disk, and then “rebuild” the mirror. This is useful in situations where the file system must be constantly available. This requires that the application supports recovery from the image of data on the disk at the point of the mirror split. This procedure is less critical in the presence of the “snapshot” feature of some file systems, in which some space is reserved for changes, presenting a static point-in-time view of the file system. Alternatively, a new disk can be substituted so that the inactive disk can be kept in much the same way as traditional backup. To keep redundancy during the backup process, some controllers support adding a third disk to an active pair. After a rebuild to the third disk completes, it is made inactive and backed up as described above.

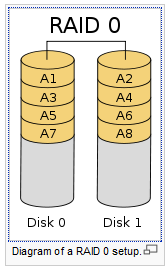

Diagram of a RAID 0 setup.

A RAID 0 (also known as a stripe set or striped volume) splits data evenly across two or more disks (striped) with no parity information for redundancy. It is important to note that RAID 0 was not one of the original RAID levels and provides no data redundancy. RAID 0 is normally used to increase performance, although it can also be used as a way to create a small number of large virtual disks out of a large number of small physical ones.

A RAID 0 can be created with disks of differing sizes, but the storage space added to the array by each disk is limited to the size of the smallest disk. For example, if a 120 GB disk is striped together with a 100 GB disk, the size of the array will be 200 GB.
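The smallest-disk rule is easy to express in code. This Python sketch (the function name is ours) returns both the usable RAID 0 size and the space left unused on the larger members, using the 120 GB + 100 GB example from the text.

```python
from typing import Sequence, Tuple


def raid0_capacity(sizes_gb: Sequence[float]) -> Tuple[float, float]:
    """Return (usable, unused) GB for a RAID 0 set of possibly unequal disks.

    Each member contributes only as much as the smallest disk; anything
    beyond that on the larger disks is left unused by the array.
    """
    if len(sizes_gb) < 2:
        raise ValueError("RAID 0 needs at least two disks")
    usable = min(sizes_gb) * len(sizes_gb)
    return usable, sum(sizes_gb) - usable


if __name__ == "__main__":
    print(raid0_capacity([120, 100]))  # (200, 20)
```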

RAID 0 failure rate

Although RAID 0 was not specified in the original RAID paper, an idealized implementation of RAID 0 would split I/O operations into equal-sized blocks and spread them evenly across two disks. RAID 0 implementations with more than two disks are also possible, though the group reliability decreases as the number of members grows.

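Reliability of a given RAID 0 set, measured as mean time to failure (MTTF), is equal to the average reliability of each disk divided by the number of disks $n$ in the set:

$$\mathrm{MTTF}_{\text{group}} \approx \frac{\mathrm{MTTF}_{\text{disk}}}{n}$$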

The reason is that the file system is distributed across all disks. When a drive fails, the file system cannot cope with such a large loss of data and coherency, since the data is “striped” across all drives (the data cannot be recovered without the missing disk). Data can be recovered using special tools, but it will be incomplete and most likely corrupt, and recovery of drive data is very costly and not guaranteed.
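The divide-by-n rule follows from a standard simplifying model: if each disk fails independently at a constant rate (an exponential lifetime with mean MTTF), a stripe set fails as soon as any member does, so the failure rates add up. The Python sketch below computes both the array MTTF and the chance of getting through a given period with no failures; the 1,000,000-hour MTTF is a hypothetical round number, not a figure from Seagate’s specifications.

```python
import math


def raid0_mttf(mttf_disk_hours: float, n: int) -> float:
    """Array MTTF when any one of n independent disks failing kills the set."""
    if n < 1:
        raise ValueError("need at least one disk")
    return mttf_disk_hours / n


def raid0_survival(mttf_disk_hours: float, n: int, hours: float) -> float:
    """Probability that none of the n disks fails within `hours`.

    Under the exponential model each disk survives with exp(-t / MTTF), and
    the array survives only if every disk does.
    """
    return math.exp(-hours / mttf_disk_hours) ** n


if __name__ == "__main__":
    MTTF = 1_000_000  # hypothetical per-disk MTTF in hours
    YEAR = 24 * 365
    for n in (1, 2, 4, 8):
        print(f"{n} disks: MTTF ~{raid0_mttf(MTTF, n):,.0f} h, "
              f"1-year survival {raid0_survival(MTTF, n, YEAR):.4f}")
```

Going from one drive to an 8xRAID0 array cuts the modelled MTTF by a factor of eight, which is the trade-off behind the speed gains tested in this review.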

RAID 0 performance

While the stripe (block) size can technically be as small as a byte, it is almost always a multiple of the hard disk sector size of 512 bytes. This lets each drive seek independently when randomly reading or writing data on the disk. How much the drives act independently depends on the access pattern from the file system level. For reads and writes that are larger than the stripe size, such as copying files or video playback, the disks will be seeking to the same position on each disk, so the seek time of the array will be the same as that of a single drive. For reads and writes that are smaller than the stripe size, such as database access, the drives will be able to seek independently. If the sectors accessed are spread evenly between the two drives, the apparent seek time of the array will be half that of a single drive (assuming the disks in the array have identical access time characteristics). The transfer speed of the array will be the transfer speed of all the disks added together, limited only by the speed of the RAID controller. Note that these performance scenarios are in the best case with optimal access patterns.
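The difference between small and large requests comes down to how volume addresses map onto member disks. Assuming the simplest round-robin RAID 0 layout (chunk k goes to disk k mod n) and the 64KB stripe size used in this review, this Python sketch maps a byte offset to a disk and shows which disks a request touches. The function names are ours, and real controllers also reserve space for their own metadata, so actual on-disk offsets will differ.

```python
from typing import Set, Tuple

STRIPE = 64 * 1024  # 64KB stripe size, as used for all tests in this review


def locate(offset: int, n_disks: int, stripe: int = STRIPE) -> Tuple[int, int]:
    """Map a byte offset on the volume to (disk index, byte offset on that disk)."""
    chunk, within = divmod(offset, stripe)
    disk = chunk % n_disks   # chunks are dealt out round-robin
    row = chunk // n_disks   # full passes across all disks so far
    return disk, row * stripe + within


def disks_touched(offset: int, length: int, n_disks: int,
                  stripe: int = STRIPE) -> Set[int]:
    """Disks that must participate in a request of `length` bytes at `offset`."""
    if length <= 0:
        raise ValueError("length must be positive")
    first = offset // stripe
    last = (offset + length - 1) // stripe
    return {chunk % n_disks for chunk in range(first, last + 1)}


if __name__ == "__main__":
    print(disks_touched(0, 4 * 1024, 8))     # small read: served by one disk
    print(disks_touched(0, 1024 * 1024, 8))  # 1 MB read: spans all eight disks
```

A 4 KB request stays on one member, leaving the other seven free to seek elsewhere, while a 1 MB sequential read pulls all eight drives along together.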

RAID 0 is useful for setups such as large read-only NFS servers where mounting many disks is time-consuming or impossible and redundancy is irrelevant.

RAID 0 is also used in some gaming systems where performance is desired and data integrity is not very important. However, real-world tests have shown that RAID 0 gains in games are minimal, although some desktop applications do benefit significantly.

RAID 5

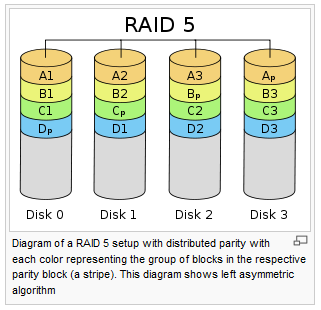

Diagram of a RAID 5 setup with distributed parity. Each color represents the group of blocks covered by the respective parity block (a stripe). This diagram shows the left-asymmetric algorithm.

RAID 5 uses block-level striping with parity data distributed across all member disks. It has become popular because of its low cost of redundancy, which you can see by comparing the number of drives needed to reach a given capacity. RAID 1 or RAID 0+1, which provide redundancy, give only s/2 of storage capacity, where s is the sum of the capacities of the n drives used. RAID 5 yields s × (n − 1)/n, the capacity of n − 1 drives. For example, four 1 TB drives make a 2 TB redundant array under RAID 1 or RAID 1+0, but the same four drives build a 3 TB array under RAID 5.

RAID 5 is commonly implemented in a disk controller. Some controllers have hardware support for parity calculations (hardware RAID cards), and others use the main system processor (motherboard-based RAID controllers). RAID 5 can also be done at the operating system level, for example with Windows Dynamic Disks or with mdadm in Linux. A complete RAID 5 configuration requires a minimum of three disks. Some implementations allow a degraded RAID 5 set to be created (a three-disk set with only two disks online). mdadm also supports a fully functional (non-degraded) two-disk RAID 5, which behaves like a slow RAID 1 but can later be expanded with additional disks.
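The following sketch makes the capacity comparison concrete for identical drives. It assumes two-way mirroring for RAID 1/1+0, as the s/2 figure implies. It also counts hot spares as holding no data. The function name and parameters are illustrative only.

```python
def usable_capacity(level: str, n_drives: int, drive_size: float, hot_spares: int = 0) -> float:
    """Usable capacity of an array of identical drives, in the same unit as drive_size."""
    members = n_drives - hot_spares          # hot spares hold no data until a rebuild
    if level in ("raid1", "raid10") and members % 2:
        raise ValueError("mirrored levels need an even number of drives")
    data_drives = {
        "raid0": members,
        "raid1": members / 2,                # two-way mirroring: s / 2
        "raid10": members / 2,
        "raid5": members - 1,                # one drive's worth of distributed parity
        "raid6": members - 2,                # two drives' worth of distributed parity
    }[level]
    if data_drives <= 0:
        raise ValueError("not enough drives for this RAID level")
    return data_drives * drive_size


# The four 1 TB drive example from the text.
for level in ("raid10", "raid5", "raid6"):
    print(level, usable_capacity(level, 4, 1.0), "TB")

# Eight 73.4 GB Savvio drives, as used in this review.
print("8 x 73.4 GB in RAID 5:", usable_capacity("raid5", 8, 73.4), "GB")
```

Note that `usable_capacity("raid5", 4, 1.0, hot_spares=1)` equals `usable_capacity("raid6", 4, 1.0)`. This matches the RAID 6 discussion's comparison with RAID 5 plus a hot spare.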

In the example above, a read request for block A1 would be serviced by disk 0. A simultaneous read request for block B1 would have to wait, but a read request for B2 could be serviced concurrently by disk 1.
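To show which disk serves which block, the sketch below generates a left-asymmetric RAID 5 layout. It assumes four disks, as in the common version of this diagram. Parity starts on the last disk and rotates one disk to the left on each stripe. Data blocks fill the remaining disks in order from disk 0.

```python
def left_asymmetric_layout(n_disks: int, n_stripes: int) -> list[list[str]]:
    """Return a grid [stripe][disk] of block labels for a RAID 5 left-asymmetric layout."""
    grid = []
    for s in range(n_stripes):
        letter = chr(ord("A") + s)
        parity_disk = (n_disks - 1) - (s % n_disks)   # parity rotates right-to-left
        row, data_no = [], 1
        for d in range(n_disks):
            if d == parity_disk:
                row.append(f"{letter}p")
            else:
                row.append(f"{letter}{data_no}")       # data fills from disk 0 upward
                data_no += 1
        grid.append(row)
    return grid


def disk_of(grid: list[list[str]], label: str) -> int:
    """Find which disk holds a given block label."""
    for row in grid:
        if label in row:
            return row.index(label)
    raise KeyError(label)


layout = left_asymmetric_layout(4, 4)
for row in layout:
    print("  ".join(row))
print("A1 on disk", disk_of(layout, "A1"),
      "| B1 on disk", disk_of(layout, "B1"),
      "| B2 on disk", disk_of(layout, "B2"))
```

A1 and B1 both sit on disk 0, so their reads queue behind each other. B2 sits on disk 1 and can be read at the same time as A1.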

RAID 5 performance

RAID 5 implementations suffer from poor performance when faced with a workload which includes many writes which are smaller than the capacity of a single stripe; this is because parity must be updated on each write, requiring read-modify-write sequences for both the data block and the parity block. More complex implementations may include a non-volatile write back cache to reduce the performance impact of incremental parity updates.
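The read-modify-write sequence can be modeled in a few lines. This is an in-memory illustration with made-up block contents, not a controller implementation. Updating one data block needs two reads (old data and old parity) and two writes (new data and new parity). Because parity is the XOR of the data blocks, any single lost block can be rebuilt from the others.

```python
def xor_blocks(*blocks: bytes) -> bytes:
    """Bytewise XOR of equal-length blocks."""
    out = bytearray(len(blocks[0]))
    for block in blocks:
        for i, b in enumerate(block):
            out[i] ^= b
    return bytes(out)


def small_write(stripe: list[bytes], parity: bytes, index: int, new_data: bytes):
    """Update one data block in a RAID 5 stripe with read-modify-write.
    Returns (new_stripe, new_parity)."""
    old_data = stripe[index]        # read 1: old data block
    old_parity = parity             # read 2: old parity block
    # Remove the old data's contribution from parity and add the new data's.
    new_parity = xor_blocks(old_parity, old_data, new_data)
    new_stripe = list(stripe)
    new_stripe[index] = new_data    # write 1: new data block
    return new_stripe, new_parity   # write 2: new parity block


stripe = [b"\x01\x02", b"\x10\x20", b"\xaa\x55"]
parity = xor_blocks(*stripe)
stripe, parity = small_write(stripe, parity, 1, b"\xff\x00")
print(parity == xor_blocks(*stripe))   # parity still matches the data
```

A full-stripe write avoids the two reads because the controller can compute parity from the new data alone. That is why small writes are the worst case.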

Random write performance is poor, especially at high concurrency levels common in large multi-user databases. The read-modify-write cycle requirement of RAID 5’s parity implementation penalizes random writes by as much as an order of magnitude compared to RAID 0.

Performance problems can be so severe that some database experts have formed a group called BAARF (the Battle Against Any Raid Five).

The read performance of RAID 5 is almost as good as RAID 0 for the same number of disks. Except for the parity blocks, the distribution of data over the drives follows the same pattern as RAID 0. The reason RAID 5 is slightly slower is that the disks must skip over the parity blocks.

In the event of a system failure while there are active writes, the parity of a stripe may become inconsistent with the data. If this is not detected and repaired before a disk or block fails, data loss may ensue as incorrect parity will be used to reconstruct the missing block in that stripe. This potential vulnerability is sometimes known as the write hole. Battery-backed cache and similar techniques are commonly used to reduce the window of opportunity for this to occur.
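The toy simulation below illustrates the write hole. A crash lands the new data block on disk but loses the matching parity update. A later rebuild of a different disk from that stale parity then returns the wrong contents. The block values are arbitrary.

```python
from functools import reduce


def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def parity_of(blocks: list[bytes]) -> bytes:
    return reduce(xor, blocks)


def rebuild(blocks: list[bytes], parity: bytes, lost: int) -> bytes:
    """Reconstruct block `lost` from the surviving blocks plus parity."""
    survivors = [b for i, b in enumerate(blocks) if i != lost]
    return parity_of(survivors + [parity])


data = [b"AAAA", b"BBBB", b"CCCC"]
parity = parity_of(data)

# Simulated crash: the new data reaches disk 0, but the parity update never does.
data[0] = b"ZZZZ"

# Later, disk 1 fails and is rebuilt from the stale parity.
recovered = rebuild(data, parity, lost=1)
print(recovered == b"BBBB")   # prints False: the rebuilt block is silently wrong
```

A battery-backed cache narrows this window by keeping the pending parity update until it can be written.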

RAID 6

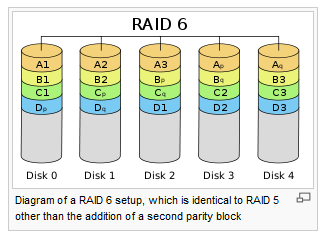

Diagram of a RAID 6 setup, which is identical to RAID 5 except for the addition of a second parity block.

Redundancy and Data Loss Recovery Capability

RAID 6 extends RAID 5 by adding an additional parity block; thus it uses block-level striping with two parity blocks distributed across all member disks. It was not one of the original RAID levels.

Performance

RAID 6 has no performance penalty for read operations, but it does have a penalty for write operations because of the overhead of parity calculations. Performance varies greatly depending on how the manufacturer implements RAID 6 in its storage architecture: in software, in firmware, or in firmware with specialized ASICs for intensive parity calculations. It can be as fast as RAID 5 with one fewer drive (that is, with the same number of data drives).
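A common storage-sizing rule of thumb puts numbers on this write penalty. It counts how many back-end disk I/Os one small random host write costs: 1 for RAID 0, 2 for mirroring, 4 for RAID 5 (the read-modify-write of data and parity), and 6 for RAID 6 (data plus two parity blocks). The sketch below applies that rule. It assumes a hypothetical 200 IOPS per 15K drive. It also ignores write-back caching and full-stripe writes, such as the 128 MB of onboard DDR2 on the MegaRAID card described in the conclusion. Read it as a rough worst-case estimate, not a measurement.

```python
# Back-end disk I/Os per small random host write (a widely used rule of thumb;
# ignores write-back caching and full-stripe writes).
WRITE_PENALTY = {"raid0": 1, "raid1": 2, "raid10": 2, "raid5": 4, "raid6": 6}


def random_write_iops(level: str, n_disks: int, iops_per_disk: float) -> float:
    """Rough ceiling on random-write IOPS for an array of identical disks."""
    return n_disks * iops_per_disk / WRITE_PENALTY[level]


def random_read_iops(n_disks: int, iops_per_disk: float) -> float:
    """Reads need no parity work, so every spindle can serve them."""
    return n_disks * iops_per_disk


PER_DISK = 200.0  # hypothetical small-block IOPS for one 15K RPM drive
print("8 drives, reads:", random_read_iops(8, PER_DISK))
for level in ("raid0", "raid5", "raid6"):
    print(f"8 drives, {level} writes:", round(random_write_iops(level, 8, PER_DISK), 1))
```

Under this rule an eight-drive RAID 6 array keeps its full read rate but drops to one sixth of it for small random writes.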

Efficiency (Potential Waste of Storage)

With a small number of drives, RAID 6 is no less space-efficient than RAID 5 with a hot spare drive. As arrays grow and gain more drives, the capacity lost to parity matters less, while the probability of data loss grows. RAID 6 protects against data loss during an array rebuild in three cases:

- a second drive is lost
- a bad block is read
- a human operator accidentally removes and replaces the wrong disk while trying to replace a failed drive

The usable capacity of a RAID 6 array of n identical drives is the capacity of n − 2 drives.

Implementation

According to SNIA (Storage Networking Industry Association), the definition of RAID 6 is: “Any form of RAID that can continue to execute read and write requests to all of a RAID array’s virtual disks in the presence of any two concurrent disk failures. Several methods, including dual check data computations (parity and Reed Solomon), orthogonal dual parity check data and diagonal parity have been used to implement RAID Level 6.”
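One of the methods SNIA lists, parity plus Reed-Solomon check data, can be sketched in Python. The sketch follows the P+Q scheme used by the Linux md driver. P is plain XOR parity. Q weights each data block by a power of the generator g = 2 in GF(2^8), using the polynomial 0x11D. With both P and Q, any two lost data blocks can be solved for. This review does not say which algorithm the LSI controller uses, so this is an illustration of the technique only.

```python
# GF(2^8) arithmetic with the primitive polynomial x^8 + x^4 + x^3 + x^2 + 1 (0x11D), g = 2.
EXP = [0] * 512
LOG = [0] * 256
value = 1
for power in range(255):
    EXP[power] = value
    LOG[value] = power
    value <<= 1
    if value & 0x100:
        value ^= 0x11D
for power in range(255, 512):
    EXP[power] = EXP[power - 255]   # duplicate so EXP[LOG[a] + LOG[b]] never overflows


def gf_mul(a: int, b: int) -> int:
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def gf_div(a: int, b: int) -> int:
    if b == 0:
        raise ZeroDivisionError("division by zero in GF(2^8)")
    if a == 0:
        return 0
    return EXP[(LOG[a] - LOG[b]) % 255]


def compute_pq(blocks: list[bytes]) -> tuple[bytes, bytes]:
    """P is plain XOR parity; Q weights block i by g**i before XOR-ing."""
    size = len(blocks[0])
    p, q = bytearray(size), bytearray(size)
    for i, block in enumerate(blocks):
        coeff = EXP[i]                      # g**i
        for k, byte in enumerate(block):
            p[k] ^= byte
            q[k] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)


def recover_two(blocks: list, p: bytes, q: bytes) -> list[bytes]:
    """Rebuild exactly two missing data blocks (marked None) from P and Q."""
    x, y = [i for i, b in enumerate(blocks) if b is None]
    zero = bytes(len(p))
    p_part, q_part = compute_pq([zero if b is None else b for b in blocks])
    gx, gy = EXP[x], EXP[y]
    dx, dy = bytearray(len(p)), bytearray(len(p))
    for k in range(len(p)):
        pxy = p[k] ^ p_part[k]              # = Dx xor Dy
        qxy = q[k] ^ q_part[k]              # = g^x*Dx xor g^y*Dy
        dx[k] = gf_div(qxy ^ gf_mul(gy, pxy), gx ^ gy)
        dy[k] = pxy ^ dx[k]
    rebuilt = list(blocks)
    rebuilt[x], rebuilt[y] = bytes(dx), bytes(dy)
    return rebuilt


data = [b"RAID", b"six!", b"P+Q ", b"demo"]
p, q = compute_pq(data)
damaged = [data[0], None, data[2], None]     # two drives lost at once
print(recover_two(damaged, p, q) == data)
```

Substituting Dy = Pxy xor Dx into the Q equation leaves a single unknown. That is why the code divides by (g^x xor g^y), which is nonzero whenever x and y differ.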

Results RAID0

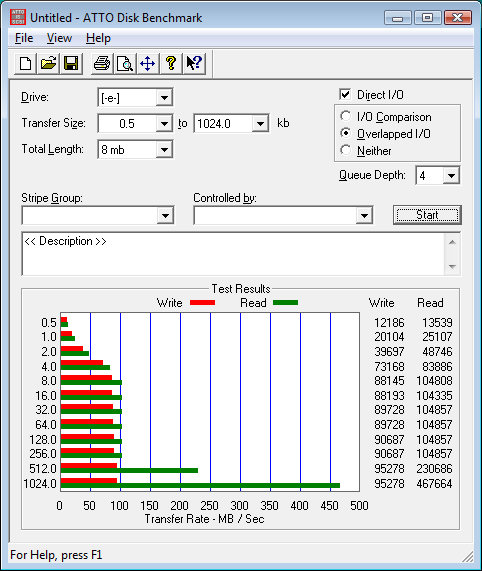

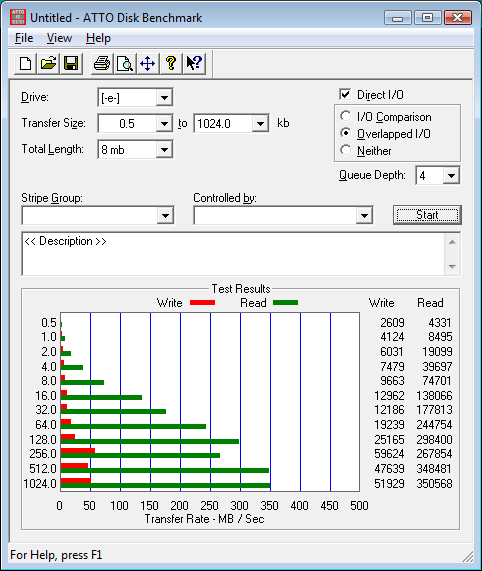

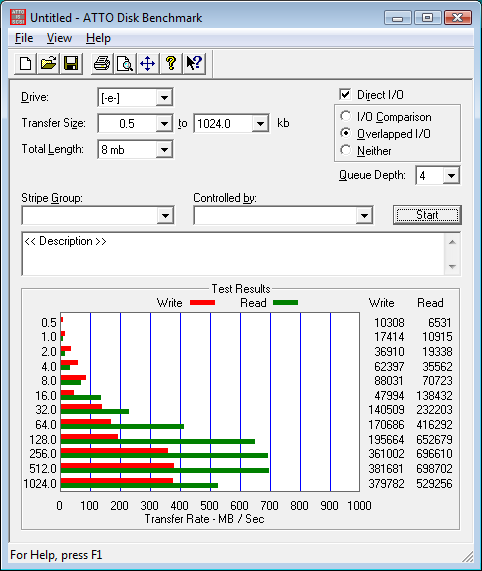

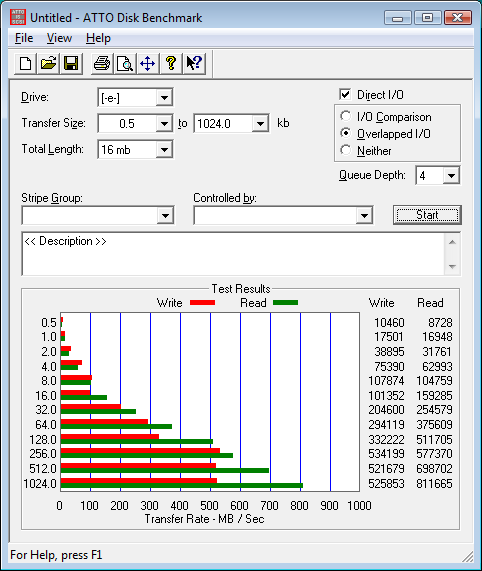

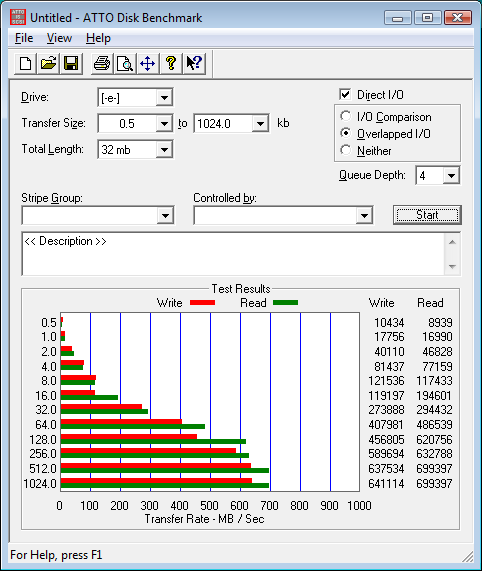

ATTO

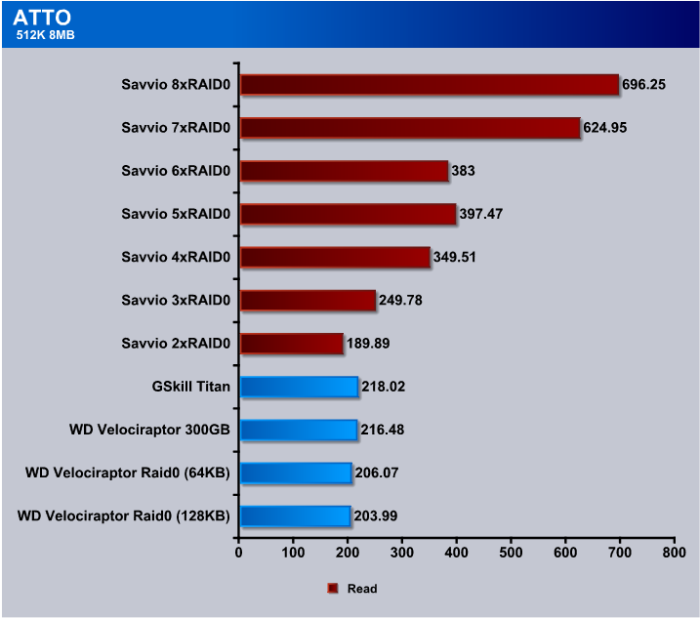

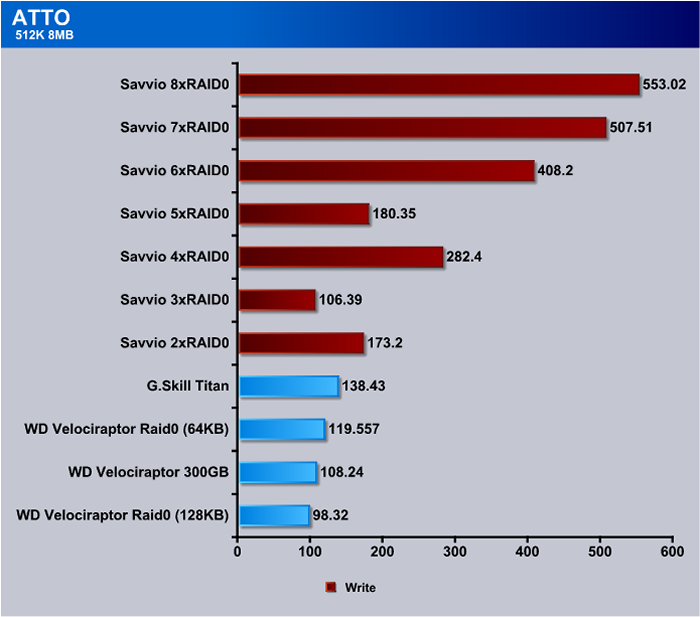

We threw in a couple of drives we tested previously for comparison: the Western Digital VelociRaptors we reviewed recently and the G.Skill Titan SSD, because we felt those drives were best suited for comparison. The G.Skill was run on an onboard controller. Since the MegaRAID controller can handle the VelociRaptors, we used it to test the VelociRaptor RAID array and used the onboard controller to test the single drive. At 2xRAID0, performance was a little behind the other drives, but as we added drives to the array the speeds took off quickly. Top speed in this test was 696 MB/s, which far exceeds the 300 MB/s theoretical limit of a single SATA 2 drive.

We noticed a few spots in RAID0, like 3xRAID0 and 5xRAID0, where performance lagged a little. With the exception of 3xRAID0, though, write performance in ATTO was better than with the high-end drives we had for comparison. Top-end speed at 8xRAID0 was 553 MB/s, which is pretty impressive by any standard.

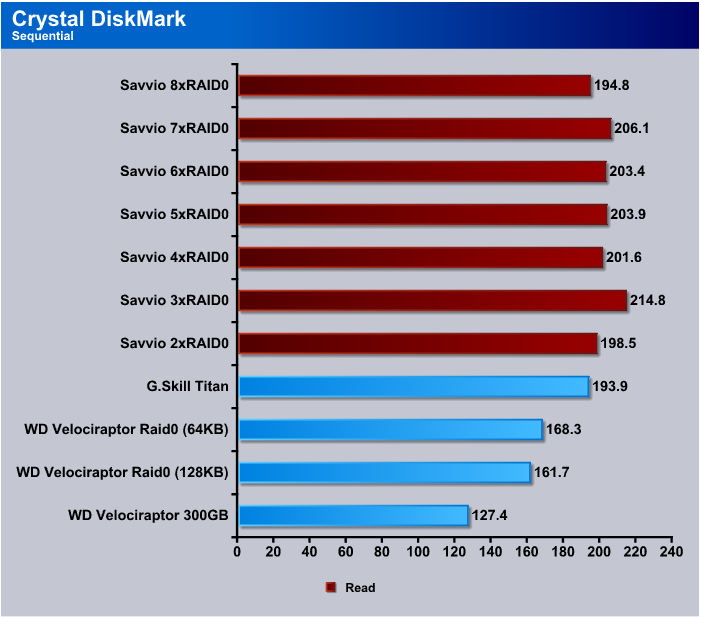

Crystal DiskMark

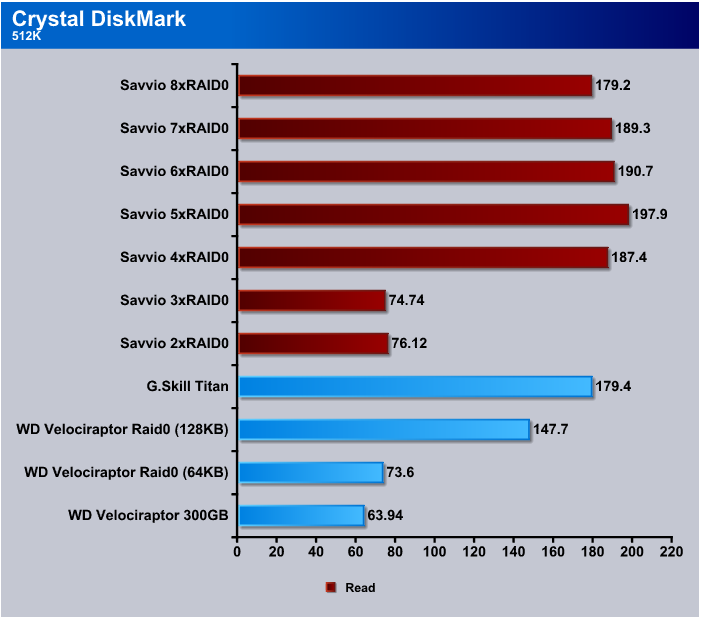

Generally, what we’ve seen with RAID arrays in the past is that the smaller the transfer, the less efficient they get. In the Crystal DiskMark 512K test, the 2 and 3xRAID0 arrays couldn’t keep up with the other drives we tested. Even the fastest array was only about 20 MB/s ahead of the comparison drives.

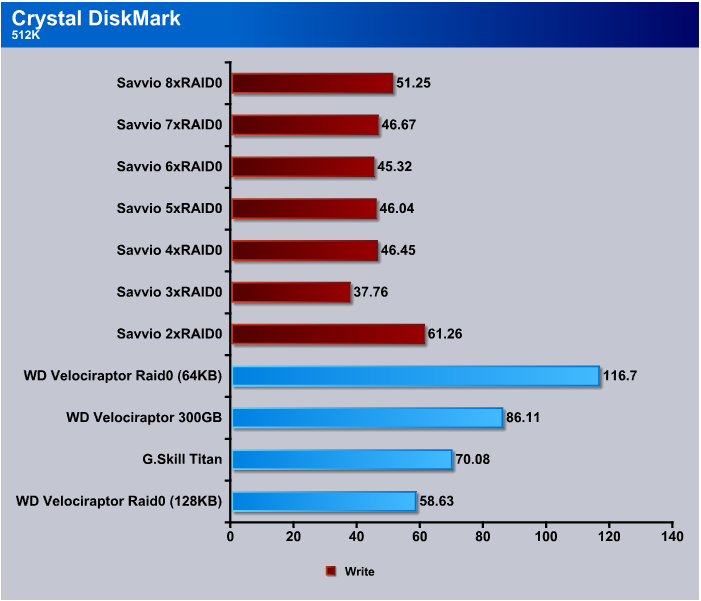

Here’s where we start to see the hit you take from using RAID arrays in write-intensive environments. Still, for enthusiast purposes, reads matter more than writes. Usually we’re writing from an optical or flash drive, and those media are much slower than the speeds we’re seeing here. Most programs and games are written once and read many times; for gamers and home users, only the data files change. In an enterprise environment like a server farm this might be a disadvantage, but we wouldn’t expect to see RAID0 there anyway.

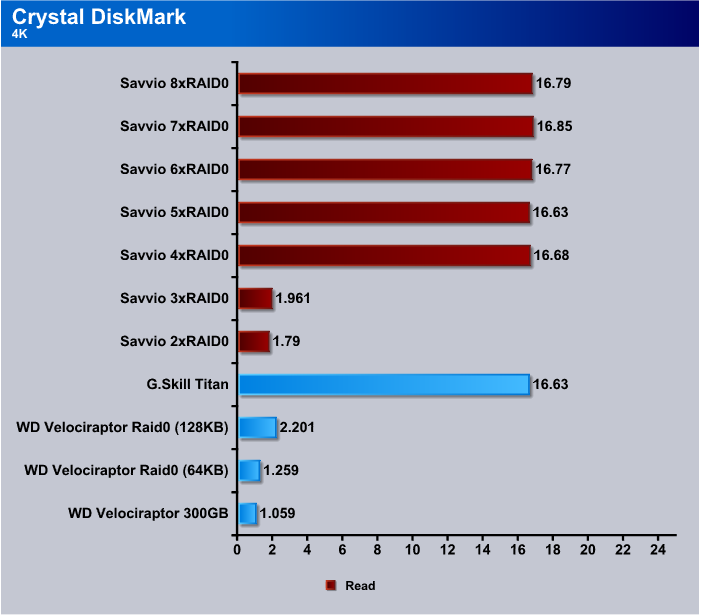

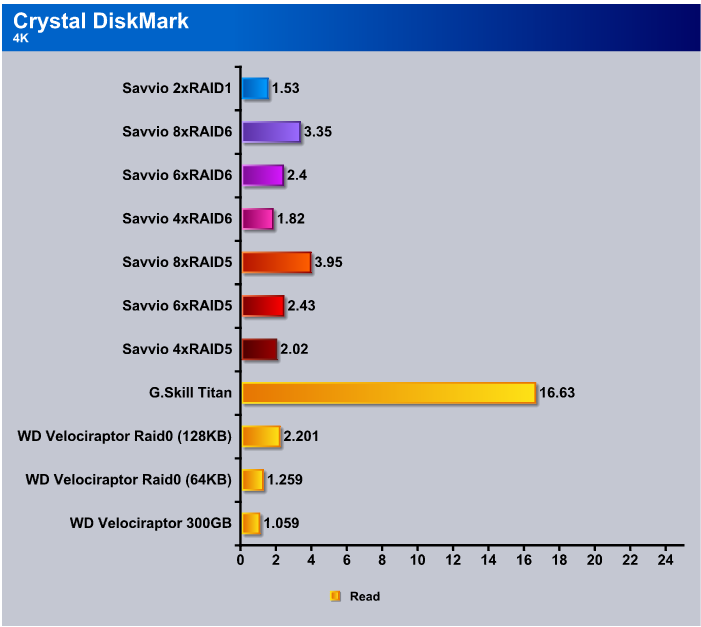

Again, we see that small transfers, in this case 4K, are hard on any type of drive. Performance at 2 and 3xRAID0 was acceptable, and with larger arrays we got great performance considering the transfer size.

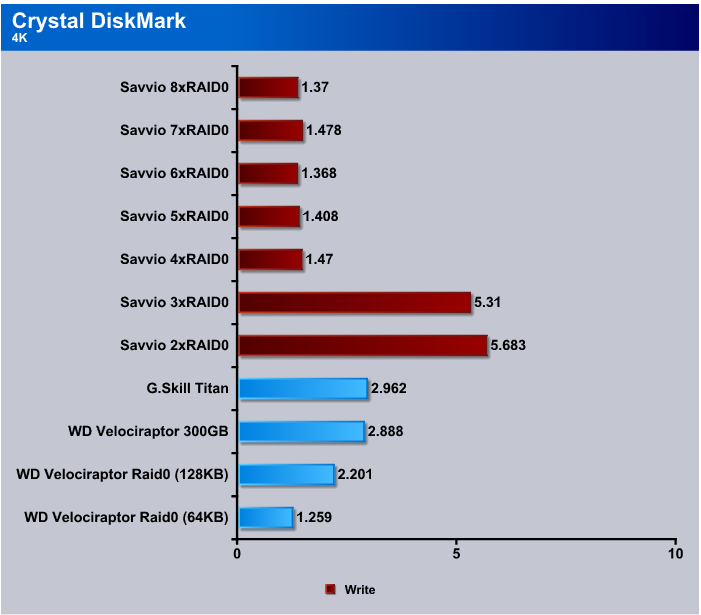

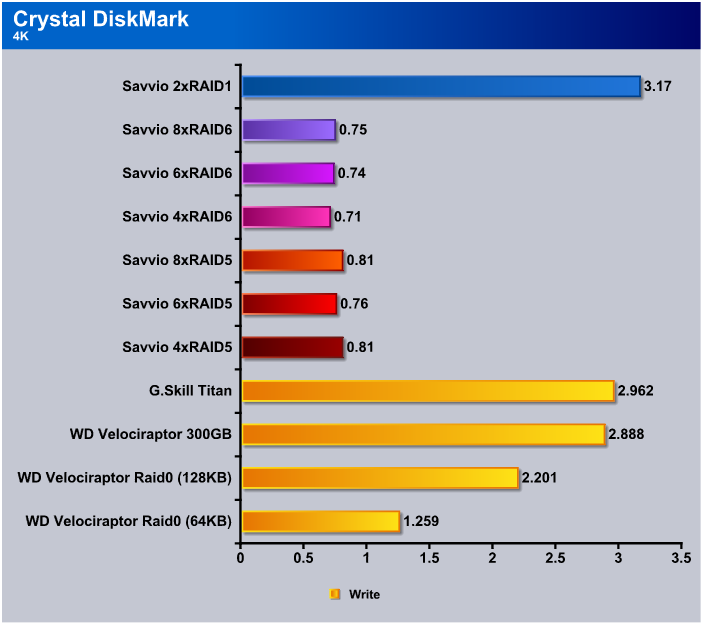

The 4K write test in Crystal DiskMark is about as brutal a test as you’ll see. At first it seems rather odd that 2 and 3xRAID0 perform better than the larger arrays, but it makes sense when you think about it a little. As the number of drives increases, the small 4K block has to be split more times and takes longer to write. Write speeds finally level out at about 1.4 MB/s in this brutal test.

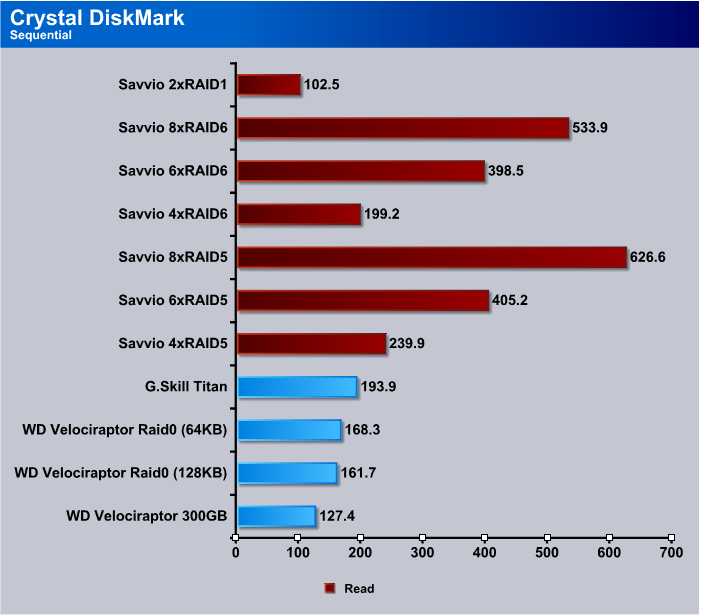

We kept Crystal DiskMark at five iterations with a 100 MB test size, so each test made five passes of 100 MB each. Speeds in this test stayed in the 200 MB/s range across the board with the RAID arrays we tested.

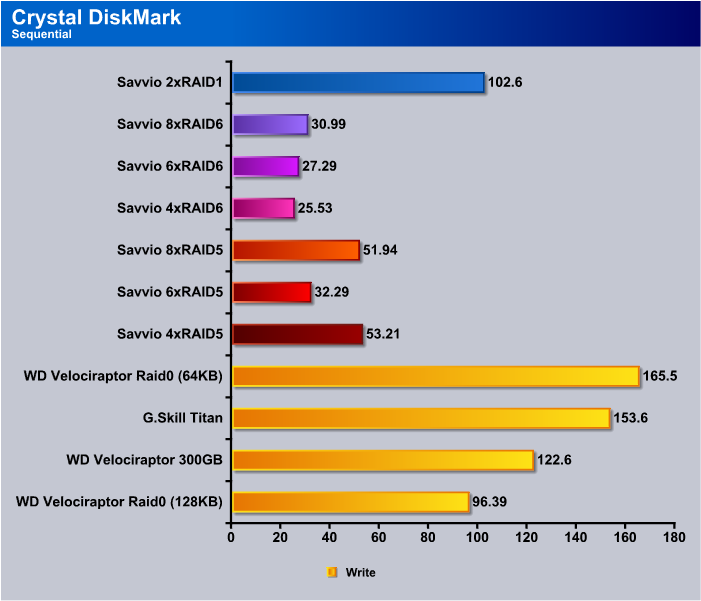

In the sequential write test, 2xRAID0 did best with the Savvio 15K SAS drives. Beyond that, we begin to see the write performance hit you take from using RAID.

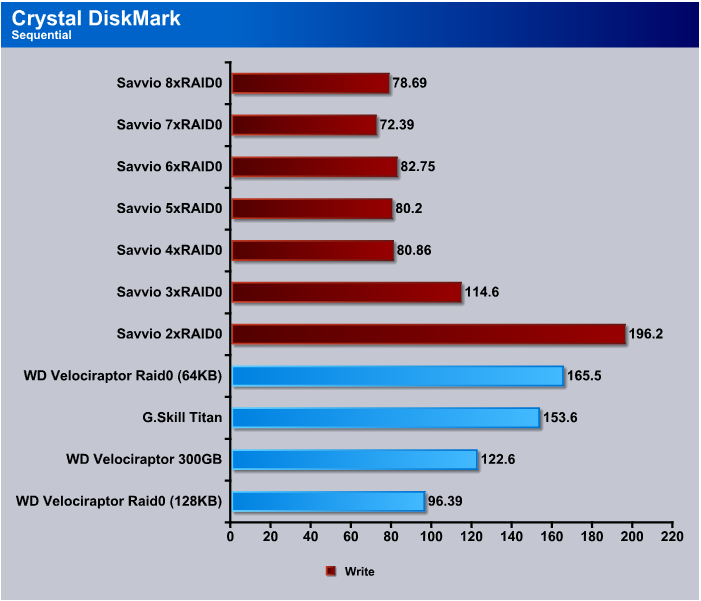

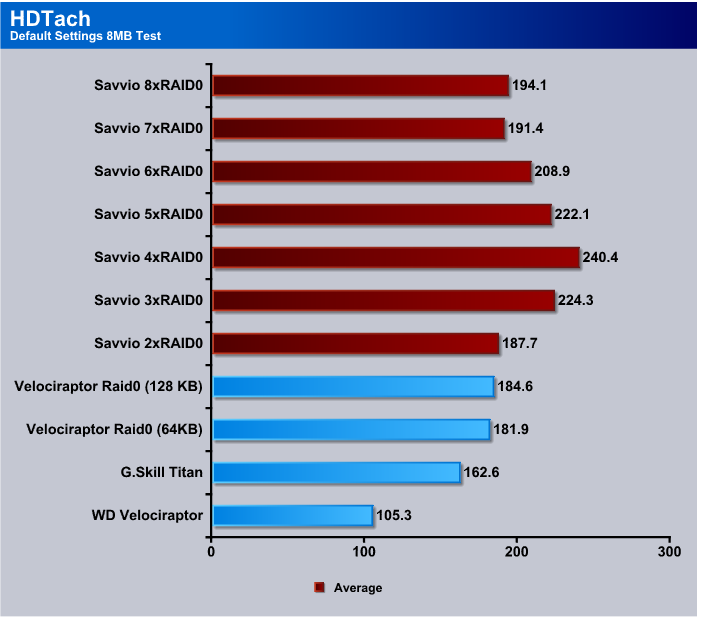

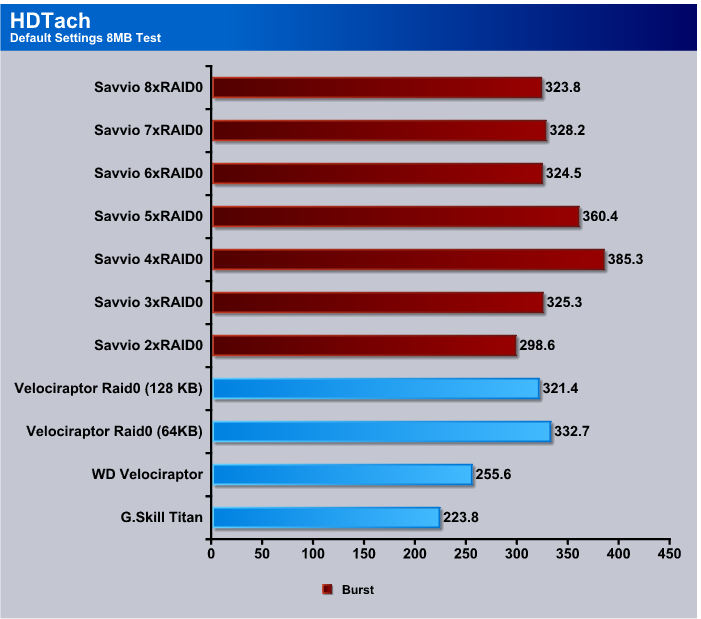

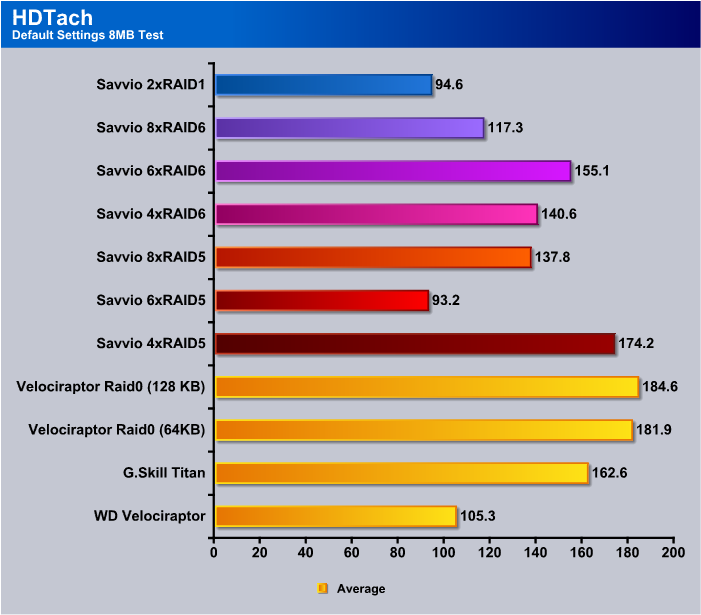

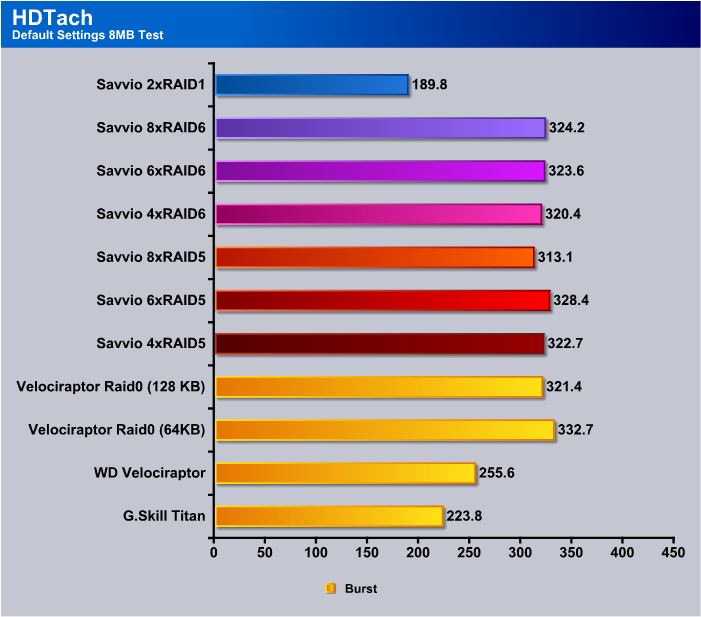

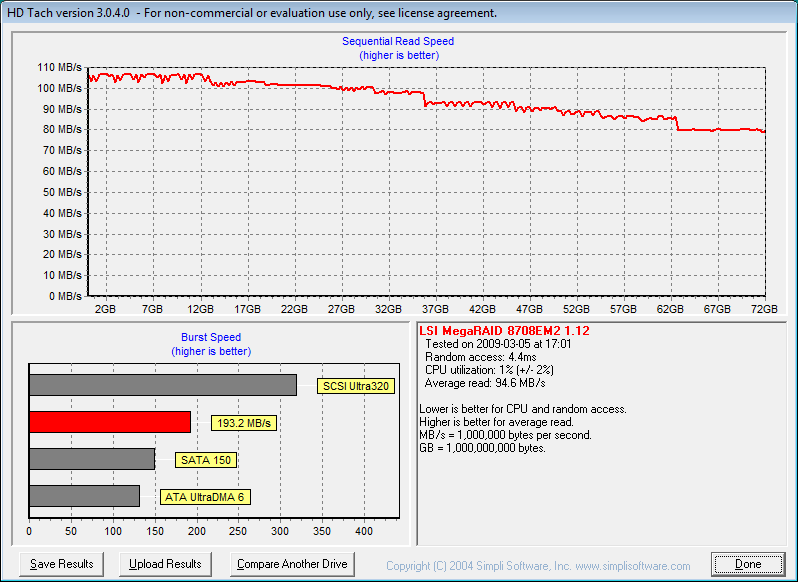

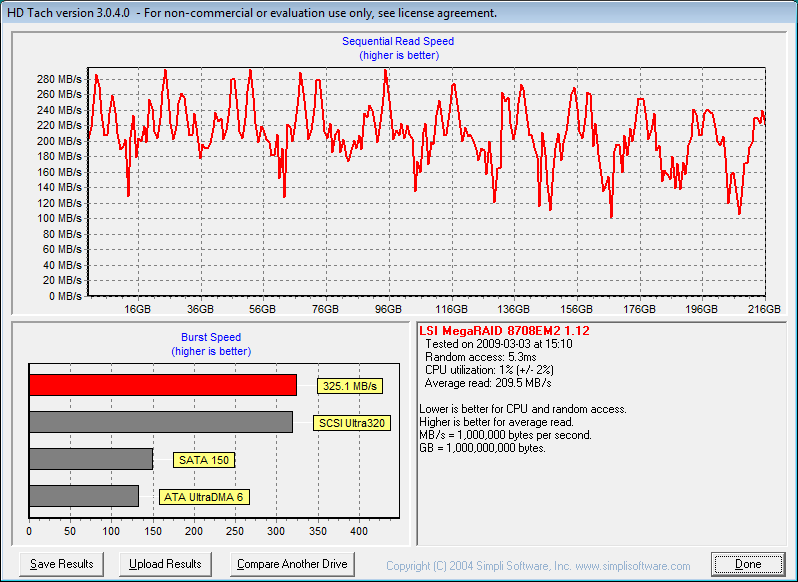

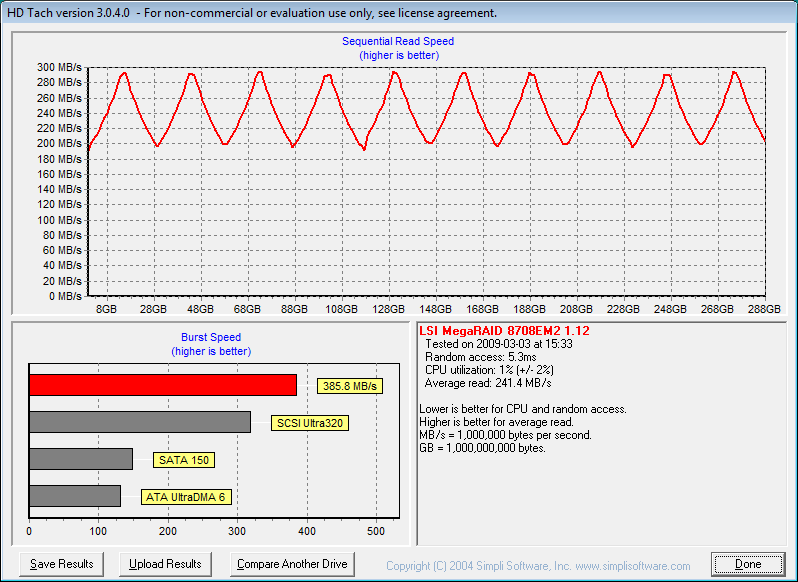

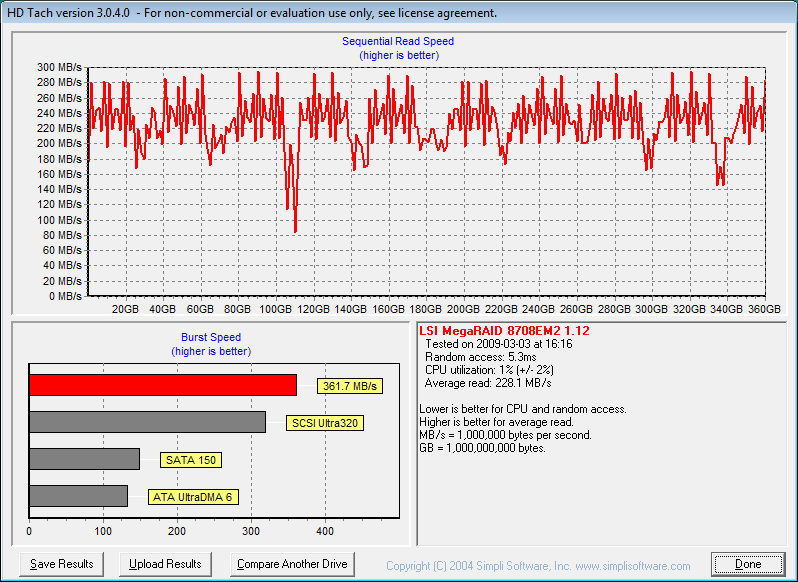

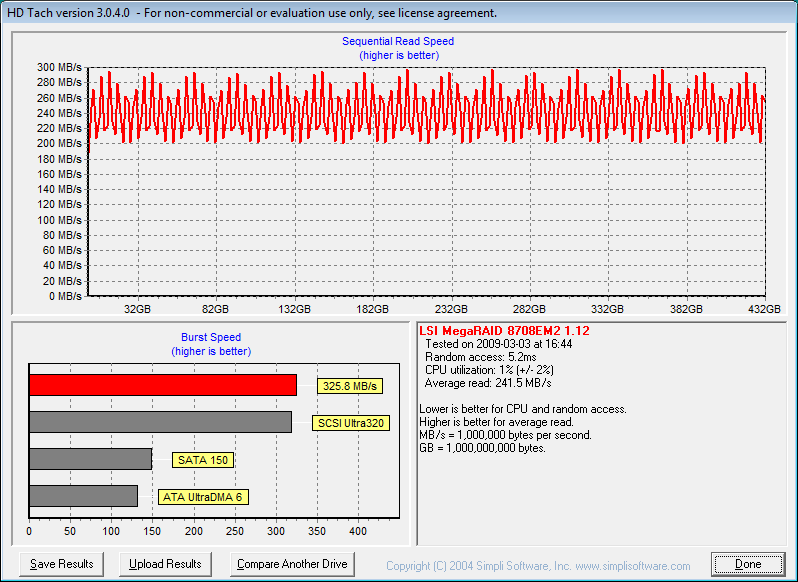

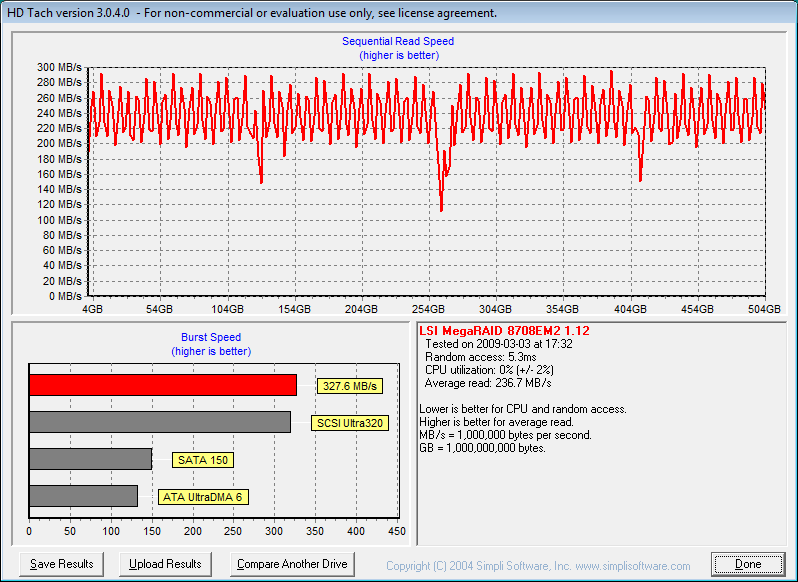

HDTach

The HDTach 8 MB test and the 32 MB test both churned out numbers that were very similar so we opted to just show the 8 MB test rather than show two sets of almost identical data. The LSI MegaRAID and Seagate Savvio combination outperformed everything we compared them to in this test but not by as much as you’d think from stacking up to 8 high end drives in RAID0. We are, however, impressed by the consistency of the speed across a battery of tests.

Burst speeds of the RAID0 arrays we tested ranged between 298 and 385 MB/s, peaking at 4 and 5xRAID0. Still, those are some pretty decent speeds for a desktop PC.

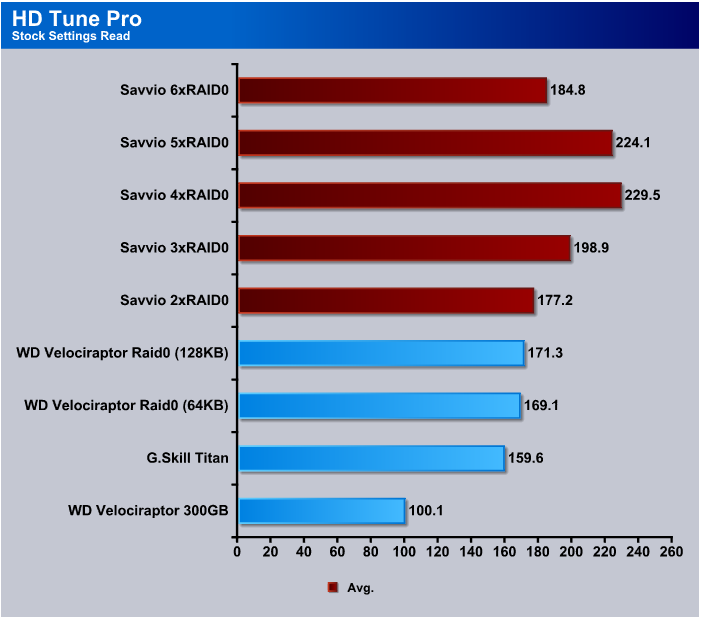

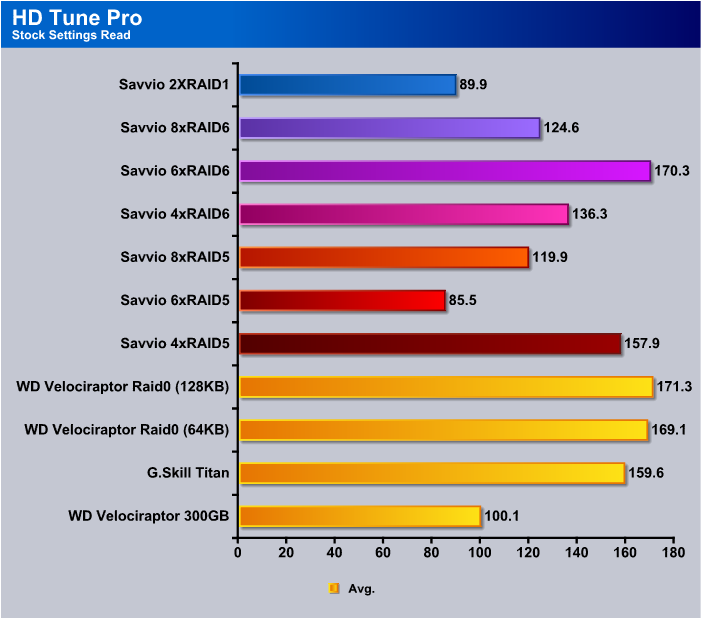

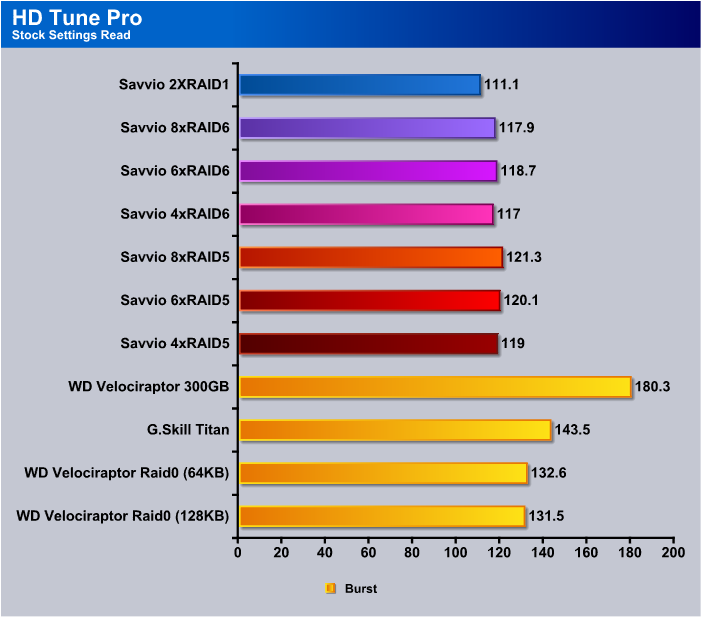

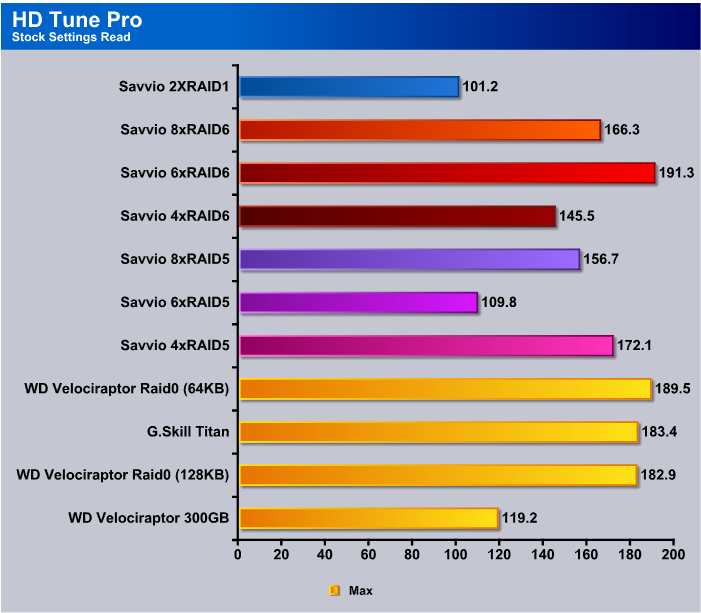

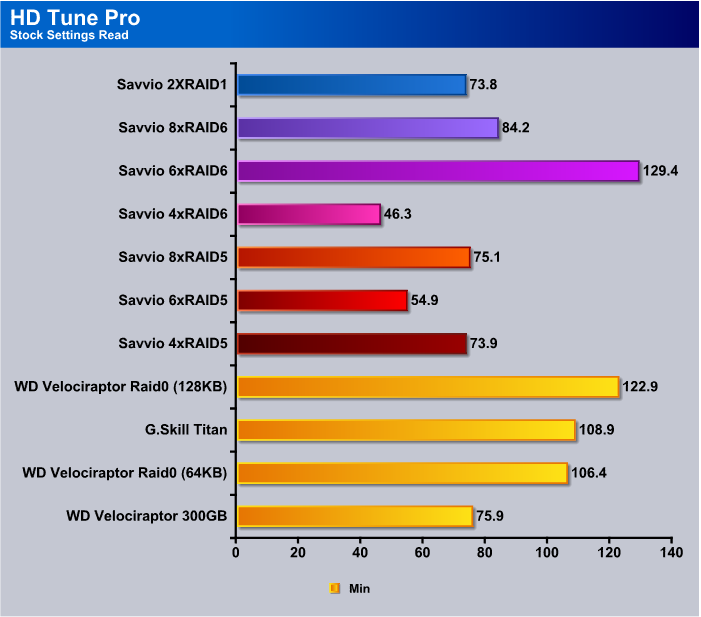

HD Tune Pro

We left all the settings in HD Tune Pro at stock and saw speeds ranging from 177 MB/s to 229 MB/s. We had to stop at 6xRAID0, though, because HD Tune Pro started reporting some really strange numbers, topping out above 1 GB/s and randomly dropping to 0 MB/s. We probably exceeded its ability to read the arrays we created, and it was trying to commit ritual suicide.

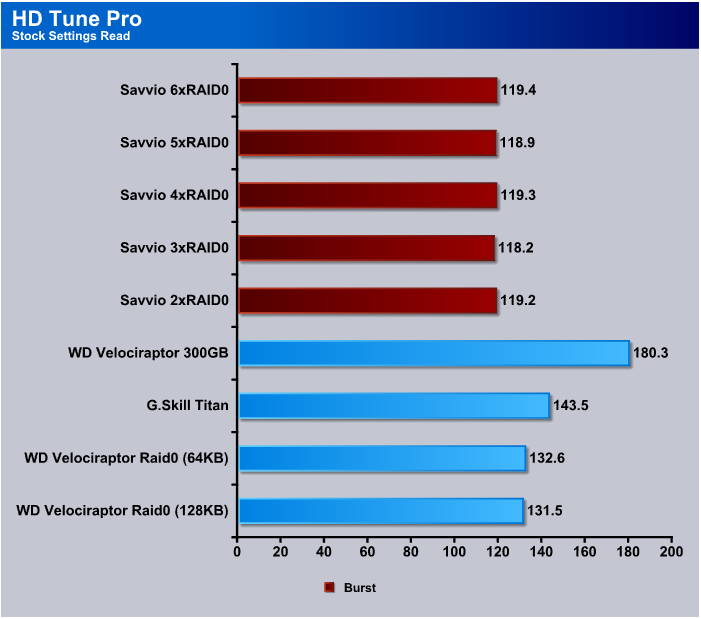

We’re really not sure what was going on with the burst test in HD Tune Pro, because every array we tested came in within the 118 to 199 MB/s range. With that small a variance it’s a hard call, so we’ll leave it up to the reader to consider or dismiss.

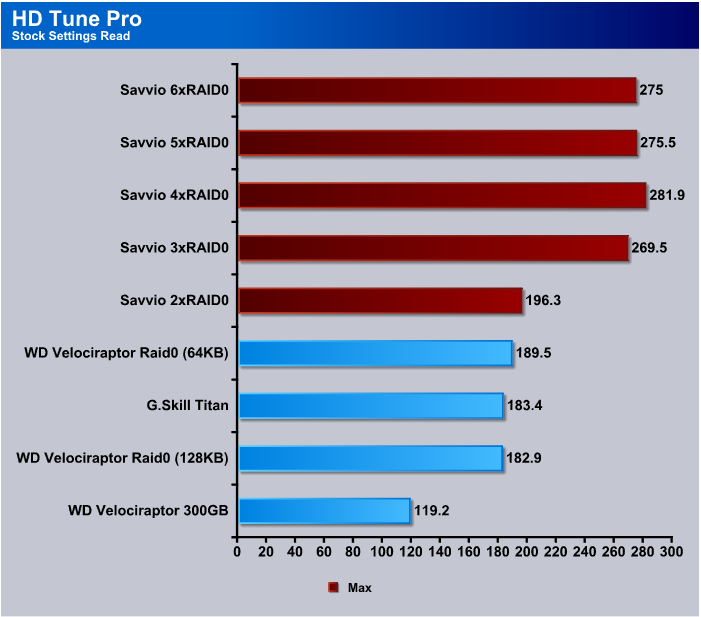

Maximum speed in HD Tune Pro at 2xRAID0 matched the VelociRaptors in the same configuration. Beyond 2xRAID0 we saw speeds of up to 281.9 MB/s, so we still seem to have trouble breaking the 300 MB/s level even with high-end RAID. Compared to single drives on onboard controllers, these speeds are astronomical.

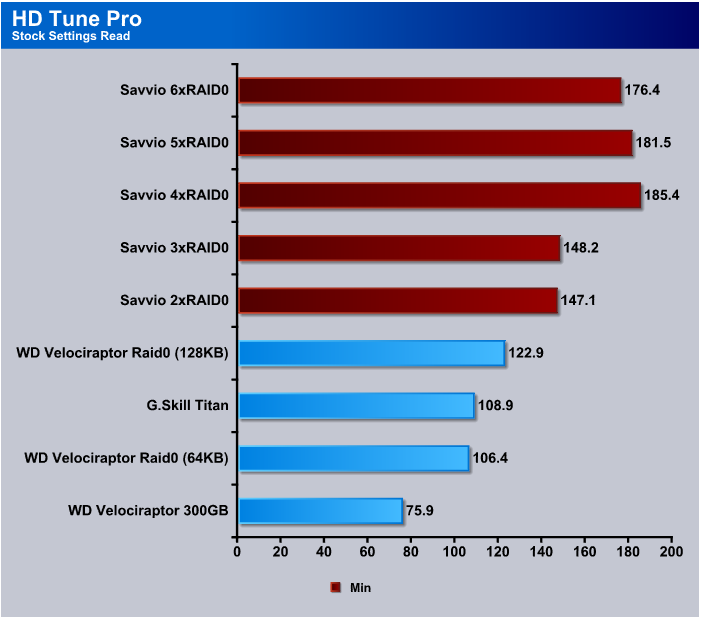

The minimum speed test in HD Tune Pro shows the LSI MegaRAID and Savvio 15K drives with a pretty good advantage at this level of testing. Overall performance so far has been high, and 4xRAID0 looks like a pretty decent sweet spot.

Results RAID1, 5, 6

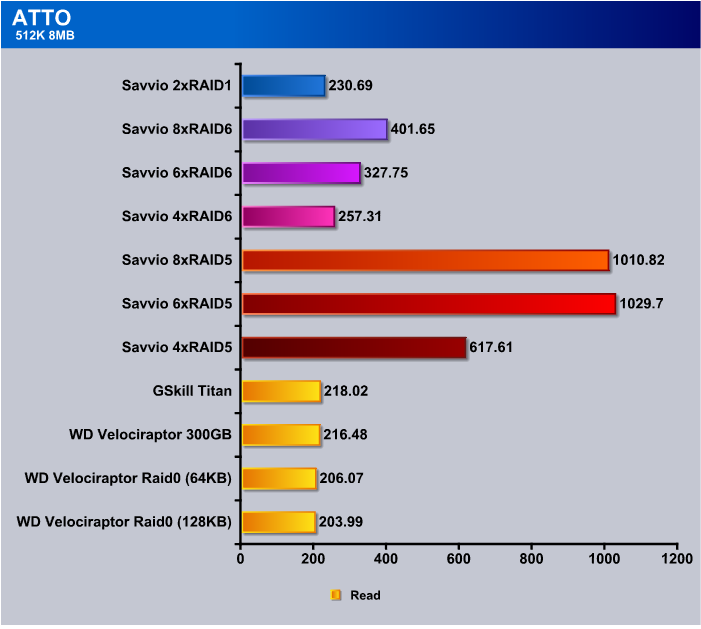

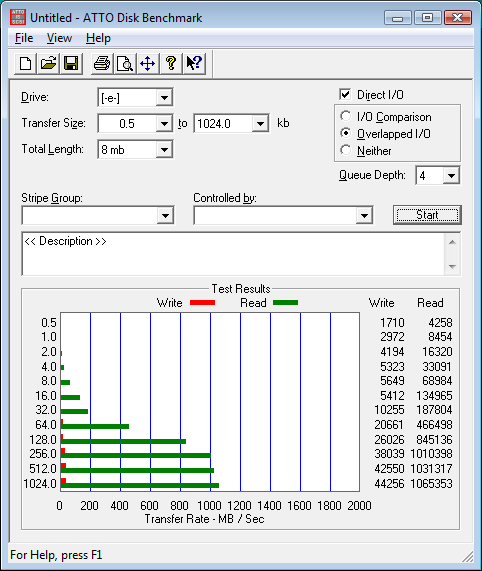

ATTO

Things start to get interesting when we get away from the monochrome landscape of RAID0. At 4xRAID5 we see speeds in excess of 600 MB/s, then when we move to 6 and 8xRAID5 we see speeds in excess of 1 GB/s. Best speed in RAID6 is just over 400 MB/s and RAID1 comes in at 230 MB/s. RAID5 is looking good so far for enthusiast use with some small measure of fault tolerance.

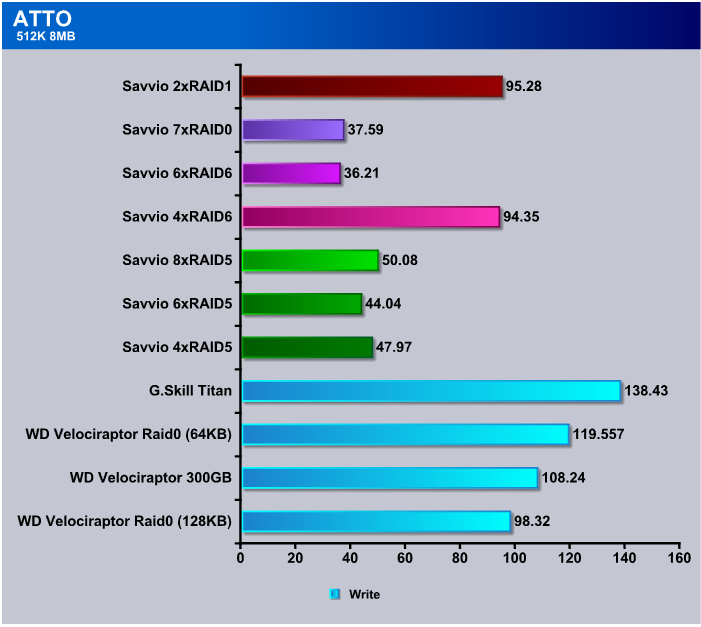

Once again we see the hit you take on hard drive writes with RAID arrays. Depending on what you use your computer for, it can be an issue, but in practice we haven’t seen much downside to the write penalty. The added read performance gives a nice boost in most applications.

Crystal DiskMark

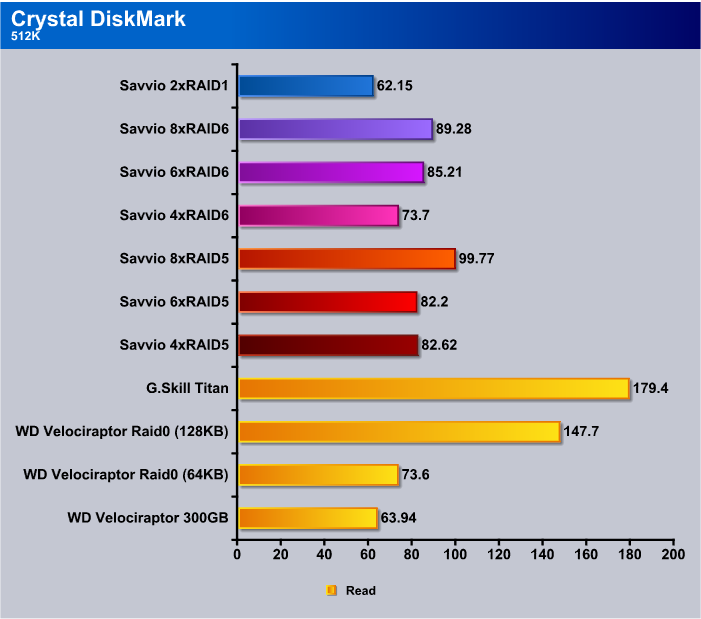

In Crystal DiskMark’s 512K read test, speeds were lackluster no matter how we set it. We can’t help but wonder whether Crystal DiskMark is at fault or the inherent nature of RAID. We kept the RAID arrays at a 64 KB stripe size for the entire testing period for consistency; perhaps experimenting with stripe size would yield better results.

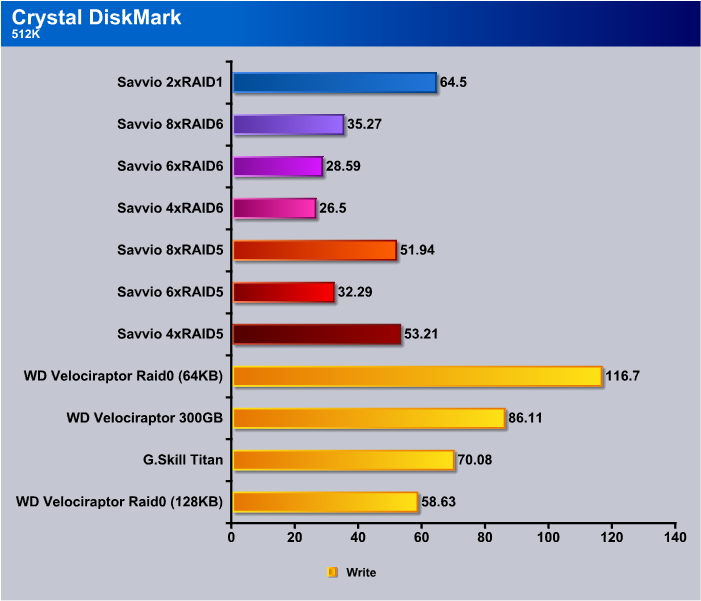

Write speeds are about what we expect from complicated RAID arrays and have been a known limitation of RAID implementations for quite some time now.

The ever-brutal 4K test in Crystal DiskMark shows no real advantage or disadvantage to using RAID for small reads. The one drive that excelled here is an SSD, a different class of drive altogether. Of course, the Seagate Savvio 15K drives are in a class by themselves, so it’s hard to make relevant comparisons.

Results under 1 MB/s in the 4K write test were a little surprising. With writes only 4K in size, though, we don’t see it as much of an issue: how many 4K writes are you going to do in an average day?

Getting back to the realm of sheer speed, 8xRAID5 tops out at 626.6 MB/s. 8xRAID6, with its higher fault tolerance and two drives’ worth of parity, tops out at 533.9 MB/s. Both RAID5 and RAID6 look ready to slake your thirst for hard drive nirvana.

Then, reality comes crashing back in on Sequential Write testing. Once again, unless you’re writing from one hard drive to another, which seldom happens, this really isn’t much of an issue.

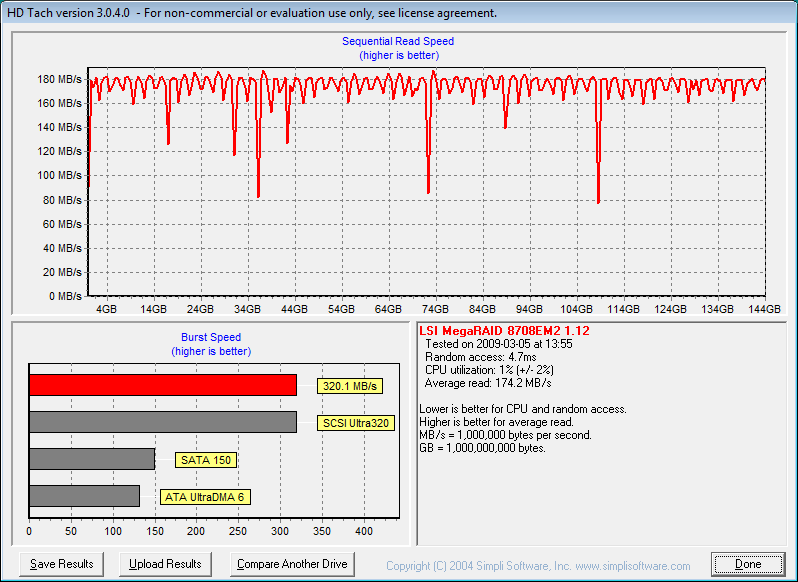

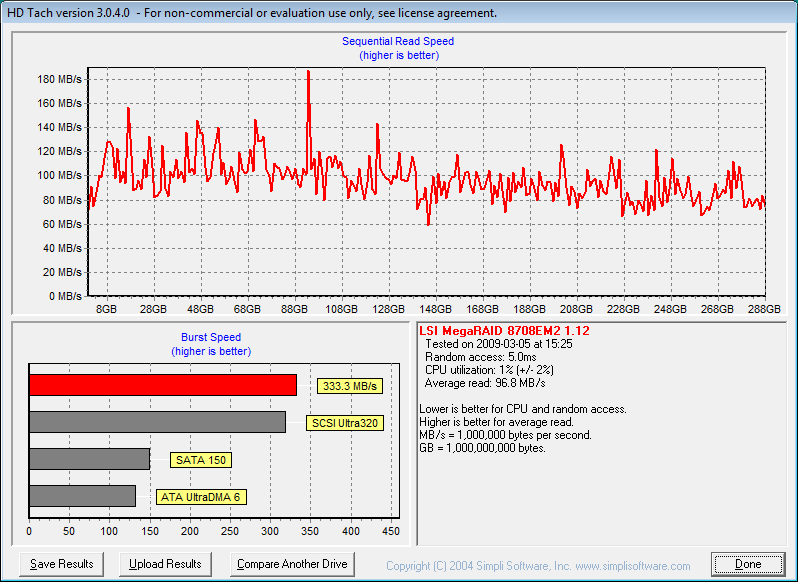

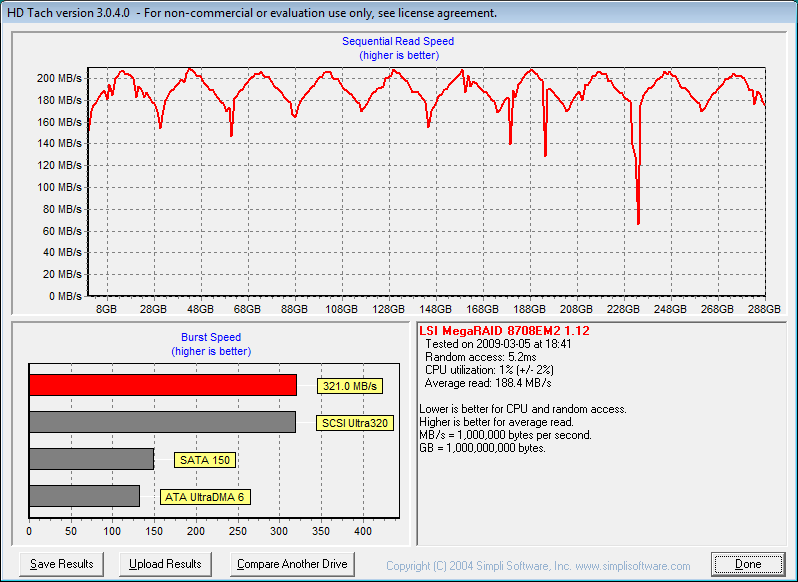

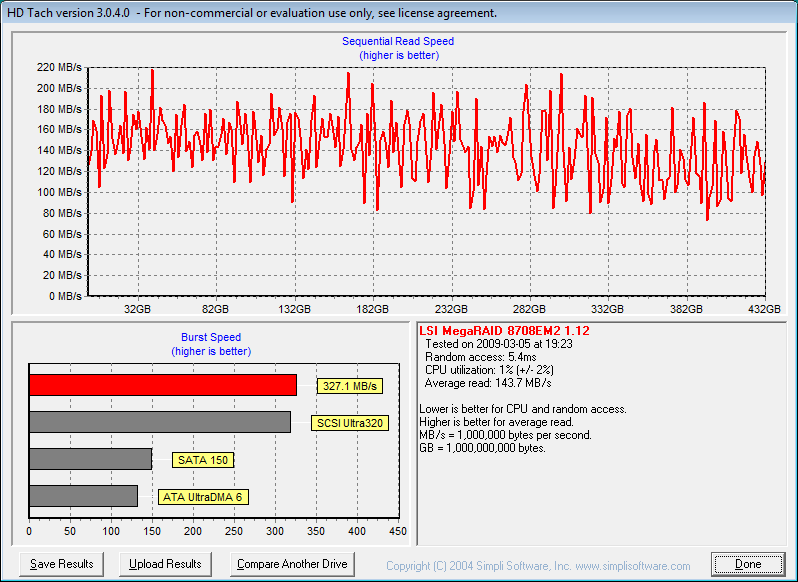

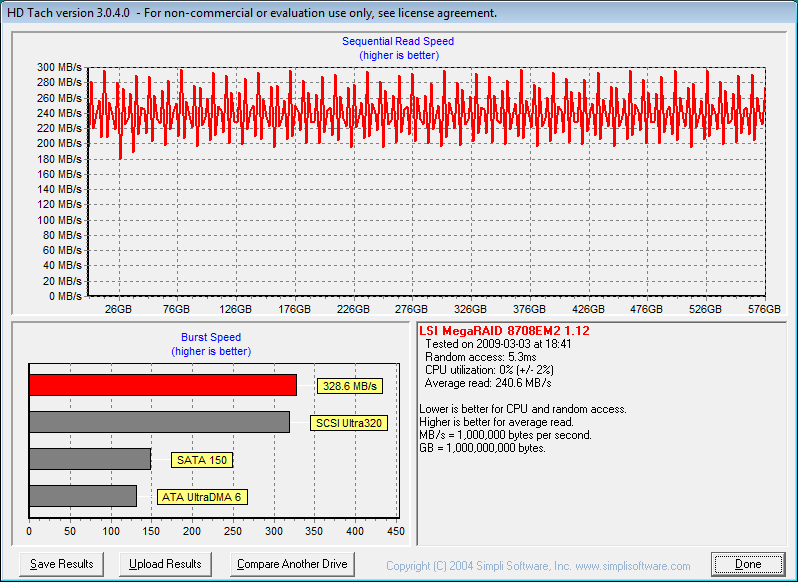

HDTach

In HDTach we stuck with the default settings and the 8 MB test, because once again the 8 MB and 32 MB tests gave almost identical results. RAID5 topped out at 4xRAID5 with 174.2 MB/s, and RAID6 topped out at 6xRAID6 with 1155 MB/s.

The HDTach burst test showed pretty much the same thing we saw in RAID0: speeds topped out around 300 MB/s. The exception was RAID1, where 2xRAID1 topped out at almost 190 MB/s.

HD Tune Pro

In HD Tune Pro, RAID5 hit 157.9 MB/s at 4xRAID5, and RAID6 hit 170.3 MB/s at 6xRAID6. It’s rather amazing to see how array size affects overall speed in the different tests. The human brain tends to assume that more drives means more speed, but we’re seeing here that the array with the most drives doesn’t always take the bench.

The burst test in HD Tune Pro showed a little wider variance this time, but not enough to convince us that HD Tune Pro doesn’t have a problem with this controller in the burst test.

In RAID5, maximum speed peaked at 4xRAID5 with 172.1 MB/s. In RAID6 we hit 191.3 MB/s with 6xRAID6, which seems to be the sweet spot for RAID6.

For minimum speed, 6xRAID6 gave us the best result, and the worst result also came from a RAID6 array. Most of the minimum speeds we saw in HD Tune Pro were very minor one-time drops that didn’t have enough legs to make any real difference to the overall average speed. After the Everest charts, we include a random sample of screenshots from the tests we ran to illustrate what we mean.

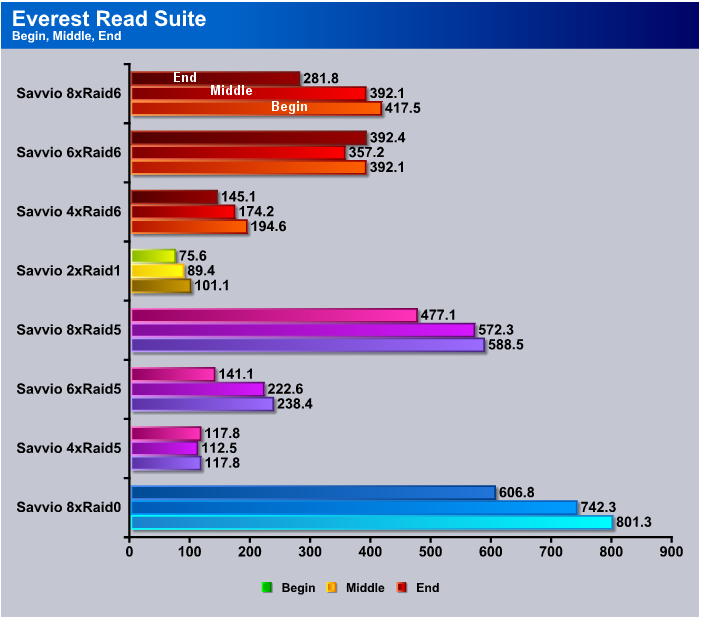

Everest Ultimate 5.0

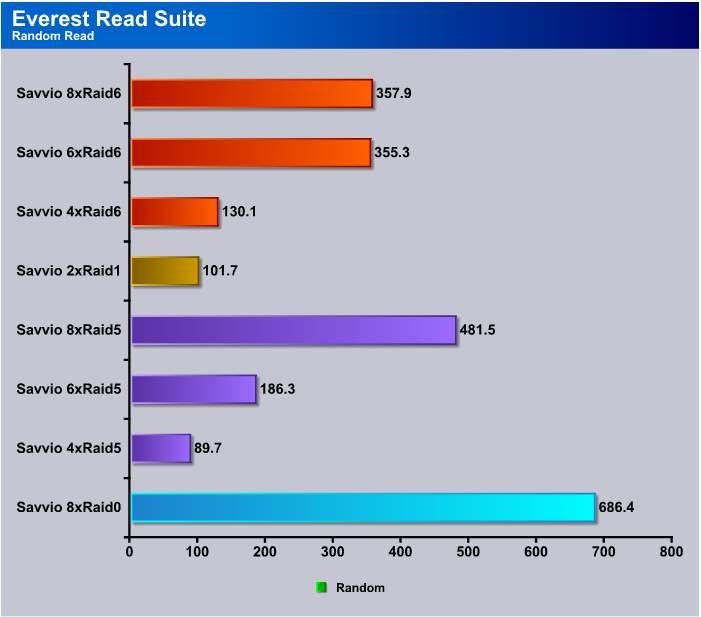

In Everest 5.0 we wanted to mix it up a little and put the 8xRAID0 results on the same chart as the other RAID setups, so you could get an overall sense of how the top-end 8x arrays compared. 8xRAID0 came out smokin’ fast in Everest and churned out 801.3 MB/s at the beginning of the drive platters. 8xRAID5 has a little more overhead with its distributed parity and turned in 588.5 MB/s, which is still smokin’ fast. 8xRAID6 came in at 417.5 MB/s. Really, if you’re used to single-drive performance, you’d be thrilled with these speeds.

In the Random Read test in Everest you expect speeds to drop a little because of the nature of the test, but 8xRAID0 came in at a blistering 686.4 MB/s, which really surprised us. 8xRAID5 came in at 481.5 MB/s, which is more than acceptable, and 6 and 8xRAID6 came in at about 350 MB/s. That looks puny compared to the other scores, but keep in mind that the theoretical limit of a SATA 2 drive is 300 MB/s, and real drives seldom manage a third of that. So, worst case, you’re looking at three and a half times the speed of a normal SATA drive, plus the advantage of blazing fast access times.

Benchmark Screenshots

We’re providing some of the benchmark screenshots because they expand on the sampled information, but we’ll leave you to browse them on your own, free from commentary.

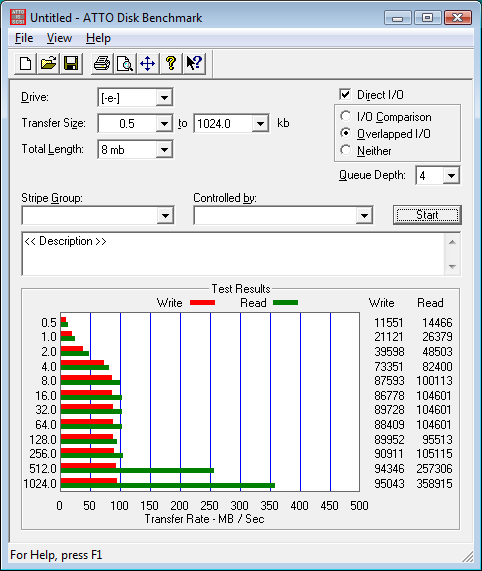

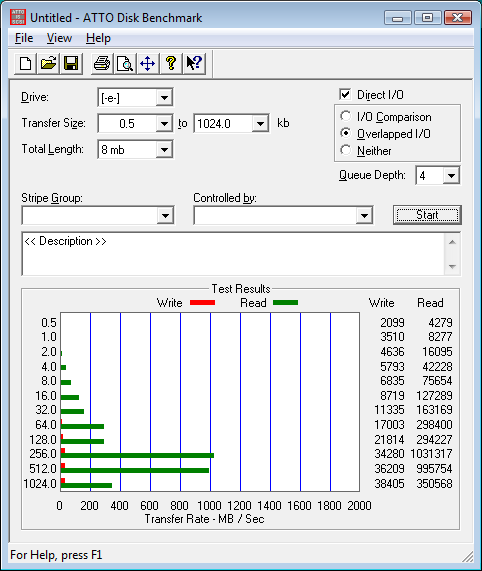

Screenshots: ATTO (RAID0, RAID5, RAID6, RAID0) and HDTach (2xRAID1, RAID5, RAID6, RAID0).

CONCLUSION LSI MegaRaid (SAS 8708EM2)

The LSI MegaRaid 8708EM2 is a dream to work with. RAID arrays are created with a setup wizard that is easy to learn and easy to use. Redundant, fault-tolerant arrays (RAID 1, 5, 6) and non-redundant arrays (RAID0) take just a few clicks of the mouse. The only thing we had to do was put the RAID drivers for our operating system on a flash drive; Vista and MegaRaid did the rest. We took the easy approach: we installed the MegaRaid controller, let Vista detect it, and inserted the flash drive. Vista found the drivers on its own and installed them correctly the first time out of the gate.

We ran synthetic benchmarks on the MegaRaid 8708EM2 with the drives blank. Then we loaded Vista on an 8xRAID0 array and did some real-life testing in gaming and a few other disk-intensive applications. The MegaRaid 8708EM2 impressed us in synthetic benchmarks, but it impressed us even more when we loaded the OS on it and took it for a week’s spin. Applications loaded faster than on high-end SSDs, and the system was snappier overall.

With all the types of arrays the MegaRaid 8708EM2 offers, you’re sure to find a setup you can live with. Setup was a snap and speeds were phenomenal when combined with the Seagate Savvio 15K (ST973451SS) drives we tested with. If you’re not into SAS drives the controller is flexible enough to handle SATA drives and you can run more than one array on the same controller if you desire.

Access times remained ultra low with the MegaRaid/Seagate Savvio 15K (ST973451SS) combination, and that’s something we haven’t touched on yet. Average access time across all the testing stayed in the 4 ms range, which is unheard of for traditional platter drives. Another advantage of the MegaRaid 8708EM2 is that CPU usage across all the arrays we tested remained unbelievably low. The LSI MegaRaid 8708EM2 has an onboard processor running at 500 MHz and 128 MB of dedicated DDR2 memory to take the load off the CPU. During the entire test period CPU usage ran between 0.5% and 1%, never going over 1% even when writing to the 8-drive arrays. Your CPU is left to do what it’s designed to do: provide blazing speed in games and applications.

As we’ve shown, RAID has a downside: write performance tends to be lower in RAID arrays than in single-drive operation. To put that in perspective, we copied an entire OS install and all the programs on a boot drive, better than 140 GB of programs and data, to an 8xRAID0 array. The copy took 10 minutes 43 seconds; that is the drive-to-drive copying we mentioned earlier. The same copy to a single drive took 8 minutes 27 seconds, so we lost a little over two minutes copying an entire drive to the 8xRAID0 array. That’s not a bad compromise for the blazing read speeds we got, which in some cases topped 1 GB/s and in many cases topped the 300 MB/s theoretical limit of a normal SATA 2 drive.

We can easily recommend the LSI MegaRaid 8708EM2 to a beginner or to a seasoned system administrator. It’s simple enough for the beginner but offers enough features for a seasoned veteran of hardware RAID.

We are trying out a new addition to our scoring system to provide additional feedback beyond a flat score. Please note that the final score isn’t an aggregate average of the new rating system.

- Performance 10

- Value 8

- Quality 10

- Warranty 8

- Features 10

- Innovation 9

Pros:

+ Easy Setup

+ Up to 32 Drives

+ RAID 0, 1, 5, 6 and Spans 10, 50, 60

+ Low Profile

+ Low CPU Usage

+ Blazing Speed

Cons:

– Write Speeds Suffer

– Cost

The LSI MegaRaid 8708EM2 is an easy-to-use but comprehensive hardware RAID controller that has to be experienced to be believed. It offers multiple RAID array setups with just a few clicks of a mouse, and blazing speeds we didn’t even think possible. CPU usage was lower than we’ve ever seen on complicated RAID setups, and did we mention mind-blowing, data-scorching speed? The LSI MegaRaid 8708EM2 receives a score of 9.5 out of 10 and the Bjorn3D Golden Bear Award.

Conclusion Seagate Savvio 15K (ST973451SS)

The Seagate Savvio 15K 73 GB SAS drive is the first 2 1/2 inch 15,000 RPM drive ever produced, showing that physical size is no longer a limitation for high-performance drives. Single-drive latency is rated at 2 ms, read access at 2.9 ms and write access at 3.3 ms. Those are unheard-of access times for drives of this size.

We tested the RAID arrays on a hardware-based LSI MegaRaid controller, but we also snuck in some onboard controller testing on the Asus P6T Deluxe, which has two SAS ports built in. Even on the built-in ports with software-based RAID, the Seagate Savvio drives amazed us with their smokin’ fast access times and high transfer rates. When we moved to the 14 different RAID arrays we tested, the Savvio 15K drives amazed us even more, and not only with their high transfer rates. They remained quiet and vibration-free in less-than-perfect testing conditions, which is amazing for a 15K drive.

Because the testing was so extensive, lasting longer than a month, we tested the RAID arrays without loading them with data or an OS. We did, however, load Vista 64 on an 8xRAID0 array, and it was obvious from the word go that we’d discovered hard drive nirvana.

Vista didn’t boot any faster, because of the nature of hardware RAID controllers. It didn’t boot any slower either: the faster load was offset by the controller pausing to check the eight drives it was controlling. Once inside Vista, though, things were faster: load times were quicker and programs responded faster. We saw speeds we didn’t think possible, in a few cases exceeding 1 GB/s and in many cases exceeding 300 MB/s. That is the theoretical limit of a SATA 2 drive, which never reaches that limit and in our experience seldom transfers faster than 80 MB/s even in ideal conditions. In single-drive operation, the Savvios delivered about 120 MB/s sustained transfer rates, considerably better than regular SATA 2 drives.

There’s a lot to like about the Savvio 15K drives. They’re 70% smaller than 3 1/2 inch drives, consume 30% less power, and free up space in the chassis for better cooling inside the system. Drive enclosures that can hold four Savvios in a single 5 1/4 inch drive bay are starting to appear. That means you can feasibly stack eight or more drives in a minimum of space that usually goes unused anyway. Since the enclosures use a single Molex connector, there’s not even a large knot of wires to manage.

Like all things high-performance, there is a downside, which in this case is capacity and cost. The cost per gigabyte is much higher than for regular SATA 2 drives, but as we all know, prices drop as a piece of hardware stays on the market and demand grows. The drives’ storage capacity better suits enterprise environments like server farms and blade servers, but adapted for desktop use they can reach speeds that will make your head spin. If you’re looking at this class of drive, you don’t expect to pay the same per gigabyte; these drives are for the most demanding enthusiasts and enterprise applications. The good news is that you don’t need eight drives like we had for testing to get seriously fast transfer rates and low access times. Two or three drives on any capable hardware RAID controller will make even the most hardcore enthusiast smile.

We are trying out a new addition to our scoring system to provide additional feedback beyond a flat score. Please note that the final score isn’t an aggregate average of the new rating system.

- Performance 9.5

- Value 7

- Quality 10

- Warranty 10

- Features 10

- Innovation 10

Pros:

+ Silent Operation

+ Size

+ Power Consumption Is 30% less than 3 1/2 Inch Drives

+ Blazing Access Times

+ High Transfer Rates

+ Did We Mention Blazing Speed

Cons:

– Cost

– Have To Have A SAS Controller Which Will Increase Operational Costs

For their innovative design, ultra-low access times, and blazing speeds, the Seagate Savvio 15K 73 GB drives receive a score of 9.5 out of 10 and the Bjorn3D Golden Bear Award!

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
