With the introduction of NVIDIA’s new drivers (v. 180.43) and Core I7, SLI is coming to maturity. Game developers and NVIDIA came together to perfect SLI technology for FarCry 2.

FARCRY 2 SLI PERFORMANCE REVIEW

Many sites produced reviews on FarCry 2 as fast as they could. We decided to miss the initial rush of hurried reviews on FarCry 2 and SLI and opt for FarCry 2 on Core I7, specifically the “Big Dog”, the Core I7 965 Extreme. Since we’ve had Core I7 in hand and have been testing it for some time now, we know that it is the future of computing. People will look back at Core I7 and think, “That’s when computing really started to mature.” By waiting for the NDA to lift on Core I7 and the Intel X58 chipset, we’re able to bring you a fresh look at FarCry 2, SLI, CrossFire, the X58 chipset, and Core I7 all rolled into one. You know life is good when you’re sitting on the hottest platform and playing the hottest game with a couple of the fastest GPUs on the planet just aching to churn out frames so fast it’ll make your head spin.

FarCry 2 is one of the most anticipated titles of the year. It’s an engaging, state-of-the-art first-person shooter set in an unnamed African country. FarCry 2 features a stunning backdrop on which to play out the conflict between you and a nasty fellow aptly named the Jackal. It would be rather boring if it were just you and the Jackal wandering around the game world trying to off each other, so Ubisoft tossed in a couple of warring factions called the APR and UFLL. We might add that the APR and UFLL aren’t really particular about who they shoot at, so you might want to do unto others before they do it to you.

NVIDIA Developer Support of FarCry 2

The game itself was developed by Ubisoft in close cooperation with NVIDIA in an attempt to improve SLI scaling in the game. We spoke with James Wang from NVIDIA, and he enlightened us on the level of cooperation between Ubisoft and NVIDIA.

NVIDIA’s Level Of Support

- Consultation with NVIDIA software engineers on graphics tuning and performance
- Two weeks of on-site consultation
- Three man-months of cumulative consultation
- Full access to NVIDIA’s Moscow Global Testing Labs (GTL):
  - Performance testing on 9 different game builds
  - 42 hardware configurations
  - 252.6 hours of testing in total
- On-demand driver updates
- Early developer access and seeding for GeForce GTX 200 processors
- Specific driver improvements to optimize for FarCry 2’s memory usage

Not only are we testing SLI performance, but we’re testing CrossFire and TriFire performance in FarCry 2 as well. Before we move on, we’d also like to add that we heard the cries of our readers, and we’re answering those cries. You’ve been asking for GTX-280 benches to be included in our reviews. On our current platform we’re going to bring you GTX-280 results. Not only are we going to bring you GTX-280 results, we’re going to bring you GTX-280 SLI results. Then, since we love our readers so much, we’re going to go one better and provide Triple GTX-280 SLI results. You’ll have to wait a short time for the Triple results, though, because today we’re going to whet your appetite with single GTX-280 and dual SLI GTX-280 results in FarCry 2. As soon as we get an X58 triple SLI platform to mount all three of these beasts on, we’ll give you more SLI GTX-280s than you ever dreamed possible. Not only that, we’re going to be bringing you all that in pinpoint performance reviews on some of the hottest game titles out there.

We’d especially like to thank the great crew over at NVIDIA for providing us the EVGA GTX-280s, and Ubisoft for providing FarCry 2 to run them on. We gratefully thank them for all the cooperation and coordination required to bring you some GTX-280 action in FarCry 2.

By the way, we love our faithful readers, but get within three feet of our triple GTX-280s and we’ll be forced to taser you into submission and eject you from the lobby.

TESTING & METHODOLOGY

We did a clean install of 64-bit Vista (all patches and updates, latest drivers, etc.) on our test platform and deliberately left the video drivers out. Then we used Acronis Disk Director Suite to clone the hard drive. Once we had a verified good clone of the hard drive, we went ahead and installed the first set of GPU drivers. In between each GPU, we copied the cloned drive with no GPU driver on it back to the Intel 80GB SATA SSD (X25-M) we were using for testing. That way, we always had a clean, fresh install of Vista for each GPU, and the drivers for that particular GPU were being loaded for the first time. We didn’t want anything left over from a previous test to interfere with and possibly skew the numbers of this epic test.

We used the built-in FarCry 2 benchmarking tool that Ubisoft ships with its retail version of FarCry 2. It’s a full-featured benchmark and one of the most well-designed, well-thought-out game benchmarks we’ve ever seen. One big difference between this benchmark and others is that it leaves the game’s AI (Artificial Intelligence) running while the benchmark is being performed. We got some chuckles watching the rag doll physics of combatants being flung across the screen. We didn’t want to taint the benchmark numbers with screenshots, so we caught a little rag doll action after testing.

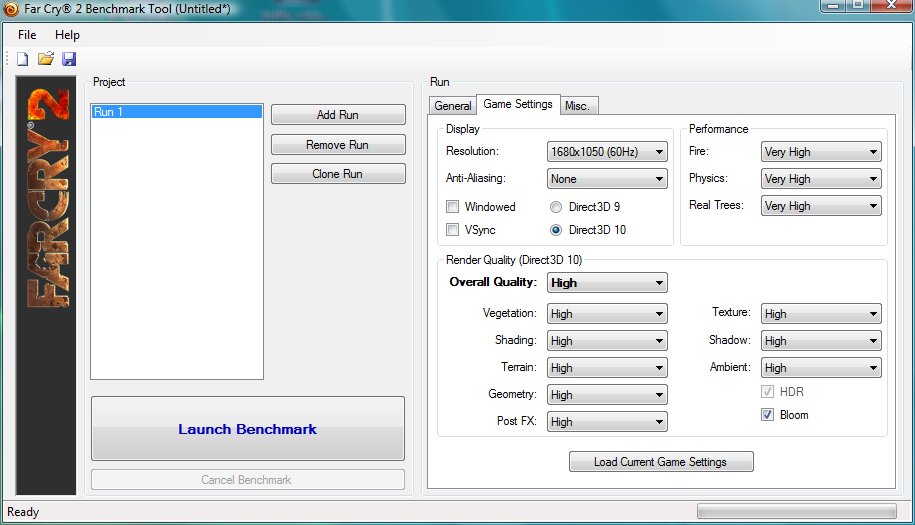

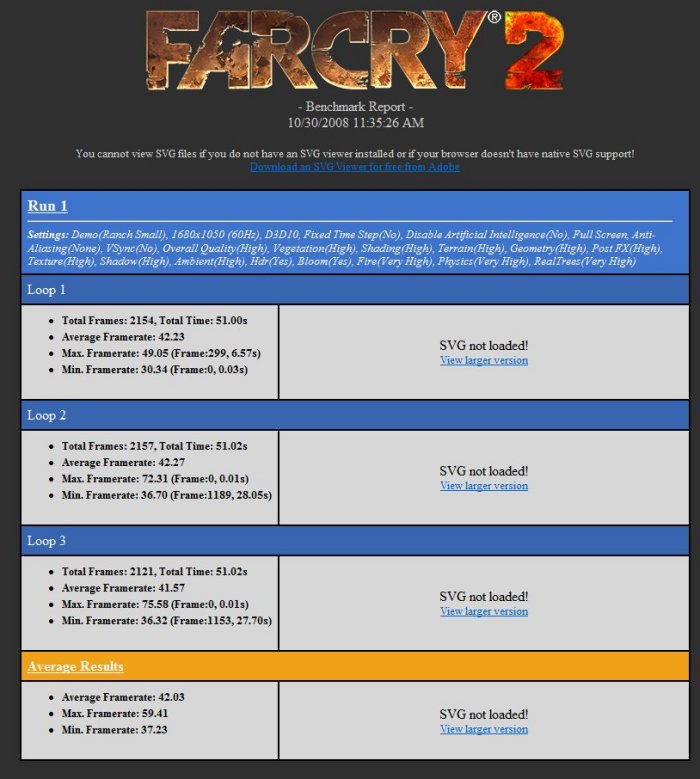

The FarCry 2 benchmark executes three passes of benchmarking and averages the FPS numbers for you at the bottom of the screen, saving us tons of time normally spent with a calculator going over averages. To make it easier, we took a screenshot of the settings we used in the benchmark.

We, of course, tested at 1680×1050 in DX9 and DX10 with 0xAA and 4xAA with an array of GPUs in every configuration we could think of. We were able to use the Asus P6T Deluxe X58 motherboard, which is SLI and CrossFire capable. So, for the first time, we’re able to provide SLI and CrossFire results on the exact same platform, only changing GPUs and GPU configurations with the rest of the platform remaining exactly the same. So, we don’t want to hear any grumbling from the various camps (NVIDIA or ATI fanboys) that it’s not a fair test because we used different motherboards. We used the same motherboard for both NVIDIA and ATI. So if your favorite brand doesn’t meet your expectations, live with it. It’s the fairest test possible.

GPU Comparison Table

| Major GPU Specifications | HD 3870 X2 | 9800 GX2 | Palit 4870X2 | GTX 260 | BFG GTX 260 MaxCore | GTX 280 | HD 4850 | HD 4870 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GPU frequency | 825 MHz | 600 MHz | 750 MHz | 576 MHz | 655 MHz | 602 MHz | 625 MHz | 750 MHz |
| ALU frequency | 825 MHz | 1500 MHz | 750 MHz | 1242 MHz | 1404 MHz | 1296 MHz | 625 MHz | 750 MHz |
| Memory frequency | 900 MHz | 1000 MHz | 900 MHz | 999 MHz | 1125 MHz | 1107 MHz | 993 MHz | 900 MHz |
| Memory bus width | 2×256 bits | 2×256 bits | 2×256 bits | 448 bits | 448 bits | 512 bits | 256 bits | 256 bits |
| Memory type | GDDR3 | GDDR3 | GDDR5 | GDDR3 | GDDR3 | GDDR3 | GDDR3 | GDDR5 |
| Memory quantity | 2 x 512 MB | 2 x 512 MB | 2 x 1024 MB | 896 MB | 896 MB | 1024 MB | 512 MB | 512 MB |
| Number of ALUs | 640 | 256 | 1600 | 192 | 216 | 240 | 800 | 800 |
| Number of texture units | 32 | 128 | 80 | 64 | 72 | 80 | 40 | 40 |
| Number of ROPs | 32 | 32 | 32 | 28 | 28 | 32 | 16 | 16 |
| Shading power | 1 TFlop | 1152 GFlops | 2.4 TFlops | 715 GFlops | 824 GFlops | 933 GFlops | 1 TFlop | 1.2 TFlops |
| Memory bandwidth | 115.2 GB/s | 128 GB/s | 115.2 GB/s (x2) | 111.9 GB/s | 126.0 GB/s | 141.7 GB/s | 63.55 GB/s | 115.2 GB/s |
| Number of transistors | 1334 mil | 1010 mil | 956 mil x 2 | 1400 mil | 1400 mil | 1400 mil | 956 mil | 956 mil |
| Process | 55nm | 65nm | 55nm | 65nm | 65nm | 65nm | 55nm | 55nm |
| Die surface area | 2 x 196 mm² | 2 x 324 mm² | 2 x 260 mm² | 576 mm² | 576 mm² | 576 mm² | 260 mm² | 260 mm² |
| Generation | 2008 | 2008 | 2008 | 2008 | 2008 | 2008 | 2008 | 2008 |
| Shader Model supported | 4.0 | 4.0 | 4.1 | 4.0 | 4.0 | 4.0 | 4.1 | 4.1 |
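For readers who want to see where the memory bandwidth and shading power rows come from, here is a rough Python sketch that rebuilds a few of them from the clocks, bus widths, and ALU counts in the table. The function names are ours, for illustration only. The per-clock factors are assumptions on our part: GDDR3 is treated as two transfers per listed clock and GDDR5 as four, and each ALU is counted at three flops per clock on the GTX 200 cards and two on the Radeon HD 4800 cards.

```python
# Assumption: data transfers per listed memory clock for each memory type.
TRANSFERS_PER_CLOCK = {"GDDR3": 2, "GDDR5": 4}


def memory_bandwidth_gbs(clock_mhz, bus_bits, memory_type):
    """Peak memory bandwidth of one GPU in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_bits / 8
    transfers_per_second = clock_mhz * 1e6 * TRANSFERS_PER_CLOCK[memory_type]
    return transfers_per_second * bytes_per_transfer / 1e9


def shading_power_gflops(alus, alu_clock_mhz, flops_per_alu_per_clock):
    """Peak shader throughput in GFlops; the flops-per-clock factor is an assumption."""
    return alus * alu_clock_mhz * flops_per_alu_per_clock / 1000


# (memory clock in MHz, bus width in bits, memory type), taken from the table.
memory_specs = {
    "GTX 280": (1107, 512, "GDDR3"),
    "GTX 260": (999, 448, "GDDR3"),
    "HD 4870": (900, 256, "GDDR5"),
}

for name, spec in memory_specs.items():
    print(f"{name}: {memory_bandwidth_gbs(*spec):.1f} GB/s")

print(f"GTX 280: {shading_power_gflops(240, 1296, 3):.0f} GFlops")
print(f"HD 4870: {shading_power_gflops(800, 750, 2):.0f} GFlops")
```

Under those assumptions the GTX 280 works out to about 141.7 GB/s and 933 GFlops, and the HD 4870 to 115.2 GB/s and 1200 GFlops, which lines up with the table. These are theoretical peaks, not measured numbers.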

Test Rig

| Test Rig “Quadzilla” | |
| --- | --- |
| Case Type | Top Deck Testing Station |
| CPU | Intel Core I7 965 Extreme (3.74 GHz 1.2975 Vcore) |
| Motherboard | Asus P6T Deluxe (SLI and CrossFire on Demand) |
| RAM | Kingston HyperX DDR 3 1600 (9,9,9,24 1.64v) 3GB Kit |
| CPU Cooler | Thermalright Ultra 120 RT (Dual 120mm Fans) |
| Hard Drives | Intel 80 GB SSD |
| Optical | Sony DVD R/W |
| GPUs Tested | EVGA GTX-280 (2), drivers 180.43; Palit Radeon HD 4870X2, drivers FarCry 2 Hotfix; BFG GTX-260 OCX, drivers Vista 64 180.43 NVIDIA; Sapphire HD 4870, drivers FarCry Hotfix; Leadtek GTX-260, drivers Vista 64 180.43 NVIDIA |
| Case Fans | 120mm fan cooling the MOSFET/CPU area |
| Docking Stations | None |
| Testing PSU | Thermaltake Toughpower 1K |
| Legacy | None |
| Mouse | Razer Lachesis |
| Keyboard | Razer Lycosa |
| Gaming Ear Buds | Razer Moray |
| Speakers | None |
| Any Attempt to Copy This System Configuration May Lead to Bankruptcy | |

TEST RESULTS DX9

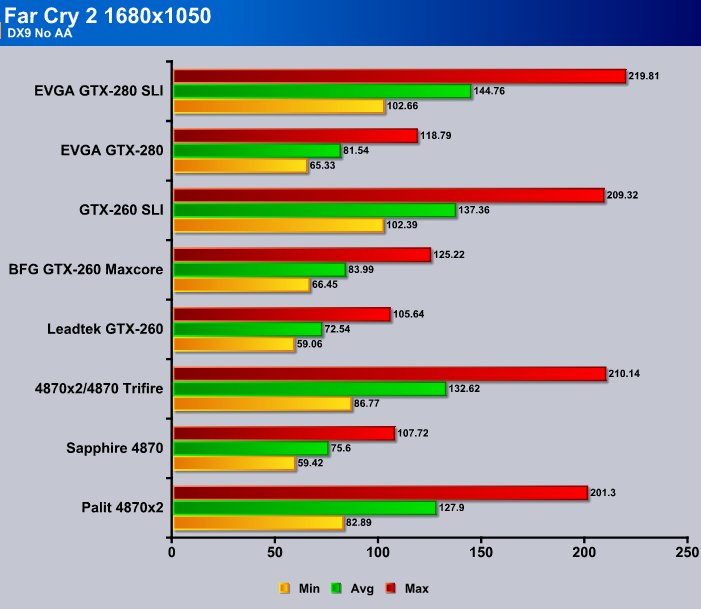

First, we’re going to look at FarCry 2 at 1680×1050 in DX9 0xAA.

The single GPU test shows the EVGA GTX-280 has a slight advantage over a single ATI 4870. It pulls away on maximum frame rates by a mere 11 FPS, but that drops to 6 FPS on the average frame rate test and 7 FPS for the minimum frame rate. That’s pretty close for the two “Top Dog” GPUs (single card, single core) head to head. The EVGA GTX-280 wins, but only by a hair.

The 4870X2, being a dual-GPU card on one PCB, doesn’t really fit into the single GPU category. It can be considered CrossFire on one card, but it doesn’t quite fit there either. When we compare it to the EVGA GTX-280s running in SLI, it comes close to the SLI array, but only in maximum FPS. On average and minimum FPS it runs about 20 FPS slower than the two EVGA GTX-280s.

Now to the brass tacks of the issue. We ran the 4870X2 and 4870 in TriFire, and for three GPU cores we get little to no return. We get a 4 FPS increase on the minimum, under a 5 FPS increase on the average test, and under a 9 FPS increase on the maximum frame rate. You could probably get better results just overclocking your CPU a little and cranking up your RAM. That’s practically non-existent scaling. We ran the test with the ATI FarCry hotfix installed with the latest drivers, and we ran it multiple times in TriFire to confirm what we were seeing. Considering the test was done on a Core I7 965 Extreme running at 3.74 GHz, the fastest, most efficient CPU known, with DDR 3 cranked up to 1333 providing a massive 23GB/s memory bandwidth, and sitting on a state-of-the-art Asus P6T Deluxe X58 motherboard, that’s pretty sad scaling.

On the other hand, the EVGA GTX-280s running in dual SLI show a tremendous improvement in scaling. This proves beyond a shadow of a doubt that, through close collaboration between NVIDIA and game developers, SLI will and does work as intended. Scaling in games that aren’t this optimized won’t be this good, but that would be the game developers’ fault. NVIDIA has shown its willingness and desire to lend its expertise and resources to help game developers make SLI run correctly in their games. If they don’t take advantage of that generosity, they obviously need their heads examined.

In the minimum frames we gained 37.33 FPS, a 57% increase. Average frames gained 63.22 FPS, an increase of 77%. In maximum frames we gained 101.2 FPS, an increase of 85%. Now, to get a conglomerate number, we add all three percentages and divide by three, and we end up with roughly a 73% FPS increase overall. That’s pretty decent for SLI scaling in DX9, but let’s take a look at DX9 with 4xAA.
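If you’d like to check the arithmetic yourself, here is a minimal Python sketch that uses only the gains and percentages quoted above. The function names are ours, for illustration. The single-card frame rates it prints are backed out from the rounded percentages, so treat them as approximations rather than measured values.

```python
# DX9 0xAA GTX-280 SLI gains as quoted in the text: (FPS gained, percent increase).
reported = {
    "minimum": (37.33, 57),
    "average": (63.22, 77),
    "maximum": (101.2, 85),
}


def implied_single_card_fps(gain_fps, percent_increase):
    """Back out the single-card frame rate from a gain and its percentage."""
    return gain_fps / (percent_increase / 100)


def mean_percent(results):
    """Add up the percentage increases and divide by how many there are."""
    percents = [pct for _, pct in results.values()]
    return sum(percents) / len(percents)


for metric, (gain, pct) in reported.items():
    single = implied_single_card_fps(gain, pct)
    print(f"{metric}: ~{single:.1f} FPS single card, ~{single + gain:.1f} FPS in SLI")

print(f"mean scaling: {mean_percent(reported):.1f}%")
```

With the rounded percentages quoted above, the simple mean works out to 73%. An average taken from unrounded benchmark values could land a little differently.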

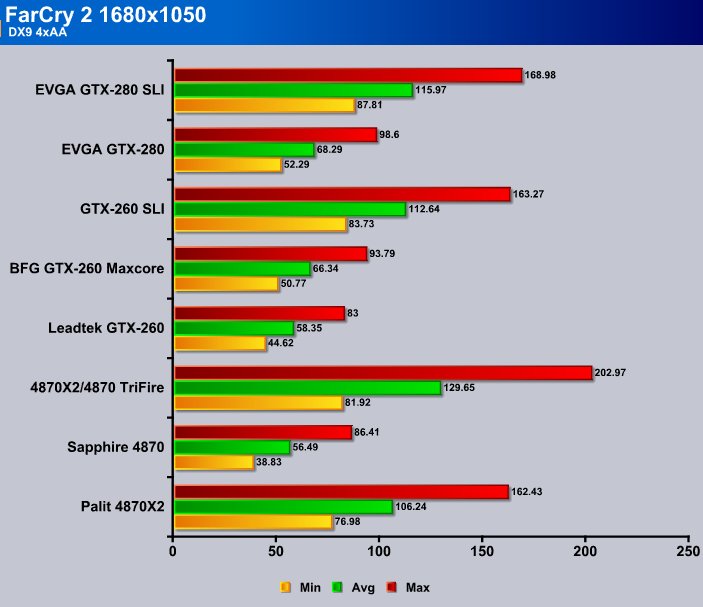

Part of the mystery that is ATI is that in some cases it runs better with AA turned on. Since having AA turned off is less demanding that would tend to indicate a driver glitch. It’s one they’ve been plagued with, or blessed with, depending on if you’re a glass half full or glass half empty kinda person.

Going from minimum, which gained 5 FPS by adding a GPU to the mix, to average, which added just over 23 FPS, to maximum, with a gain of 40 FPS, the scaling looks a little puny. We’ve tested CrossFire and it seems to scale well in synthetic benchmarks, but it doesn’t make the transition to games very well.

Moving to the SLI setup, we see an increase of 35 FPS on the minimum frames test, a percentage increase of 68%. Yes, we are aware of the remainders, but we aren’t splitting hairs here; you can’t watch 1/3 of a frame unless your system is seriously jacked. For average FPS we see an increase of 47 FPS, a percentage increase of almost 70%. And for maximum frames we get an increase of 70 FPS, a percentage increase of almost 72%. Is it just us, or can SLI come to partial maturity through the close cooperation of game developers and NVIDIA? Because if we see scaling like this across a wide variety of games, it changes the face of SLI.

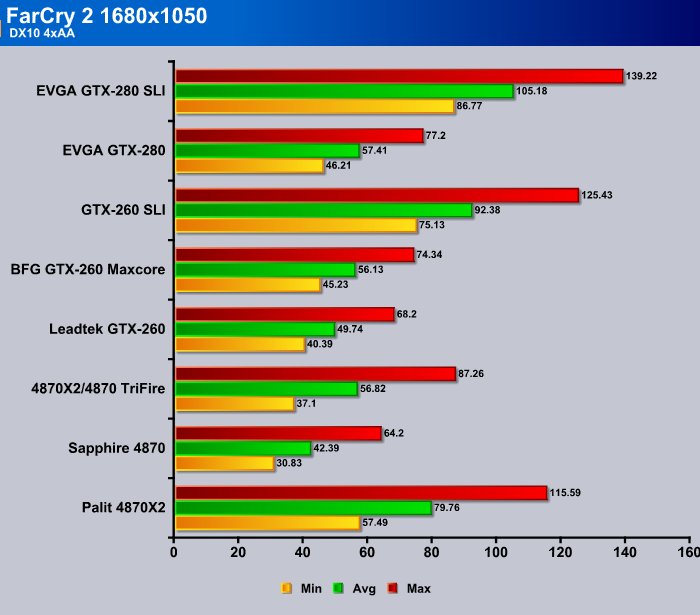

TEST RESULTS DX10

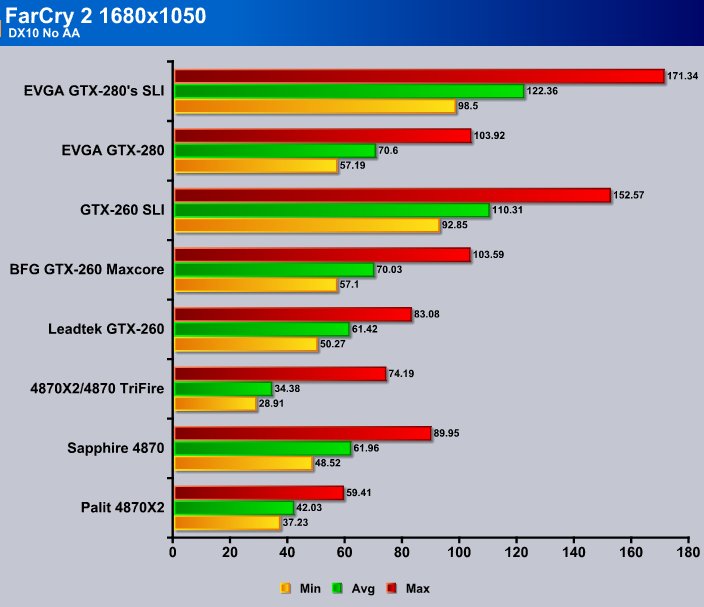

Let’s take a look at FarCry 2, again at 1680×1050 with 0xAA.

For those of you shaking your heads and cursing at the 4870X2 results in DX10, we went ahead and saved one of the many benchmark screens for you to gander at in a second. We got with another 4870X2 owner and he ran the same tests with his 4870X2 and got similar results. In DX10 with no AA, the driver glitch we mentioned earlier practically cripples the 4870X2. Here’s the benchmark screenie.

Then, as you can see, when we moved to TriFire, minimum and average frame rates went down. That has to be a driver glitch. Keep in mind that we were using a clean install of Vista 64 using the clone copy to ensure no previous drivers were interfering with our results. Also, consider this is with the FarCry 2 hotfix and latest drivers. Don’t kill the messenger, we just bench ’em and report the numbers.

Again, when we move from a single EVGA GTX-280 to the dual SLI setup, we see a smooth increase in frame rates across the board. Minimum frames increased by 41 FPS, a percentage increase of 72%. Average frames increased by 51 FPS, a percentage increase of 73%. Then finally, maximum frames increased by 67 FPS, a percentage increase of almost 65%. The scaling is much improved over previous generations of drivers.

We really like the graphics in FarCry 2 with NVIDIA PhysX. It might be our imagination, but the flames and cinders flying off look more realistic and act more naturally. Don’t get us started on the rag doll PhysX of opponents flying across the field. It would be really awesome if, when you get fragged in the Multi-player game, you could see through the eyes of your character rag dolling across the screen in slow motion (hint Ubisoft).

When we crank the AA up to 4xAA, the single 4870 starts to drag a little. If we went to a higher resolution, it’d be below the magical 30 FPS required for most gameplay to look good to the human eye. The 4870X2 does decently by itself, but when we move it to TriFire, once again, the frames per second decrease. We confirmed this with the other 4870X2 owner and did some checking on the web. It’s accurate. For whatever driver-related reason, the 4870X2 died with 0xAA in DX10, and when we moved to 4xAA that improved, but FPS went down in TriFire. We are seriously glad that we aren’t on the ATI driver team. They have to be scrambling to fix that little glitch.

One thing we might have forgotten to mention: we were running the fans on the ATI cards at 100% to make sure they weren’t experiencing thermal throttling. The 4870X2 thermal light started flashing on us once, and when we checked, it was hitting 104°C (not during FarCry 2 testing). That’s the highest temperature we’ve seen it at so far. Not wanting to take a chance with it before we install a water jacket on it, we kicked the fans up to 100% to protect it during testing. We thought we’d mention that in case we have a die-hard ATI fan out there chanting that we didn’t cool the ATI cards well enough.

The EVGA GTX-280 SLI setup and the GTX-260 setup have scaled smoothly throughout all the FarCry 2 testing. In most cases the mismatched SLI setup (one Leadtek GTX-260, one BFG GTX-260 MaxCore) managed to stay out in front of the 4870X2, and in some cases it outran TriFire. Go ahead, flip back a page and check. We’ll wait on you.

We can spout numbers all day, but you can see for yourself: in DX10 with 4xAA, the scaling on the EVGA GTX-280 SLI setup just got better. Minimum frames increased by 40 FPS, a percentage increase of 87%. Average frames increased by 47 FPS, a percentage increase of 83%. Maximum FPS increased by 62 FPS, a percentage increase of 80%.

All we can say is that game developers need to get with NVIDIA and optimize their games like Ubisoft did so we can enjoy this scaling across the board. With scaling showing this much promise on a properly optimized game, we, the end users, should demand it. Especially if this translates as well with less expensive GPUs. Now that it’s been proven a viable and desirable technology, we can’t wait to see the day when every game scales this well!
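To put the four GTX-280 SLI runs side by side, here is a small Python sketch that averages the minimum, average, and maximum percentage gains quoted in the DX9 and DX10 sections. The names are ours, for illustration, and wherever the text says “almost N%” we simply entered N, so the output is an approximation.

```python
# GTX-280 SLI percentage gains quoted in the text: (minimum, average, maximum).
# Figures described as "almost N%" are entered as N, so these are approximate.
sli_scaling = {
    "DX9 0xAA": (57, 77, 85),
    "DX9 4xAA": (68, 70, 72),
    "DX10 0xAA": (72, 73, 65),
    "DX10 4xAA": (87, 83, 80),
}


def summarize(scaling):
    """Mean of the three percentage gains for each test setting."""
    return {setting: sum(gains) / len(gains) for setting, gains in scaling.items()}


summary = summarize(sli_scaling)
for setting, mean_gain in summary.items():
    print(f"{setting}: {mean_gain:.1f}% average gain")

best = max(summary, key=summary.get)
print(f"best scaling: {best}")
```

On these quoted figures, DX10 with 4xAA comes out on top at a little over 83%, with the other three settings sitting in the 70% to 73% range.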

CONCLUSION

After exhaustive testing, flocks of e-mails flying back and forth, and verifying testing results with other testers, we are quite impressed with the newfound potential of SLI! Sometimes you can start a really big fire from a single spark that has been carefully nested in a bed of tinder. We hold high hopes for this little SLI spark, and hope that it finds its way into the bed of tinder and ignites like a wildfire. The spark is NVIDIA’s drivers and support, and the bed of tinder is the game developers, who only need to seek out and partner with NVIDIA to get SLI to work correctly in their games.

We would also hope that ATI will forge a partnership with the same game developers and get CrossFire running this well. This is, however, a field where the biggest and brightest takes the day. Right now the biggest and brightest is NVIDIA SLI technology. NVIDIA has functional SLI drivers that scale well when optimized right, which proves that SLI works.

NVIDIA has also proven its willingness to go above and beyond to help game developers utilize the technology correctly. It has also shown its commitment to the SLI platform and its belief in it.

This isn’t some overhyped promise or vaporware. These are real-life results in real-life games. If this scaling is improved just a little bit, and the practice of optimizing games for SLI is adopted, it’s going to be a very exciting time in gaming!

We’re proud to be a part of it, and extremely excited by it! The future of SLI looms big on the horizon and you don’t have to look any farther than your local software store and FarCry 2 to get a glimpse of it.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
