AMD is expected to release its next-generation GPUs soon. While the company has not yet disclosed full details, it announced at its 2015 Financial Analyst Day that the upcoming GPU will feature a new memory type called High Bandwidth Memory, or HBM. AMD has since shared more information about HBM.

Current high-performance GPUs such as the Radeon R9 290X, the GeForce GTX Titan, and the GeForce GTX 980 all use GDDR5. GDDR5 has been around since 2008 and has served GPUs well for about seven years. However, it is starting to show its age with new applications and technologies, such as 4K, which demand more bandwidth than GDDR5 can offer. A new technology is needed to give the GPU much-needed memory bandwidth and carry it through the next 10 years.

AMD has long been at the forefront of GPU memory technology. The company was first to adopt GDDR5, with the Radeon HD 4870, and it is again leading the pack with the next-generation memory technology known as HBM. HBM addresses two main areas where GDDR5 needs to improve: power consumption and memory bandwidth.

AMD’s own estimates show that as GPU performance has improved over time, memory power consumption has risen with it. This runs against the direction of the industry, where reducing power consumption has become a priority. Delivering the best performance is no longer enough if it cannot be done efficiently. Higher power consumption means higher heat output, so with the trend toward laptops, mobile devices, and smaller systems, performance per watt is critical.

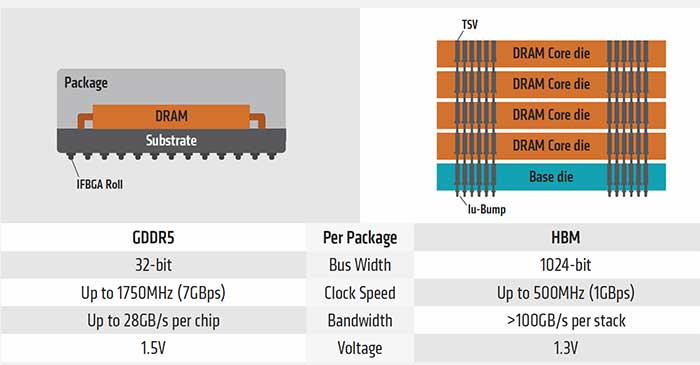

This is where HBM comes in. HBM is designed to offer the memory bandwidth needed while also reducing power consumption. It takes a different approach from GDDR5: instead of running memory at a high clock speed over a narrow bus, HBM runs at a lower clock speed over a much wider bus.

First-generation HBM runs at 1 Gbps per pin over a 1024-bit interface per stack, whereas a GDDR5 chip runs at up to 7 Gbps per pin over a 32-bit interface. Each HBM stack is built from memory dies stacked on top of each other (four in the example above), and a GPU can use several stacks in parallel, which widens the total memory bus accordingly. Four stacks give four times the bus width, or 4096 bits.
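The trade-off between clock speed and bus width can be checked with simple arithmetic: peak bandwidth is the bus width multiplied by the per-pin data rate, divided by eight to convert bits to bytes. The sketch below uses only the figures quoted above. The function name is illustrative, and the results are theoretical peaks rather than measured numbers.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s.

    bus_width_bits: number of data pins (bits moved per transfer)
    data_rate_gbps: per-pin data rate in gigabits per second
    """
    return bus_width_bits * data_rate_gbps / 8  # 8 bits per byte


# Figures quoted in the article
gddr5_32bit = peak_bandwidth_gb_s(32, 7.0)    # GDDR5: 7 Gbps on a 32-bit bus
hbm_1024bit = peak_bandwidth_gb_s(1024, 1.0)  # HBM: 1 Gbps on a 1024-bit bus
hbm_4096bit = peak_bandwidth_gb_s(4096, 1.0)  # HBM: 1 Gbps on a 4096-bit bus

print(f"GDDR5, 32-bit:  {gddr5_32bit} GB/s")   # 28.0 GB/s
print(f"HBM, 1024-bit:  {hbm_1024bit} GB/s")   # 128.0 GB/s
print(f"HBM, 4096-bit:  {hbm_4096bit} GB/s")   # 512.0 GB/s
```

Even at one seventh of the per-pin data rate, the 1024-bit interface moves more than four times as much data per second as a single 32-bit GDDR5 interface, which is why the wider, slower design comes out ahead.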

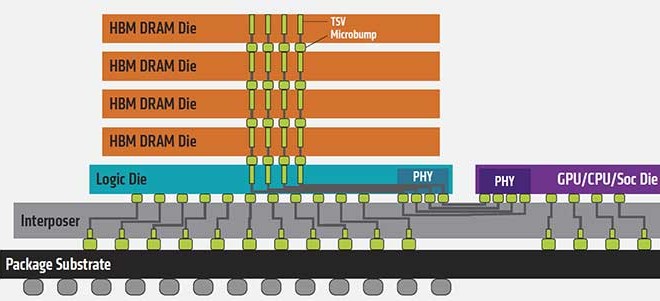

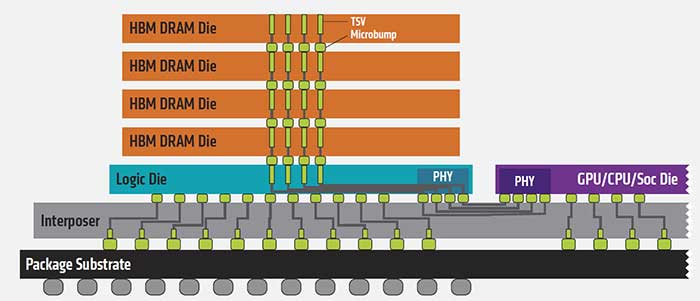

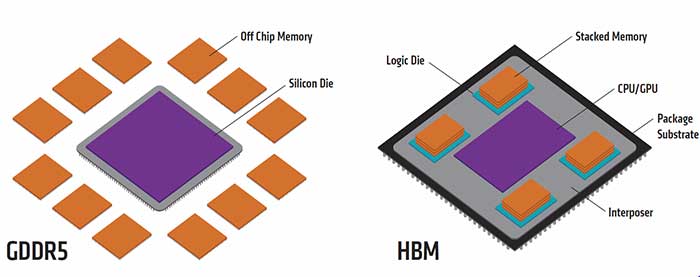

HBM achieves such high memory performance by placing the memory chips very close to the GPU (or CPU) and stacking them on top of each other, as opposed to spreading them out across the PCB. The vertically stacked memory chips are interconnected by microscopic vertical connections called “through-silicon vias” (TSVs) and by microbumps (“ubumps”). The GPU or CPU communicates with the memory chips through an interposer. TSVs and microbumps are also used to connect the SoC/GPU to the interposer.

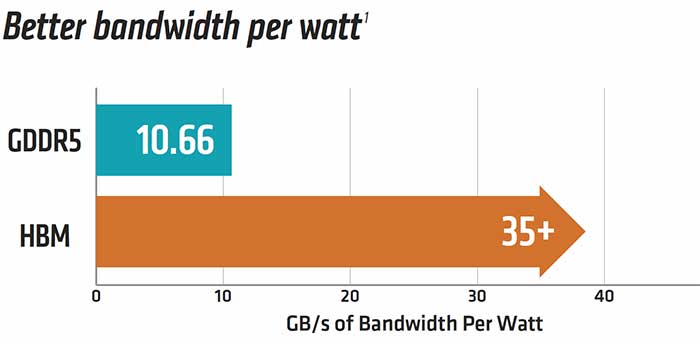

Placing the memory chips and the GPU/CPU close together shortens the path that signals must travel between them. This, in turn, helps to reduce power and minimize latency. HBM is able to achieve four times the memory bandwidth of GDDR5 at 1.3 V instead of the 1.5 V that the latter requires. This works out to about three times the memory bandwidth per watt.

The HBM design also reduces the overall surface area needed. According to AMD, 1 GB of HBM takes 94% less surface area than the same amount of GDDR5. This means that future graphics cards can be even smaller, making them a better fit for micro-ATX gaming systems and laptops.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
