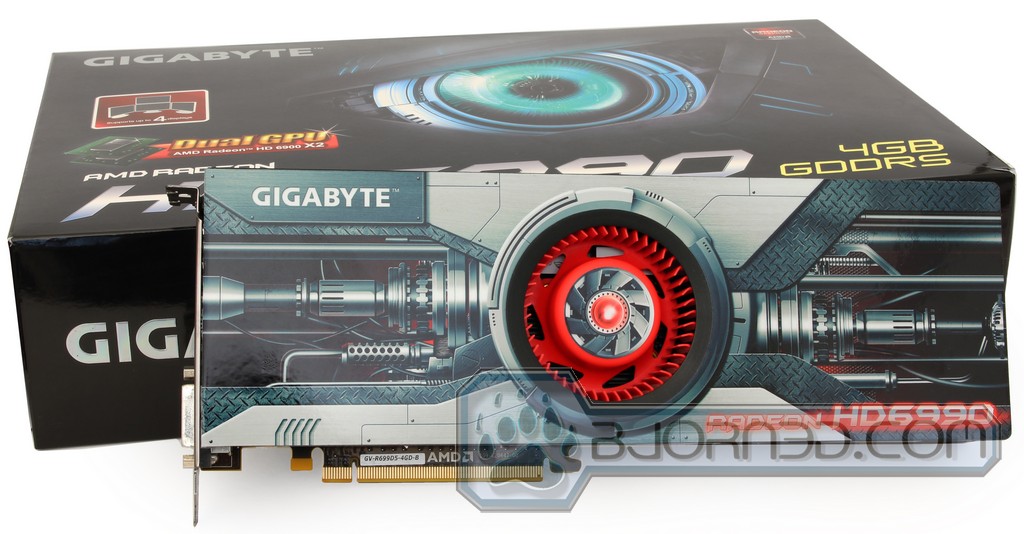

The GIGABYTE HD 6990 is a dual GPU beast that can take on any job. We’ve pushed this card to the edge, testing it on an Eyefinity setup. Keep reading to find out what this powerhouse can do.

Introduction

On March 8, 2011, AMD launched its new dual-GPU graphics card, the Radeon HD 6990, codenamed Antilles. Although these cards were originally expected in late 2010, the timing of the actual release could hardly have been better: it landed within a few weeks of Nvidia’s GTX 590, which hit the market on March 24, 2011. Since then, there has been a lot of discussion as to which of these dual-GPU beasts is superior. Both sit in the same price range at about $700, and both are luxury high-end cards capable of running the newest game releases at full settings. So the only real justification for purchasing a GTX 590 or an HD 6990 is to explore gaming in full with features like Eyefinity or Nvidia Surround, which spread the game across multiple monitors for a surround experience.

Previously, we had the honor of reviewing both AMD Radeon HD 6990 and Nvidia GTX 590, which demonstrated outstanding performance.

Today we finally get a chance to look at a product from a renowned vendor: GIGABYTE. The GIGABYTE GV-R699D5-4GD-B Radeon HD 6990 is priced at $739.99 on Newegg.com and follows the standard design previously seen in the AMD HD 6990 reference card. Equipped with two fully optimized Cayman GPUs, this card is made for Eyefinity. In addition, this card has 4 GB of memory, which can be fully utilized when combined with a second HD 6990 in CrossFire.

Antilles Overview

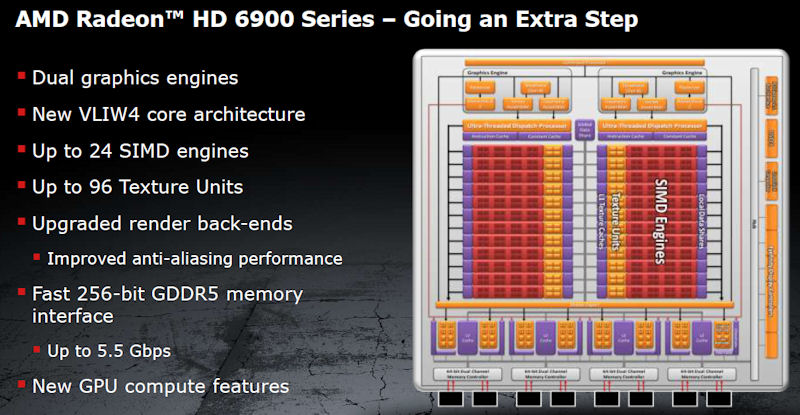

Antilles is the new GPU architecture for the AMD HD 6990 video cards. It is two AMD Cayman GPUs (HD 6970) combined on a single PCB to form a single very powerful graphics card. The Cayman GPUs on the HD6990 provide high performance.

On the Antilles architecture, the thread processors use the simplified VLIW4 design, and the total number of SIMD engines per GPU has been raised to 24. Each SIMD engine has a total of 64 ALUs: 16 thread processors with four ALUs each. This also includes four texture units, which have 512KB of L2 texture cache, and a 64KB cache for the local data share. So if we do a little math, we can figure out that two of these fully utilized Cayman GPUs provide a total of 3072 shaders and 192 TMUs (Texture Mapping Units). This is a high number of shaders, and should provide plenty of performance for high-end gaming. The interesting part is that while the Cayman GPU architecture uses fewer shader processors than the previous Cypress architecture, the Cayman GPUs have additional SIMD engines. This provides for more efficient rendering.
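The "little math" above can be written out as a short sketch. The constants are the per-GPU figures quoted in the paragraph; the function and variable names are ours and purely illustrative.

```python
# Per-GPU figures quoted in the paragraph above.
SIMD_ENGINES_PER_GPU = 24
THREAD_PROCESSORS_PER_SIMD = 16
ALUS_PER_THREAD_PROCESSOR = 4  # VLIW4: four ALUs per thread processor
TEXTURE_UNITS_PER_SIMD = 4
GPUS_ON_CARD = 2  # the HD 6990 carries two Cayman GPUs


def card_totals(gpus=GPUS_ON_CARD):
    """Return (shaders, texture_units) for a card with `gpus` Cayman GPUs."""
    alus_per_simd = THREAD_PROCESSORS_PER_SIMD * ALUS_PER_THREAD_PROCESSOR  # 64
    shaders = gpus * SIMD_ENGINES_PER_GPU * alus_per_simd
    texture_units = gpus * SIMD_ENGINES_PER_GPU * TEXTURE_UNITS_PER_SIMD
    return shaders, texture_units


shaders, texture_units = card_totals()
print(shaders, texture_units)  # 3072 192
```

Calling `card_totals(1)` gives the single-GPU figures (1536 shaders, 96 texture units), which match the HD6970.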

One nice improvement on the Antilles architecture is that the HD 6990 uses an ultra-low-latency 8647 chip to provide a faster data rate through 48 PCI-E lanes. This will help reduce performance bottlenecks that some might have seen on previous-generation cards. Also, with a faster interconnect between the two Cayman GPUs, the performance reaches up to 7.92 Gbps.

With both major and minor improvements on GPU architecture, and ultra low latency switches, the Antilles architecture should provide fantastic performance for those looking into getting a single video card that can max out even today’s latest video games.

SPECIFICATIONS

Let’s compare the HD6990 with the previous cards in the HD6xxx series.

The HD6990 uses the same VLIW4 core architecture as the HD6970 and the HD6950. This is an improved design that allows better utilization than the previous VLIW5 design. Some of the advantages over the previous design are a 10% improvement in performance per square millimeter, simplified scheduling and register management, and extensive logic re-use.

| | HD6990 | HD6990 OC | HD6970 | HD6950 |
|---|---|---|---|---|
| Process | 40 nm | 40 nm | 40 nm | 40 nm |
| Engine clock | 830 MHz | 880 MHz | 880 MHz | 800 MHz |
| Stream Processors | 3072 | 3072 | 1536 | 1408 |
| Compute Performance | 5.1 TFLOPs | 5.4 TFLOPs | 2.7 TFLOPs | 2.25 TFLOPs |
| Texture Units | 192 | 192 | 96 | 88 |
| Tex. Fillrate | 159.4 GTex/s | 169 GTex/s | 84.5 GTex/s | 70.4 GTex/s |
| Memory | 4 GB GDDR5 | 4 GB GDDR5 | GDDR5 | GDDR5 |
| Memory clock | 1250 MHz | 1250 MHz | 1375 MHz | 1250 MHz |
| PowerTune Max Power | 375W | 450W | 250W | 200W |
| Typical Gaming Power | 350W | 415W | 190W | 140W |
| Typical Idle Power | 37W | 37W | 20W | 20W |

The HD6990 has a default core-clock of 830 MHz, putting it between the HD6950 and the HD6970. However, it also has an enhanced performance BIOS that puts its clockspeed at 880 MHz, the same as the HD6970. The memory is clocked at 1250 MHz, same as the HD6950. The card has 4 GB GDDR5 memory (2 GB for each core). AMD is not planning a 2 GB version at this time.

In fact, if we look closely at the specifications, we can see that in most respects this card has roughly twice what is found on either the HD6950 or the HD6970. The HD6990 has 3072 stream processors compared to 1536 on the HD6970; it has 192 texture units compared to the HD6970’s 96 (notice a pattern here?); and the texture fillrate is either 159.4 GTex/s or 169 GTex/s depending on the BIOS setting, about double that of the HD6950 and HD6970. This also extends to the power consumption: the typical idle power sits at around 37W (as compared to 20W for the HD6950 and HD6970), while the typical load power during gaming is around 350-415W (as compared to 140-190W for the HD6950 and HD6970).
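For readers who want to check the table, the compute and fillrate rows can be reproduced from the unit counts and clocks. This is a sketch under two assumptions of ours, not something stated in AMD’s material: each stream processor is counted as 2 floating-point operations per clock (one multiply-add), and each texture unit as one texel per clock. The function names are illustrative.

```python
def peak_tflops(stream_processors, clock_mhz, flops_per_clock=2):
    """Peak single-precision throughput in TFLOPs.

    Assumes 2 FLOPs per stream processor per clock (one multiply-add).
    """
    return stream_processors * flops_per_clock * clock_mhz * 1e6 / 1e12


def peak_gtexels(texture_units, clock_mhz):
    """Peak texture fillrate in GTex/s, assuming one texel per unit per clock."""
    return texture_units * clock_mhz * 1e6 / 1e9


# (stream processors, texture units, engine clock in MHz) from the table above
cards = {
    "HD6990": (3072, 192, 830),
    "HD6990 OC": (3072, 192, 880),
    "HD6970": (1536, 96, 880),
    "HD6950": (1408, 88, 800),
}

for name, (sps, tmus, mhz) in cards.items():
    print(f"{name}: {peak_tflops(sps, mhz):.2f} TFLOPs, "
          f"{peak_gtexels(tmus, mhz):.1f} GTex/s")
```

Under these assumptions the results round to the figures in the table, which is why the dual-GPU card lands at almost exactly twice the HD6970 when both run at 880 MHz.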

GIGABYTE HD 6990 Specifications

| Specification | Value |
|---|---|
| Chipset | Radeon HD 6990 |
| Core Clock | 830 MHz |
| Shader Clock | N/A |
| Memory Clock | 5000 MHz |
| Stream Processors | 3072 |
| Memory Size | 4 GB |
| Memory Bus | 256 bit |
| Card Bus | PCI-E 2.1 |
| Memory Type | GDDR5 |
| DirectX | 11 |
| OpenGL | 4.1 |
| PCB Form | ATX |
| I/O | 4x mini DisplayPort, 1x DVI-I |
| Digital max resolution | 2560 x 1600 |
| Analog max resolution | 2048 x 1536 |
| Multi-view | 5 |
| Tools | N/A |
| Card size | H38 mm x L318 mm x W126 mm |
| Power requirement | 750W (two 150W 8-pin PCI Express® connectors recommended) |

FEATURES

The HD6990 has the same features as the other HD6xxx cards, plus a trick or two up its sleeve.

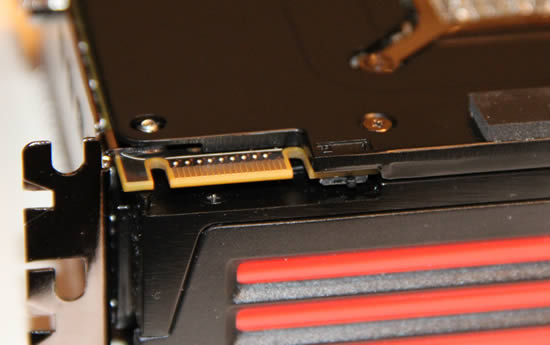

Dual-BIOS with factory overclocking

One cool feature introduced on the HD6950/HD6970 cards is the use of a dual-BIOS on the card. While the BIOS switch on the HD6950/HD6970 cards was used to select a backup BIOS chip, AMD uses the dual-BIOS in a different way on the HD6990. The read-only BIOS is still a backup and runs at 830 MHz with a voltage of 1.12V, which is the stock speed for this card. However, when the switch is changed to the second setting, the card overclocks to 880 MHz and the voltage increases to 1.175V. The BIOS switch is covered with a label advising users to first read the manual.

For those wondering why AMD doesn’t use the overclocked setting as default, part of the problem could be that overclocked settings use a lot of power, which pushes the limit of what the PCI-Express specifications permit.

For those who want to overclock the card even further, AMD has increased the maximum available in Overdrive to 1200 MHz for the GPU cores.

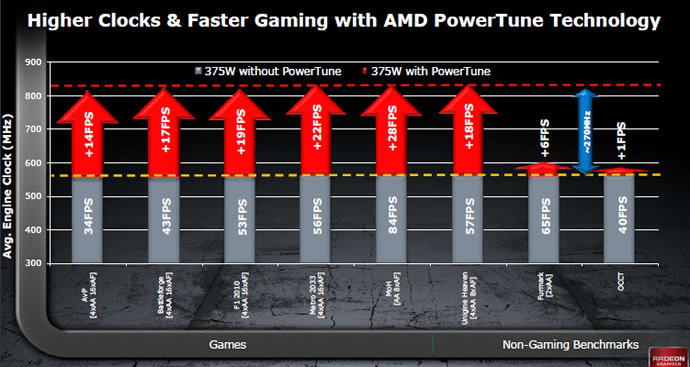

PowerTune

PowerTune was introduced with the HD6950/HD6970, and is an interesting technology that tries to get the most out of the cards while still staying under TDP. PowerTune dynamically adjusts the clock frequency so that the power usage never exceeds the TDP limit. This allows the card to automatically fine-tune its settings depending on the kind of application a user is running.

Manufacturers who build a card without any kind of power saving technology must design it to cope with a situation where it draws the highest amount of power possible. A few examples of this situation are “worst-case” programs like Furmark or OCCT, which are designed to load the GPU completely. However, the simple fact is that the GPU is rarely (if ever) at full load in games. With PowerTune, the GPU can be clocked at a much higher frequency; in the rare instances that the GPU is loaded so much that it consumes too much power (breaking the TDP limit), PowerTune can downclock the GPU (or GPUs) until the whole card is within the TDP limit. AMD says that without PowerTune, the HD6990 would never have been able to run at 830/880 MHz, instead having to settle for around 600 MHz. While PowerTune might throttle the GPUs down a bit when running Furmark or OCCT, this benefits games, as the card can be clocked much higher.
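To make the idea concrete, here is a toy model of that behaviour. It is not AMD’s algorithm: it assumes power scales linearly with clock speed for a given workload, and the function name, step size, clock floor, and the 450W stress-test draw are all hypothetical values chosen for illustration. Only the 830 MHz clock, the 350W typical gaming power, and the 375W limit come from the specifications table.

```python
def throttle_clock(clock_mhz, power_at_clock_w, tdp_w, step_mhz=10, min_mhz=500):
    """Toy PowerTune-style throttle (illustrative only, not AMD's algorithm).

    Steps the clock down until the estimated power fits under tdp_w.
    Assumes power scales linearly with clock for a fixed workload.
    """
    watts_per_mhz = power_at_clock_w / clock_mhz
    while clock_mhz > min_mhz and watts_per_mhz * clock_mhz > tdp_w:
        clock_mhz -= step_mhz
    return clock_mhz


# A typical game: 350W at 830 MHz fits under the 375W limit, so no throttling.
print(throttle_clock(830, 350, 375))  # 830

# A hypothetical stress test drawing 450W at 830 MHz gets clocked down.
print(throttle_clock(830, 450, 375))  # 690
```

The point of the sketch is the asymmetry: games keep the full clock, while only the worst-case load pays the penalty.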

Updated Eyefinity

The HD6990 supports the new Portrait 5×1 mode that was introduced in the Catalyst drivers in late 2010.

With the right monitors, this setup should be one of the best uses of Eyefinity, as it allows users to get a much better aspect ratio when using 5 monitors in vertical orientation.

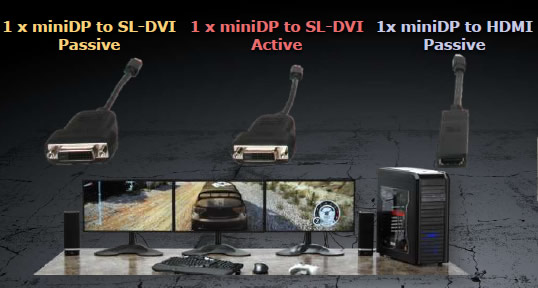

One problem for AMD with Eyefinity is that it requires at least one monitor with DisplayPort. Even though the prices for DisplayPort monitors are coming down, they still are more expensive than regular HDMI/DVI monitors. AMD has decided to get around this by including an active DVI to DisplayPort adapter with every HD6990 card. This means users will be able to set up a 3-monitor Eyefinity setup using 3 DVI monitors. This will drastically decrease the entry-price for Eyefinity.

EQAA and MLAA

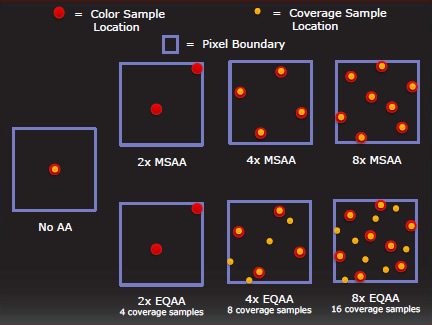

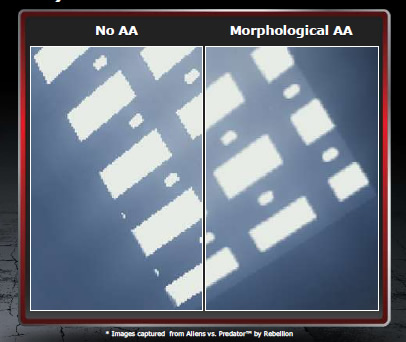

The HD6990 also supports the new anti-aliasing methods called Enhanced Quality Anti-aliasing (EQAA) and Morphological Anti-aliasing (MLAA).

EQAA is a new set of MSAA modes with up to 16 coverage samples per pixel. It has custom sample patterns and filters, and according to AMD, offers better quality at the same memory footprint. It is compatible with adaptive AA, Super-sampling AA and Morphological AA.

Morphological AA is a post-process filtering technique accelerated with DirectCompute. It delivers full-scene anti-aliasing, meaning it is not limited to polygon edges and alpha-tested surfaces. Performance is similar to edge-detect CFAA, though morphological AA applies to all edges. It can even be used on still images. Games do not need AA-support to use MLAA, and it can be turned on in the Catalyst Control Center.

Due to logistical restrictions, we could not more closely examine these different AA techniques and other image optimizations, but we hope to revisit this and include comparisons to Nvidia’s cards. As far as we can see in our testing, the image quality still is great.

3D

While AMD is not pushing 3D as much as Nvidia, they still do support 3D gaming. Instead of doing it with their own closed technique, they are supporting open standards, and many games can be played in 3D using a middleware program like DDD. During their presentation of the HD6990, AMD discussed 2 upcoming games: Dragon Age 2 and Deus Ex: Human Revolution. Both games will support 3D with AMD’s cards. Dragon Age 2 uses DDD to support AMD HD3D, and Deus Ex will actually have native support for HD3D. At this time we do not know much more, but it will be interesting to see how well they play in 3D on AMD’s hardware compared to Nvidia’s more mature 3D technology.

—————————————————————————————————————————

■Enjoy beautifully rich and clear video playback when streaming from the web

■Take in your favorite movies in stunning, stutter-free HD quality

■Run multiple applications smoothly at maximum speed

■Enjoy lightning fast game play and realistic physics effects

Pictures & Impression

Testing & Methodology

The OS we use is Windows 7 Pro SP1 64-bit with all patches and updates applied. We also use the latest drivers available for the motherboard and any devices attached to the computer. With four exceptions, we do not disable background tasks or tweak the OS or system in any way: we turn off drive indexing and daily defragging, and we also turn off Prefetch and Superfetch. This is not an attempt to produce bigger benchmark numbers. Drive indexing and defragging can interfere with testing and produce confusing numbers. If a test were to be run while a drive was being indexed or defragged, and then the same test was later run when these processes were off, the two results would be contradictory and erroneous. As we cannot control when defragging and indexing occur precisely enough to guarantee that they won’t interfere with testing, we opt to disable the features entirely.

Prefetch tries to predict what users will load the next time they boot the machine by caching the relevant files and storing them for later use. We want to learn how the program runs without any of the files being cached, and disabling Prefetch means we do not have to clear its cache before each test run to get accurate numbers. Lastly, we disable Superfetch. Superfetch loads often-used programs into memory. It is one of the reasons that Windows Vista occupies so much memory: Vista fills the memory in an attempt to predict what users will load. Having one test run with files cached and another test run with the files uncached would result in inaccurate numbers. Again, since we can’t control its timing so precisely, we turn it off. Because these four features can potentially interfere with benchmarking and are out of our control, we disable them. We do not disable anything else.

We ran each test a total of 3 times and reported the average of the three scores. Benchmark screenshots are of the median result. Anomalous results were discounted and the benchmarks were rerun.
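The reporting rule is simple enough to sketch in a few lines. The function name and the sample scores below are hypothetical and only illustrate the procedure: the mean of three runs is reported, and the screenshot comes from the median run.

```python
from statistics import mean, median


def summarize_runs(scores):
    """Summarize three benchmark runs: mean is reported, median run is screenshotted."""
    if len(scores) != 3:
        raise ValueError("expected exactly three runs")
    return {"reported": mean(scores), "screenshot": median(scores)}


# Hypothetical frame rates from three runs of the same benchmark.
print(summarize_runs([60, 62, 61]))
```

Using the median run for the screenshot means the image always corresponds to an actual run rather than to a computed average.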

Please note that due to new driver releases with performance improvements, we rebenched every card shown in the results section. The results here will be different than previous reviews due to the performance increases in drivers.

Comparison Testing

While we previously tested both the HD 6990 and the GTX 590 on a P67 setup, the GIGABYTE HD 6990 was tested on an X58 chipset with GIGABYTE’s own G1 Sniper motherboard, in order to see whether there is a performance difference between the two chipsets and to verify the card’s true potential at 880 MHz. All of the testing was performed with the 880 MHz BIOS setting.

Acoustics Testing

When we test acoustics for each video card in our system, we minimize ambient environment noise by running each test after 2AM. This keeps out ambient noise from cars and other outside sources. Usually all electronics and other hardware are off at night as well, so it’s the best possible time to perform acoustic testing. We also try to minimize noise by using low RPM fans. Since the dB(A) sound meter only records the highest noise coming from the system, as long as the other fans in the system are quieter than the video card, the extra minimal noise level should not be a problem in our testing. We set up the sound meter on a small tripod exactly 9 inches away from the video card, making sure that the sound meter points at the fan of the video card. Then we run the system and record idle fan noise when just running Windows. To record the highest noise coming from the fan, we run Unigine Heaven 2.1 for 15 minutes before we take a maximum reading.

We used an Extech Instruments 407730 Sound Meter on a monkey rubber tripod.

Temperature and Power Consumption Testing

To measure the temperature of the video card, we used MSI Afterburner and ran Metro 2033 for 10 minutes to find the Load temperatures for the video cards. The highest temperature was recorded. After playing for 10 minutes, Metro 2033 was turned off and we let the computer sit at the desktop for another 10 minutes before we measured the idle temperatures.

To get our power consumption numbers, we plugged in our Kill A Watt power measurement device and left the system at the desktop for about 15 minutes before taking the idle reading, at the same time as our temperature readings. Then we ran Metro 2033 for a few minutes and recorded the highest power consumption.

Test Rig

| Test Setup | |
|---|---|
| Case | Silverstone Temjin TJ10 |
| CPU | Intel Core i7 2600K @ 4.8GHz |
| Motherboard | ASUS P8P67 WS Revolution |
| Ram | Patriot Gamer 2 Series 1600 MHz Dual-Channel 16GB (4x4GB) Memory Kit |
| CPU Cooler | Heatblocker Rev 3.0 LGA 1156 CPU Waterblock; Thermochill 240 Radiator |
| Hard Drives | 4x Seagate Cheetah 600GB 10K 6Gb/s Hard Drives; 2x Western Digital RE3 1TB 7200RPM 3Gb/s Hard Drives |
| SSD | 1x Zalman SSD0128N1 128GB SandForce SSD |
| Optical | ASUS DVD-Burner |
| GPU | Nvidia GeForce GTX 590 (Dual-GPU Video Card); GIGABYTE Radeon HD6990 (Dual-GPU Video Card); 2x Nvidia GeForce GTX580 in 2-Way SLI; Nvidia GeForce GTX 580 1536MB; ASUS ENGTX580 1536MB; Nvidia GeForce GTX 570 1536MB; Nvidia GeForce GTX 560 Ti; GIGABYTE GeForce GTX 480 SOC 1536MB; Galaxy GeForce GTX 480 1536MB; 2x Palit GeForce GTX460 Sonic Platinum 1GB in 2-Way SLI; Palit GeForce GTX460 Sonic Platinum 1GB; ASUS Radeon HD6870; AMD Radeon HD5870 |
| Case Fans | 1x Quiet Zalman Shark’s Fin ZM-SF3 120mm Fan – Top; 1x Silverstone 120mm fan – Front; 1x Quiet Zalman ZM-F3 FDB 120mm Fan – Hard Drive Compartment |
| Additional Cards | LSI 3ware SATA + SAS 9750-8i 6Gb/s RAID Card |
| PSU | Sapphire PURE 1250W Modular Power Supply |
| Mouse | Razer Mamba |
| Keyboard | Logitech G15 |

Synthetic Benchmarks & Games

We will use the following applications to benchmark the performance of the GIGABYTE Radeon HD 6990 video card.

| Synthetic Benchmarks & Games |
|---|
| 3DMark Vantage |
| 3DMark 11 |
| Metro 2033 |
| Lost Planet 2 |
| Civilization V |
| Dirt 2 |
| HAWX 2 |
| Crysis Warhead |
| Just Cause 2 |
| Unigine Heaven 2.1 |

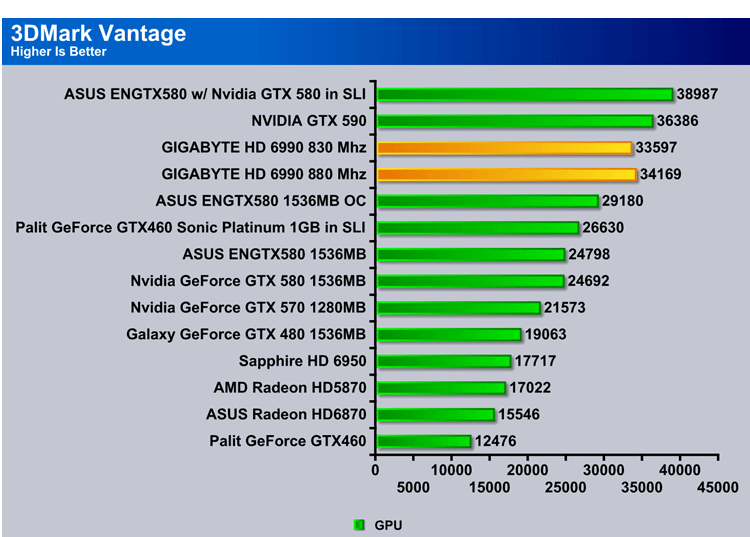

3DMark Vantage

The newest video benchmark from the gang at Futuremark. This utility is still a synthetic benchmark, but one that more closely reflects real world gaming performance. While it is not a perfect replacement for actual game benchmarks, it has its uses. We tested our cards at the ‘Performance’ setting.

Out of the entire testing suite, 3DMark Vantage is an excellent benchmark to start with, since its run-to-run error is rather minimal, allowing us to see how the GIGABYTE HD6990 fares against other cards in DX10. Considering that AMD cards generally perform well in DX10, we predicted that the HD 6990 would surpass its competition, the Nvidia GTX 590. However, to our surprise, the GTX 590 takes a lead over the GIGABYTE HD 6990 of over 1500 points, a margin that is definitely worth noticing.

3DMark 11

“3DMark 11 is the latest version of the world’s most popular benchmark for measuring the graphics performance of gaming PCs. Designed for testing DirectX 11 hardware running on Windows 7 and Windows Vista the benchmark includes six all new benchmark tests that make extensive use of all the new features in DirectX 11 including tessellation, compute shaders and multi-threading. After running the tests 3DMark gives your system a score with larger numbers indicating better performance. Trusted by gamers worldwide to give accurate and unbiased results, 3DMark 11 is the best way to test DirectX 11 under game-like loads.”

Unlike its DX10 predecessor, 3DMark 11 is a real stress test for the newer cards, throwing a plethora of variables at them, like volumetric lighting, polygonal tessellation and volumetric shadow refraction. We expected the GTX 590 to win this test by a significant margin, due to the presence of 32 raster engines that specialize in polygonal tessellation. However, quite the opposite is true, and the GIGABYTE HD 6990 outperforms the Nvidia card in all but the physics test.

We reran 3DMark 11 in Extreme mode to witness the true potential of both dual-GPU cards. Here the GIGABYTE HD 6990 overtakes the Nvidia GTX 590 completely. In addition to the previously observed lead in graphics, including polygonal tessellation and texture calculations, the GIGABYTE HD 6990 evens things out in the physics test.

Unigine Heaven 2.1

Unigine Heaven is a benchmark program based on Unigine Corp’s latest engine, Unigine. The engine features DirectX 11, Hardware tessellation, DirectCompute, and Shader Model 5.0. All of these new technologies combined with the ability to run each card through the same exact test means this benchmark should be in our arsenal for a long time.

While the two cards demonstrate comparable performance at lower resolutions, at the standard 1080p resolution the difference in performance becomes more apparent. The GIGABYTE HD6990 with the extreme BIOS demonstrates a 14 FPS increase over the Nvidia GTX 590. However, under extreme tessellation conditions the Nvidia GTX590 provides slightly higher performance, suggesting that the presence of additional raster engines plays a role.

CRYSIS WARHEAD

Crysis Warhead is the much anticipated standalone expansion to Crysis, featuring an updated CryENGINE™ 2 with better optimization. It was one of the most anticipated titles of 2008.

Just Cause 2

“Just Cause 2 is an open world action-adventure video game. It was released in North America on March 23, 2010, by Swedish developer Avalanche Studios and Eidos Interactive, and was published by Square Enix. It is the sequel to the 2006 video game Just Cause.

Just Cause 2 employs the Avalanche Engine 2.0, an updated version of the engine used in Just Cause. The game is set on the other side of the world from the original Just Cause, on the fictional island of Panau in Southeast Asia. Panau has varied terrain, from desert to alpine to rainforest. Rico Rodriguez returns as the protagonist, aiming to overthrow the evil dictator Pandak “Baby” Panay and confront his former mentor, Tom Sheldon.”

In the case of Just Cause 2 the software is not nearly as optimized and we do see a difference in performance, although it is not that significant. Overall, the GIGABYTE HD 6990 performs slightly better at lower resolutions, while Nvidia GTX 590 seems to outperform HD 6990 at higher resolution.

Lost Planet 2

“Lost Planet 2 is a third-person shooter video game developed and published by Capcom. The game is the sequel to Lost Planet: Extreme Condition, taking place ten years after the events of the first game, on the same fictional planet.”

Lost Planet 2 is an excellent game for testing DirectX 11. It makes extensive use of bloom and depth-of-field effects and will put the raster engines of the Nvidia GTX 590 to the test. As the results show, the Nvidia GTX 590 demonstrates higher performance, which is fairly expected considering that the level of polygonal tessellation and OpenCL expression is rather high, while DirectCompute clearly seems to lag behind.

Metro 2033

Metro 2033 is an action-oriented video game blending survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky. It was developed by 4A Games in Ukraine and released in March 2010 for the Xbox 360 and Microsoft Windows. In March 2009, 4A Games announced a partnership with Glukhovsky to collaborate on the game. The game was announced a few months later at the 2009 Games Convention in Leipzig; a first trailer came along with the announcement. When the game was announced, it had the subtitle “The Last Refuge,” but this subtitle is no longer being used.

Metro 2033 results demonstrate yet another back-and-forth between GIGABYTE HD 6990 and NVIDIA GTX 590. The results seem to be a reversal of what was observed in Just Cause 2. In this case GTX 590 seems to perform better at lower resolution, while at higher resolution GIGABYTE HD 6990 takes over. This makes sense considering that testing was done with 4xAA in the game.

HAWX 2

Tom Clancy’s H.A.W.X. 2 plunges fans into an explosive environment where they can become elite aerial soldiers in control of the world’s most technologically advanced aircraft. The game will appeal to a wide array of gamers as players will have the chance to control exceptional pilots trained to use cutting edge technology in amazing aerial warfare missions.

Developed by Ubisoft, H.A.W.X. 2 challenges you to become an elite aerial soldier in control of the world’s most technologically advanced aircraft. The aerial warfare missions enable you to take to the skies using cutting edge technology.

While the HAWX 2 benchmark is not the best example of performance, considering that the cards are able to run through it at a really high frame rate, resulting in potentially high error, Nvidia graphics cards are renowned for shining in this benchmark. As expected, the Nvidia GTX 590 demonstrates a 30 fps increase over the GIGABYTE HD 6990 with the extreme BIOS, which is a noticeable 20% difference.

Eyefinity

As mentioned in our Features & Specifications section, Eyefinity is a neat feature that allows users to fully utilize this card, and we decided to try a 3 monitor setup on the GIGABYTE HD 6990. The most distinct feature that separates Eyefinity from Nvidia Surround is that the monitor requirements are restricted to only a resolution and/or refresh frequency. Therefore, the user can use 3-5 monitors of various brands, as long as they are able to support the same maximum resolution and have the same refresh rate of either 60 or 120 Hz. In addition, the provided Eyefinity compatible adapter kit will come in handy. When plugging in more than 2 monitors the user must make sure that all of the other adapters go through mini-DP port and are active.

Overall, there is a drop in performance between 5760×1080 and 1920×1080 resolutions, as would be expected, since the card has to process three times as many pixels as with a single monitor. However, the performance drop varies and is not 66% as one would hypothetically imagine. On average, a user should expect a 35-50% drop in performance dependent upon the level of tessellation. In this case, all of the games were run on maximum settings, and show a fair objective fps for those that wish to play their games on maximum. However, some of the newer releases will not be able to run totally smoothly at absolutely maximum settings. For example, Metro 2033 is a very demanding game, and with 4xAA, will drop fps below the desired 30 fps, resulting in a poor gaming experience. In that case, sacrificing antialiasing should bring the fps back to the playable level. On the other hand, for the majority of games, the FPS don’t seem to be a problem, and this suggests that a 5 monitor setup is a viable option.
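The "66%" figure comes from a simple pixel count, sketched below. The naive model assumes frame rate is inversely proportional to the number of pixels rendered, which is our simplification; as the measured 35-50% drop shows, real games do not scale that badly. The function names are illustrative.

```python
def pixels(width, height):
    """Number of pixels rendered per frame at a given resolution."""
    return width * height


def ideal_fps_drop(single, multi):
    """Fractional fps drop if frame rate were inversely proportional to pixel count."""
    return 1 - pixels(*single) / pixels(*multi)


single = (1920, 1080)
eyefinity = (5760, 1080)

print(pixels(*eyefinity) // pixels(*single))         # 3
print(f"{ideal_fps_drop(single, eyefinity):.1%}")    # 66.7%
```

The gap between this naive figure and the measured drop indicates that not all of the frame time scales with resolution.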

NOISE LEVEL TEST

When we test acoustics for each video card in our system, we minimize ambient environment noise by running each test after 2AM. This prevents any ambient noise from outside due to cars and other noise. Usually all electronics and other hardware are off at night as well, so it’s the best possible time to perform acoustic testing. We also try to minimize noise by using low RPM fans. Since the dB(A) sound meter only records the highest noise coming from the system, as long as the other fans in the system are quieter than the video card, the extra minimal noise level should not be a problem in our testing. We set up the sound meter on a small tripod exactly 12 inches away from the video card, making sure that the sound meter pointed at the fan of the video card. Then we ran the system and recorded idle fan noise in Windows. To record the highest noise coming from the fan, we ran Unigine Heaven 2.1 for 15 minutes before taking the maximum reading.

We used an Extech Instruments 407730 Sound Meter on a monkey rubber tripod.

Acoustic Levels (Fan Noise)

| Video Card | Idle – dB(A) | Load – dB(A) |
|---|---|---|
| GIGABYTE Radeon HD 6990 | 45.4 | 58.6 |
| NVIDIA GeForce GTX 590 | 45.7 | 48.5 |

From the table above, we can tell that under load the GIGABYTE Radeon HD 6990 is about twice as loud as the Nvidia GTX 590, because every 10 dB(A) increase is perceived as twice as loud by our ears. Here we have a quick video which can provide an idea of how loud each card is:

The video backs up our noise level testing; however, we could not include the 100% fan speed noise for the HD 6990 because of editing issues. We have never heard any card as loud as the HD 6990: it measured 73 dB(A) at 100% fan speed, as compared to the GTX 590’s 57 dB(A).
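The "twice as loud" rule of thumb can be applied to the measured figures directly. The sketch below simply doubles the perceived loudness for every 10 dB(A) of difference, as described above; it is a rough perceptual approximation rather than an exact psychoacoustic model, and the function name is ours.

```python
def loudness_ratio(louder_dba, quieter_dba):
    """Perceived loudness ratio, doubling for every 10 dB(A) of difference."""
    return 2 ** ((louder_dba - quieter_dba) / 10)


# Load readings from the table: HD 6990 vs GTX 590.
print(round(loudness_ratio(58.6, 48.5), 2))  # 2.01

# 100% fan speed readings: HD 6990 vs GTX 590.
print(round(loudness_ratio(73, 57), 2))      # 3.03
```

By this estimate the HD 6990 sounds about twice as loud under load and roughly three times as loud with both fans forced to 100%.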

Overclocking the GIGABYTE 6990

Overclocking the GIGABYTE HD 6990 is made nice and easy for any user because the HD 6990 is equipped with two distinctly different BIOS profiles. The standard BIOS, or BIOS profile 1, is a downclocked version of the Cayman GPU with the core clock at 830 MHz, resulting in per-GPU performance lower than that of the HD 6970. The extreme BIOS profile, on the other hand, is a full 880 MHz core clock profile that releases the card’s true potential. The difference between the two settings is apparent in the change in pixel and texture fillrate. However, with the increase in performance there is a price to pay in terms of heat and power consumption, which is listed below.

These two settings have been used extensively in our testing in order to understand the difference between the two profiles (refer to the results section). Adjusting voltages with other software is not currently possible and is not recommended, considering that dual-GPU cards usually don’t do well under higher voltages and are highly prone to overheating.

TEMPERATURES

To measure the temperature of the video card, we used MSI Afterburner and ran Metro 2033 for 10 minutes to find the Load temperatures for the video cards. The highest temperature was recorded. After playing for 10 minutes, Metro 2033 was turned off and we let the computer sit at the desktop for another 10 minutes before we measured the idle temperatures.

| Video Cards – Temperatures – Ambient 23C | Idle | Load (Fan Speed) |
|---|---|---|
| ASUS GeForce GTX 580 | 38C | 73C (66%) |
| NVIDIA GeForce GTX 590 (GPU 1 / GPU 2) | 40C / 42C | 85C / 85C |
| GIGABYTE Radeon HD 6990 830 MHz (GPU 1 / GPU 2) (50% fan speed) | 39C / 44C | 74C / 72C |
| GIGABYTE Radeon HD 6990 880 MHz (GPU 1 / GPU 2) (50% fan speed) | 41C / 47C | 79C / 76C |

In terms of temperature, the GIGABYTE Radeon HD 6990 performs rather well, considering that it is a dual-GPU card. However, the idle and load temperatures are subject to change depending upon a multitude of variables, including case ventilation and fan speed. The GIGABYTE HD 6990 is equipped with an extremely powerful fan that is able to keep the card in the low 60C area at 100% fan speed. The price to pay is the noise level. If the user wishes to sacrifice some of the cooling for quieter operation, fan speeds of 33% are recommended and will allow the card to run smoothly for at least a few hours under load.

POWER CONSUMPTION

To get our power consumption numbers, we plugged in our Kill A Watt power measurement device and left the system at the desktop for about 15 minutes before taking the idle reading, at the same time as our temperature readings. Then we ran Metro 2033 for a few minutes and recorded the highest power consumption.

| Video Cards – Power Consumption | Idle | Load |
|---|---|---|
| NVIDIA GeForce GTX 590 | 368W | 593W |
| GIGABYTE Radeon HD 6990 830 MHz | 349W | 563W |
| GIGABYTE Radeon HD 6990 880 MHz | 363W | 578W |

Surprisingly, the power consumption of the GIGABYTE HD 6990 actually seems to be slightly lower than that of the Nvidia GTX 590. However, the TDP of 450W for the Extreme BIOS setting is not a myth and will be reached if the card is used in Eyefinity mode. Just like the temperature, the power consumption when using multiple monitors will skyrocket and turn any room without proper air conditioning into a sauna. In terms of power efficiency on a single monitor, the GIGABYTE Radeon HD 6990 is better.
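Since the Kill A Watt readings are taken at the wall, they cover the whole system rather than the card alone. A rough way to compare the cards is to look at how much the draw rises from idle to load, as sketched below with the table values. The dictionary keys and function name are ours, and the delta is only an approximation of each card’s extra load power, because the CPU and other components also draw more during a game.

```python
# Whole-system wall readings in watts from the table above: (idle, load).
readings = {
    "NVIDIA GeForce GTX 590": (368, 593),
    "GIGABYTE Radeon HD 6990 830 MHz": (349, 563),
    "GIGABYTE Radeon HD 6990 880 MHz": (363, 578),
}


def load_delta(idle_w, load_w):
    """Increase in whole-system draw from idle to load, in watts."""
    return load_w - idle_w


deltas = {card: load_delta(*watts) for card, watts in readings.items()}

for card, delta in deltas.items():
    print(f"{card}: +{delta}W from idle to load")
```

By this measure the GTX 590 system rises by 225W, while the HD 6990 system rises by 214W and 215W on the two BIOS settings, which is consistent with the slightly lower consumption noted above.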

Conclusion

The GIGABYTE HD 6990 has demonstrated a rather impressive level of performance throughout the rigorous testing, while keeping its cool. Surprisingly, after 2 hours of benching on overclocked settings at 50% fan speed, the card did not break the 78 C temperature mark, suggesting that the cooling design implemented in this product is definitely worth it. On a single screen, the GIGABYTE HD 6990 is able to max out any game with ease and deliver outstanding gaming performance. For fully exploring the panoramic gameplay provided by the Eyefinity feature, the GIGABYTE HD 6990 is an excellent choice. This luxury high-end performance card is powerful enough to support any graphically intense application and was challenged only by the 3DMark 11 benchmark under Extreme settings.

The value of the card follows its performance. While it comes at the rather high cost of $739.99, this card is able to deliver performance equivalent to that of 2x GTX 570, which could be purchased at the same price, and lands fairly close to the performance of 2x HD 6970 in CrossFire or 2x GTX 580 in SLI. While it is not the most compact card, and in fact is approximately an inch longer than the NVIDIA GTX 590, it only takes up one PCI-E slot, freeing up room for other PCI-E devices.

While not necessarily jam-packed with features, the GIGABYTE HD 6990 does have a nice and simple dual BIOS. The provided software for the GIGABYTE HD 6990 is fairly easy to get used to and allows for very easy and convenient control of desktop configurations. The card is well equipped to accommodate the dual GPUs and uses only one PCI-E slot.

Overall, this is an excellent choice for those who require an extremely powerful card to run highly demanding applications. In terms of its performance compared to the GTX 590, it is difficult to say which card is superior. Throughout the testing, neither candidate took a clear lead in performance. Therefore, the best choice is left up to the consumer’s preference. The GIGABYTE HD 6990 does have an extraordinarily loud cooling system compared to the Nvidia GTX 590. In terms of power consumption, both cards are demanding, so the final choice is up to the end user.

| OUR VERDICT: GIGABYTE HD 6990 |
|---|
| Summary: The GIGABYTE HD 6990 is a worthy card for any top of the line gaming system. Despite its extremely high price, it delivers great performance across the board, has efficient cooling, and can support multiple monitor setups. For its performance and quality, it earns the Bjorn3D Golden Bear Award. |

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
