Needless to say, when GIGABYTE offered us their newest 8800GT TurboForce edition graphics card for review we were elated. We plan to find out whether extreme performance and energy savings are mutually exclusive or whether the two can effectively coexist in harmony.

INTRODUCTION

If you’ve been watching any of the hoopla associated with the forthcoming Presidential election, you have probably noticed that two of the larger issues being debated are change and energy conservation. Right now you’re probably wondering whether you have logged into the right forum and what in the world we are talking about! Hang in there for a minute and I think this will make sense as we continue. If you think about it, these two terms transcend the political arena and are quite applicable to many of the newer computer products being released today.

Most manufacturers tout the astounding new features of each product with marketing hype, hoping to create a buying frenzy for the product(s) they are readying for release to the consumer market. In many cases, especially with graphics cards, you’re looking at a few tweaked features added to a reference design. These features are generally performance enhancing and sit on top of the other 99% of the design that remains standard. They generally cost the owner not only in the initial price paid, but also in power bills. In most cases better performance translates into higher energy consumption.

We at Bjorn3D have been awakened to a new measure, the performance to power consumption ratio, which we feel will become a very important statistic in the coming years, even for the extreme computer enthusiast choosing which products to purchase. We have been doing some extensive reading regarding these improvements and have noted that GIGABYTE™ appears to be leading the way in this arena. They have released an entire new line of motherboards and graphics cards sporting their power saving enhancements. Needless to say, when GIGABYTE offered us their newest 8800GT TurboForce edition graphics card for review we were elated. We plan to find out whether extreme performance and energy savings are mutually exclusive or whether the two can effectively coexist in harmony.

GIGABYTE: The Company

GIGABYTE, one of the most well-known brands in the industry, started as a motherboard technology research laboratory with the passion of a few young engineers two decades ago. With vision and insight into the market, GIGABYTE has become one of the world’s largest motherboard manufacturers. On top of motherboards and graphics accelerators, GIGABYTE has further expanded its product portfolio to include notebook and desktop PCs, digital home entertainment appliances, networking servers, communications, mobile and handheld devices. GIGABYTE has risen from an eight-man office to a world-class enterprise in the IT industry.

Today, GIGABYTE is a constant product winner of international design awards and professional media’s most recognized IT provider. With employees across continents and sales channels in almost every country, GIGABYTE can tailor to the needs of its business partners and customers with online and on-site technical support and service centers. GIGABYTE has transformed its core technologies into a force to make your life better and easier.

For decades, GIGABYTE has been in the community to delight people with the latest digital innovations. Every day, GIGABYTE members are fully engaged at work simply knowing they can create better life experiences for all customers. This is why GIGABYTE is always committed to research and development of the latest technology while striving for the highest quality in design innovation and customer service. GIGABYTE aims for customers’ total satisfaction because that is what builds trust and adds value to the brand. Thus, the vision for the company is to see people enjoy their lives with GIGABYTE.

FEATURES & SPECIFICATIONS

On paper the GIGABYTE™ 8800GT TurboForce appears to fall into the upper midrange of factory overclocked 8800GTs. We at Bjorn3D learned a long time ago that you can’t “judge a book by its cover” and that “beauty is only skin deep” when it comes to computer components. We’ll push this baby as far as we can and see exactly what it has to offer.

GIGABYTE™ 8800GT TurboForce Model GV-NX88T512HP Comparative Specifications

| Specification | GIGABYTE 8800GT TurboForce | NVIDIA 8800GT Reference |
| --- | --- | --- |
| RAMDACs | Dual 400 MHz | Dual 400 MHz |
| Memory Bus | 256 bit | 256 bit |
| Memory | 512 MB | 512 MB |
| Memory Type | GDDR3 | GDDR3 |
| Memory Clock | 920 MHz (1840 MHz effective) | 900 MHz (1800 MHz effective) |
| Stream Processors | 112 | 112 |
| Shader Clock | 1715 MHz | 1500 MHz |
| Core Clock | 700 MHz | 600 MHz |
| Chipset | GeForce™ 8800 GT (G92) | GeForce™ 8800 GT (G92) |
| Bus Type | PCI-E 2.0 | PCI-E 2.0 |
| Fabrication Process | 65nm | 65nm |
| Highlighted Features | HDCP Ready, Dual DVI Out, RoHS, HDTV ready, SLI ready, TV Out | HDCP Ready, Dual DVI Out, RoHS, HDTV ready, SLI ready, TV Out |
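To put the memory clock difference in perspective, here is a minimal sketch that turns the effective memory clocks and bus width listed above into peak theoretical bandwidth. The function name is ours, and the formula is the usual back-of-the-envelope one (effective transfer rate times bus width in bytes), so treat the result as a theoretical ceiling rather than a measured figure.

```python
def memory_bandwidth_gbs(effective_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes).

    effective_mhz  -- effective (double data rate) memory clock in MHz
    bus_width_bits -- width of the memory interface in bits
    """
    bytes_per_transfer = bus_width_bits / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9


# Values taken from the specification table: 256-bit bus on both cards.
turboforce = memory_bandwidth_gbs(1840, 256)
reference = memory_bandwidth_gbs(1800, 256)

print(f"TurboForce: {turboforce:.2f} GB/s")
print(f"Reference:  {reference:.2f} GB/s")
```

By this estimate the factory memory overclock buys only a couple of percent of extra bandwidth; the larger gains on paper come from the core and shader clocks.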

Features

- Powered by NVIDIA GeForce 8800GT GPU

- Supports PCI Express 2.0 and 112 Stream processors

- Integrated with 512MB GDDR3 memory and 256-bit memory interface

- Supports SLI multi-GPU Technology

- Supports PureVideo™ HD & H.264 decoding & VC-1 Technology

- Microsoft DirectX 10, OpenGL 2.0 and Shader Model 4.0 support

- Supports HDTV function and HDTV cable enclosed

- Features Dual Dual-link DVI-I / D-SUB (by adapter) / TV-OUT

- Dual-link HDCP capable.

- Hot Games: Neverwinter Nights 2

Unique Features

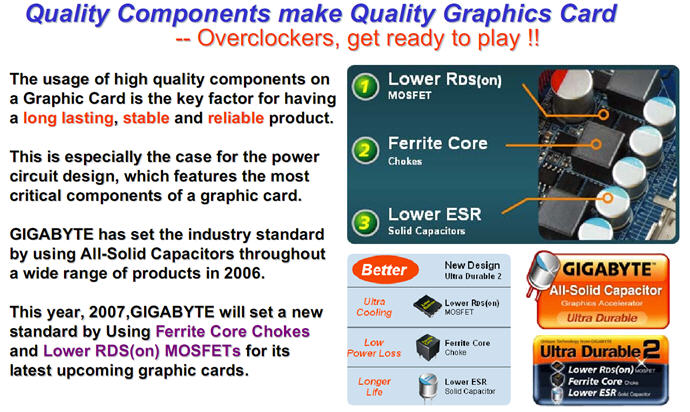

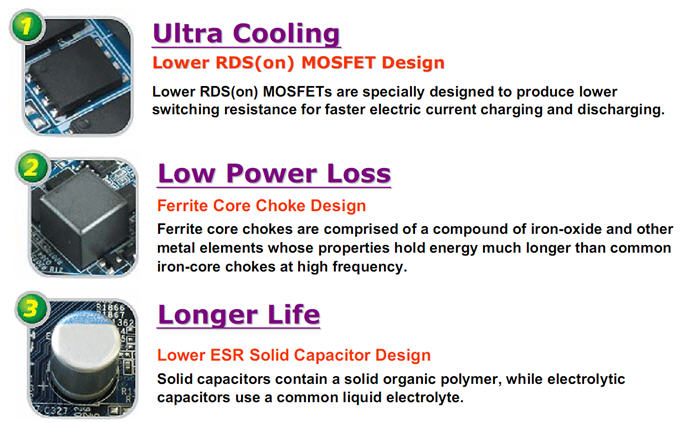

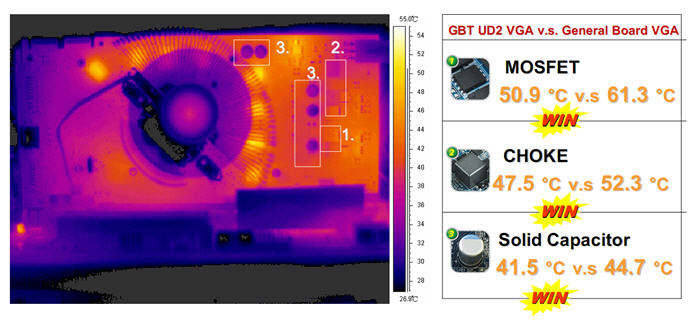

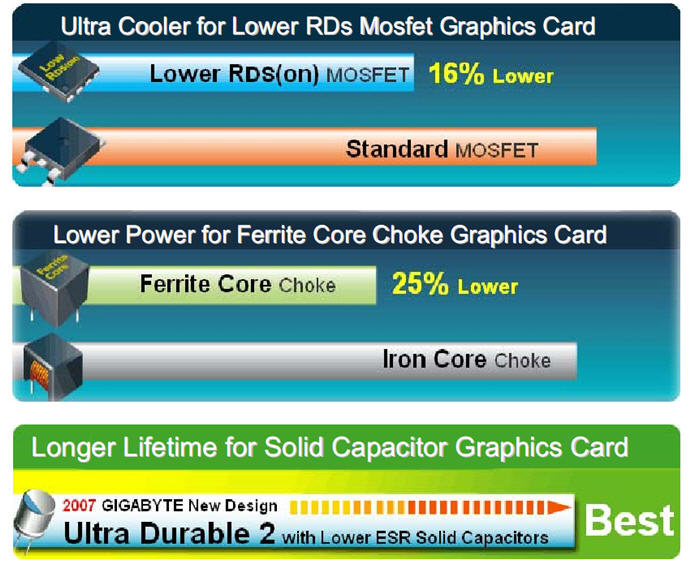

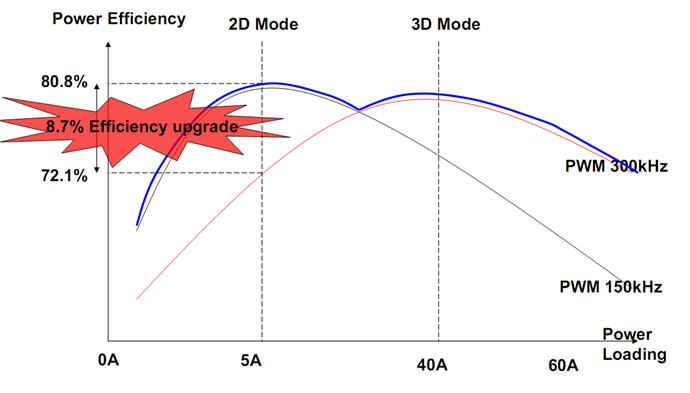

The GIGABYTE 8800GT TurboForce graphics card is far from the standard NVIDIA reference 8800GT. GIGABYTE utilizes new, higher grade component technology which they term Ultra Durable2. Ultra Durable2 uses a Lower RDS(on) MOSFET design, Ferrite core chokes, and Lower ESR Solid Capacitors. The accompanying images help describe the function of each of these components as well as how they work together to improve the functionality of the 8800GT TurboForce.

PACKAGING

With the most recent graphics cards we received for review we have noted that many of the manufacturers have opted for a smaller, less bulky container to house their product. GIGABYTE chose a more middle of the road sized package to ship their products that is no less protective of the container’s valuable contents. We’d really like to see all computer products vendors use the most minimal approach possible that still offers ample protection to the valuable cargo you spent your hard earned dollars on. Not only will it save a few trees but it might even save a few dollars that the manufacturer could pass on to their customers.


CONTENTS

So many of the graphics card manufacturers have decreased the bundled accessories that they offer with their graphics solutions. Back in the day it was not unusual to get not only every accessory you needed but also as many as two full edition games. We fully understand that manufacturing costs have risen and are glad to see that GIGABYTE has included a full version of the popular RPG Neverwinter Nights 2. In addition they include:

- 1 – GIGABYTE GV-NX88T512HP 8800GT TurboForce Edition card

- 2 – DVI-VGA Connectors

- 1 – S-Video Adapter Cable

- 1 – Neverwinter Nights 2 Game Disc

- 1 – Neverwinter Nights 2 Manual

- 1 – Graphic card Manual

- 1 – Drivers CD

Bundled Accessories

THE CARD & FIRST IMPRESSIONS

Upon removing the GIGABYTE 8800GT TurboForce Edition card from its protective enclosure, the very first thing that caught our eye was that it’s naked. By this we mean it doesn’t have the full-bodied cooler that’s typical of other NVIDIA reference based 8800GT series cards. The next thing was that it’s tiny, again in comparison to the reference standard cards. The GIGABYTE 8800GT is approximately 1.7 inches shorter than the reference standard card, which measures 9.1 inches in length.

We were especially impressed with the completely copper clad Zalman VF830-Cu GPU cooler mounted on the GIGABYTE™ 8800GT TurboForce. This cooler replaces the Zalman VF720 used on previous versions of this card and is incredibly quiet. We were completely stoked, as we’ve never been huge fans of NVIDIA’s reference coolers; they cool well but can get obnoxiously loud when the card heats up. We look forward to determining in the testing phase of this review how much difference, if any, the factory installed after-market cooler makes. It should further be noted that the depth of the Zalman VF830-Cu GPU cooler makes this card take up two slots as opposed to one with the NVIDIA reference cooler.


The new NVIDIA 8800GT (G92) is manufactured with a die shrink of the chipset from the previous 90nm standard down to 65nm. This die shrink has allowed the G92 chip to utilize roughly 754 million transistors as opposed to approximately 681 million in its G80 counterpart. We would expect to see a marginal drop in temperatures with the G92, both from shrinking the die to 65nm and from the addition of the components listed above. It should also be noted that with the die shrink to 65nm the 8800GT GPU operates at 1.1V as opposed to 1.5V for its 90nm G80 counterpart.

BUNDLED SOFTWARE

One of the coolest things included with the GIGABYTE 8800GT TurboForce card is their Heads Up Display (HUD) software. While many of the graphics solutions vendors include some type of overclocking utility with their product, GIGABYTE is the first we’ve seen that allows you to regulate the voltage your card uses. This is especially useful when overclocking the card, even though the maximum voltage allowed is only 1.2V. The HUD utility also allows real-time overclocking of the TurboForce’s core clock speed, memory clock speed, and shader clock speed. Finally, the utility offers an auto optimize feature that minimizes the card’s speed and voltage in 2D/3D mode when surfing the Web, checking email, and performing other daily chores that are not performance related. We will see just how effective this product is when we overclock this card to its maximum later in the review.

GIGABYTE HUD Utility Interface

TESTING REGIME

Every time we receive a new graphics card to review we struggle with the dilemma of which other card(s) we should compare it to. In this case we had just finished a review comparing the three fastest cards in three of the four 8800 categories, which included the ASUS EN8800GTS TOP, the XFX 8800GT XXX and the XFX 8800GTX XXX. We will be using those for comparative purposes in our testing today and will find out how the GIGABYTE 8800GT TurboForce card stacks up against these behemoths.

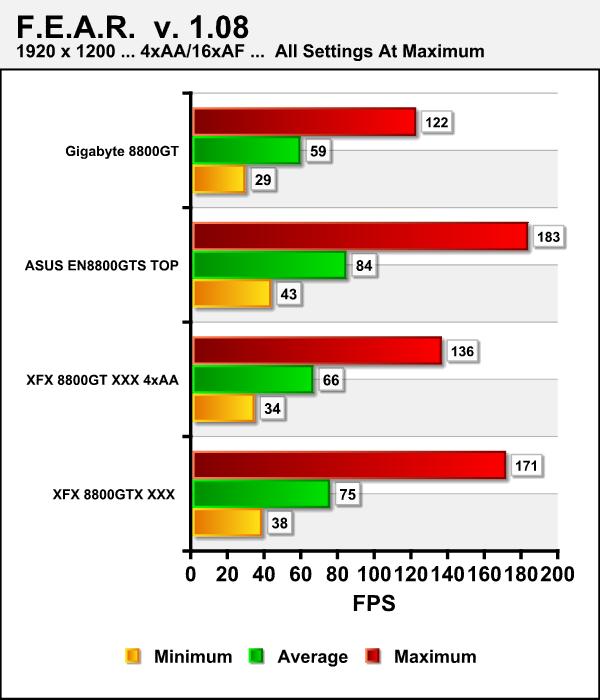

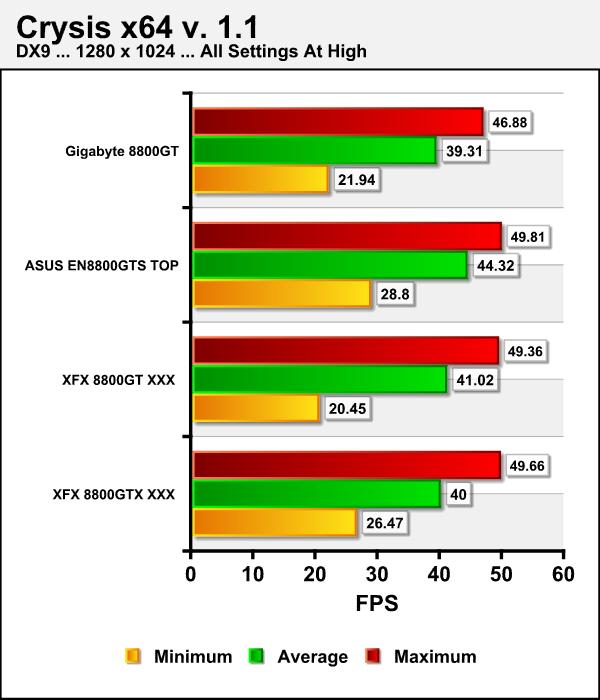

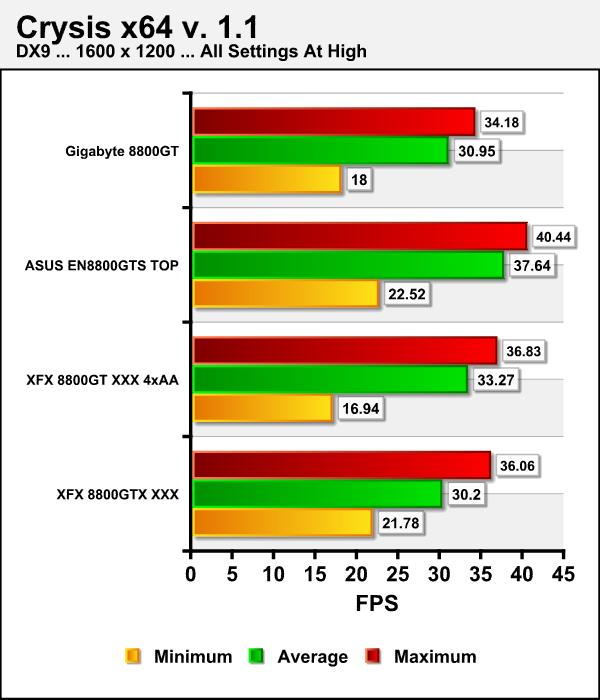

We will run our captioned benchmarks with each graphics card set at its default speed. All of our synthetic and gaming tests will be run at 1280 x 1024, 1600 x 1200, and 1920 x 1200, some without Antialiasing and Anisotropic Filtering and others using various levels of this eye candy. For those games that offer both DX9 and DX10 rendering we’ll test both. Each test will be run three times in succession, and the average of the three results calculated and reported. F.E.A.R. benchmarks were also run with soft shadows disabled.
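For readers who want to follow the same three-run routine at home, here is a small sketch of the averaging step. The function name and the sample frame rates are made up for illustration and are not results from this review.

```python
from statistics import mean


def reported_score(runs: list[float]) -> float:
    """Average three back-to-back benchmark runs into one reported figure."""
    if len(runs) != 3:
        raise ValueError("expected exactly three runs")
    return round(mean(runs), 1)


# Illustrative numbers only, not measurements from our test bench.
example_runs = [61.2, 60.8, 61.5]
print(f"Reported average: {reported_score(example_runs)} FPS")
```

Averaging three runs smooths out the odd hiccup from a background process without hiding a card that is genuinely inconsistent; if the three runs differ wildly, that is worth investigating before reporting anything.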

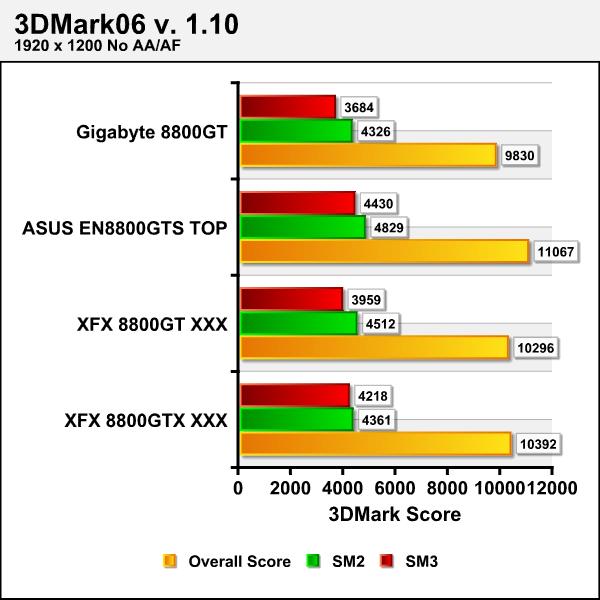

As the chart below shows, the GIGABYTE 8800GT TurboForce’s stock specifications don’t quite match up to those of the other three cards; nonetheless this should give us a good barometer of the card’s performance.

Comparative Specifications

| Specification | GIGABYTE 8800GT TurboForce | ASUS EN8800GTS TOP | XFX 8800GT XXX | XFX 8800GTX XXX |
| --- | --- | --- | --- | --- |
| Memory | 512 MB | 512 MB | 512 MB | 768 MB |
| Memory Clock | 1.84 GHz | 2.07 GHz | 1.95 GHz | 2 GHz |
| Stream Processors | 112 | 128 | 112 | 128 |
| Shader Clock | 1700 MHz | 1800 MHz | 1675 MHz | 1500 MHz |
| Core Clock | 700 MHz | 740 MHz | 670 MHz | 630 MHz |

Test Platform

| Component | Details |
| --- | --- |
| Processor | Intel Core 2 Quad Q6600 @ 2.4GHz |
| Motherboard | ASUS Maximus Formula (Non-SE) BIOS 0907 |
| Memory | 4GB of Mushkin XP-2 6400 DDR-2 4-3-3-10 |
| Drive(s) | 2 – Seagate 1TB Barracuda ES SATA Drives |
| Graphics | Video Card # 1: GIGABYTE™ GeForce® 8800GT TurboForce running ForceWare 169.25 64-bit WHQL |
| Cooling | Enzotech Ultra w/ 120mm Delta Fan |
| Power Supply | Antec 650 Watt Neo Power & Antec 550 Watt Neo Power |
| Display | Dell 2407 FPW |
| Case | Antec P190 |
| Operating System | Windows Vista Ultimate 64-bit SP1 RC1 |
| Synthetic Benchmarks & Games | 3DMark06 v. 1.1.0 |
| | Company of Heroes v. 1.71 DX 9 & 10 |
| | Crysis v. 1.1 DX 9 & 10 |
| | World in Conflict Demo DX 9 & 10 |
| | F.E.A.R. v 1.08 |
| | Serious Sam 2 v. 2.068 |

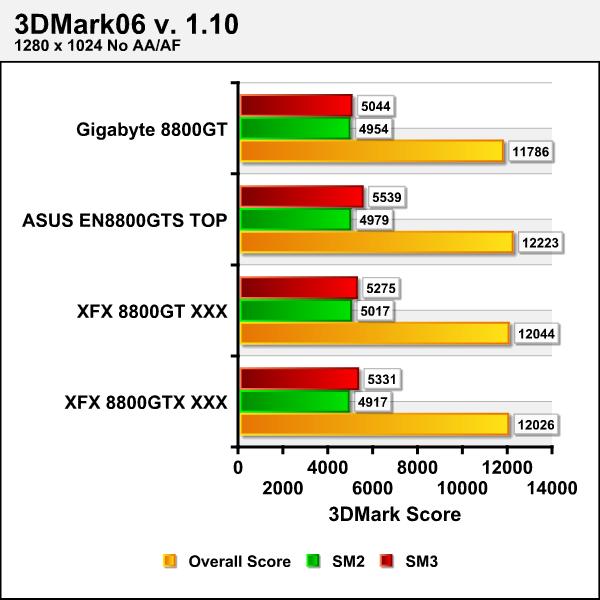

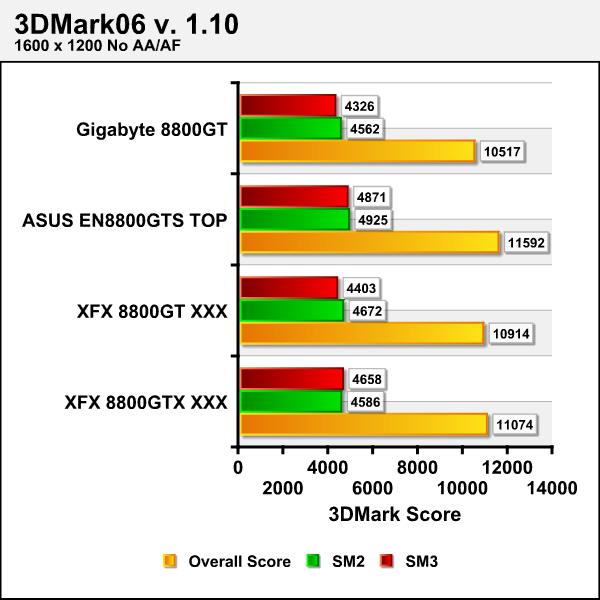

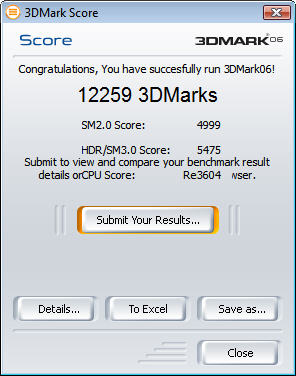

3DMARK06 v. 1.1.0

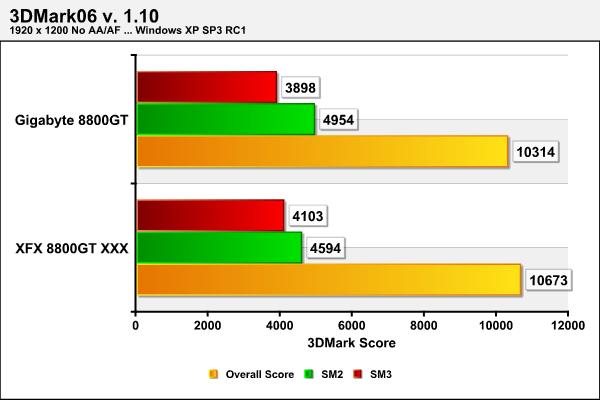

3DMark06, developed by Futuremark, is a synthetic benchmark used for universal testing of all graphics solutions. 3DMark06 features HDR rendering, complex HDR post processing, dynamic soft shadows for all objects, water shader with HDR refraction, HDR reflection, depth fog and Gerstner wave functions, realistic sky model with cloud blending, and approximately 5.4 million triangles and 8.8 million vertices, to name just a few. The measurement unit “3DMark” is intended to give a normalized mean for comparing different GPU/VPUs. It has been accepted as both a standard and a mandatory benchmark throughout the gaming world for measuring performance.

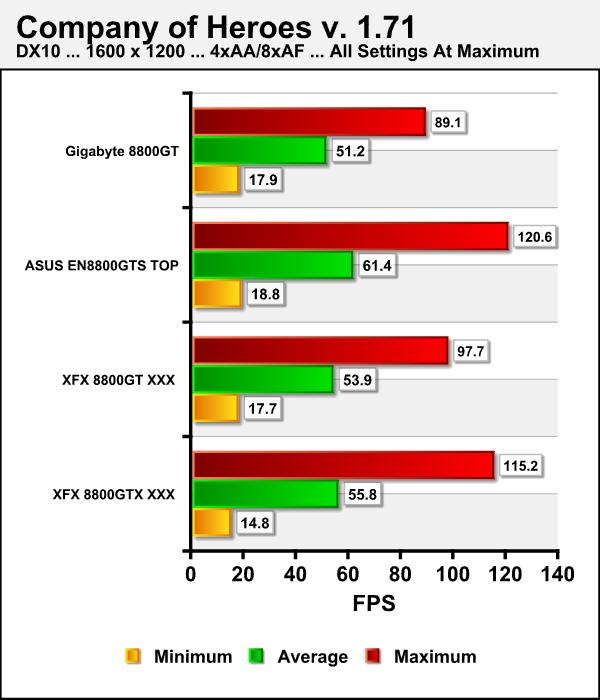

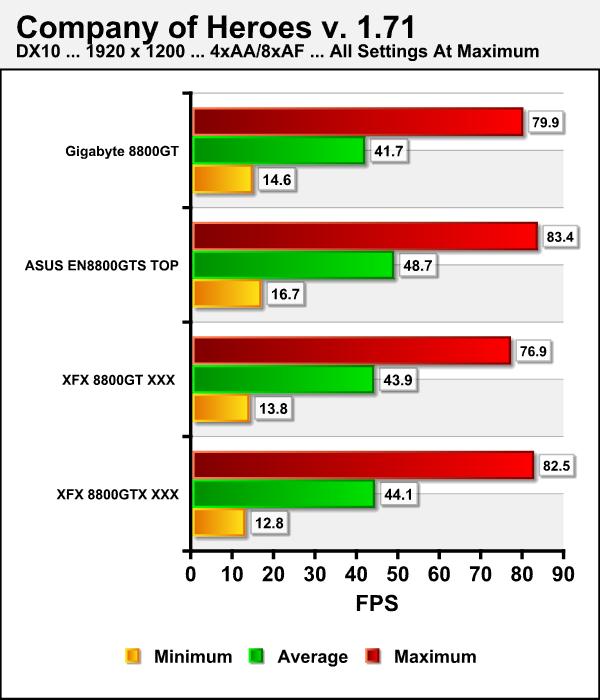

COMPANY OF HEROES v. 1.71

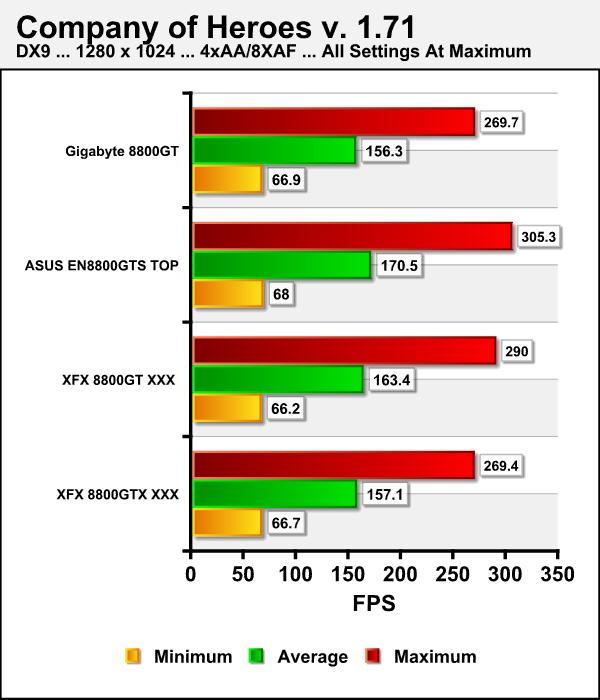

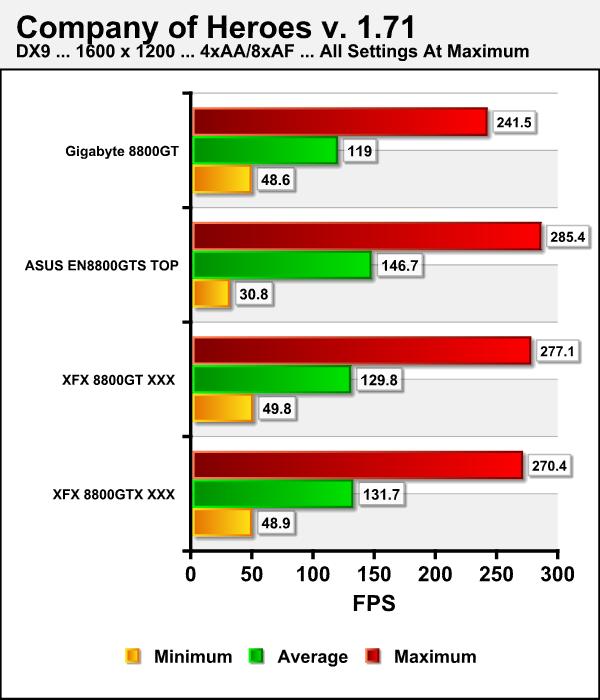

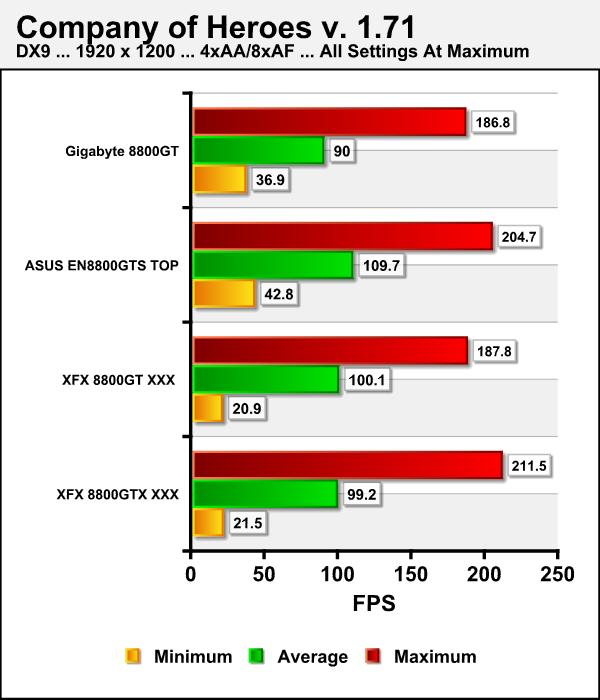

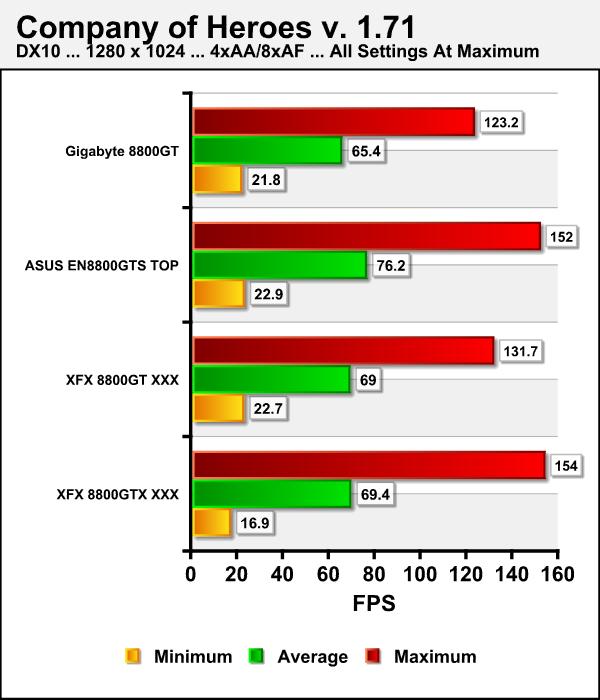

Company of Heroes (COH) is a Real Time Strategy (RTS) game for the PC, announced in April 2005. It is developed by the Canadian based company Relic Entertainment and published by THQ. We gladly changed from the first-person shooter genre of the rest of our gaming benchmarks to this RTS title. Why? COH is an excellent game that is incredibly demanding on system resources, which makes it an excellent benchmark. Like F.E.A.R., the game contains an integrated performance test that can be run to determine your system’s performance based on the graphical options you have chosen. It uses the same multi-staged performance ratings as the F.E.A.R. test. We salute you, Relic Entertainment!

Note: After discussing it numerous times with my fellow reviewers, we decided to run this benchmark with Vsync disabled to avoid being locked at around 60fps. Easy, huh? Generally all you have to do is go into the NVIDIA control panel and globally force Vsync off. Notice I said generally, as that didn’t work for us in this test arena. After uninstalling and reinstalling drivers and trying everything in our bag of tricks, we turned to the Internet and read posts on various forums from people having the same or similar issues with Vista. We’re not just using Vista 64, though; we’re also using SP1 RC1, which heretofore hadn’t shown any problems. To cut to the chase, we tried a solution we used back in days gone by: we added the “-novsync” switch to the end of the Target field in the program shortcut’s properties, and voila, it worked. We’re just passing this along as a tip in case you run into a similar dilemma.
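If you would rather script the tip than edit a shortcut by hand, the sketch below builds both the text for a shortcut’s Target field and an argument list you could hand to Python’s `subprocess` module. The install path is a placeholder we made up, so substitute wherever the game actually lives on your system; the only part taken from our testing is the “-novsync” switch itself.

```python
def shortcut_target(exe_path: str, flag: str = "-novsync") -> str:
    """Return the text to paste into a Windows shortcut's Target field."""
    # The quotes keep a path containing spaces together; the switch goes after them.
    return f'"{exe_path}" {flag}'


def launch_command(exe_path: str, flag: str = "-novsync") -> list[str]:
    """Return an argument list suitable for subprocess.Popen(...)."""
    return [exe_path, flag]


# Hypothetical install location, used here for illustration only.
GAME_EXE = r"C:\Games\Company of Heroes\RelicCOH.exe"

print(shortcut_target(GAME_EXE))
print(launch_command(GAME_EXE))
```

Nothing is launched by this snippet; it only prints what the shortcut target and command would look like so you can check them before use.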

DX9


DX10

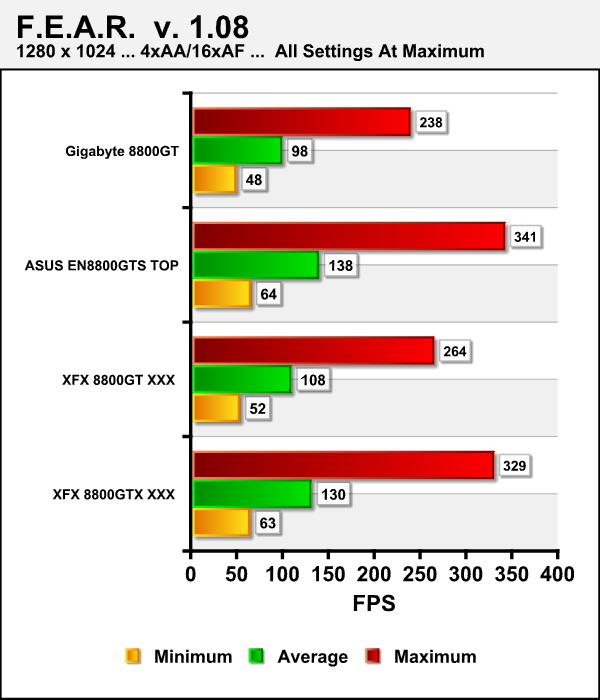

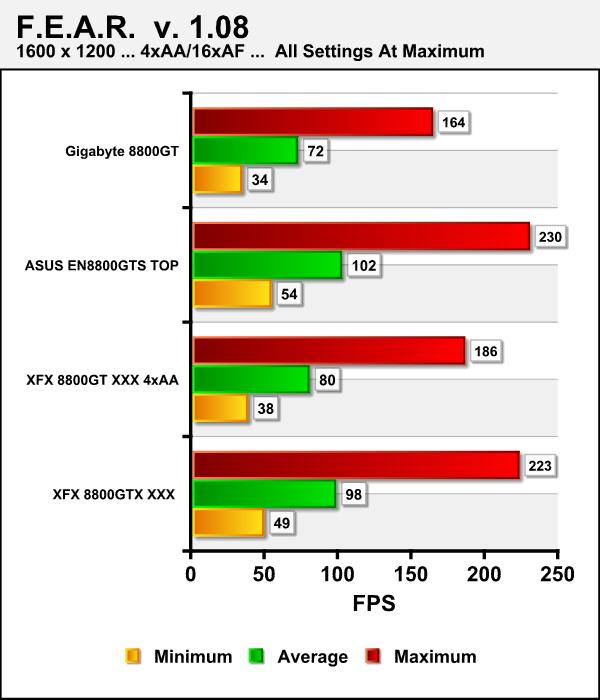

F.E.A.R v. 1.08

F.E.A.R. (First Encounter Assault Recon) is a first-person shooter developed by Monolith Productions and released in October 2005 for Windows. F.E.A.R. is one of the most resource intensive games in the FPS genre ever to be released. The game contains an integrated performance test that can be run to determine your system’s performance based on the graphical options you have chosen. The beauty of the performance test is that it gives maximum, average, and minimum frames per second rates, as well as the percentage of the run your system spent in each frame rate category. F.E.A.R. rocks both as a game and as a benchmark!

CRYSIS v. 1.1

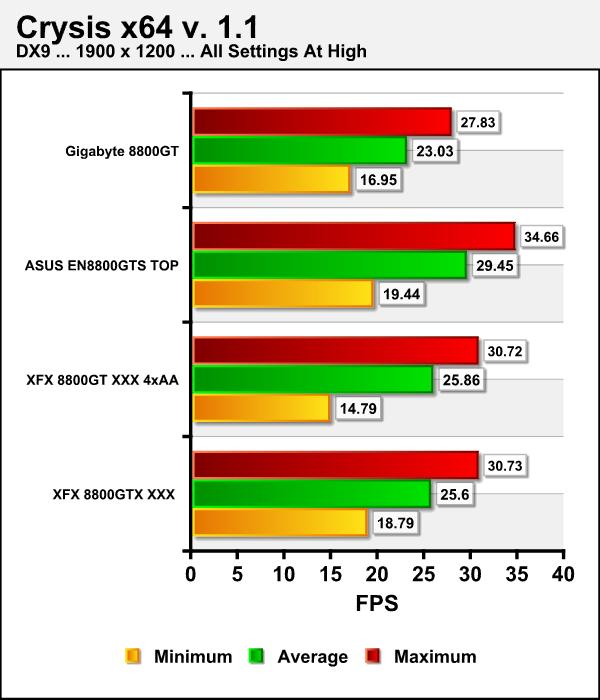

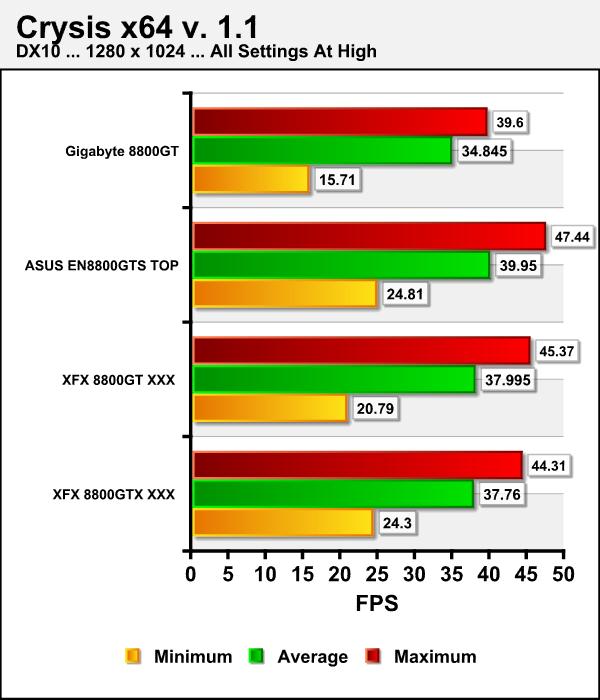

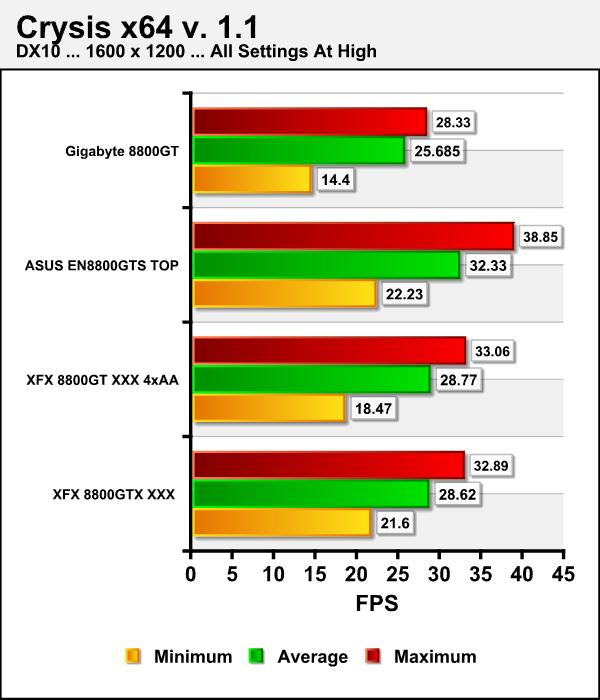

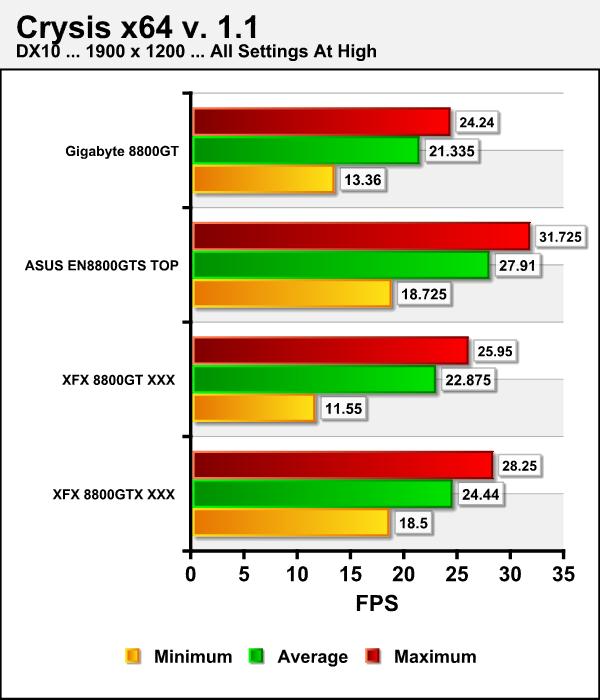

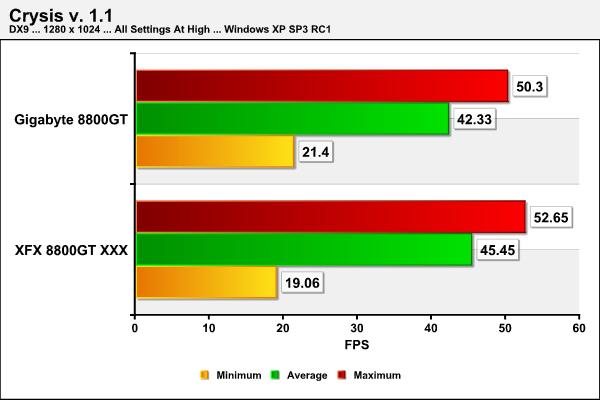

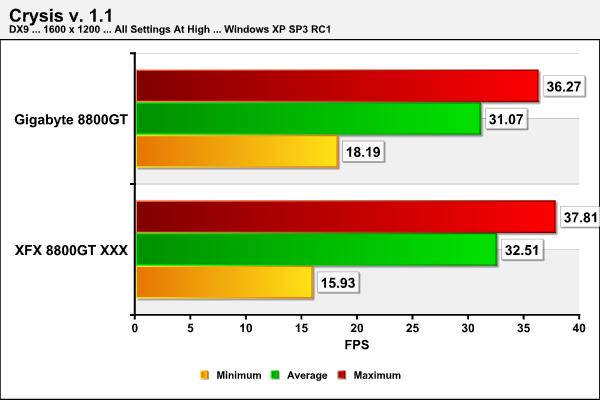

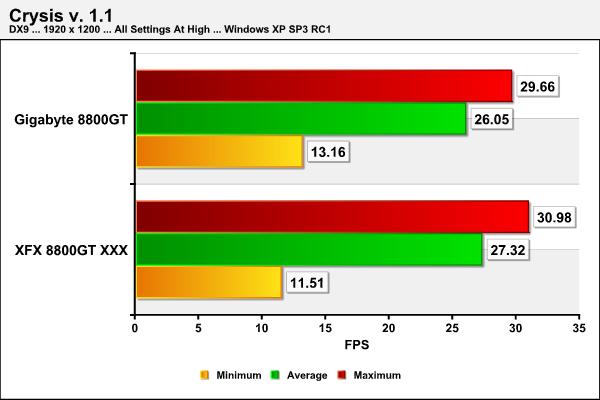

Crysis is the most highly anticipated game to hit the market in the last several years. Crysis is based on the CryENGINE™ 2 developed by Crytek. The CryENGINE™ 2 offers real time editing, bump mapping, dynamic lights, a network system, an integrated physics system, shaders, shadows and a dynamic music system, just to name a few of the state-of-the-art features that are incorporated into Crysis. As one might expect with this number of features, the game is extremely demanding of system resources, especially the GPU. We expect Crysis to be a primary gaming benchmark for many years to come.

DX9


DX10

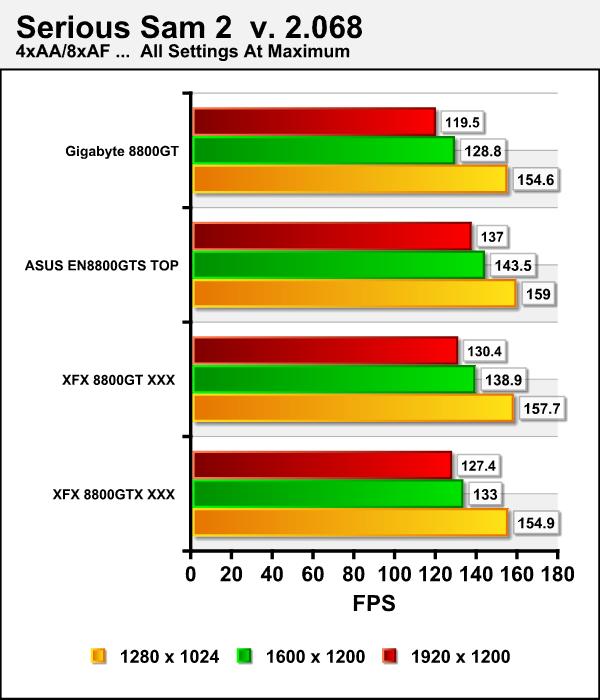

SERIOUS SAM 2 v. 2.068

Serious Sam 2 is a first-person shooter released in 2005 and is the sequel to the 2002 computer game Serious Sam. It was developed by Croteam using an updated version of their Serious Engine known as “Serious Engine 2”. We feel this game serves as an excellent benchmark which provides a variety of challenges for the GPU/VPU you are testing. We once again use benchmarking software from HardwareOC to automate and refine the process.

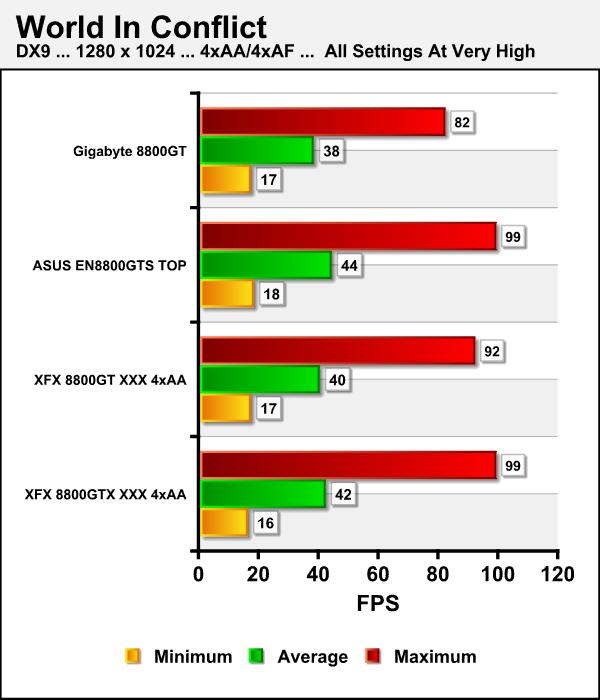

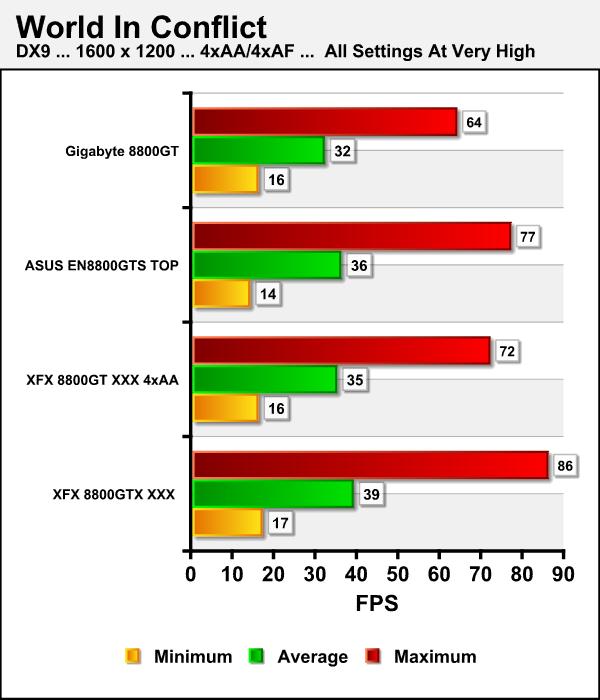

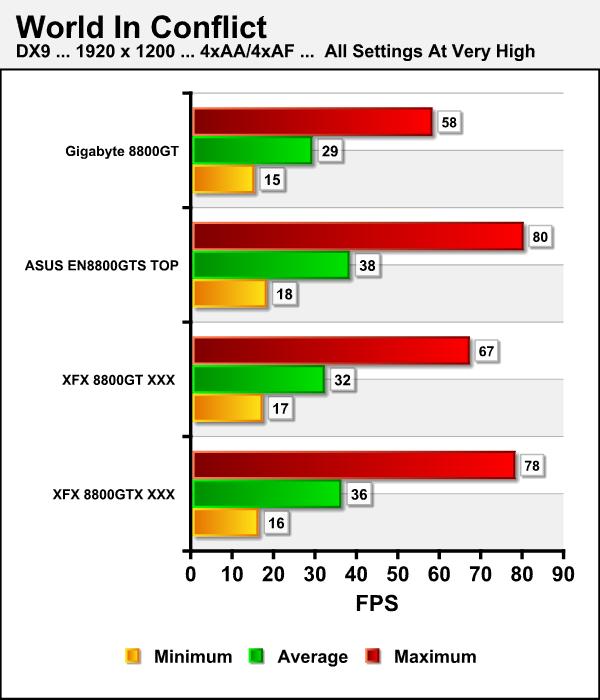

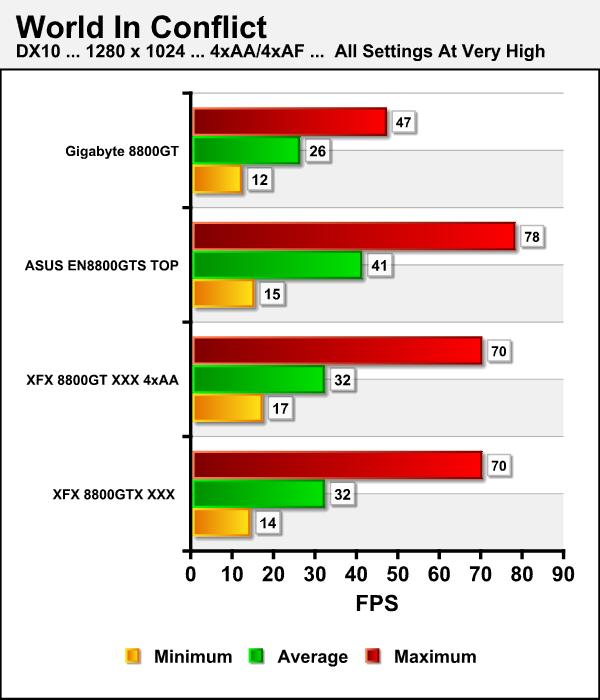

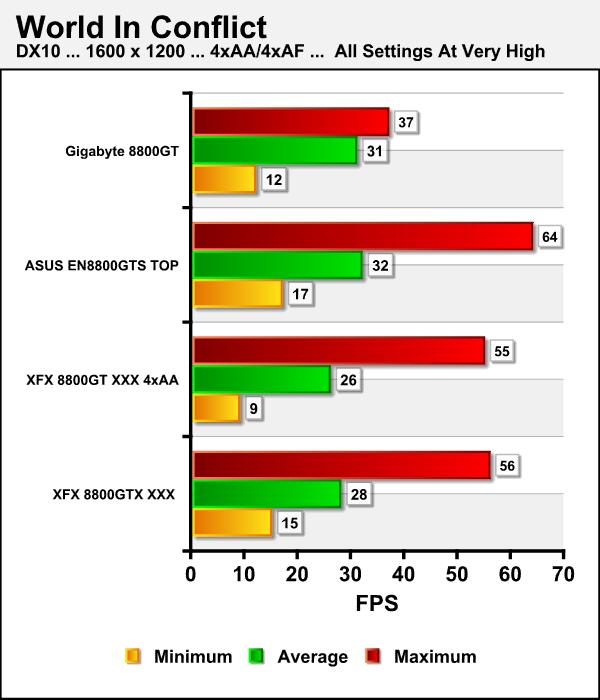

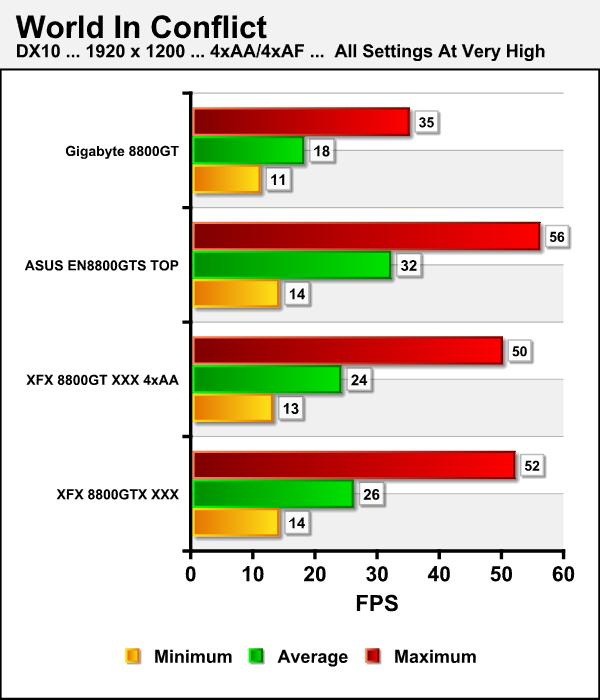

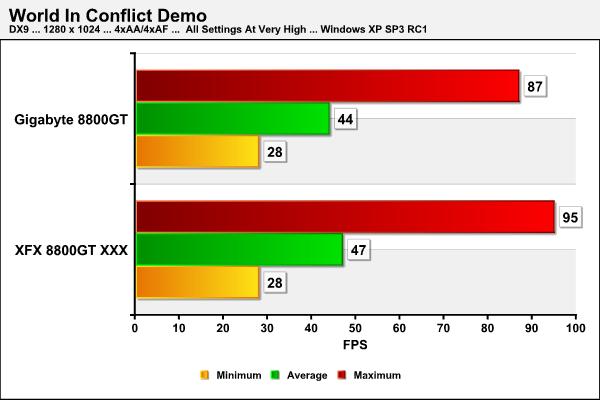

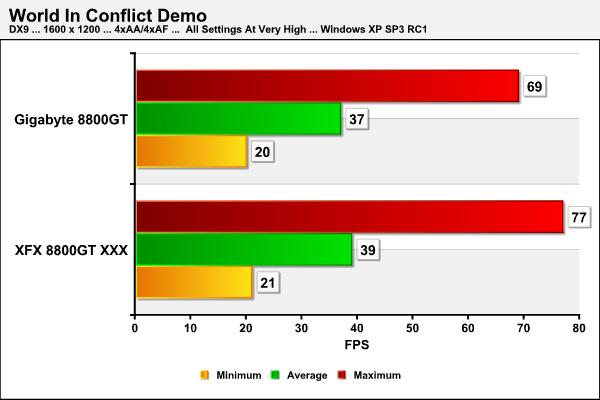

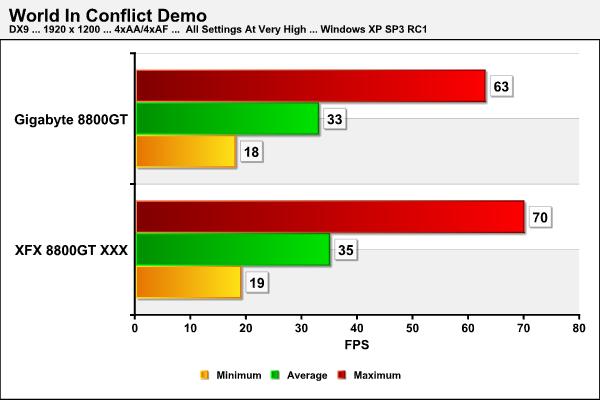

WORLD IN CONFLICT DEMO

World in Conflict has superb graphics, is extremely GPU intensive, can be rendered in both DX9 and DX10, and has built-in benchmarks. Sounds like a match made in heaven for benchmarking!

DX9


DX10

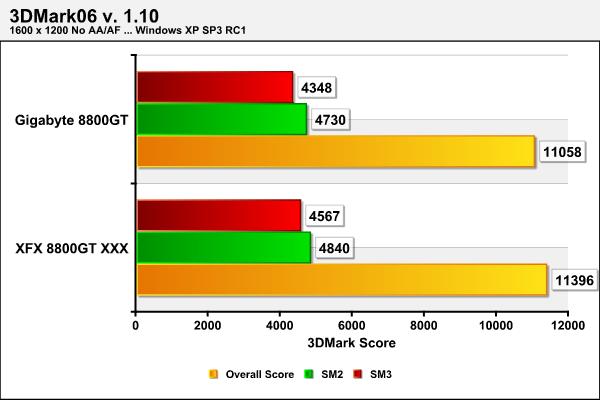

LEVELING THE PLAYING FIELD

How many times have you read a review and seen a graphics card compared to others that were either faster or slower than it? That describes the vast majority of all the reviews written. How many times have you asked yourself, why didn’t they clock two of the cards the same and do a head-to-head comparison? We’ve asked ourselves that question a number of times! In most cases it was difficult or nearly impossible to get a good match between two or more of the cards we were testing, or to match the clocks exactly without down clocking. Today is one of those special days when the planets seemed properly aligned and we were able to accomplish this task.

We saw how easily the GIGABYTE 8800GT TurboForce overclocked, and we were able to match its clocks exactly to those of our current “King of the Hill” in the 8800GT class, the XFX 8800GT XXX. For this series of tests both cards will have a core clock of 670MHz, a memory clock of 1.95GHz, and a shader clock of 1.7GHz. We will repeat three of the benchmarks, 3DMark06, Crysis, and World in Conflict, using the same settings as in the previous tests. The only difference will be that we will remove the Vista factor by using Windows XP SP3 as our operating system, which will preclude us from running DX10.

3DMARK06 v. 1.1.0 COMPARISON


CRYSIS v. 1.1 COMPARISON

WORLD IN CONFLICT DEMO COMPARISON

Personally, we really like this mano a mano type of comparison and think it makes for a much fairer view of the products’ performance.

POWER CONSUMPTION

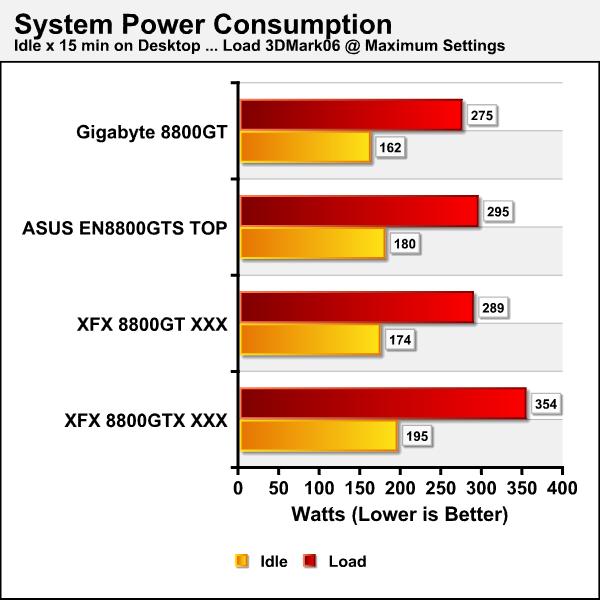

As we alluded to in the introduction, decreasing the power consumption of equipment is quickly becoming a major issue with many manufacturers. In our research, and not just because we’re reviewing their product, we’ve noticed that GIGABYTE appears to have taken the most steps forward with regard to decreasing power consumption. From what we understand they are completely replacing their current line of motherboards and graphics cards with ones that are more energy efficient. Some enthusiasts have touted this effort while others feel that the same results can be achieved by choosing conservative settings with current systems. Hmmm, sounds like a topic for a review, but I digress. We found that, without invoking the optimizer, the GIGABYTE 8800GT TurboForce used approximately 12-14 Watts less than the XFX 8800GT XXX. Again we’re not comparing apples to apples, as the XFX 8800GT XXX has a higher overclock.

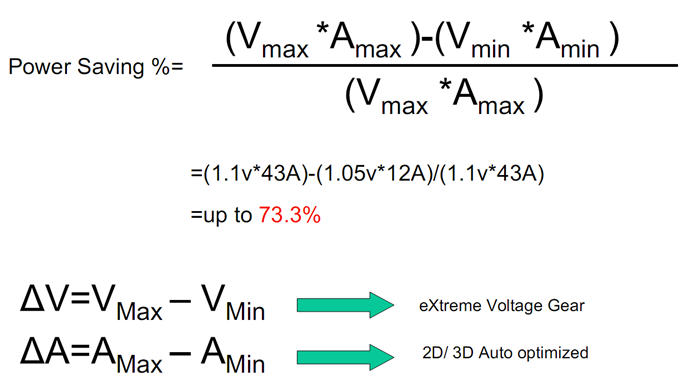

GIGABYTE asserts a power savings with their 8800GT TurboForce card to be in the neighborhood of that shown in the next image. They base this assertion on a user who spends 70% of his/her time surfing the Web, checking email, and doing other less graphics challenged functions.

The next image shows the formula for computations used to arrive at the results demonstrated in the previous graph.

Finally, the next image is a graph of our findings. To measure power we used our Seasonic Power Angel, a nifty little tool that measures a variety of electrical values. We used a high-end UPS as our power source to eliminate any power spikes and to condition the current being supplied to the test systems. The Seasonic Power Angel was placed in line between the UPS and the test system to measure the power utilization in Watts. We measured the idle load after 15 minutes of sitting totally idle on the desktop with no processes running that demanded additional power. Load was measured after looping 3DMark06 for 15 minutes at maximum settings with all the eye candy turned on.

We can attest that in our testing we experienced around a 15 Watt decrease in power consumption even without invoking any of the power saving optimization features GIGABYTE offers on this card. This power consumption drop was in comparison to the XFX 8800GT XXX. We can only assume this is due to the non-reference architecture used in building this card and the use of Lower RDS(on) MOSFETs, Ferrite Core Chokes, and Lower ESR Solid Capacitors. You can look for us to explore power consumption of components in much more detail in a future article, and we’re sure many of GIGABYTE’s products will be used as examples.
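To give that roughly 15 Watt difference some real-world context, here is a rough sketch that converts a constant wattage saving into kilowatt-hours and dollars per year. This is our own simple arithmetic, not GIGABYTE’s formula; the hours of daily use and the electricity price are assumptions we picked for illustration, so plug in your own.

```python
def annual_savings_kwh(watts_saved: float, hours_per_day: float) -> float:
    """Energy saved per year, in kWh, for a constant wattage difference."""
    return watts_saved * hours_per_day * 365 / 1000


def annual_savings_cost(kwh: float, price_per_kwh: float) -> float:
    """Money saved per year given an electricity price per kWh."""
    return kwh * price_per_kwh


MEASURED_DELTA_W = 15   # approximate difference we saw versus the XFX 8800GT XXX
HOURS_PER_DAY = 8       # assumption: how long the PC is on each day
PRICE_PER_KWH = 0.12    # assumption: electricity price in USD per kWh

kwh = annual_savings_kwh(MEASURED_DELTA_W, HOURS_PER_DAY)
cost = annual_savings_cost(kwh, PRICE_PER_KWH)
print(f"About {kwh:.1f} kWh and ${cost:.2f} saved per year")
```

The savings scale linearly with both the hours of use and the wattage difference, so a machine that runs around the clock would see three times the figure of one that runs eight hours a day.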

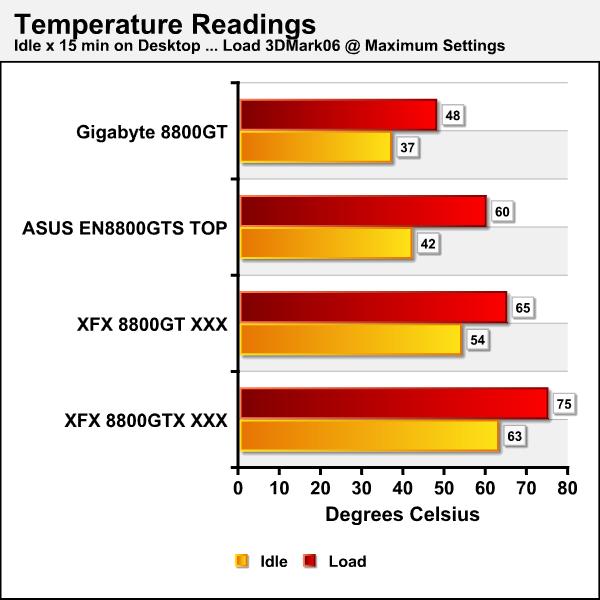

TEMPERATURE

The temperatures of the cards tested were measured using Everest Ultimate Edition v. 4.20.1170 to assure consistency and remove any bias that might be introduced by the respective cards’ own utilities. The temperature measurements used the same process for measuring “idle” and “load” as we used for the power consumption measurements.

These results should remove any doubt in your mind about the benefit of adding an aftermarket cooler to your graphics card!

OVERCLOCKING

As evidenced in our testing, the GIGABYTE 8800GT TurboForce isn’t quite the performer that its closest rival in our comparison, the XFX 8800GT XXX, is, although it’s quite close. Again we’re not comparing apples to apples, as the core clock, shader clock, and memory clock of the two cards are different. As in any review, our goal is to push these products to their limit and back off just a bit to ensure stability. We found the 8800GT TurboForce to be the best overclocking graphics card that we have had the pleasure of testing in quite some time. The images below reflect our findings, and even at that level of performance we only saw maximum temperatures of 52 degrees Celsius. You should note that being able to over-volt the card to 1.2V via the HUD utility certainly aided us in this effort. In case you have trouble reading the HUD screen, it reflects a core clock of 800MHz, a shader clock of 1840MHz, and a memory clock of 2.1GHz. Fantastic, we’d say!
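For a sense of scale, the short sketch below expresses our overclock as a percentage over the card’s factory clocks. The stock values come from the specification table earlier in the review (we use the 1715MHz shader figure), and we are assuming the 2.1GHz memory reading is the effective rate, comparable to the 1840MHz effective stock clock.

```python
def percent_gain(stock: float, overclocked: float) -> float:
    """Percentage increase of the overclocked value over the stock value."""
    return (overclocked - stock) / stock * 100


# (stock MHz, overclocked MHz); memory is assumed to be the effective rate.
clocks_mhz = {
    "core": (700, 800),
    "shader": (1715, 1840),
    "memory (effective)": (1840, 2100),
}

for name, (stock, oc) in clocks_mhz.items():
    print(f"{name}: {stock} -> {oc} MHz (+{percent_gain(stock, oc):.1f}%)")
```

Keep in mind these gains sit on top of a card that already ships well above NVIDIA’s reference clocks.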

We would have liked to have seen the limit increased to 1.3V as we’ve seen some incredible results on other cards that have been volt modified to between 1.25V and 1.3V. We can however understand from a business perspective how this may not have been a wise decision.

CONCLUSION

The GIGABYTE™ 8800GT TurboForce Model GV-NX88T512HP graphics card is quite an impressive piece of equipment. We can easily say this as we have reviewed more of the 8800 series of cards than almost any other review site. The 8800GT TurboForce offers the consumer an almost endless array of possibilities. Our initial tests showed some marginal differences between this card and our current “King of the Hill”, the XFX 8800GT XXX. These differences dropped to in most cases only a couple of FPS when the GIGABYTE card was changed to the same clock settings.

We are most happy with the proactive stance GIGABYTE has taken with the Heads Up Display utility provided with the 8800GT TurboForce. To our knowledge they are the only manufacturer to date that has condoned as well as supported overvolting. As you saw in our overclocking results, even a 0.1V increase does make a difference, and we saw only negligible temperature increases. The GIGABYTE 8800GT TurboForce is without a doubt the best overclocking graphics card in the 8800 series we’ve had the pleasure to work with!

Speaking of temperatures, the Zalman after-market GPU cooler on the 8800GT TurboForce is a godsend and keeps temperatures in the lowest range we’ve seen on any stock 8800 series card. It averages 10 degrees Celsius cooler than any other card we’ve tested in this performance range. Even when this card was overclocked to a gargantuan level we only experienced a load temperature of 52 degrees Celsius, which is amazing!

As we alluded to earlier, the GIGABYTE 8800GT TurboForce graphics card is around 1.5 inches shorter than its reference style counterparts. Even though the depth of the Zalman cooler precludes the use of the slot immediately adjacent to the PCI-e slot in use, imagine the possibilities for a home entertainment PC. The current street prices for the GIGABYTE 8800GT TurboForce graphics card are in the $250 – $280 USD range, which we feel is a bargain for what you are getting.

We can heartily recommend this card to any consumer wanting an extremely fast graphics solution that can be used successfully in a myriad of different environments. Bjorn3D caters to the computer enthusiast market, where FPS may not be the only thing but certainly are a major driving force. For that reason alone, we would be remiss in not stating that the only thing that keeps us from giving this card an even higher score is that it’s not the fastest kid on the block, even though it’s not missing it by much. While not the fastest kid, the GIGABYTE 8800GT TurboForce with all of its extras delivers one of the best price-to-performance ratios that we’ve seen to date.

Pros:

+ Vibrant and very life-like image rendering

+ 1.7GHz Shader clock speed

+ The shortest 8800 series card to date

+ 700MHz core clock

+ 1.84GHz memory clock

+ SLI™ certified

+ Utilizes an after-market Zalman GPU cooler, making it the coolest 8800 we’ve tested.

+ Factory supported overvolting utility included

+ The best overclocking 8800 series card we’ve tested to date

+ The quietest 8800 series card we’ve tested to date

+ Designed to save on power consumption

+ A quality product

+ A perfect player in the home theater arena

Cons:

– Doesn’t support the forthcoming release of DirectX 10.1 and Shader 4.1, but then no other 8800GT currently does

– Only a three year warranty

– The depth of the Zalman cooler precludes the use of the slot immediately adjacent to the PCI-e slot in use

Final Score: 9.0 out of 10 and the Bjorn3D Seal of Approval.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
